On Substack, Elizabeth Nickson charts the career of Elizabeth Gilbert, who wrote Eat Pray Love and, more recently, a memoir of her life up to the point where she planned to murder her “once in a million year” partner:

Gilbert, you certainly know, wrote Eat Pray Love which was a massive international bestseller made into a film with Julia Roberts, which was also very successful. During the Pray portion, Gilbert retreated to an ashram in India to worship a living sub-deity called The Mother. At the time I was still tangentially aware of life in the world of moderately successful upscale arty women from the mega-cities and I’d heard of the Mother and her clinging clanging worship sessions — Siddha Yoga — going round the Pilates and yoga studios and the upscale self-help programs. The Mother’s satsangs were guaranteed to put you into an ecstatic state where you fused with the divine. And then you’d heal. From the abuse of the Patriarchy.

During the Pray section, Gilbert had a series of intense moments, which, coupled with an earlier session on the bathroom floor where God told her to wash her face and go to bed, meant a great deal to her. Her “God” gave her direction and purpose, where before she was caught in an unhappy marriage, being apparently the breadwinner in that marriage with a husband who a) didn’t work, b) wanted her to buy more and more stuff and c) wanted to have a child.

This seems a poor choice for a husband, but never mind. Gilbert was successful in the New York world of publishing and magazines and much occupied with that pursuit, a business which I now suspect is financed by the drug trade and used to launder money. In that world, where the odds of success are one in ten thousand or one in a hundred thousand, Gilbert experienced perhaps the biggest literary success of her generation. She became universally, ridiculously, excessively loved.

And embrace it she did. For the past 15 years, Gilbert has traveled the world, usually with a woman companion to keep her on the rails, dishing out, with relish, nostrums and platitudes meant to show women searching for purpose how to “find yourself” and “live your truth”. “Creativity” or “art” is now substituted for what women in the before times used to call service to their communities and families, which is now called slavery to the patriarchy.

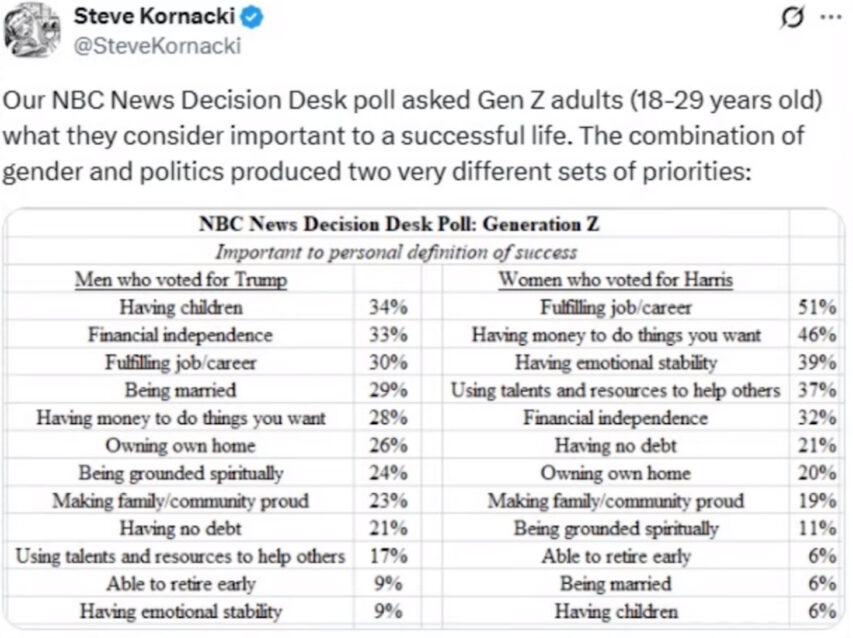

The following is the progression of “evolving” for modern left-of-center women, for whom finding meaningful work is the number one priority, children being the last, as the image below illustrates.

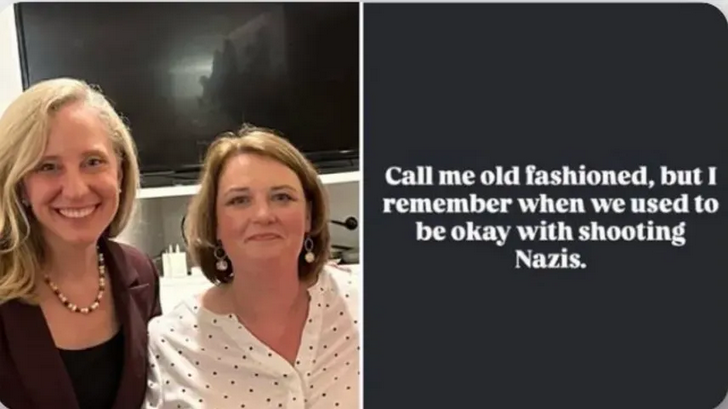

When the recognition of slim-to-no talent, or at least unsellable talent, is made and a future of grinding for multinationals is revealed, and spiritual enlightenment or Kundalini awakening seems out of reach, the desperation moves on to Democrat politics, and ends in middle-aged and elderly women on the streets, faces contorted in rage. Those women, a full 40% of whom are childless and family-less, spend their lives slogging away in some corporate or health or educational structure, becoming semi-insane. As an aside, in my years-long investigation of voter fraud, many of the operators are women just like these below: middle-aged, put together, well dressed, polite, fully criminal.

In searching for your creativity — the highest good — you have to become fully aligned with your child self, your spiritual self, and that self becomes the most cherished part of you. Your intelligence, your executive function is demoted. Your creativity, your spirituality, then becomes fused to others whom you perceive as being as weak as that child self you have elevated as spiritually superior. Women, it seems hardwired, must have people to care about. In the absence of family, it is the helpless to whom you assign your life.

Gilbert’s once-in-a-million-years love was a gay Syrian immigrant hairdresser with a history of heroin addiction and incarceration. No more victimish victim can be found.

For Gilbert’s millions of acolytes, spiritual worth, meaning, and creative power are found in allyship with the weak, with whom they fully identify. And meaning is also found in hysterical advocacy and fury on behalf of the weak. There is no thinking attached to any of this, no analysis, no study. Just intense emotionality.