The L-word is not taken to mean American “liberalism”, the distressingly anti-liberal, lawyer-driven politics of increasing governmental planning and regulation and physical coercion. It is instead the rest of the world’s “liberalism”, economist driven, “the liberal plan”, as old Adam Smith wrote in 1776, “of [social] equality, [economic] liberty and [legal] justice”, with a modest, restrained government giving real help to the poor. True modern liberalism.

A liberal “rhetoric” explains the good features of the modern world compared with earlier and later illiberal régimes — the economic success of the modern world, its arts and sciences, its kindness, its toleration, its inclusiveness, and especially its massive liberation of more and more people from violent hierarchies ancient and modern. Its enemies claim that it also explains alleged evils, such as the reduction of everything to money or the loss of community or the calamity of immigration by non-Christians. But they are mistaken.

Deirdre McCloskey, “The power of liberalism can combat oppression in all its forms”, The Economist, 2020-01-08.

May 28, 2026

QotD: Re-defining economic liberalism

May 27, 2026

QotD: “Bring your whole self to work”

My “favourite” stupid workplace idea is “bring your whole self to work”. Only someone who does not understand how teams work would suggest such a toxically dumb idea.

Organisations and institutions are formalised teams. Due to past ruthless selection — see the Neolithic Y-chromosome bottleneck — the male expression of Homo sapiens genes is much better at teams than is the female expression of the same. This does turn out to matter.

We have spent centuries, millennia, dealing with the bad traits of men in power. We better start wrestling seriously and quickly with the bad traits of women in power, or we could end up with a cascading collapse of complex systems (see the LA fires for an example). We are already seeing some serious institutional degradation.

But if we remain stuck in “if you criticise men, it’s feminism; if you criticise women, it’s misogyny”, we have a potentially terminal problem.

Lorenzo Warby, Substack Notes, 2026-02-21.

May 26, 2026

QotD: Entropy versus Revolution

… the “Left” has nothing to do with even Marx anymore, much less anything so CisHetPatWhite as “the Rights of Man and Citizen”. Your “rights” are whatever the State says they are today, as determined by a snap poll of Blue Checkmarks. The “left” is, ironically, a bit better about paying lip service to their “tradition” than is the “right” — the “left” will still give you a good sermon about Evil Corporations, for instance, even as they’re using Big Tech, Big Bank, and Big Pharma to stomp you — but it’s clear that they believe in nothing, Lebowski, nothing!

They’re simply nihilists, and their nihilism is just a way station to suicide. Their “program”, such as it is, aims at absolute stasis — they want everyone and everything to be exactly one thing, now and forever, because this is the closest to annihilation they can get without being forced to admit to themselves that what they’re really longing for is the sweet release of death. The purpose of all those bespoke sexualities, for instance, clearly isn’t “to find a likeminded person to have sex with”; rather, it’s to make sure you can never have sex with anyone at all.

Ooops, sorry, you only fulfill 459 of the 462 bullet points on the checklist.

Which is weird, I realize, because the “Left” (for rhetorical convenience) are always in frantic motion. But it’s displacement activity. As I’ve written before, you can call it “permanent revolution”, but it’s Isaac Newton’s version, not Leon Trotsky’s — forever spinning in place, going nowhere. So long as they never stop spinning, they’ll never hear the vast emptiness of their own lives. They’ll never have to look their death wish straight in the eye.

The “Right” (again for rhetorical convenience) seems to be locked in a never-ending battle against entropy. That’s what it seems to boil down to. Things fall apart and pass away, and in their breakdown we are robbed of our fundamental dignity. In the end, that’s the only thing worth “conserving” — your fundamental dignity; the only “right” that matters is the right not to be a clown.

One always loses the battle against entropy eventually, but the dignity is in the fight. For the “left”, who have no dignity, the fight is just a distraction, sound and fury to distract from the nothingness that always threatens to overwhelm them … and that they secretly long for.

Severian, “Entropy vs. Revolution”, Founding Questions, 2022-06-21.

May 25, 2026

QotD: Modern movie casting

In the English speaking world, it has become common to “blackwash” movies and television shows. This is the process of removing white characters and replacing them with non-white characters. The stated claim is that popular entertainment needs to reflect the changing nature of the audience. Of course, the reason the audience is changing is that the same people blackwashing films and television shows are ethnically cleansing white societies with mass immigration.

For a long time now, Hollywood has been taking great care to make the good characters black and the bad ones white. For a short while, the bad guys could be Arab terrorists, but now bad guys are white again. If they need to be foreign baddies, then they are neo-Nazis from eastern Europe or Russian gangsters. Of course, the smartest characters are black or female. If we’re lucky, the brainiac is a black lesbian. Every computer hacker is now non-white or female.

On occasion the blackwashing gets ridiculous. Some figure from white history is played by a black actor. A black guy in a show about medieval Europe could be amusing, but that’s not how it is done. Instead, we get black cowboys saving a white town or a black playing King Lear. It will not be long before we have historical dramas in which well-known figures from white history are played by black actors as black people. Imagine Ben Franklin played by Morgan Freeman.

The Z Man, “Blackwashing”, The Z Blog, 2020-10-02.

May 24, 2026

QotD: Historians, past and present

The average ancient historian led troops, tutored a prince, governed a province, advised a king, made a fortune, fell from favor, was exiled, and buried 7 of their 10 children. The average modern historian passed a few tests then wrote a book on their laptop next to their cat. And worse, they all passed the same tests at the same institutions. And they all wrote the same statements on their applications to get into those institutions. And while attending those institutions, they all adopted the same opinions. Anyone who did otherwise was filtered out before they could become a professor with a publishing deal. Everything is like this now.

Meanwhile Xenophon was an Athenian student of Socrates who joined a Greek mercenary group that marched 1000 miles into Persia to overthrow the King of Kings on behalf of the King’s brother. When the King’s brother died and the group’s commanders were all killed by Persian treachery, he led the troops 1000 miles home himself while being constantly harried by hostile armies. He then tried to establish a colony on the Black Sea, survived a mutiny, raided the Thracians, fought for the Spartans, was exiled by Athens, and settled down to manage an estate and write it all up.

Contrast Xenophon with Mary Beard, who studied at Cambridge and now teaches at Cambridge. She holds the same opinions as everyone else at Cambridge. She’s remarked before that, “I actually can’t understand what it would be to be a woman without being a feminist”. This seems like a peculiar failing for an ancient historian. After 9/11, she wrote an article saying that many people thought “the United States had it coming”, and that “world bullies, even if their heart is in the right place, will in the end pay the price”. That caused some controversy on the world stage, but earned her a promotion at Cambridge. I don’t know if she’s ever talked publicly about religion or democracy or climate change or immigration, but I could tell you exactly what she thinks about these things anyway. So why would you bother reading what she thinks about Rome? The answers are just as predictable.

Roman Helmet Guy, “New Books Aren’t Worth Reading”, Atlas Press, 2026-01-13.

May 23, 2026

QotD: Egypt within the Roman Empire

When it comes to Roman governance in Egypt, perhaps the best summary of what we know about how typical it was would be to say that Roman rule in Egypt was somewhat unusual, but rather less unusual than we used to think it was, and it became more typical over time (so the level of unusualness is greatest under Augustus and then declines as a factor of time). Ironically, it has been in no small part coming to understand the wealth of the papyrus evidence that has led to this shift, revealing that our literary sources sometimes overstated the degree to which Egypt was unusual.

A lot of that comes from how Tacitus represents the structure of Roman rule in Egypt: he describes Augustus as having “kept in the [imperial] house” (retinere domi) the governance of Egypt, assigning it to an equestrian prefect. Egypt was a relatively late addition to Rome’s growing Empire; the Ptolemaic dynasty had ruled it since the death of Alexander the Great in 323. From the 160s that Ptolemaic kingdom had become effectively a client of Rome, its independence maintained by the threat of Roman arms (demonstrated vividly in 168 when Rome turned back a Seleucid invasion of Egypt with nothing more than a consultum of the Senate), but had remained independent until Cleopatra’s disastrous decision to back Marcus Antonius (Mark Antony) in the last phase of Rome’s civil war. After their defeat, Octavian (soon to be Augustus) had, in 30 BC after the suicide of Cleopatra, annexed the kingdom, creating the province of Roman Egypt.

Tacitus’ description of Augustus keeping the rule of Egypt “in the house” led early scholars to assume that Egypt was taken essentially as the private property of the emperors. This is less crazy than it initially sounds; later emperors administered massive estates through a parallel state treasury called the fiscus (distinct from the main treasury of the Roman state, the aerarium Saturni; the fiscus was the private accounts and property of the emperor) administered in some cases by equestrian officials, so the idea of running an entire province effectively out of the fiscus, with the whole of Egypt effectively the private property of the emperor administered by an equestrian official wouldn’t have seemed impossible and it certainly seems to be what Tacitus is describing.

But as our evidence for the activity of these prefects has improved, what we see are officials who act quite a lot like other provincial governors, despite their non-senatorial origins. Praefecti Aegypti typically served around three years (fairly typical), were generally not from the province they oversaw (also typical), and wouldn’t be reassigned to a post back in that province (also typical). Unlike with the earlier Ptolemaic government, there was no royal court in Egypt, the prefect’s entourage more nearly resembling that of a Roman governor, nor was the emperor personally present. Residents of Egypt who wished to petition the emperor had to do it through the same channels as any other resident of the Roman Empire. The military enforcement forces in the province, too, were typically Roman, drawn (as was normal) from provinces other than where they served. Consequently, as Dominic Rathbone (op. cit.) notes, local elites looking to operate with this new form of government found that they had to adjust themselves to a system of rule, quintessentially Roman, rather than the more personalistic Ptolemaic regime where favor might be curried with important local figures or the royal court itself.

That said, while we’ve increasingly found that the Praefectus Aegypti was more of a normal governor than we thought, vision into the lower levels of the Roman administration in Egypt reveals a complex and in some cases peculiar system. In most of the Roman Empire, Roman governors oversaw largely self-governing communities, run by local elites, which handled most local affairs. Those communities generally delegated governing functions to elected or appointed magistrates who were amateur part-timers drawn from the elite (the curiales, we’ve mentioned these fellows before).

In Egypt, by contrast, while the Romans disassembled the royal Ptolemaic court, they initially seem to have left much of its administrative apparatus of salaried administrators in place. The division of Egypt into administrative districts – called nomes – was kept and the seat of government in the province was firmly entrenched in Alexandria (whereas at least in the first two centuries, most Roman provinces had no clearly established “capital”). Each of the nomes was governed by a strategos (while the word means “general” these were purely civilian officials), typically drawn from the Alexandrian upper-class (rather than being truly local elites), assisted by a salaried basilikos grammateus, “royal scribe”. Villages also generally had a komogrammateus, village scribe, who reported to the strategos; these fellows also seem to have initially been salaried officials. Some of these positions gradually became truly liturgic in nature, mirroring more closely systems of local governance in much of the rest of the Roman world, but perhaps only in the late second century.

Similarly, it was often assumed early on that land ownership and tenure would look very different with the emperor maintaining a lot of direct control and nearly all of the land in Egypt being effectively public land. That perspective was potentially reinforced by the evidence out of the Arsinoite nome (again, modern el-Fayyum) because most of the land there under the Ptolemies belonged to military settlers and thus had special obligations placed on it and was thus not truly private land. But what we see under the Romans is that first this military settler (cleruchic or katoikic; the distinctions here are a post for another day) land is fully privatized and taxed like it would be anywhere else. Meanwhile, the evidence from the other nomes on the Nile itself suggests that private land was more common there even under the Ptolemies. That said, the expansion of private land holdings seems to have been a process taking place mostly under Roman rule, which in turn meant that in many cases land tenure might look quite different in Egypt (where much land was either public or held by temples) than in the rest of the empire where most land was in private hands (although public and temple lands were also common), though it tended to look more and more like the rest of the empire over time, with the process supposed to be substantially complete by the end of the second century. Scholars broadly seem to still be very much divided on the degree to which late Ptolemaic and early Roman Egyptian landholding was exceptional, but it certainly had its substantial quirks.

Meanwhile the Romans did another odd thing in that they didn’t change: the currency system. While the Roman Empire minted its currency in a series of regional mints (not centrally), the Romans almost always brought new areas under their control into the existing Roman currency system (based principally around the gold aureus, the silver denarius and the copper-alloy sestertius). That was both a tool of Roman imperialism, a way to make physical Rome’s notional dominion over conquered lands, but it also served (probably unintentionally) to lower transaction costs and encourage economic interaction between provinces. But Egypt was not brought into the Roman currency system, instead maintaining the Ptolemaic currency system based on the silver tetradrachma (Egypt was already a very monetized economy under the Ptolemies). That barrier between the economy in Egypt and outside of it can make it tricky to know how representative prices within Roman Egypt were for the rest of the empire. Egypt is only brought into the broader Roman currency system with the currency “reforms” of Diocletian (r. 284-305).

At the same time, Egypt was hardly “cut off” from the broader Roman economy. We have good evidence of quite a lot of trade out of Egypt, particularly in agricultural staples. But here again, Egypt is strange: Egyptian grain was the foundation for the imperial era annona civilis, the distribution of free grain to select citizens in the city of Rome itself. That meant a massive, continuous state-organized transfer of grain, specifically wheat grain, from Egypt to Rome. Some of that grain was taxed in kind, but much of it seems to have been purchased in Egypt; in either case transport was essentially subcontracted by the state. Egypt was hardly the only source of grain for the annona (the province of Africa, modern Tunisia, was another major source), but few provinces likely saw the scale of state-organized goods transfer that Egypt did. And it’s striking that attested Egyptian agriculture is quite heavily dominated by wheat farming, rather more than we might normally expect, which speaks both to the high yields the Nile could offer and to Egypt’s role as the breadbasket of the Roman Empire.

Bret Devereaux, “Collections: Why Roman Egypt Was Such a Strange Province”, A Collection of Unmitigated Pedantry, 2022-12-02.

May 22, 2026

QotD: The cargo cults of New Guinea

When I was twelve years old, my grandfather gave me a copy of Jared Diamond’s Guns, Germs, and Steel. This single fact probably goes farther than any other in explaining How I Got This Way: the book blew my mind and kicked off a lifelong fascination with big-picture, multidisciplinary investigations of how the world, well, Got This Way. (Or, if you’re a hereditarian: roughly 25% of my genes come from a guy who thought this was a good book to buy for a twelve-year-old girl.)

You may remember that Guns, Germs, and Steel is framed as a reply to a man named Yali, a “remarkable local politician” whom Diamond encountered while walking on the beach in New Guinea in July of 1972. (Back before Diamond’s second career as a pop-science public intellectual, he was an ornithologist focusing on the birds of northern Melanesia.)1 They chatted for a while about the prospects for New Guinean independence, and local birds, and then Yali asked a question that Diamond spends a couple of paragraphs boiling down to something like, “Why did human development proceed at such different rates on different continents?” (Which is of course what Guns, Germs, and Steel tries to answer.) But that’s not actually the way Yali put it, and his real question — indeed, his whole story, which is fascinating in its own right — suggests a whole ’nother set of answers.

Yali should be better-known.2 He may have been from a backwards backwater, but he’s one of the true Player Characters of history. If we lived in a better world, he would be the subject of a prestige cable drama3 — or maybe a Robert Eggers film, because the values and assumptions of his society are incredibly foreign to a Western audience. And so to really understand and appreciate Yali’s story (and the question he asked an American ornithologist on the beach one day) you need some background about the tribal cultures of the New Guinea coast and their reaction to contact with Europeans. Which is to say, you need to understand cargo cults! Because what Yali actually asked (per Diamond’s recollection twenty-odd years later) was: “Why is it that you white people developed so much cargo and brought it to New Guinea, but we black people had little cargo of our own?”

“Cargo” is the catchall word for Western material culture in Pidgin English,4 the lingua franca of New Guinea’s many language isolates, and New Guineans were understandably obsessed: before European contact, they were living in the literal Stone Age. It would be an exaggeration to say that they hadn’t made any technological progress since their ancestors settled the island 50,000 years earlier, since they domesticated several local plants (taro, yams, and the cooking banana) and got pigs plus a little admixture from some passing Austronesians about 1500 BC, but they were solidly Neolithic and had been since time immemorial. So of course as soon as they encountered cargo — especially steel tools, tinned meat and dried rice, and cotton cloth — they wanted it desperately. And they almost universally believed they could get it by ritual activity.

The prescribed rituals varied. One set, recorded in secret by an American Lutheran missionary in the late 1930s, involved the locals setting up tables in front of the local cemetery and decorating them with flowers, food, and tobacco. Then they danced wildly until dawn in twitching, trembling fits so uncontrolled that some devotees continued to sway and shake for days or weeks afterward. Those lucky people were believed to have a special connection to the ancestors that would let them receive dream messages about the cargo shipments their tables and dancing would surely bring. A different cult was led by a man who had a long piece of iron he claimed brought him messages from the future. He told his followers that if they set out all their food in cemeteries as offerings to their ancestors, handed all their Western goods and money to him for safekeeping, and renamed Tuesday to Sunday, they could expect a god to send them airplanes full of cargo flown by the spirits of the dead disguised as Japanese servicemen. These spirits would bring them rifles, tanks, and other materiel and help them drive out the white people, and then the god would change the natives’ skin from black to white. Oh, and also there would be storms and earthquakes of unimaginable violence.

Forget everything you think you know about cargo cults. (Especially forget those pictures you may have seen of “decoy” airplanes or satellite dishes made out of straw and wood: one popular airplane photo is from a Japanese straw festival, another is a Soviet wind tunnel model, and the radio telescope is just one advertisement from a British ice cream company.)5 Nowadays we use “cargo cult” as a lazy shorthand for “copying what someone successful seems to be doing without really knowing why and hoping you get the same result,” but that’s not what was happening at all. If the New Guinea natives built airstrips, it wasn’t out of a belief that airstrips attract cargo planes like planting milkweed brings Monarch butterflies — that would seem silly but basically understandable from our frame of reference. No, it’s much weirder than that. They built airstrips for exactly the same reason anyone else does: because they thought cargo planes were coming. They just thought the planes were coming because of the dancing.

This is a story about epistemology. And also about Jesus sending you a case of Spam in the mail.

Jane Psmith, “REVIEW: Road Belong Cargo, by Peter Lawrence”, Mr. and Mrs. Psmith’s Bookshelf, 2025-04-21.

1. Okay, fine, it’s actually his third career — he was a specialist in cell membrane biophysics before he started publishing on birds.

2. Kudos to commenter Gary Mar, who did his part in this project by alerting me to this book in the first place.

3. Just in case anyone reading this has contacts in showbiz, my other idea for a cable drama is the story of Charles V, Philip II, and William of Orange. The emperor of half the known world, the son and heir raised far away, the beloved ward who betrayed him… It would win twelve Emmys.

4. Which is not actually a pidgin but a creole! Nowadays it’s more often called Tok Pisin (etymologically, obviously, from “talk pidgin”). Most Tok Pisin vocabulary comes from English, but the grammar and pronunciation are very different and the orthography makes it hard to read. Still, if you try saying it out loud you can sometimes get the gist: “Wetman noken haitim samting moa” pretty easily becomes “white man no can hide’em something more”, and actually means something like “the white man will not keep anything secret from us any longer”.

5. Credit for tracking down the sources of those images goes to Ken Shirriff in this blog post, which Gwern kindly sent me when I started talking about this book review.

May 21, 2026

QotD: “Theory” in film interpretation

[David G. Hughes] You often situate your ideas in reference to things like geography, the animal kingdom, sexuality, history, and tidbits of quirky detail — earthly, tangible things. It’s different from the dominant theoretical approach in film interpretation, and there’s humour. Would you describe your work as atheoretical, or even anti-theoretical?

[Camille Paglia] What has been called “theory” since the arrival of deconstruction in elite U.S. universities in the 1970s is in my view one of the most pointless and pretentious movements in modern cultural history. The catastrophic results should be obvious by now: the humanities are in ruin and have lost public respect and even internal support in academe, where budget reduction has come to the fore. I would refer those seeking greater specifics to my long attack on poststructuralism, Junk Bonds and Corporate Raiders: Academe in the Hour of the Wolf, published by Arion in 1991. Seven years ago, I did a follow-up assessment of current “theory” when the Chronicle of Higher Education asked me to review three new academic books by women about the bondage and domination trend. My unhappy response was “Scholars in Bondage”, which laments the damage done to promising young professors by a tyrannical academic establishment still chained to the bleached-out corpse of “theory”.

My approach to art is grounded in the sensory. Art is not philosophy. Art by definition refracts meaning through some medium of the material world. Hence my interpretation of art is grounded in the five senses. Perhaps the only theorist who fully grasped this issue was Gaston Bachelard in his 1957 book, The Poetics of Space, animated by a phenomenology that partly aligns with my own practice. It is no coincidence that I have spent most of my teaching career at art schools, where the body remains front and center in most art forms. Digital genres are certainly spreading and flourishing, but dance, music, and theater remain grounded in physicality — which is partly why art schools are finding it so difficult to adapt to the harsh, distancing realities of the virus crisis.

May 20, 2026

QotD: “Gilded Age” Robber Barons didn’t have access to what even working-class Americans have now

Where Marx really went wrong was — and I know this sounds flip, but I’m as serious as cancer — being born in 1818. He lived his entire miserable life in a world where “labor” really was a physical thing. The richest robber baron of the Gilded Age lived a far different life, materially, than the poorest serf-in-all-but-name working in his factories …

… but the robber baron knew he needed the serfs. Their relationship was purely dialectical. Without his factory hands, no robber baron. And in a strange but very real way, the higher up the food chain your Gilded Age robber baron went, the more he was dependent on his serfs for his lifestyle. J.P. Morgan is usually credited as being the first guy to become a Robber Baron purely through finance. Carnegie, Rockefeller, all those guys had most of their wealth in financial instruments, of course, but those financial instruments rested on control of a physical product — Carnegie Steel, Standard Oil.

I’m probably being unfair to Jay Cooke, the Michael Milken of his day, but since more people have heard of J.P. Morgan let’s roll with it. Even though Morgan’s wealth was entirely on paper — he was nothing but a securities trader — his lifestyle utterly depended on a battalion of servants. In a very real way, you yourself, right now, live much better than J.P. Morgan did in his heyday. And not just because you have aspirin, antibiotics, and air conditioning, three taken-for-granted things ol’ J.P. would’ve given half his kingdom for. But because you have more time. If you’re hungry, you can open the fridge or the microwave and have all the food you need in a matter of minutes.

J.P. couldn’t. J.P. had to deploy an army of servants every time he wanted a snack, and those servants were constrained by things like “availability of ice” and “when is the fishmonger at his stall”. You’re hungry at 2am, you jump in your car and get some Taco Bell. It takes ten minutes. J.P.’s hungry at 2am and it’s tough titty, J.P., your ass is going hungry. Because even though you’re the richest man in the world and have legions of manservants at your beck and call, Taco Bell just isn’t there. Even if someone had had the brilliant idea to create a Gilded Age Taco Bell, it still would’ve taken hours:

Wake up the manservant. Wake up the groom and stableboy. Hell, wake up the horse, then saddle the horse, ride to the drive thru window … which in this case means “the house of the guy who runs Gilded Age Taco Bell”. At which point he has to fire up the oven, start pounding the tortillas, send his own legion of valets and stableboys and whatnot out to get the refried beans …

And that’s the other thing, J.P. — you’d best not pull that shit too often, because those people know where you live. Not only do they know where you live, they live with you. Literally under the same roof. You want to sleep easy? You’d best not beat the servants too often, buddy.

There’s only so much “class consciousness” one can develop in that world. Oh yeah, J.P. thought of himself as one of the Masters of the Universe, there’s no denying that. But J.P. lived in what was still a brutally physical world, in a way we PoMo people really can’t grasp. If you can’t imagine what it would take to get some Gilded Age Taco Bell, maybe geography will do the trick. Ever seen Gangs of New York? Even if you haven’t, you’ve probably heard the name “Five Points”. The worst slum in America in the 19th century, and 19th century American slums were world class …

That was right down the street from Wall Street. Literally. I am not in any way joking, and if I’m exaggerating a little for effect when I say “J.P. could’ve hit Five Points with a five iron from his swanky digs on Central Park West”, I promise you I’m not exaggerating much. You can look it up for yourself. The main reason the Union rushed troops straight from the Gettysburg battlefield, and no-shit shelled parts of the city with gunboats, during the Draft Riots was because Five Points (et al) was right fucking there, and they might’ve gotten it into their heads to lynch a few Masters of the Universe. Rich man’s war, poor man’s fight, right? Let’s see how you like it, you bankster bastards …

The PoMo “information economy” removes all that. The other day I joked about colleges like Bennington and Goucher. I cracked some jokes, yeah, but I wasn’t really joking. Those places aren’t for us. Wall Street is still a physical location, but it might as well be on the dark side of the moon for all any of us have access to it. J.P. couldn’t beat the servants too hard, or too often. The modern equivalent of J.P. isn’t even aware that he has servants. He just clicks on a website, and stuff appears at his door. Like magic. Hell, it IS magic for all he knows, and he surely doesn’t care, because all that shit is his by right. He went to Bennington, after all. He has achieved full class consciousness.

All of which suggests, of course, that while Marx was wrong about the end state — the State will not, in fact, wither away — he might well have been right about the solution to the “contradictions of capitalism”, if you follow me. And if that makes me some kind of godless pinko Commie subversive, well … I’ve been called worse by better.

Anybody got the lyrics to La Marseillaise in English?

Severian, “On Losing the Cold War”, Founding Questions, 2022-07-02.

Update, 21 May: Welcome, Instapundit readers! Have a look around at some of my other posts you may find of interest. I send out a daily summary of posts here through my Substack – https://substack.com/@nicholasrusson that you can subscribe to if you’d like to be informed of new posts in the future.

May 19, 2026

QotD: Software developers as wizards

Is it weird that AI coding assistance is not giving me identity fracture?

A lot of software developers are feeling disoriented and threatened these days. Programming by hand is clearly going the way of the buggy whip and the hand-cranked auger. Which is how we’re finding out that a lot of people have their identities bound up in being good at hand-coding and how it feels to do that.

That’s not me. It’s not me at all. Rather to my surprise, I don’t miss coding by hand, not any more than I missed writing assembler when compilers ate the world and made that unnecessary. (That was a couple years back, around 1983, for you youngsters.)

Maybe the fact that I’m not feeling any of this disorientation disqualifies me from having anything to say to people who are. On the other hand … if you can learn to emulate my mental stance and be completely unbothered, maybe that would be a good thing?

So. If you’re a programmer, and you’re feeling disoriented, try this on for size:

I like being a wizard. I like being able to speak spells, to weave complex patterns of logic that make things happen in the world. Writing code is a way to manifest my will.

Yes, I’ve piled up a lot of arcane knowledge over the 50 years I’ve been doing this. But languages of invocation, they come and they go. Been a long time since I’ve had any use for being able to program in 8086 assembler, and that’s okay. I have better spells now, and these days some rather powerful familiars.

What I’m inviting you to do is think of yourself as a wizard. Not as a person who writes code, but as a person who is good at assuming the kind of mental states required to bend reality with the application of spells.

And if that’s who you are, does it matter if the spells are painstakingly scribed in runes of power, versus being spoken to an obedient machine spirit?

It’s all one; it’s all the manifestation of will. Arcane languages come and go, machine spirits appear and then diminish to be replaced by more powerful ones, but you? You are the magic-wielder. Without you, none of it happens.

Same as it ever was. Same as it ever was. And so mote it be.

ESR, the social media site formerly known as Twitter, 2026-02-17.

May 18, 2026

QotD: Medical ads

I don’t understand commercials for medicine anymore. I mean, I understand what they’re trying to say when they advertise a medication and list its possible side effects. I just don’t understand why they bother anymore. Nobody takes these advertisements seriously. The other day, I saw a spot for something called Restless Legs Syndrome. I was stunned when it ended without turning into a “Good news; I just saved 15 percent on my car insurance by switching to Geico” commercial. That’s how bad it’s gotten. It doesn’t even matter how legitimate the affliction is. It could be cancer at this point. It could be a pill to stop spontaneous human combustion. Wouldn’t matter. I see these commercials and instinctively shrug them off. I suffer from Grain of Salt Disorder.

Jonathan David Morris, “Thoughts On Health”, Libertarian Enterprise, 2005-09-18.

May 17, 2026

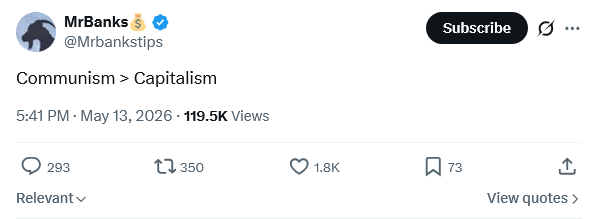

“Communism > Capitalism”

Once again, thanks to the auto-translation feature on the social media site formerly known as Twitter, Brivael Le Pogam responds to a fan of the most evil economic system yet devised by man:

“Communism > Capitalism”

Brother. You’re tweeting this from an iPhone. Designed in California. Made in a Chinese factory that only exists because Deng Xiaoping realized in 1978 that Maoism mostly produced corpses and decided to do capitalism in disguise.

The greatest reduction in poverty in human history (China 1980-2020) happened at the exact moment when China stopped doing communism. The greatest famine in human history (China 1958-62, 45M dead) happened at the exact moment when they started.

Same country. Same people. Same territory. Two systems. One built smartphones, the other built mass graves. Pick your side.

Communism’s trophy board:

USSR: collapsed

China: pivoted to capitalism, prospered

Vietnam: pivoted to capitalism, prospered

Cuba: still rationing soap in 2026

North Korea: eating tree bark

Venezuela: sitting on the world’s largest oil reserves, imports gasoline

Cambodia: killed 25% of its own population

Communism isn’t an ideology. It’s a hiring program for people incapable of finding a real job, dressed up as economic theory. When you can’t build, you redistribute. When redistribution fails, you hunt for saboteurs. When you run out of saboteurs, you become someone else’s saboteur.

100 million dead. Zero examples that work. The most expensive LARP in human history.

But please, keep tweeting “Communism > Capitalism” from your capitalist phone, on your capitalist app, funded by capitalist ads. We need the comedy.

QotD: Battlefield morale and cohesion in movies/games versus real history

I’ve focused on game morale systems here, but of course this blends over into film as well, where the “mooks” often charge the heroes seemingly utterly heedless of their losses – frequently despite the fact that the last identical group of mooks to do so just got taken apart before their very eyes. And invariably they do this until they are so beaten that they switch to the other binary state, simply running away.

Actual armies have far more than two states of morale and behaved in far more dynamic, unpredictable and interesting ways!

The first problem with this “binary model” of morale is that it assumes just a single factor (“leadership” or “morale”) but in practice we ought to be thinking about at least two different ingredients here: morale and cohesion.

Morale is the commitment the combatants have to their leadership and their cause. To simplify a bit, we might say that soldiers with good morale believe three things: that their cause is a worthy one, that they are on the road to success and that their leaders have a good (enough) plan to achieve final victory. Poor morale can result from a breakdown in any of those three elements: troops might for instance believe both in their goal and its eventual possibility but not in their leaders to produce it (this seems to have been the case, for instance, in the French Mutiny of 1917). On the other hand, regardless of the charisma of leaders, few people come to a war intending to die in it; if the cause appears impossible, morale will sink regardless. And armies that do not believe in the cause at all are extremely difficult to motivate by other means.

On the other hand cohesion is the force that holds a specific unit together through the power of the bonds holding the individual combatants to each other and/or to their (generally junior or non-commissioned) officers. There are a lot of ways to build that cohesion: people are generally unwilling to abandon neighbors, close friends and relatives, for one. They are also reluctant to expose themselves to shame at home for having done so; shame is one of the few things people fear as much, if not more than, death. For armies that can’t rely on that sort of organic cohesion, it can be built by reconstructing the soldier’s unit as his primary social group. Drill can do this: it creates an experience of shared suffering and achievement which bonds the soldiers together creating strong “artificial” cohesion.

These two ingredients have different roots, but they also function differently. The formulation that has always stuck with me is one from James McPherson’s For Cause and Comrades: Why Men Fought in the Civil War (1998): morale (McPherson discusses it under the heading of “the Cause”) will get men into uniform, it will sustain them on long marches and cold nights and it will get them to the battle, but it will not get them through the battle. Instead, cohesion (the “comrades” of the title) gets men through the terror of actual combat, when fear has driven “the cause” far from mind. But of course cohesion isn’t enough on its own either, since it provides no reason to advance or attack or really to do anything at all except stick together.

Adding further complication to this, morale and cohesion are not, as they often exist in games, inherent properties of a unit, but rather emergent properties of the interactions of a whole bunch of individuals. In a strategy game, units exist primarily as extensions of the player’s will; in film units typically exist as extensions of their commander’s or the main character’s will (note how common it is that right as the hero begins winning his duel with the villain, so too his army begins winning the battle). But of course actual armies are composed of lots of humans, each with their own individual will and agency.

Those humans are continually making calculations about risks, goals and survival. It’s not hard here to see why, by the by, morale won’t carry troops through high risk conditions: if your only goal is to survive to experience the end-state of the war, then it is always in your interest to let someone else do the dying; it doesn’t serve your end to stay in a high risk position. By contrast, if you are held there by the fear of shame if your close comrades see you run, that still applies. Thus these calculations get progressively more “primal” as the sense of danger rises (fear makes a mess of those higher brain functions), but they do not stop.

Bret Devereaux, “Collections: Total Generalship: Commanding Pre-Modern Armies, Part IIIC: Morale and Cohesion”, A Collection of Unmitigated Pedantry, 2022-07-01.

May 16, 2026

May 15, 2026

QotD: Rediscovering Cræft

Of course this isn’t just a book about hedging, that would be silly. There’s also haymaking, shepherding, walling, beekeeping, weaving, tanning, basketry, thatching, plowing, and the making of everything from ponds to quicklime, because Alex Langlands is obsessed with preserving (and if necessary recovering) the skills of the rural past. He wants you to understand what’s been lost to industrialization, and how our contact area with the world has shrunk, and why doing things with your body is part of being human, and … oh wait I’m sorry I nodded off, because I’ve written this all like twelve times already.

So why am I telling you about a book on how to do things by hand that you can do far more quickly and efficiently with a machine?

Well.

Langlands frames his book around the concept of cræft, which (as you can probably guess from that æsc) is the Old English origin of our modern “craft”. The ancestral word is richer and more complicated than the modern one, though, pointing to far more than handmade tchotchkes and beer with too much hops. The Dictionary of Old English explains:

“Skill” may be the single most useful translation for cræft, but the senses of the word reach out to “strength”, “resources”, “virtue”, and other meanings in such a way that it is often not possible to assign an occurrence to one sense in [modern English] without arbitrariness and loss of semantic richness.

Like the modern “craft”, it does convey a sense of ability, especially when it comes to one’s livelihood: the students in Ælfric’s Colloquy use cræft as well as weorc when discussing what they do all day. But it can also mean might or power: when the Old English Orosius tells us that the strength of the Medes failed them in battle, for example, it’s Meða cræft that gefeoll,[1] and when Judah Maccabee’s foes join the fray, they begin to fight mid cræfte. Of course, there are semantic connections among these varied meanings: the ideas of physical strength and physical skill blend into one another at the edges, and a word for a thing you’re good at doing with your hands can also be used for a thing you’re good at doing with your mind. (After all, we still refer to writing as a “craft”.) And ideally you’re fairly talented at whatever set of things provide your livelihood! So we can say that Old English cræft broadly means something like “a person’s ability to bring his will to bear on the world, and his skill in doing so”.

There’s one more meaning, though, and it appears more or less exclusively in the writings of Alfred the Great: cræft as spiritual or mental excellence.[2] Anglo-Saxon scholars had mostly used cræft as a way of rendering Latin ars, but when King Alfred translated Boethius into Old English he used cræft for Latin virtus, virtue as in moral excellence.[3] A contemporary reader might be tempted to see this as merely an extension of the “mental skill” sense of the word (a virtuous person is one who is good at being good), but that would be misleading; the general meaning of cræft leaves the word freighted with powerful and inescapably physical implications. (Remember, too, that before the Reformation the Christian image of spiritual excellence universally emphasized asceticism, which necessarily involves the body a great deal.) Cræft as virtue is not an internal moral condition, it’s an internal activity, a kind of doing or making of the soul.

Or, as Langlands glosses his title, cræft is “a hand-eye-head-heart-body coordination that furnishes us with a meaningful understanding of the materiality of our world”.

Langlands is now a professor of archaeology at Swansea University, but he got his professional start as a circuit digger, the kind of “hired trowel” real estate developers pay to quickly catalog all the ancient remains they’re about to turn into the foundation of a new Tesco. It was not a fulfilling job — “crude and expedient” is the line he uses for his commercial excavations — and he was beginning to grow disillusioned with archaeology as a field. So naturally he did what any sensible person would do if he didn’t like his job: he applied to be on a TV show. This was in 2003, and BBC Two was advertising for people to spend a year in 1620, living on and running a historical farm using reconstructed period techniques and equipment. Langlands got the gig (along with Ruth Goodman and another archaeologist whose book I haven’t read), and had a wild year in the Stuart era and then a few more in the Victorian and Edwardian periods.

The shift from examining the archaeological record to experiencing how it was made was an eye-opener, and the success of that first program took him by surprise: “I’d often wondered to myself who on earth would want to watch a bunch of cranky, oddball re-enactors and archaeologists bimbling around in costume, pretending to be in the past”, he writes. “But I didn’t care too much because I was spending nearly every single hour of every day immersed in historical farming. I was tending, ploughing, scything, chopping, sweeping, hedging, sowing, walling, slicing, chiselling, digging, sharpening, thatching, shoveling; the list was almost endless.” And the longer he spent doing all these things (he was on three more shows), the more he realized that the skills, and the knowledge they required, were slipping away.

True cræft, in Langlands’s version, is a combination of know-how and make-do. It’s when you live on the Outer Hebrides and don’t have any trees, so you use whatever driftwood washes up as the ridgebeam for your roof. No timber for the rafters? No problem — a sufficiently strong rope, drawn tightly enough over the ridgeline and secured on both sides, makes something like a giant net on which you can lay your thatch. The straw left in the fields after harvest will do nicely for making both rope and thatch, but if (say) it’s the early twentieth century and you’ve abandoned cereal crops because cheap North American grain knocked the bottom out of the market, then you can make rope from heather and thatch with bunches of bracken. (On the Danish coast, they use seaweed.)

Or it’s when you’re an early Anglo-Saxon who wants to boil some water. A few generations ago, some Romano-Briton on the same spot would simply have bought a beautifully thrown pot from any one of a dozen proto-industrial centers across the Empire, but these days that production has slowed or stopped and the trade networks that would’ve brought them to you are kaput anyway. All you’ve got is some lousy local clay, too weathered to be easily worked. You don’t even have the fuel to fire it hot. So you add organic tempers like grass or chaff (or even dung) to make the clay more plastic, you shape it by hand without a wheel, and when you fire your pot the chaff burns away and leaves tiny voids in the ceramic. Your pot is soft, it’s brittle, it’s kind of lumpy, and fifteen hundred years from now Bryan Ward-Perkins is going to point to it as evidence that civilization collapsed when Rome fell — but it’s still a pot, and it still holds water. You’ve made ingenious use of the world around you to solve your problem. You are, in a word, cræfty.

So when Langlands says cræft, he means the way people behave under conditions of scarcity and resource constraint. And when you’re in that kind of situation, of course you have to be intimately familiar with all your materials — you have to squeeze every last drop of performance out of them! And while Langlands is interested in preindustrial techniques, this isn’t just a matter for drystone wallers and skep-making beekeepers; you can also be cræfty with machines or computers. Cræft is the Havana mechanics who keep 1950s cars running on an income of $40/month, or the engineers who fit all the computer code for the Apollo Guidance Computer into 80 kilobytes. It’s the defining feature of the Real Programmer who “tuck[ed] a pattern matching program into a few hundred bytes of unused memory in a Voyager spacecraft that searched for, located, and photographed a new moon of Jupiter”. We rightly admire these cræfty solutions for their elegance and their makers’ skills, but aside from a few weird hobbyists we don’t imitate them. You don’t spend days hauling rocks and building a wall to keep your sheep in when you have wire fencing. You don’t learn the skies so you can time your haymaking for clement weather when you can just wrap your machine-mown grass in plastic and make silage instead. And you don’t work in unreal mode when you have a 64-bit processor. Technological advances have freed up our time precisely because they’ve freed us from the need for clever, thoughtful, material-aware solutions to our problems. No one is cræfty in the midst of abundance, because they don’t have to be.

Your reaction to that last paragraph reveals where you fall in the Wizard/Prophet divide: are you pumping your fist for humanity, or are you a little sad that a kind of mastery has been lost? Is our ability to simply throw more resources at the problem and go on with our day a blessed liberation from the bonds of brute necessity, or is it a tragic separation of our thinking, making, doing selves from our world? Are our practical limitations something to be defeated or innovated around, or are they something to embrace because they are, in some sense, good for us?

Langlands is, unsurprisingly, well over on the Prophet side. He warns that “while some machines are clever, the net result of our using them is that we become lazy, stupid, desensitized, and disengaged” — it’s not that a thing made by hand is better as an object than its mass-produced counterpart (although in some cases it is, and a stone wall does last longer than a wire fence), it’s that the making changes the maker. And while he likes to warn that climate change or Peak Oil or the fragility of international supply chains make our uncræftiness a serious survival risk (think of those poor imported-pot-dependent Britons when Rome withdrew!), that’s not really the point. Even if our technological society never falters — even if we soar to greater and greater heights of prosperity and can afford to automate and mechanize more and more of our interface with the world — Langlands argues that would just mean more missing out.

Jane Psmith, “REVIEW: Cræft, by Alexander Langlands”, Mr. and Mrs. Psmith’s Bookshelf, 2025-03-24.

1. As in modern German and Dutch, Old English used the ge- prefix for past participles.

2. For more on Old English cræft, especially in the Alfredian corpus, see here. Langlands quotes from the late Peter Clemoes, who wrote extensively on the topic, but no obliging Kazakh has put that online for me.

3. This is a fascinating word choice, because virtus is also a complicated and interesting word; it’s derived from the Latin word for man, vir, and means things like “force” but also “manliness” or “bravery” (like Greek ἀνδρεία). In the classical world, it came to mean something like moral worth or excellence in a particularly masculine way, and though it was adopted as a western Christian term for something like spiritual ἀρετή, it retained some of those echoes.