When Voltaire made his often-quoted statement that the country of Britain has “a hundred religions and only one sauce”, he was saying something which was untrue and which is equally untrue today, but which might still be echoed in good faith by a foreign visitor who made only a brief stay and drew his impressions from hotels and restaurants. For the first thing to be noticed about British cookery is that it is best studied in private houses, and more particularly in the homes of the middle-class and working-class masses who have not become Europeanised in their tastes. Cheap restaurants in Britain are almost invariably bad, while in expensive restaurants the cookery is almost always French, or imitation French. In the kind of food eaten, and even in the hours at which meals are taken and the names by which they are called, there is a definite cultural division between the upper-class minority and the big mass who have preserved the habits of their ancestors.

Generalising further, one may say that the characteristic British diet is a simple, rather heavy, perhaps slightly barbarous diet, drawing much of its virtue from the excellence of the local materials, and with its main emphasis on sugar and animal fats. It is the diet of a wet northern country where butter is plentiful and vegetable oils are scarce, where hot drinks are acceptable at most hours of the day, and where all the spices and some of the stronger-tasting herbs are exotic products. Garlic, for instance, is unknown in British cookery proper: on the other hand mint, which is completely neglected in some European countries, figures largely. In general, British people prefer sweet things to spicy things, and they combine sugar with meat in a way that is seldom seen elsewhere.

Finally, it must be remembered that in talking about “British cookery” one is referring to the characteristic native diet of the British Isles and not necessarily to the food that the average British citizen eats at this moment. Quite apart from the economic difference between the various blocks of the population, there is the stringent food rationing which has now been in operation for six years. In talking of British cookery, therefore, one is talking of the past or the future – of dishes that the British people now see somewhat rarely, but which they would gladly eat if they had the chance, and which they did eat fairly frequently up to 1939.

George Orwell, “British Cookery”, 1946. (Originally commissioned by the British Council, but refused by them and later published in abbreviated form.)

November 9, 2019

QotD: British Cookery

November 7, 2019

Blanchard’s transsexualism typology

At Quillette, Louise Perry interviews Dr. Ray Blanchard about transsexualism, and his controversial-in-the-LGBT-community typology of transsexualism:

Ray Blanchard is an adjunct Professor of Psychiatry at the University of Toronto who specialises in the study of human sexuality, with a particular focus on sexual orientation, paraphilias, and gender identity disorders. In the 1980s and 1990s he developed a theory around the causes of gender dysphoria in natal males that became known as “Blanchard’s transsexualism typology”. This typology, which continues to attract a great deal of controversy, categorizes trans women (that is, natal males who identify as women) into two discrete groups.

The first group is composed of “androphilic” (sometimes termed “homosexual”) trans women, who are exclusively sexually attracted to men and are markedly feminine in behaviour and appearance from a young age. They typically begin the process of medical transition before the age of 30.

The second group are motivated to transition as a result of what Blanchard termed “autogynephilia”: a sexual orientation defined by sexual arousal at the thought or image of oneself as a woman. Autogynephiles are typically sexually attracted to women, although they may also identify as asexual or bisexual. They are more likely to transition later in life and to have been conventionally masculine in presentation up until that point.

Although Blanchard’s typology is supported by a wide range of sexologists and other researchers, it is strongly rejected by most trans activists who dispute the existence of autogynephilia. The medical historian Alice Dreger, whose 2015 book Galileo’s Middle Finger included an account of the autogynephilia controversy, summarises the conflict:

There’s a critical difference between autogynephilia and most other sexual orientations: Most other orientations aren’t erotically disrupted simply by being labeled. When you call a typical gay man homosexual, you’re not disturbing his sexual hopes and desires. By contrast, autogynephilia is perhaps best understood as a love that would really rather we didn’t speak its name. The ultimate eroticism of autogynephilia lies in the idea of really becoming or being a woman, not in being a natal male who desires to be a woman.

I interviewed Blanchard over email and Skype. The text has been lightly edited for clarity.

QotD: Evolved sexual differences

Because male sexuality is all about the visuals, men’s magazines are filled with pictures of naked women with freakishly large breasts and women’s magazines are filled with pictures of beauty products and ass-cantilevering $2,000 stilettos. Men evolved to go for signs of reproductively hot prospects — an hourglass figure, youth, clear skin, symmetrical faces and bodies, and long shiny hair: all indicators that a woman is a healthy, fertile candidate to pass on a man’s genes.

Women co-evolved to try to make themselves look reproductively hot, though that’s not how we think of it. […] Because men are turned on by disembodied photos of boobs, butts, and coochies, they’re quick to pull down their pants, click their cameraphone, and text some woman they just met a close-up of their zipperwurst. Really bad idea.

Men who’ve done this should pick up a Harlequin romance, which is basically porn for women (from the ravishing by some hot gazillionaire to the final commitment-gasm).

See any photo spreads of male crotch shots tucked in there anywhere, boys?

This is not an error of omission. Women aren’t fantasizing about seeing your willy; they’re fantasizing that somebody in the royal family will pluck them out of suburbia and marry them in Westminster Abbey.

Amy Alkon, Good Manners for Nice People Who Sometimes Say F*ck, 2014.

November 6, 2019

Whitewashing the Vikings

Sarah Hoyt notices the way history is being presented to subtly (or not-so-subtly) denigrate the recent past and idealize (some of) the distant past:

Their distortion of history so that everything America ever did is wrong and evil-bad is designed to make our own kids hate their own country and imagine themselves as “citizens of the world” which is to say citizens of nowhere.

Which in turn allows for wide open borders which bring in the population of 3rd world serfs the statists count on to keep them in power forever.

For the last ten years I’ve been disquieted and disturbed by the persistent myth of: Our ancestors were far more cleanly, happy and prosperous than we think. Yep. Your foot-in-the-mud ancestor didn’t suffer under the lash of his feudal overlord. Oh, no. He had hot running water, regular baths, religious holidays off and–

Spits. And the girls sang as they wove garlands on Mayday, I suppose.

Most of these myths are arrant nonsense. Some are arrant nonsense on stilts with a dash of oikophobia thrown in.

I’ve mentioned here that I went to the Viking exhibit at the museum some years back, and it was all about how free and egalitarian the Vikings were, male and female. Which I suppose was true, if you miss the large component of slavery. And the fact that they raided foreign shores for slaves and loot. And that almost every skilled artisan was a slave. And–

Then there is the continuous “The Vikings were much cleaner than the Christians and women preferred them.”

First let’s cut the crap. We have zero clue if women preferred them. When the raiders come to town, they don’t stop to ask the fleeing women to sign “affirmative consent” forms.

Second, yeah, I’m sure in some Viking villages they were cleaner. We do have reason to suspect some areas had functioning saunas. But then some of the areas raided had functioning Roman baths still extant.

I’m sure for some times and places, that was true. I’m also absolutely sure that for most times and places the Vikings were about as clean as everyone else, which is to say not very, due to the lack of easy-accessible soap (yes, it existed. In certain times and places. NOT everywhere and not of a kind you’d want to use on your skin) of easily accessible acceptable-temperature water, and/or of warm enough places to bathe in.

No, medieval people weren’t as utterly filthy as it’s imagined (though there were some, I’m sure) but I’m also utterly sure, having experienced this in a temperate climate, that washing in winter would be limited, careful, and therefore maybe not as thorough as we imagine. Or to put it another way, when the Victorians went on about catching a chill, they weren’t just blowing smoke, guys. People didn’t willingly strip down and dip in lukewarm water in the dead of winter and when clothes would take forever to dry, unless they had other clothes, and facilities for getting warm right after.

In other words, Vikings and the rest of the Middle Ages were, from our POV a little wiffy. As were most places until the late 20th century.

So why the cleanly and perfumed Vikings (Particularly since the records of the time don’t support this view, except in very few, highly publicized circumstances?)

Oh, that’s the “don’t go imagining Christians were better” wing of the oikophobe chorus. They will tell us Christians were filthy. The pagans, on the other hand, were cleanly and perfumed.

Weirdly the one people we know were cleaner than Christians, also more literate and prone to less domestic violence never come in for praise in these comparisons. I suspect being part of the foundational build of the West, the Jews aren’t considered “wonderfully other” enough. Or given some of the recent bs on the left and the people they embrace, perhaps it’s a hate thing.

BTW that Christians being filthy is bullshit. Later on, in defense of “but medieval people weren’t that filthy” they’ll bring in the injunction to change your underwear daily. Which is more than a little confusing when you researched the heck out of “underwear use” in various places in the renaissance and know most women at least wore none. Eventually you find out the injunction to change underwear was in monasteries. Monk’s orders in fact, also had various guidelines on cleanliness which, for their time, were amazingly enlightened. Even if, yes, by our standards, they were all a bit wiffy.

The same applies to a ton of other things. These revisionists tell us they ate better than we think, oh, and by the way, except for infant mortality they lived as long as we do.

All this is insanity on stilts.

October 15, 2019

QotD: Over-protected children become insecure adults

Kids need conflict, insult, exclusion – they need to experience these things thousands of times when they’re young in order to develop into psychologically mature adults. Every adult has to learn to handle these things and not get upset, especially by minor instances. But in the name of protecting our children we have deprived them of the unsupervised time they need to learn how to navigate conflict among themselves. That is one of the main reasons why kids and even college students today find words, ideas and social situations more intolerable than those same words, ideas and situations would have been for previous generations of students.

Jonathan Haidt, quoted by Naomi Firsht, “The Fragile Generation”, Spiked, 2017-08-31.

October 4, 2019

“Economics is … the science of not being able to have your cake and eat it”

Philip Booth on Greta Thunberg’s message and its economic over-simplifications:

In many senses, economic problems are more complex than scientific problems and Thunberg is, implicitly at least, pronouncing on economic matters. Whilst knowledge about climate science is uncertain, a judgement has to be and can be made on the balance of evidence. But economic decisions involve trade-offs. Economics is, as Lionel Robbins put it, the science of not being able to have your cake and eat it. We cannot both decrease carbon emissions hugely and enjoy standards of living increasing at the rate that would have been possible if emissions were not reduced.

It is tempting to believe the green rhetoric that we will all have fluffy green jobs and a green standard of living without any hardship from reducing emissions. We cannot. Reducing carbon emissions quickly to zero means that we will have much less of everything else. We might prefer decarbonisation to other goods and services, but it is not a cost-free choice. When considering this, we should remember that the average income in the UK is ten times the average income in the rest of the world. When other people face these trade-offs the sacrifice of decarbonisation is that much greater.

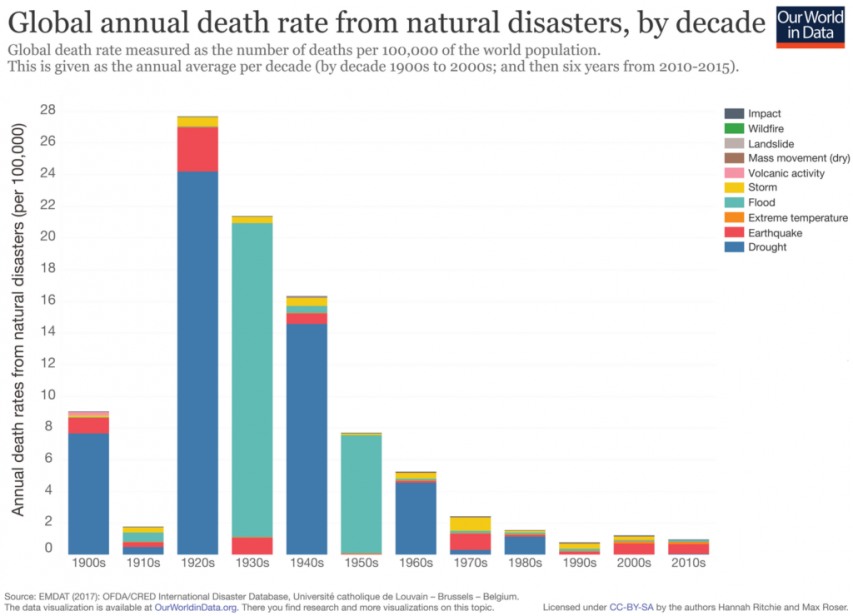

One of the advantages of being richer is that we are more resilient to natural disasters. It follows from this that there is a trade-off between decarbonisation, which might lead to fewer natural disasters, and our ability to cope with them, which might reduce if we become less rich. As we have become richer, deaths from natural disasters have plummeted. The figure shows the fall in deaths in natural disasters over the last century – they have reduced by, perhaps, 90 per cent.

The use of air conditioning illustrates this trade-off in a rather stark way. In a letter on the environment written by Pope Francis in 2015 called Laudato si’, the pontiff criticised the adoption of air conditioning in the strongest terms. An academic paper on air conditioning in the US produced such remarkable results that the abstract is worth quoting at length:

“the mortality effect of an extremely hot day declined by about 80% between 1900-1959 and 1960-2004. As a consequence, days with temperatures exceeding 90°F were responsible for about 600 premature fatalities annually in the 1960-2004 period, compared to the approximately 3,600 premature fatalities that would have occurred if the temperature-mortality relationship from before 1960 still prevailed. Second, the adoption of residential air conditioning (AC) explains essentially the entire decline in the temperature-mortality relationship. In contrast, increased access to electricity and health care seem not to affect mortality on extremely hot days.”

Air conditioning leads to higher carbon emissions and, most likely, higher global temperatures. But the increase in resilience arising from air conditioning is astonishing – it has led to an 80 per cent drop in deaths from heat.
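As a quick check on the arithmetic, the two fatality figures quoted in the abstract can be compared directly. This is only a minimal sketch: the function and variable names are illustrative, and the only inputs are the 600 and 3,600 annual figures quoted above.

```python
# Annual premature fatalities on days above 90°F, as quoted in the abstract.
observed_deaths = 600          # 1960-2004 period
counterfactual_deaths = 3600   # if the pre-1960 temperature-mortality relationship still held


def relative_reduction(counterfactual: float, observed: float) -> float:
    """Fraction by which `observed` falls short of `counterfactual`."""
    return (counterfactual - observed) / counterfactual


reduction = relative_reduction(counterfactual_deaths, observed_deaths)
print(f"Relative reduction: {reduction:.1%}")  # prints "Relative reduction: 83.3%"
```

On these two numbers alone the reduction works out to a little over 83 per cent, which is broadly in line with the "about 80%" decline the abstract reports.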

September 11, 2019

QotD: Male versus female social structure

Female social structure is completely different from male social structure. Men tend to organize in a hierarchy of sorts. There’s one guy at the top, a few guys he trusts underneath him, etc. downward until you reach the guys that are on the bottom of the pile. The structure makes sense. It’s efficient, everyone knows who’s in charge, and it’s largely based around the individual. Someone can rise or fall in this hierarchy based on any number of things, but their position is usually pretty clear from the outside looking in.

Female social structure is more fluid. It’s based more on group identity than on individual characteristics. You tend to have one woman who is kind of in charge, your queen bee as it were. Those in her favor circle around her, and the circles continue outward until you have the women who are not part of the group. They aren’t at the bottom of the pile, they pretty much don’t exist. At least, if they’re lucky they don’t. One’s position in the social hierarchy can change at any time, to include who the queen bee is. Remember the scene in Mean Girls where Regina tries to sit at the lunch table with the other plastics but isn’t wearing the right color clothes? She was just knocked out of her position in the social hierarchy.

In a social structure like this, there’s no room for difference of opinion or people who stand out too much. If an individual, even by noncompliance, threatens the group identity, she will gain the ire of the entire group. They will hunt her without mercy until she complies with the demands of the group, is removed from their reach by either death or physical distance (which is getting much harder with the internet), or manages to win enough females to her side to either form a new group around her or replace the existing leader. The last option allows her to push her standard on the group as a whole. There is no option to live and let live. In other words, female social structure is closer to the Borg than the complex world we currently enjoy.

I can already hear the cries of, “But women aren’t violent!” If you’re talking about direct violence, you’re mostly right. Women are not men, and we don’t hunt in the way that men do. Our weapons are usually words and emotions, not direct violence. Instead of a brawl in which two people fight it out and determine which is the strongest, you’re looking at extreme emotional abuse for anyone who is outside the group and unlucky enough to have their attention. Emotional abuse attacks a person in the most deeply personal of ways, and the scars it creates are more damaging than the physical scars of a beating. We wonder why girls who stand out grow so bitter, but if you’re a little too pretty or a little too different, other girls swarm on you and rip you apart with constant emotional abuse. They attack who you are at the base of your being, your very self-perception. This is why you see truly beautiful women who struggle to see their worth. Other girls have been ripping into them since the nursery, and it only gets worse as they get older. Boys court you and try in their awkward boyish way to get your attention, but you’re mostly convinced that it’s a cruel joke they’re playing on you, because of course you aren’t pretty or smart or special. The scars run deep and never fully heal.

“outofthedarkness”, guest posting at According to Hoyt, 2017-08-09.

September 10, 2019

We’ve noticed this too…

Sarah Hoyt on the increasing “green-ness” of her appliances — and the increasing uselessness of same:

“A-rated energy appliances” by Tom Raftery is licensed under CC BY-NC-SA 2.0

For years we got expensive front loaders, and yet our clothes kept smelling, there were stains that would not come out, and these things seemed to last only 5 years, on the outside. And I knew it wasn’t our problem, as such, because at the same time we started noticing we couldn’t get our clothes clean, the detergent aisle of the supermarket sprouted an entire section of odor removing things, Febreze got added to detergents, and, in general, people smelled odd …

Then the washer broke while we were also very, very broke (we were paying mortgage and rent in the run up to buying this). I saw an ad for, I THINK a $300 washer, and we went to look. What we found, instead, was a $200, not advertised washer. As we’re looking at it the saleswoman hurries over and tells us we don’t want it. This washer, she says, uses lots of water. For those who don’t know I suffer from an unusual form of eczema. While it’s triggered mostly by stress with a side of carbs, it can also, out of the blue, take offense at a slight trace of detergent left on the clothes. I’ve found that the eczema got markedly worse the less water the washer used. And it required me to run the washer three times, once with soap and two without to avoid major outbreaks. The idea of using lots and lots of water was great, so I was all excited. Which shocked the poor saleswoman halfway to death. I will point out, though, that this washer washes well enough I can get away with only one extra rinse cycle and if I forget it it’s usually survivable. Also, our clothes don’t smell. Unfortunately, we’ve not found that [type] of washer any of the times we’ve walked through the appliance aisle, so I think that choice has been eliminated.

Certainly the choice of dishwashers that use “lots” (i.e. what they used 20 years ago) of water and electricity was never offered to us. And since we seemed to have really lousy luck with dishwashers, I found every time we replaced one over the last 20 years, they had less space for dishes (more insulation, to allow for less electricity) to the point that I needed to do 3 or even 4 loads for a family of four. I mean, I cook from scratch, but I really don’t use that much stuff. And it ran slower than before. Right now our dishwasher actually washes (a bonus) but it takes four hours to run a cycle. I rarely do more than one wash a day, though, because it’s just Dan and I, and we … well … the kids used a lot more glasses and little plates, and frankly meals get more complicated for four people.

All the same, there was a time there, for like 10 years, where we were running all this “green” approved stuff, and not only was I running the washer and drier more or less continuously, but to make things more “interesting” I was using MORE water and electricity, in the sense that I was running the appliances a ton more.

This of course is what I also found with the “low flush” toilets. We had them in our previous house, and we found that we spent an inordinate amount of time flushing the toilet. Also, since it took four or five flushes to do the job of one, the fact we were actually only using half the water per flush didn’t save any water. We spent instead twice to three times the amount of water the “high flush” toilets had spent.

All this, btw, to appease Paul Ehrlich — the prophet of wrong. As in, if he foresees something it will be wrong — and his ridiculous idea we’d run out of potable water in 1978. Apparently none of these people have noticed that 1978 has come and gone with no problems. And as for electricity, if they stop their idiocy about nuclear, it’s not even a consideration. (And no, Chernobyl isn’t a caution about nuclear energy. It’s a caution about stupid communist regimes. They can’t run anything — not even a nuclear plant — without destroying it.)

Lightbulbs are another favourite … several years back, our provincial government was pushing us all to get rid of our old incandescent bulbs and replace them with these great new energy-efficient compact fluorescent bulbs. The new CFLs cost roughly ten times as much as the old bulbs, but we were assured up and down that they’d last twenty times longer, so we’d not only save money on electricity, but also have to replace the bulbs so infrequently. Of course, the CFL bulbs were pathetically bad — not only were they expensive, the light they gave was (at best) marginal and they didn’t even last as long as typical incandescent bulbs.

So now, of course, we’re being encouraged to use LED bulbs. Sure, they’re more expensive than the old incandescent bulbs, but they save on electricity and last many times longer! Except, of course, they don’t. The old incandescent bulbs in my office started to fail one after another, so when I was down to only one working bulb, I gave in and bought four replacement LED bulbs … they were on sale for only 2-3 times as much as the old bulbs! That was in March. I’ve already had to replace two of the LED bulbs. This is starting to feel familiar…

On the bright side (pun unintentional), the LED bulbs don’t provide the entertainment of a toxic waste cleanup in your home the way the CFL bulbs did when they were broken.
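For what it’s worth, the purchase-price side of the CFL promise is easy to sketch. The snippet below is a back-of-the-envelope illustration only: it ignores electricity savings entirely, the function and parameter names are made up for the example, and the only inputs are the "ten times the price" and "twenty times the life" figures mentioned above.

```python
def cost_per_hour_ratio(price_multiple: float, lifetime_multiple: float) -> float:
    """Purchase cost per hour of light relative to the old bulb (1.0 means the same)."""
    return price_multiple / lifetime_multiple


# What was promised: ten times the price, twenty times the life.
promised = cost_per_hour_ratio(price_multiple=10, lifetime_multiple=20)

# Assumed best case for what actually happened: ten times the price, same life.
no_better = cost_per_hour_ratio(price_multiple=10, lifetime_multiple=1)

print(promised, no_better)  # prints "0.5 10.0"
```

If the promise had held, each hour of light would have cost half as much in bulbs. With a bulb that lasts no longer than an incandescent, the same hour costs at least ten times as much.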

July 24, 2019

Wait, you mean there might be a downside to cannabis legalization?

As a libertarian of long standing, I’m on the record as being in favour of legalizing cannabis since long before it was cool (geeky and perpetually uncool libertarians probably helped keep it from being cool for at least a few years longer). I’m not enthused to hear that we may have been undersold on the risks of cannabis use … not that the government didn’t try telling us it was deadly, deadly poison (they did, repeatedly, and at great length), but they institutionalized the role of the boy who cried wolf, and every illegal narcotic got basically the same description. I’m actually not kidding here: the first health class I got in middle school included a lecture and a pamphlet on the dangers of pot; the second class covered the dangers of cocaine; the third warned against LSD; and so on … but they used a copy/paste to discuss the physical and mental risks of the different drugs, and they all read the same way. All those evil drugs are evil, bad, and rot your brain. Knowing that the pothead (“Hi, Gary!”) at the back of the class hadn’t suddenly had a psychotic break and tried to fly off the top of the school was the first hint that we were being oversold on the real world risks of (some) illegal drug use. The declared fact that some illegal narcotics actually are deadly, deadly poison ran up against the observed fact that a significant majority of people over the age of fifteen had tried cannabis and found it somewhat less scary than advertised.

Along with the beginnings of doubt that the government was being honest with us, and the clear understanding that even if using drugs wasn’t as dangerous as we were told, we shared a growing awareness that being caught with drugs by the police was significantly more dangerous and possibly deadly. Officer Friendly would shoot you down like a mad dog if he thought you were one’o’them drug-crazed hippies. It certainly changed the social dynamics of any interaction with Officer Friendly’s fellow heavily armed co-workers…

In the National Post, Barbara Kay suggests that not all the dangers of cannabis use were mere government propaganda:

Some years ago, in conversation with his wife, a forensic psychiatrist specializing in mentally ill criminals, former New York Times reporter Alex Berenson observed that the perpetrator of a recent violent crime had been high at the time, and had smoked pot regularly all his life. Her response — “Yeah, they all do” — jolted him. The result was his book, Tell Your Children: The Truth About Marijuana, Mental Illness and Violence.

Much of the referenced material in Berenson’s book had not yet been published a decade ago. But more recent studies only confirm what a few intrepid researchers were already warning about then.

Indeed, as I noted in a 2008 column, the head of the Medical Research Council in the U.K., Professor Colin Blakemore, who in 1997 had been the moral authority behind a pot-legalization campaign, unequivocally reversed his pot-friendly stance in 2007, stating: “The link between cannabis and psychosis is quite clear now; it wasn’t 10 years ago.”

If you haven’t energy for a whole book, but would invest in 16 pages on the subject, you will be well rewarded by Steven Malanga’s in-depth article, “The Marijuana Delusion,” in City Journal’s June issue. Here you will find debunked the blithe claim, still received as gospel by progressives and libertarians, that pot is virtually harmless and even therapeutic.

Unlike marijuana, real medications are deeply researched before coming on the market, and may attest to proven benefits, but are obligated to admit potential harms. Is pot a medicinal drug or a placebo? Nobody really knows. One may argue “who cares, as long as it works” (anecdotally I hear that pot works, and also that it doesn’t work), but that isn’t the point, since the legalization movement made medical claims for pot in order to bring the public onside politically. There was no will on the movement’s side to discover even radically fortified pot’s downsides.

The knowledge was out there for those interested. In 1987 a study of nearly 50,000 Swedish military conscripts followed for drug use over 15 years found that frequent pot use in teenhood was linked to a six-fold risk of schizophrenia as compared with non-usage. A 2004 meta-analysis of studies on pot use came to a similar conclusion. These studies, and others, are suggestive that heavy marijuana consumption, particularly in youth, may cause serious mental health problems. Yes, it is possible that the link isn’t entirely causal; people with mental health issues may be more likely to use marijuana heavily. But at the very least, this ought to be an issue of ongoing concern, particularly now that marijuana is legal in Canada and in an increasing number of U.S. states.

July 21, 2019

The humble egg – wonder food or deadly poison?

If you’ve paid any attention to popular reporting on nutrition studies over the years, you’ll have noticed how just about any advice on food has not only changed, but has often been completely the opposite of advice offered just a few years earlier. During my teenage years, the egg was pushed (thanks in part to the “Egg Marketing Board”, one of Canada’s supply management bureaucracies) as “the perfect food”. During the next decade, as newer nutrition studies were published, suddenly the wonderful, nutritious egg was now a huge risk to your cardiovascular health and even one egg per week might be enough to kill you. Rinse and repeat for so many other foods and you either stop eating altogether or, more sensibly, stop paying any attention at all to mainstream media interpretations of nutrition studies.

It’s been a tortuous path for the humble egg. For much of our history, it was a staple of the American breakfast — as in, bacon and eggs. Then, starting in the late 1970s and early 1980s, it began to be disparaged as a dangerous source of artery-clogging cholesterol, a probable culprit behind Americans’ exceptionally high rates of heart attack and stroke. Then, in the past few years, the chicken egg was redeemed and once again touted as an excellent source of protein, unique antioxidants like lutein and zeaxanthin, and many vitamins and minerals, including riboflavin and selenium, all in a fairly low-calorie package.

This March, a study published in JAMA put the egg back on the hot seat. It found that the amount of cholesterol in a bit less than two large eggs a day was associated with an increase in a person’s risk of cardiovascular disease and death by 17 percent and 18 percent, respectively. The risks grow with every additional half egg. It was a really large study, too — with nearly 30,000 participants — which suggests it should be fairly reliable.

So which is it? Is the egg good or bad? And, while we are on the subject, when so much of what we are told about diet, health, and weight loss is inconsistent and contradictory, can we believe any of it?

Quite frankly, probably not. Nutrition research tends to be unreliable because nearly all of it is based on observational studies, which are imprecise, have no controls, and don’t follow an experimental method. As nutrition-research critics Edward Archer and Carl Lavie have put it, “‘Nutrition’ is now a degenerating research paradigm in which scientifically illiterate methods, meaningless data, and consensus-driven censorship dominate the empirical landscape.”

Other nutrition research critics, such as John Ioannidis of Stanford University, have been similarly scathing in their commentary. They point out that observational nutrition studies are essentially just surveys: Researchers ask a group of study participants — a cohort — what they eat and how often, then they track the cohort over time to see what, if any, health conditions the study participants develop.

H/T to Marina Fontaine for the link.

July 20, 2019

QotD: Spices

Why do we use spices in our foods? In thinking about this question keep in mind that (1) other animals don’t spice their foods, (2) most spices contribute little or no nutrition to our diets, and (3) the active ingredients in many spices are actually aversive chemicals, which evolved to keep insects, fungi, bacteria, mammals and other unwanted critters away from the plants that produce them.

Several lines of evidence indicate that spicing may represent a class of cultural adaptations to the problem of food-borne pathogens. Many spices are antimicrobials that can kill pathogens in foods. Globally, common spices are onions, pepper, garlic, cilantro, chili peppers (capsicum) and bay leaves. Here’s the idea: the use of many spices represents a cultural adaptation to the problem of pathogens in food, especially in meat. This challenge would have been most important before refrigerators came on the scene. To examine this, two biologists, Jennifer Billing and Paul Sherman, collected 4578 recipes from traditional cookbooks from populations around the world. They found three distinct patterns.

1. Spices are, in fact, antimicrobial. The most common spices in the world are also the most effective against bacteria. Some spices are also fungicides. Combinations of spices have synergistic effects, which may explain why ingredients like “chili powder” (a mix of red pepper, onion, paprika, garlic, cumin and oregano) are so important. And, ingredients like lemon and lime, which are not on their own potent antimicrobials, appear to catalyze the bacteria-killing effects of other spices.

2. People in hotter climates use more spices, and more of the most effective bacteria killers. In India and Indonesia, for example, most recipes used many antimicrobial spices, including onions, garlic, capsicum and coriander. Meanwhile, in Norway, recipes use some black pepper and occasionally a bit of parsley or lemon, but that’s about it.

3. Recipes appear to use spices in ways that increase their effectiveness. Some spices, like onions and garlic, whose killing power is resistant to heating, are deployed in the cooking process. Other spices like cilantro, whose antimicrobial properties might be damaged by heating, are added fresh in recipes.

Thus, many recipes and preferences appear to be cultural adaptations adapted to local environments that operate in subtle and nuanced ways not understood by those of us who love spicy foods. Billing and Sherman speculate that these evolved culturally, as healthier, more fertile and more successful families were preferentially imitated by less successful ones. This is quite plausible given what we know about our species’ evolved psychology for cultural learning, including specifically cultural learning about foods and plants.

Among spices, chili peppers are an ideal case. Chili peppers were the primary spice of New World cuisines, prior to the arrival of Europeans, and are now routinely consumed by about a quarter of all adults, globally. Chili peppers have evolved chemical defenses, based on capsaicin, that make them aversive to mammals and rodents but desirable to birds. In mammals, capsaicin directly activates a pain channel (TrpV1), which creates a burning sensation in response to various specific stimuli, including acid, high temperatures and allyl isothiocyanate (which is found in mustard or wasabi). These chemical weapons aid chili pepper plants in their survival and reproduction, as birds provide a better dispersal system for the plants’ seeds than other options (like mammals). Consequently, chilies are innately aversive to non-human primates, babies and many human adults. Capsaicin is so innately aversive that nursing mothers are advised to avoid chili peppers, lest their infants reject their breast (milk), and some societies even put capsicum on mom’s breasts to initiate weaning. Yet, adults who live in hot climates regularly incorporate chilies into their recipes. And, those who grow up among people who enjoy eating chili peppers not only eat chilies but love eating them. How do we come to like the experience of burning and sweating — the activation of pain channel TrpV1?

Research by psychologist Paul Rozin shows that people come to enjoy the experience of eating chili peppers mostly by re-interpreting the pain signals caused by capsaicin as pleasure or excitement. Based on work in the highlands of Mexico, children acquire this gradually without being pressured or compelled. They want to learn to like chili peppers, to be like those they admire. This fits with what we’ve already seen: children readily acquire food preferences from older peers. In Chapter 14, we further examine how cultural learning can alter our bodies’ physiological response to pain, and specifically to electric shocks. The bottom line is that culture can overpower our innate mammalian aversions, when necessary and without us knowing it.

Joseph Henrich, The Secret of Our Success: How Culture Is Driving Human Evolution, Domesticating Our Species, and Making Us Smarter, 2015.

July 17, 2019

QotD: “The United States government [became] the greatest and most potent maker of criminals in any recent century”

For most of the history of the United States, drugs were legal. People could buy opiates and cocaine-based products from their local pharmacy. An opiate-laced brew called Mrs. Winslow’s Soothing Syrup, for example, was particularly popular with housewives. One person who viewed this legal system with skepticism was a Los Angeles doctor named Henry Smith Williams. When a small number of his patients became addicted, he was disgusted, and he came to see them as despicable “weaklings.” So when opiates and cocaine were banned in 1914, he welcomed this first birth-pang of the drug war with glee.

But then he noticed what happened to his addicted patients. They didn’t stop using. Instead, “here were tens of thousands of people, in every walk of life, frantically craving drugs that they could in no legal way secure,” he wrote in one of his books. “They craved the drugs, as a man dying of thirst craves water. They must have the drugs at any hazard, at any cost.”

At the same time, Smith Williams realized that the drug war was “in effect ordering a company of drug smugglers into existence.” Because pharmacists could no longer sell these drugs, the Mafia and other criminal organizations stepped in, selling a vastly inferior product at extortionate prices. In the pharmacies, morphine had cost two or three cents a grain, but the criminal gangs charged a dollar.

The death rate among addicts rose, and those who survived began to behave very differently. An official government study had found that, before the drug war kicked in, three-quarters of self-described addicts had steady and respectable jobs: some 22% were wealthy, while only 6% were poor. They were more sedate as a result of their addiction, but they were rarely out of control or criminal. Yet faced with the need to meet these extortionate new prices, many of the men started to commit property crimes, and many of the women started to steal or prostitute themselves.

So Smith Williams watched as the drug war created two waves of crime: first a wave of violent criminal drug-dealers, and then a wave of criminality among addicts. “The United States government,” Henry wrote in shock, had become “the greatest and most potent maker of criminals in any recent century.”

Johann Hari, “A 1930s California story shows why the war on drugs is a failure”, Los Angeles Times, 2017-06-16.

July 7, 2019

Does this sound like your gym?

Instapundit linked to this older Sean Kelly article about the “Planet Fitness” chain of health clubs. According to him, it’s even less pretty than you might have thought:

“Planet Fitness” by JeepersMedia is licensed under CC BY 2.0

Here’s what you need to know…

- Planet Fitness: The gym for people who don’t really want to get in shape, owned by people who really can’t afford for the members to be there.

- A survey of over 20 different Planet Fitness locations in 12 different states revealed that they provide no nutritional guidance. They do however supply candy and pizza.

- Planet Fitness seems to promise that health and fitness will ultimately be comfortable and not involve any real effort.

- Planet Fitness is a big, purple-colored adult daycare marketed to people afraid to go to an actual gym.

- Many Planet Fitness members do want to make progress of course, but the gym’s own rules and operating guidelines seem to dissuade this.

July 4, 2019

Assorted green scams

David Warren briefly returns to the current day (away from his normal 13th-century preferences) to look at a few of the many green scams being run by various government and industry scam artists:

Speaking with a gentleman who vends in a neighbourhood farmers’ market, I learnt something interesting, and probably true. Surviving family farms usually lack “organic” credentials. This is because getting them, from the bureaucracies that dispense them, is an immensely time-consuming process, and involves costs that would erase most of the little farmer’s profits. You have to be a big, faceless, industrial operation to afford the official “organic” labels that sucker big city consumers into paying double for essentially the same goods. That the whole system is massively corrupt, can almost go without saying. It was designed to be.

Organic scams are far from new, but they are perhaps more insidious because corporations love to slap that “organic” label on products to jack up the prices of all sorts of things, like spices, wine, and many, many other items. Restaurants pull the same trick on their menus, frequently assuming nobody will ever check up on them. That said, it’s mostly the well-off who get fooled because, well, they’re eager to be fooled on that score. The US government even admitted that organic certification is not about food safety or nutrition: it’s all marketing.

By coincidence, the same day my eye caught, by accident on the Internet, the announcement of a Green Award to a big car assembly “park.” They had changed all the light bulbs in their factory buildings, thus saving themselves a few thousand dollars on their multi-million electric bill, and seem to have installed new toilets, too. This sprawling high-tech carriage works remains three hundred acres of unspeakable aesthetic horror, in which human beings are enslaved to machines. But now it is “Green.”

The greenwashing of modern industrial and commercial buildings is a long-running scam, with the much-desired “LEED Platinum” certification usually, if not always, awarded to those who game the system most successfully. “What LEED designers deliver is what most LEED building owners want – namely, green publicity, not energy savings.”

The environmental business — currently buoyed by unprovable, often fatuous claims of anthropogenic global warming — is perhaps the most cynical. It has spawned vested interests on a global scale, that will not be overturned by occasional exposure. At its heart is the manipulation of statistics, and scare-mongering through compliant mass media. The general public are hypnotized by repetition. I have noticed in desultory dips into the news that e.g. anomalous weather will invariably be attributed to “climate change,” when more plausible explanations are easily at hand.

This zombification extends to most other areas of reportage: invisible bogeys blamed for imaginary trends. Solutions to “environmental problems” are proposed that will not make the slightest dent in them.

Of course, the constant demands for “clean energy” almost always explicitly reject the use of nuclear power because reasons.

But nuclear power, most easily in the form of molten salt reactors (on which research was killed fifty years ago), could replace most uses of coal, oil, and gas within a decade, through much smaller facilities eliminating huge transmission costs. It would be the cheaper because the fuels are readily available to start in the form of recycled nuclear waste, and the raw materials would be abundantly available thereafter.

On the question of safety, the death toll from mining, drilling, hydro dams, &c, is quite considerable — in the tens of thousands at least, post-War. Except for Chernobyl (one of many Soviet-era environmental disasters), the death toll from nuclear accidents remains about nil. No one died at Three Mile Island. Not one death was caused by the flooded Fukushima reactors (though well over twenty thousand were killed by the tsunami that caused the difficulty there).

In short, “clean energy” is not a problem. It had to be made into one by the fright campaigns of the environmentalcases, whose own power and income depends on sustaining the problem, and preventing the most obvious solutions.

June 28, 2019

QotD: “Intelligence” is just a noun

Howard Gardner has also convinced us that the word intelligence carries with it undue affect and political baggage. It is still a useful word, but we shall subsequently employ the more neutral term cognitive ability as often as possible to refer to the concept that we have hitherto called intelligence, just as we will use IQ as a generic synonym for intelligence test score. Since cognitive ability is an uneuphonious phrase, we lapse often so as to make the text readable. But at least we hope that it will help you think of intelligence as just a noun, not an accolade.

We have said that we will be drawing most heavily on data from the classical tradition. That implies that we also accept certain conclusions undergirding that tradition. To draw the strands of our perspective together and to set the stage for the rest of the book, let us set them down explicitly. Here are six conclusions regarding tests of cognitive ability, drawn from the classical tradition, that are by now beyond significant technical dispute:

- There is such a thing as a general factor of cognitive ability on which human beings differ.

- All standardized tests of academic aptitude or achievement measure this general factor to some degree, but IQ tests expressly designed for that purpose measure it most accurately.

- IQ scores match, to a first degree, whatever it is that people mean when they use the word intelligent or smart in ordinary language.

- IQ scores are stable, although not perfectly so, over much of a person’s life.

- Properly administered IQ tests are not demonstrably biased against social, economic, ethnic, or racial groups.

- Cognitive ability is substantially heritable, apparently no less than 40 percent and no more than 80 percent.

Charles Murray, “The Bell Curve Explained”, American Enterprise Institute, 2017-05-20.