Leys’s essays often combine delicacy with deep irony — a combination that few writers, especially in our times of stridency and parti pris, achieve. Here, for example, is the beginning of his essay “An Introduction to Confucius”: “If we consider humanity’s greatest teachers of wisdom — the Buddha, Confucius, Socrates, Jesus — we are struck by a curious paradox: today, not one of them could obtain even the most modest of teaching posts in any of our universities”. We laugh — which, of course, is the best tribute to the seriousness of the point that he is making. He goes on to explain, “The reason is simple: their qualifications are insufficient — they have published nothing”.

In two sentences, Leys has pinned, like a butterfly to an entomologist’s board, the bureaucratic sickness that has overtaken our institutions of higher learning (and not only those institutions). There is no madness more difficult to treat than that which believes itself sane, and there is no irrationality greater than that which believes itself perfect. It is no surprise that Leys retired early from his university chair because the university no longer bore any resemblance to what it had once been and misled students and the rest of society into believing it still was. A community of scholars had become an organization of foremen on a production line.

Theodore Dalrymple, “Rare and Common Sense”, First Things, 2017-11.

April 11, 2025

QotD: Teaching in modern universities

April 3, 2025

QotD: When the History Department at Flyover State committed slow motion suicide

It started when they hired a radical feminist lesbian. Radical by ivory tower standards, I mean, which as you can imagine is a bar so high, Mt. Everest could limbo under it without breaking a sweat. This was the kind of “scholar” whose work was like that dude we mentioned a while back, who claimed that the US Civil War was really about “gay rights” — something that’s not merely wrong, but impossible, as the mid-19th century lacked the conceptual vocabulary to even suggest such a thing. In other words, they hired this persyn to be professionally obnoxious, and xzhey were happy to oblige.

Now, you have to understand something about the academy at this point: Though these people are profoundly ideologically enstupidated, they’re still pretty cunning where their wallets are concerned. Indeed, that’s the whole reason they allow “scholarship” on left-handed LatinX truck drivers in the Ming Dynasty or whatever that persyn’s book was, to pull that kind of stunt — only “original” “research” gets published, and since all the true facts have been ascertained long ago, you have to make shit up if you want tenure. Publish or perish, baby!

A clever plan, but with one teensy tiny flaw: “Tenure” requires a university, and universities require students, which means that, while pretty much all professors hate teaching, they have to do it … and not only that, they have to actually appeal to those icky little deplorables, the students, in sufficient numbers to keep the faculty employed. If, back in your own college days, you wondered if maybe the only reason Western Civ I or whatever was required was that it gave Professor Jones something to do, congrats, you were right. But you can’t require History majors, and there’s only so much Western Civ to go around, which means you have to have 200- through 400-level classes that students actually want to take …

You can see where this is going, and to their credit, some of the faculty at Flyover State saw it, too. At the time, there were still enough upperclassman History majors (and grad students) that the class on LatinX truck drivers in the Ming Dynasty would fill … barely … but that situation obviously would not continue. Nor could you simply stick the new hire in Western Civ classes, because in addition to the other obvious problems, of course xzhey would immediately turn “Western Civ I” into “LatinX truck drivers in the Ming Dynasty … and maybe, if there’s time, the Roman Empire or some shit.”

You know what the Department ended up doing (hint: nothing), and so the first semester after the new hire went exactly like you knew it would. And so did the next, and the next, because as we all know, chicks of both sexes and however-many-we’re-up-to-now genders are herd animals. Hiring the radical lesbian gave all the slightly-less-radical lesbians, again of both sexes and however-many genders, permission to let their freak flag fly. Which, of course, they did. Pretty soon you couldn’t find a History class that wasn’t some bizarre, micro-specialized SJW mad lib. Sure, they’d still call it “The US in the Civil War Era” or “Modern Germany” or whatever, but the course description made it perfectly clear that the class was really about transsexual cabaret acts on the New York Bowery … and maybe, if there’s time, secession or some shit.

And soon enough there were no more History majors, and thus no more History Department. At one of the small schools that collectively make up “Flyover State”, the former History, Psychology, and Classics departments have been folded into something called the “Humanities Department” … to which, last I heard, the former English Department will soon be added.

Severian, “The Dunbar Problem”, Founding Questions, 2021-10-06.

March 24, 2025

Postcards from academia’s zombie apocalypse

Ted Gioia points out exactly why them there kids ain’t learnin’ no more:

[High school students] just care about the next fix — because that’s how addicts operate. They have no long term plan, just short term needs.

They can’t get back to their phones fast enough.

How bad is it for educators right now?

Check out this commentary from one experienced teacher, who finds more engaged students in prison than a college classroom.

This comes from Corey McCall, a member of The Honest Broker community who recently posted this comment:

I saw this decline in both reading ability and interest occur firsthand between 2006 and 2021 … I had experience teaching undergrads who hadn’t comprehended the material before, but hadn’t faced the challenge of students who could read it but who simply didn’t care …

Since 2021 I’ve been teaching part-time in prison, and incarcerated students really want to learn. They love to read and think along with authors such as Plato, Descartes, and Simone de Beauvoir. I am teaching Intro to Theater this semester (the story of how this happened is interesting, but is irrelevant here) and students have been poring over Oedipus the King and asking why this amazing play isn’t performed more regularly alongside plays like Hamilton and The Lion King.

I believe that there is hope for the humanities and perhaps for culture more generally, but it will be found in unusual places.

I’ve made a similar claim in this article — where I look outside of college for a rebirth of the humanities. It would be great if it happened in classrooms, too, but I fear that they are now the epicenter of the zombie wars.

Alas, I fear the number of zombie students is still growing — and at an accelerated pace.

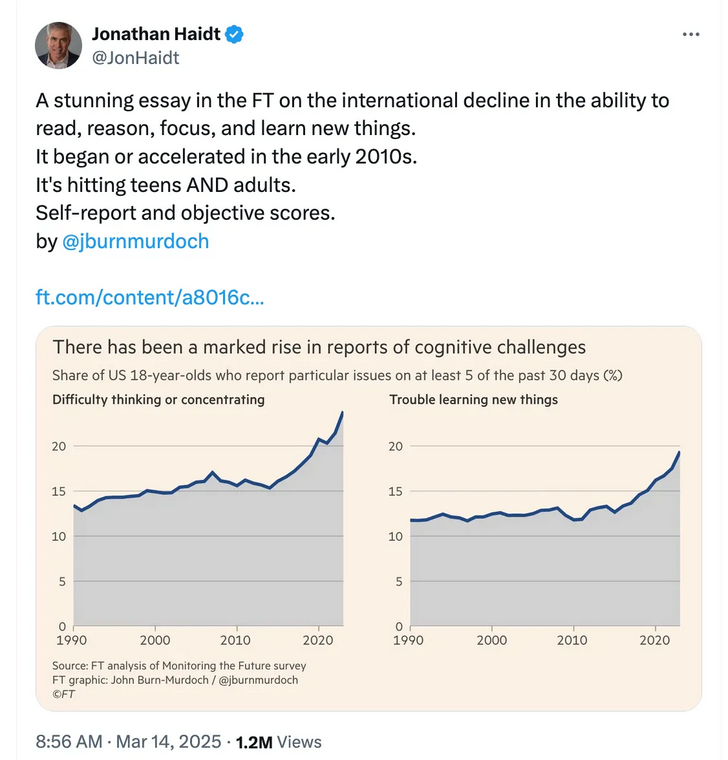

Jonathan Haidt, who has taken the lead in exposing this crisis — and thus gets attacked fiercely by zombie apologists — shares horrifying trendlines from Monitoring the Future.

This group at the University of Michigan has studied student behavior since 1975. But what’s happening now is unprecedented.

Students are literally finding it too hard to think. So they can’t learn new things.

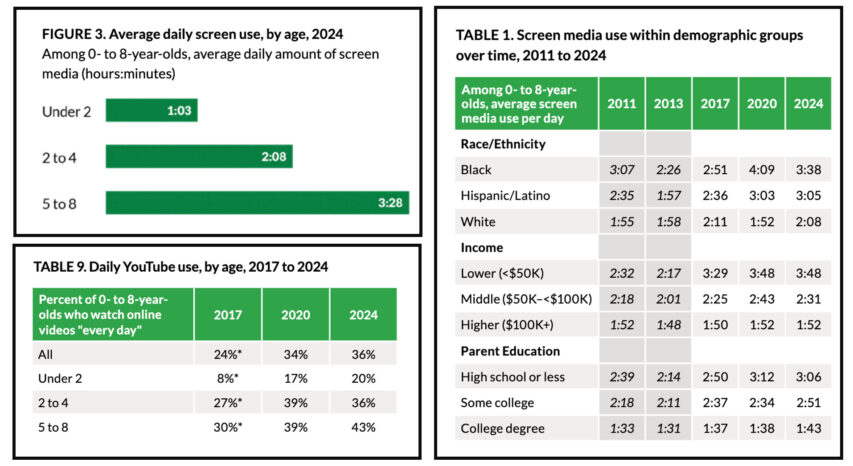

Below are more ugly numbers from another in-depth study — which looks at how children spend their day. It reveals that children under the age of two are already spending more than an hour per day on screens.

YouTube usage for this group has more than doubled in just four years.

Poor and marginalized communities are hurt the most. As your income drops, your children’s screen time more than doubles.

Source: 2025 study by Common Sense

In other words, these children are getting turned into screen addicts long before they enter the school system.

This is why teachers are speaking out. They see the fallout every day in their classrooms.

March 22, 2025

“Humiliate yourself before us,” I was being told, “And we still won’t hire you, lol”

The accelerating downfall of the academic-political complex is the subject of John Carter’s most recent post at Postcards from Barsoom:

University College, University of Toronto, 31 July, 2008.

Photo by “SurlyDuff” via Wikimedia Commons.

Look. In any given case, for any given scientist working inside the university system, there are exactly two possibilities.

One: they embraced all of this with cheerful, delirious, evangelical enthusiasm. Religious devotees of the unholy cause of converting every institution to the One False Faith of Decay, Envy, and Incompetence, they have spent the last decade or more enforcing campus speech codes, demanding inclusive changes to hiring policies, watering down curricular requirements to improve retention of underrepresented (because underperforming) equity-seeking demographics, forcing their research collaborations to adopt codes of conduct, and mobbing any of their colleagues who voiced the mildest protest against any of this intellectual and organizational vandalism.

Two: they had reservations, but went along with it all anyhow because what were they to do? They needed jobs; they needed funding; and anyhow they didn’t go into STEM to fight culture wars. As the article says, “They’d prefer to just get back to the science,” and the easiest way to get back to the science was to just go along with whatever the crazies were demanding. Even if the crazies were demanding that they abandon any pretense of doing actual science.

The first group are enemies.

The second are cowards.

Both deserve everything they get.

And I am going to enjoy every moment of them getting it.

Look, I am going to vent here a bit, okay? Because the mewling in this article succeeded in getting under my skin.

Not long ago I was considered a promising early career scientist, with an excellent publication record for my field, a decent enough teaching record, and all the rest of it. After several years as a semi-nomadic postdoc – which had followed several years as a semi-nomadic graduate student – it was time to start looking for faculty positions. My bad luck: Fentanyl Floyd couldn’t breathe, and the networked hive consciousness of eggless harpies infesting the institutions was driven into paroxysms of preening performative para-empathy.

What this meant was two things. First, more or less every single university started demanding ‘diversity statements’ be included in faculty application packages, alongside the standard research statements, teaching statements, curriculum vitae, and publication list. The purpose of the diversity statement was to enable the zampolit in HR and the faculty hiring committee to evaluate the candidate’s level of understanding of critical race theory, gender theory, intersectionality, and all the rest of the cultural Marxist anti-knowledge; to identify candidates who had already made contributions to advancing diversity; and to identify candidates who had well-thought-out ten-point plans to help advance the department’s new core principle and overriding purpose, that being: diversity.

The second thing it meant was that hiring policies now implicitly (in the United States) and explicitly (in Canada) mandated diversity as an overriding concern in hiring. As everyone knows, this means that if you’re a heterosexual cisgendered fucking white male, you are not getting hired.

In other words, I was now expected to write paeans praising the very ideology that had erected itself as an essentially impermeable barrier to my own employment, pledging to uphold this ideology myself and enforce it against others who look like me. “Humiliate yourself before us,” I was being told, “And we still won’t hire you, lol.”

Having some modicum of self-respect, I refused to go along with this. This meant that I simply could not apply for something like 90% of the available positions. And when I did apply to positions that didn’t require a diversity statement, and successfully got an interview, guess what? One of the first questions out of the mouth of one of the hiring committee members would be “what will you do for diversity”, or “I see you didn’t mention diversity in your teaching statement …” See, even if it isn’t mandated by the administration, that doesn’t stop the imposter-syndrome-having activist ladyprofs from insinuating the diversity test on their own initiative. I once had a dean, a middle-aged Hispanic woman, tell me “women in science are very important to me” right at the beginning of the interview; I very nearly got that job, because everyone on the committee wanted me, but later – after they inexplicably ghosted – found out that she’d nixed it. They just didn’t hire anyone.

Right around the same time, of course, we were in the thick of the COVID-19 scamdemic. You remember, the one that was just the flu, bro, until it became the new Black Death that definitely did not come from a laboratory shut up you conspiracy theorist; which couldn’t be stopped by masks so don’t be silly until suddenly masks were the only thing that could save you; which led to us all being locked in our houses for a year because some idiot wrote a Medium article called “the dance of the hammer with your soft skull” or whatever which then went viral inside the hysterosphere; which motivated the accelerated development of a novel mRNA treatment that no one was going to get because you couldn’t trust the Evil Orange Man’s bad sloppy science until suddenly it was safe and effective and then overnight absolutely mandatory and anyone who refused to take it should be sent to a camp.

Yeah, remember that?

I guarantee you that every single credentialed scientist in that article was on board for all of it.

How do I know this?

Because they all were.

March 19, 2025

The Trumpocalypse – “The outlook for universities has become dire”

John Carter suggests that American higher education needs to find a new funding model that doesn’t depend on governments to shower their administrative organizations with unearned loot:

Shortly after taking power, the Trump administration announced a freeze on academic grants at the National Science Foundation and the National Institutes of Health. New grant proposal reviews were halted, locking up billions in research funding. Naturally, the courts pushed back, with progressive judges issuing injunctions demanding the funding be reinstated. Judicial activism has so far met with only mixed success: the NSF has resumed the flow of money to existing grants, but the NIH has continued to resist. While the grant review process has been restarted at the NSF, the pause created a huge backlog, resulting in considerable uncertainty for applicants.

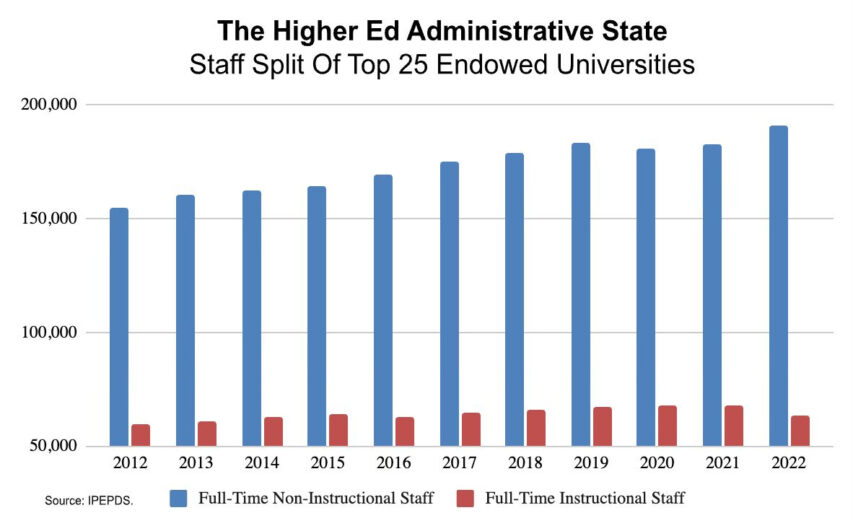

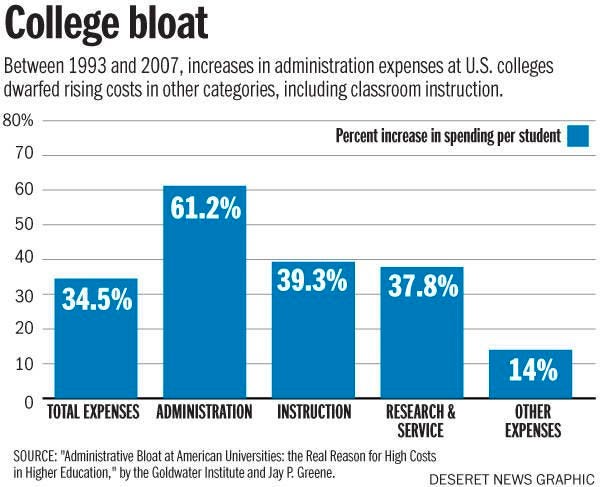

The NIH has instituted a 15% cap on indirect costs, commonly referred to as overheads. This has universities squealing. Overheads are meant to offset the budgetary strain research groups place on universities, covering the costs of the facilities they work in – maintenance, power and heating, paper for the departmental printer, that kind of thing. Universities have been sticking a blood funnel into this superficially reasonable line item for decades, gulping down additional surcharges up to 50% of the value of research grants, a bounty which largely goes towards inflating the salaries of the little armies of self-aggrandizing political commissars with titles like Associate Vice Assistant Deanlet of Advancing Excellence who infest the flesh of the modern campus like deer ticks swarming on the neck of a sick dog. Easily startled readers may wish to close their eyes and scroll past the next few images btw, but I really want to make this point here. When you look at this:

A 15% overhead cap, if applied across the board, has an effect on the parasitic university administration class similar to a diversity truck finding parking at a German Christmas market. Thoughts and prayers, everyone.

Meanwhile at the NSF, massive layoffs are ongoing, and there are apparently plans to slash the research budget by up to 50%. While specific overhead caps haven’t been announced at the NSF yet, there’s every reason to expect that these will be imposed as well, compounding the effect of budget cuts.

There is no attempt to hide the motivation behind the funding freeze, which is obvious to both the appalled and the cuts’ cheerleaders. Just as overheads serve as a blood meal for the administrative caste, scientific research funding has been getting brazenly appropriated by political activists at obscene scales. A recent Senate Commerce Committee report found that $2 billion in NSF funding had been diverted towards DEI promotion under the Biden administration. In reaction to this travesty, as this recent Nature article notes, there are apparently plans to outright cancel ongoing grants funding “research” into gay race communism. DEI programs, formerly ubiquitous across Federal agencies, have already been scrubbed both from departmental budgets and web pages. Indeed, killing those programs was one of the first actions of the MAGA administration.

The outlook for universities has become dire, and academics have been sweating bullets all over social media. Postdocs aren’t being hired, faculty offers are being rescinded, careers are on hold, research programs are in limbo. This comes at the same time that budgets are being hit by declining enrolment due to the demographic impact of an extended period of below-replacement fertility along with rapidly declining confidence in the value of university degrees, with young men in particular checking out at increasing rates as universities become tacitly understood as hostile feminine environments. They’re hitting a financial cliff at the same point that they’ve burned through the sympathy of the general public.

The entire sector is in grave danger.

Politically, going after research funding is astute. Academia is well known to be a Blue America power centre, used to indoctrinate the young with the antivalues of race Marxism, provide a halo of scholarly legitimacy to the left’s ideological pronouncements, and hand out comfortable sinecures to left-wing activists. The overwhelming left-wing bias of university faculties is proverbial. The Trump administration is using the budgetary crisis as a handy excuse to sic its attack DOGE on the unclean beast – starting with cutting off funding to the most ideological research projects, but apparently also intending to ruin the financial viability of the progressives’ academic spoils system as a whole.

Cutting the NSF budget by half may seem at first glance like punitive overkill, and no doubt the left is screeching that Orange Hitler is throwing a destructive tantrum like a vindictive child and thereby endangering American leadership in scientific research. After all, for all the attention that NASA diversity programs have received, the bulk of research funding still goes to legitimate scientific inquiry, surely? However, the problems in academic research go much deeper than its relentless production of partisan activist slop. Strip out all of the DEI funding, fire every equitied commissar and inclusioned diversity hire, and you’re still left with a sclerotic academic research landscape that has spent decades doing little of use – or interest – to anyone, and doing this great nothing at great expense.

March 10, 2025

QotD: The “Basic College Dude” of the 2020s

… though I have written probably 50,000 words on the Basic College Girl over the years, I have spent almost no time on her opposite number, hereby christened the Basic College Dude (BCD). Admittedly some part of this is structural: There just aren’t that many Persyns of Penis in college these days — nationwide, college enrollment is something like 65% female and climbing; I bet there are more than a few small colleges that, while technically coed, are almost exclusively female. Also, I taught mostly freshman-level History classes, and since I was one of the few dinosaurs who didn’t make attendance a part of the class grade, only the congenital rule-followers, i.e. chicks, showed up.

But mostly it’s just because none of them stick in my memory. The #1 characteristic of the Basic College Dude is that even if he’s there, he’s not there. He’s checked out — mentally, emotionally, spiritually (if that even means anything anymore). Unlike the girls, all of whom seem to be in 72 different clubs and organizations (and list them all on their email auto-signatures, such that by junior year, their honorifics are longer than my entire resume), the guys don’t seem to do much of anything. How do they while away their hours? I assume with social media, like everyone, and with video games and blackout drinking …

… the latter of which I have seen, a lot, and if you’ll permit a brief digression, if you really want to know how fucked our society is, go to a student bar on a Friday night. I myself was a bit of a party animal in college, and like everyone I went over the line a few times, but college kid drinking these days is almost Soviet — they’re aiming to get knee-walking, gutter-puking, total-blackout shitfaced, and they set about it as grimly and efficiently as possible. The girls, too, with the added bonus that they’re all on Ambien and Klonopin and every other happy pill you’ve ever heard of, which makes for some interesting, by which I mean terrifying, behavior …

[…]

But mostly it’s because college dudes have had their libidos beaten out of them. […]

Not only does the BCD not know how to do this, as Nikolai says, he apparently doesn’t actually want to. Constant stimulation by blinking screens, shit diets, and a lifetime of indoctrination have reversed the sexual dilithium crystals. Heartiste used to go on about this, and while I’m no biochemist, either, I think his theory is sound: There’s so much environmental estrogen floating around that men develop the emotional equivalent of gynecomastia, while women turn butch. Throw nth wave feminism into the mix, and you’ve got women acting like the crudest, most obnoxious male stereotypes (they call this “being strong and empowered”), while the men mope and sigh to their diaries.

The end result is that the BCD walks around like he’s shellshocked. He does the bare minimum, hoping to just grind it out without any further affronts to his basic human dignity … but so mal-educated is he, that the phrase “basic human dignity” doesn’t even register with him.

Severian, “The Basic College Dude”, Founding Questions, 2021-10-05.

March 6, 2025

QotD: Old Etonians

If you’d told somebody in the mid 2000s that David Cameron would become Prime Minister, they would have laughed in your face. If you then told them that a few years later Boris Johnson would be one of his successors, they’d consider you bonkers. This was Blairite Britain – gone were the days of Macmillan, Douglas-Home, and the coterie of other prime ministers educated at that same dusty institution – the hegemony of the Old Etonian was firmly over. Yet Cameron became the 19th Prime Minister educated there, and Boris the 20th, making five out of the fourteen prime ministers elected during Queen Elizabeth II’s reign Old Etonians.

When I first started there, the traditions seemed daunting, and while you had a week of grace period to find your feet, it took a lot longer for the novelty truly to wear off. Dressed in a tailsuit that makes you look like a penguin, and that even the production team of Downton Abbey would question, it’s a complete culture shock. Teachers become “beaks”; homework becomes “EW” (short for Extra Work), and the threat of “tardy book” (a punishment where you have to get up early to report to the School Office) is ever present. Your life is governed by a tutor, housemaster, and dame (a surrogate mother for your time there, and the most influential person in your day-to-day life), and outside of lessons (known as “schools”) you’re left to your own devices. It’s a sink or swim situation, and some can’t hack the overload of independence.

You’re constantly surrounded by things named after great men who have come before you – whether that be John Maynard Keynes (an economics society) or William Gladstone (a library) – and you can’t help but see yourself as heir to some great dynasty. Sitting in Upper School – a large schoolroom now mainly used for talks by visiting speakers – the walls are lined with marble busts of illustrious Old Etonians past, and it’s not hard to daydream about joining them. In our first ever assembly the head master put it best: “If you know that some interesting people have gone on to do some interesting things, whether it’s George Orwell or the Duke of Wellington, that does implicitly ask the question, why not you?” Success never seems far away, and often you’re regaled with tales about the time your beak caught a famous actor smoking, or how awful a pupil a noted academic once was. Neither does service, particularly when you pass the memorial boards for the First World War (as you do daily on the way to chapel): 1157 Old Etonians died, and 37 Old Etonians have won the Victoria Cross – 17 more than any other school.

In your final years, it’s fun to try and work out who’s going to be most successful after leaving, and – it never seems too outlandish – who among you could be a future prime minister. The people you consider are never confined to a particular group – it’s not “one of the debaters” or “one of the Rugby XV” – in fact, it’s often those who you can’t seem to categorize, or who traverse the groups, that are most magnetic. To get into Eton, you have to do well in the infamous “List Test”, composed of a computerized assessment and an interview with one of the beaks. For an eleven year old, it can be brutal (one boy left crying midway through our test), particularly as you don’t know what they want: they’re not looking for candidates that fit a particular box. Potential is valued more than current ability, and the greatest asset is that of being interesting. With only one in five getting an offer (odds stiffer than Oxbridge), and after five years of being expected to perform at the highest level, it’s unsurprising that students end up so successful.

Ivo Delingpole, “Boris and the Spirit of Eton”, Die Weltwoche, 2020-01-29.

March 4, 2025

February 28, 2025

Activists get the Toronto school board to agree to rename three schools

For people who utterly lose their minds when the Bad Orange Man changes the names of things, Toronto’s activists are still full-steam ahead to force the Toronto District School Board to rename three schools:

According to media reports, the TDSB has voted 11 to 7 to change the names of three schools: Dundas Junior Public School, Ryerson Community School and Sir John A. Macdonald Collegiate Institute. Evidently, a process will now start to choose a new name at each school. We shall see what they end up with, hopefully something better than “Sankofa”, which is the new name for Dundas Square, and has absolutely nothing to do with Canada.

There is nothing wrong, in principle, with changing a school name. Times change, and school names may need to change to reflect changing times. I attended a school which was named after a school board trustee who had served many decades prior to my time at that school. Would it make sense to change the name to that of a person who lived more recently and had a bigger impact on the community? Maybe schools should not be named after people at all, but rather should get their name from some more enduring aspect of the community, city, province, or country? These are fair questions.

But in these three TDSB cases, the reasons being given for the changes are part and parcel of an overall strategy by the activists running the school system to rewrite history according to their narrative of colonial oppression and the victimhood of Indigenous people and “people of colour”.

Two of the schools, Dundas Junior Public and Ryerson Community School, are named for men who have been accused of complicity in historical evils, specifically for deemed connections to the slave trade and to the Residential Schools set up for First Nations children, but the third really is historical revisionism on the grand scale: the one named for Canada’s first prime minister:

Sir John A. Macdonald, first Prime Minister of Canada. circa 1875.

Photo by George Lancefield from Library and Archives Canada, MIKAN ID number 3218718.

The activists want our illustrious first Prime Minister’s name off a school because they say he knowingly, willfully, and intentionally starved Indigenous people in the Prairies.

This starvation narrative was popularized by James Daschuk’s 2014 book Clearing the Plains, but this harsh indictment of Macdonald does not stand up to scrutiny, as his government actually spent more on famine relief for the Indigenous people in 1884 than on national defense.

Additionally, the Canadian approach to avoiding war through treaties doubtless saved tens of thousands of Indigenous (and no small number of settler) lives, as a look south of the border, where upwards of 60 000 died in such wars at the time, will attest.

Macdonald’s government created the Northwest Police Force (later renamed the RCMP) to protect the native (and settler) population from American raids and slaughter, and Indigenous leaders at the time expressed their gratitude for it. He provided vaccination against smallpox to thousands of Indigenous people too.

It should also be mentioned that the catch-all complaint about Macdonald being somehow responsible for forcing Indigenous kids to attend IRS schools is baseless. Such schools were built at the request of Indigenous leaders according to treaties with the Crown, and attendance was entirely voluntary during Macdonald’s lifetime. Indeed, school attendance only became mandatory, along with such a requirement for all Canadian children, in the early 20th century.

As mentioned at the opening of this article, 7 of 18 TDSB trustees voted “no” to the name changes. This is an encouraging sign that presenting a simplistic and misleading account of Canada’s past, and the people who shaped our history, in the service of affirming a putrid and deceitful narrative of oppressors vs. victims in Canada is starting to lose its credibility. People are starting to demand a more comprehensive, nuanced, and accurate account of what really happened, and why. Yes, mistakes were made, and there were some bad actors, but by and large our history is one to be exceedingly proud of. We can learn from our mistakes and be an even greater country in the future.

February 22, 2025

February 18, 2025

Canadian academic life now entails mandatory indoctrination about “settler colonialism”

In Quillette, Jon Kay talks about the pervasive indoctrination of Canadian university students in that invasive intellectual weed from Australia, “settler colonialism”:

Last month, I received a tip from a nursing student at University of Alberta who’d been required to take a course called Indigenous Health in Canada. It’s a “worthwhile subject”, my correspondent (correctly) noted, “but it won’t surprise you to learn [that the course consists of] four months of self-flagellation led by a white woman. One of our assignments, worth 30%, is a land acknowledgement, and instructions include to ‘commit to concrete actions to disrupt settler colonialism’ … This feels like a religious ritual to me.”

Canadian universities are now full of courses like this — which are supposed to teach students about Indigenous issues, but instead consist of little more than ideologically programmed call-and-response sessions. As I wrote on social media, this University of Alberta course offers a particularly appalling specimen of the genre, especially in regard to the instructor’s use of repetitive academic jargon, and the explicit blurring of boundaries between legitimate academic instruction and cultish struggle session.

Students are instructed, for instance, to “commit to concrete actions that disrupt the perpetuation of settler colonialism and articulate pathways that embrace decolonial futures”, and are asked to probe their consciences for actions that “perpetuate settler colonial futurity”. In the land-acknowledgement exercise, students pledge to engage in the act of “reclaiming history” through “nurturing … relationships within the living realities of Indigenous sovereignties”.

My source had no idea what any of this nonsense meant. It seems unlikely the professor knew either. And University of Alberta is not an outlier: For years now, whole legions of Canadian university students across the country have been required to robotically mumble similarly fatuous platitudes as a condition of graduation. It’s effectively become Canada’s national liturgy.

After my tweet went viral, I was contacted by a US-based publication called The College Fix, which covers post-secondary education from a (typically) conservative perspective. Like many observers from outside Canada, reporter Samantha Swenson couldn’t understand why Canadian students were being subjected to this kind of indoctrination session. “I hope you can answer,” she wrote: “Why do schools make mandatory classes like these?”

I sent Swenson a long 13-paragraph answer — which, at the time, felt like a waste of my time: I assumed the reporter would pluck a sentence or two from my lengthy ramble, and the rest of my words would fall down a memory hole.

So when her article did come out — under the title, Mandatory ‘Indigenous Health’ class for U. Alberta nursing students teaches ‘systemic racism’ — I was pleased to see that I’d been quoted at length. I especially appreciated the fact that Swenson had kept in my point that educating Canadians (especially students in the medical field) about Indigenous issues is important work; and that courses such as Indigenous Health in Canada would provide value if they actually served up useful facts and information, instead of self-parodic faculty-lounge gibberish about “decolonized futurities”.

February 13, 2025

Australia’s most toxic export (so far) – “Settler-colonial ideology”

Helen Dale explains how a lunatic fringe Australian notion has grown to be a major ideology in most of the Anglosphere and even as far afield as Israel:

Despite a great efflorescence of literature and especially film about the mafia, it’s a truism to say that it isn’t very good for Sicily. It also hasn’t been very good when exported to other countries, either, spreading violence, corruption, and lawlessness. Well, Australia is to settler-colonial ideology as Sicily is to mafia, and our poisonous gift to the world is, like Sicily’s mafia, one of those things about us that really isn’t for export.

“Settler-colonial ideology” seems a mouthful, but if I describe bits of it to you, you’ll recognise it. Heard Australia Day called “Invasion Day”? You’ve encountered settler-colonial ideology. Been called racist for voting NO in the 2023 Voice Referendum? You’ve encountered settler-colonial ideology. Noticed Aboriginal academics get hired with obviously inadequate qualifications? You’ve encountered settler-colonial ideology.

Many Australians — including me — first encountered settler-colonial ideology at university. Back then, it was a theoretical and foreign concern, and largely in languages other than English (mainly French and Arabic). I do remember one of the “post-colonial literatures” (note the s, the s is important) obsessives trying to convince me that Alan Paton’s Cry, the Beloved Country wasn’t a “legitimate book” because its author was white, but back then, this was still a niche view.

Like other Australians confronted with daft academic ideas, I blamed the US or France and ignored my own country’s contribution. Australians aren’t noted for their theoretical acumen, which made this easier. Critical race theory and affirmative action are all-American, while US academics have often executed hostile takeovers of French nonsense like postmodernism or queer theory early on in proceedings. It gets easy to blame America and France.

Easy, but unfair.

I realised how mistaken I’d been when, in October last year, I returned to Australia for a stint. While I was there, I read Adam Kirsch’s On Settler Colonialism: Ideology, Violence and Justice. I did so in part because October 2024 was the one-year anniversary of two important events. Both concerned what Kirsch calls “the ideology of settler-colonialism”.

Kirsch documents a process whereby the French- and Arabic-speaking theorists of post WWII decolonial conflicts — particularly Frantz Fanon — had their ideas grafted (very, very awkwardly) onto dissimilar Australian history and conditions by Australian intellectuals. These were then exported throughout the English-speaking world, likely through academic conferences. This explains how cringeworthy Australian nonsense like land acknowledgments managed to spread first to Canada and then the US in a reversal of the usual process whereby America sneezes and so gives its Hat a cold.

Fanon was a Marxist and a Freudian. His writing seethes with angry bloodthirstiness and pseudoscientific psychodrama, but he was responding to a vicious war of independence and incipient civil conflict. Kirsch notices a pattern where Australian scholars borrow bits of Fanon to give a sanguinary rhetorical garnish to their writing. “Fanon’s praise of violence is a large part of his appeal for Western intellectuals,” he notes. “Many of the sentiments expressed in The Wretched of the Earth, coming from a European or American writer, would immediately be identified as fascistic.”

Australia’s intervention changed the ideology, in some ways making it more destructive. Fanon is shorn of most of his Marxism, for example (can’t have that, won’t be able to recruit rich minorities to the boss class otherwise). The key Australian shift coalesces around an oft-quoted aphorism from historian Patrick Wolfe: “invasion is a structure, not an event”. That is, colonisation trauma is constantly renewed because “settler” is a heritable identity. “Every inhabitant of a settler colonial society who is not descended from the original indigenous population,” Kirsch points out, “is, and always will be, a settler”.

“Settler” here includes people transported to both America and Australia in chains — slaves and convicts. Once it became acceptable to construe one group of people conveyed against their will across thousands of miles of ocean in dreadful conditions as providentially lucky (and genocidal) settlers, it became possible to extend the reasoning to other, similar groups. After all, the only difference between a convict and a slave is the presence or absence of a criminal conviction.

Kirsch’s attempt to explain how Australia was analogised with Fanon’s Algeria and then how Israel was analogised with Wolfe’s Australia is heroic, in part because the casuistry he seeks to unpick is so convoluted. Filtering Fanon through Australian academia and its claim that “settler” is a heritable identity did have the effect of making Jewish Israelis look more like non-indigenous Australians or Americans, however, especially when attention was focussed on European Jewish immigrants to Israel.

February 11, 2025

QotD: Scientists, in their natural habitat

Now, do you accept the traditional image of scientists as sober, serious, disinterested seekers of truth? Or do you have more of a Biscuit Factory sort of view of them, where quite a lot of them are very flawed human beings, egotistical shits bent on climbing the greasy pole and treading on people to get to the top? Bullshitters and networkers and operators? Actually, I think the former types do exist, there are good, serious scientists out there (including some of my personal friends, and quite a few readers of this blog), but there are an awful lot of the latter types, especially at the top, and it’s rare to hear of a science department that isn’t full of bitter hatreds and jealousies and vendettas, where every Professor turns into an arsehole no matter how nice they seemed when they were a graduate student.

Hector Drummond, “Soap-opera science”, Hector Drummond, 2020-03-29.

February 3, 2025

QotD: Illiteracy then and illiteracy now

My old friend George Jonas, now forcibly confined within the Mount Pleasant Cemetery, observed of the times:

In the not too distant past, people who were illiterate could neither read nor write. These days they can, with disastrous results for the culture.

He quoted his own old friend Stephen Vizinczey:

No amount of learning can cure stupidity, and formal education positively fortifies it.

David Warren, “On paper logic”, Essays in Idleness, 2020-02-22.