Overly Sarcastic Productions

Published 30 Dec 2022

Journey to the West Kai, episode 7: Double Trouble


April 28, 2023

Legends Summarized: Journey To The West (Part X)

April 25, 2023

QotD: What is military history?

The popular conception of military history – indeed, the conception sometimes shared even by other historians – is that it is fundamentally a field about charting the course of armies, describing “great battles” and praising the “strategic genius” of this or that “great general”. One of the more obvious examples of this assumption – and the contempt it brings – comes out of the popular CrashCourse YouTube series. When asked by their audience to cover military history related to their coverage of the American Civil War, the response was this video listing battles and reflecting on the pointlessness of the exercise, as if a list of battles was all that military history was (the same series would later say that military historians don’t talk about food, a truly baffling statement given the importance of logistics studies to the field; certainly in my own subfield, military historians tend to talk about food more than any other kind of historian except for dedicated food historians).

The term for works of history in this narrow mold – all battles, campaigns and generals – is “drums and trumpets” history, a term generally used derisively. The study of battles and campaigns emerged initially as a form of training for literate aristocrats preparing to be officers and generals; it is little surprise that they focused on aristocratic leadership as the primary cause for success or failure. Consequently, the old “drums and trumpets” histories also had a tendency to glory in war and to glorify commanders for their “genius” although this was by no means universal and works of history on conflict as far back as Thucydides and Herodotus (which is to say, as far back as there have been any) have reflected on the destructiveness and tragedy of war. But military history, like any field, matured over time; I should note that it is hardly the only field of history to have less respectable roots in its quite recent past. Nevertheless, as the field matured and moved beyond military aristocrats working to emulate older, more successful military aristocrats into a field of scholarly inquiry (still often motivated by the very real concern that officers and political leaders be prepared to lead in the event of conflict) the field has become far more sophisticated and its gaze has broadened to include not merely non-aristocratic soldiers, but non-soldiers more generally.

Instead of the “great generals” orientation of “drums and trumpets”, the field has moved in the direction of three major analytical lenses, laid out quite ably by Jeremy Black in “Military Organisations and Military Change in Historical Perspective” (JMH, 1998). He sets out the three basic lenses as technological, social and organizational, which speak both to the questions being asked of the historical evidence and to the answers that are likely to be provided. I should note that these lenses are mostly (though not entirely) about academic military history; much of the amateur work that is done is still very much “drums and trumpets” (as is the occasional deeply frustrating book from some older historians we need not discuss here), although that is of course not to say that there isn’t good military history being written by amateurs or that all good military history narrowly follows these schools. This is a classification system, not a straitjacket, and I am giving it here because it is a useful way to present the complexity and sophistication of the field as it is, rather than how it is imagined by those who do not engage with it.

[…]

The technological approach is perhaps the least in fashion these days, but Geoffrey Parker’s The Military Revolution (2nd ed., 1996) provides an almost pure example of the lens. This approach tends to see changing technology – not merely military technologies, but often also civilian technologies – as the main motivator of military change (and also success or failure for states caught in conflict against a technological gradient). Consequently, historians with this focus are often asking questions about how technologies developed, why they developed in certain places, and what their impacts were. Another good example of the field, for instance, is the debate about the impact of rifled muskets in the American Civil War. While there has been a real drift away from seeing technologies themselves as decisive on their own (and thus a drift away from mostly “pure” technological military history) in recent decades, this sort of history is very often paired with the others, looking at the ways that social structures, organizational structures and technologies interact.

Perhaps the most popular lens for military historians these days is the social one, which used to go by the “new military history” (decades ago – it was the standard form even back in the 1990s) but by this point comprises probably the bulk of academic work on military history. In its narrow sense, the social perspective of military history seeks to understand the army (or navy or other service branch) as an extension of the society that created it. We have, you may note, done a bit of that here. Rather than understanding the army as a pure instrument of a general’s “genius” it imagines it as a socially embedded institution – which is fancy historian speech for an institution that, because it crops up out of a society, cannot help but share that society’s structures, values and assumptions.

The broader version of this lens often now goes under the moniker “war and society”. While the narrow version of social military history might be very focused on how the structure of a society influences the performance of the militaries that created it, the “war and society” lens turns that focus into a two-way street, looking at both how societies shape armies, but also how armies shape societies. This is both the lens where you will find inspection of the impacts of conflict on the civilian population (for instance, the study of trauma among survivors of conflict or genocide, something we got just a bit with our brief touch on child soldiers) and also the way that military institutions shape civilian life at peace. This is the super-category for discussing, for instance, how conflict plays a role in state formation, or how highly militarized societies (like Rome, for instance) are reshaped by the fact of processing entire generations through their military. The “war and society” lens is almost infinitely broad (something occasionally complained about), but that broadness can be very useful to chart the ways that conflict’s impacts ripple out through a society.

Finally, the youngest of Black’s categories is organizational military history. If social military history (especially of the war and society kind) understands a military as deeply embedded in a broader society, organizational military history generally seeks to interrogate that military as a society to itself, with its own hierarchy, organizational structures and values. Often this is framed in terms of discussions of “organizational culture” (sometimes in the military context rendered as “strategic culture”) or “doctrine” as ways of getting at the patterns of thought and human interaction which typify and shape a given military. Isabel Hull’s Absolute Destruction: Military Culture and the Practices of War in Imperial Germany (2006) is a good example of this kind of military history.

Of course these three lenses are by no means mutually exclusive. These days they are very often used in conjunction with each other (last week’s recommendation, Parshall and Tully’s Shattered Sword (2007) is actually an excellent example of these three approaches being wielded together, as the argument finds technological explanations – at certain points, the options available to commanders in the battle were simply constrained by their available technology and equipment – and social explanations – certain cultural patterns particular to 1940s Japan made, for instance, communication of important information difficult – and organizational explanations – most notably flawed doctrine – to explain the battle).

Inside of these lenses, you will see historians using all of the tools and methodological frameworks common in history: you’ll see microhistories (for instance, someone tracing the experience of a single small unit through a larger conflict) or macrohistories (e.g. Azar Gat, War in Human Civilization (2008)), gender history (especially since what a society views as a “good soldier” is often deeply wrapped up in how it views gender), intellectual history, environmental history (Chase, Firearms (2010) does a fair bit of this from the environment’s-effect-on-warfare direction), economic history (uh … almost everything I do?) and so on.

In short, these days the field of military history, as practiced by academic military historians, contains just as much sophistication in approach as history more broadly. And it benefits by also being adjacent to or in conversation with entire other fields: military historians will tend (depending on the period they work in) to interact a lot with anthropologists, archaeologists, and political scientists. We also tend to interact a lot with what we might term the “military science” literature of strategic thinking, leadership and policy-making, often in the form of critical observers (there is often, for instance, a bit of predictable tension between political scientists and historians, especially military historians, as the former want to make large data-driven claims that can serve as the basis of policy and the latter raise objections to those claims; this is, I think, on the whole a beneficial interaction for everyone involved, even if I have obviously picked my side of it).

Bret Devereaux, “Collections: Why Military History?”, A Collection of Unmitigated Pedantry, 2020-11-13.

April 24, 2023

Even fighter pilots and gunners have love lives

In The Critic, John Sturgis reviews a new book by Luke Turner titled Men at War: Loving, Lusting, Fighting, Remembering 1939-1945, which considers the myths and reality of wartime relationships during the Second World War:

Spitfire pilot Ian Gleed shot down five enemy aircraft in just a week in May 1940 — the fastest time in which this had ever been done, making him an official “ace” who would be quickly promoted to Wing Commander.

Gleed had become a poster boy for “the few”, the hero pilots of the Battle of Britain to whom so many would owe so much. He lived up to the popular image with his talk of “a bloody good show” and shooting down “damned Huns”, after which he’d sink a few warm beers and spend some “wizard” free time recuperating with his girlfriend Pam, whom he “loved now more than ever”.

Gleed’s luck finally ran out over Tunisia in April 1943 when his plane was hit by a German fighter and crashed into dunes. Gleed was killed. He was just 26.

It would only emerge decades later that much of the popular image he had cultivated simply wasn’t true. Gleed was gay. Pam was an imaginary character he had invented as a cover to keep his double life as a sexually active homosexual firmly secret.

Gleed’s story is one of many similar vignettes in this alternative history of the Second World War. Its author Luke Turner’s previous book was a memoir about his own grappling with issues around his identity as a bisexual. Here he takes up the question of how this would have played out for him and other sexual non-conformists in 1939–45.

Turner grew up obsessed with the war — everything from Airfix kits and Dad’s Army to Stalingrad and the Berlin bunker — and here he examines what it was to live through that period as a sexually active person of whatever hue. It proves a particularly rich subject matter as sex seems to have been everywhere in war time: everyone was sleeping with everyone else, apparently. It’s a perennial truth that you or I might be hit by a bus tomorrow — but make that bus a bomb, and suddenly this seems to create a sense of urgency which frequently manifests itself sexually, lending daily life what Turner calls “an aphrodisiac quality”. As Quentin Crisp put it when describing the shenanigans in blacked-out, Blitz London: “As soon as the bombs started to fall, the city became like a paved double bed.”

Whilst war may see an explosion of sex of all kinds, the establishment was not always comfortable with this. A Home Office report on public behaviour in London during the Blitz noted, “In several districts cases of blatant immorality in shelters are reported; this upsets other occupants of the shelters.”

One contemporary account suggests, “In wartime a uniform, whether of the Army, Navy or Air Force, to the average girl ranks as a fetish.” This had consequences in the field: Dear John letters from home could be a real problem in this regard. As an Army report in 1942 put it, morale is often damaged by “the suspicion, very frequently justified, of fickleness on behalf of wives and girls”.

Amongst all this sex was, of course, sex with Americans. Turner cites George Formby who captured this mood in the song “Our Fanny’s Gone All Yankee”: “She don’t wait for the dark when she wants to have a lark/In a bus or train she does her hanky panky.”

Then in a dark reversal of this two-nations-colliding-sexually motif, we get the horror of the Red Army’s organised rape of as many as 1.4 million German women during the Russian advance on Berlin, whose residents would later refer to the city’s grandiose monument to Soviet war dead as “the tomb of the unknown rapist”.

April 23, 2023

There’s a spectre haunting your pantry – the spectre of “Ultra-Processed Food”

Christopher Snowden responds to some of the claims in Chris van Tulleken’s book Ultra-Processed People: Why Do We All Eat Stuff That Isn’t Food … And Why Can’t We Stop?:

Ultra-processed food (UPF) is the latest bogeyman in diet quackery. The concept was devised a few years ago by the Brazilian academic Carlos Monteiro who also happens to be in favour of draconian and wildly impractical regulation of the food supply. What are the chances?!

Laura Thomas has written some good stuff about UPF. The tldr version is that, aside from raw fruit and veg, the vast majority of what we eat is “processed”. That’s what cooking is all about. Ultra-processed food involves flavourings, sweeteners, emulsifiers etc. that you wouldn’t generally use at home, often combined with cooking processes such as hydrogenation and hydrolysation that are unavailable in an ordinary kitchen. In short, most packaged food sold in shops is UPF.

Does this mean a cake you bake at home (“processed”) is less fattening than a cake you buy from Waitrose (“ultra-processed”)? Probably not, so what is the point of the distinction? This is where the idea breaks down. All the additives used by the food industry are considered safe by regulators. Just because the layman doesn’t know what a certain emulsifier is doesn’t mean it’s bad for you. There is no scientific basis for classifying a vast range of products as unhealthy just because they are made in factories. Indeed, it is positively anti-scientific insofar as it represents an irrational fear of modernity while placing excessive faith in what is considered “natural”. There is also an obvious layer of snobbery to the whole thing.

Taken to an absurd but logical conclusion, you could view wholemeal bread as unhealthy so long as it is made in a factory. When I saw that CVT has a book coming out (of course he does) I was struck by the cover. Surely, I thought, he was not going to have a go at brown bread?

But that is exactly what he does.

During my month-long UPF diet, I began to notice this softness most starkly with bread — the majority of which is ultra-processed. (Real bread, from craft bakeries, makes up just 5 per cent of the market …

His definition of “real bread” is quite revealing, is it not?

For years, I’ve bought Hovis Multigrain Seed Sensations. Here are some of its numerous ingredients: salt, granulated sugar, preservative: E282 calcium propionate, emulsifier: E472e (mono- and diacetyltartaric acid esters of mono- and diglycerides of fatty acids), caramelised sugar, ascorbic acid.

Let’s leave aside the question of why he only recently noticed the softness of fake bread if he’s been eating it for years. Instead, let’s look at the ingredients. Like you, I am not familiar with them all, but a quick search shows that E282 calcium propionate is a “naturally occurring organic salt formed by a reaction between calcium hydroxide and propionic acid”. It is a preservative.

E472e is an emulsifier which interacts with the hydrophobic parts of gluten, helping its proteins unfold. It adds texture to the bread.

Ascorbic acid is better known as Vitamin C.

Caramelised sugar is just sugar that’s been heated up and is used sparingly in bread; Jamie Oliver puts more sugar in his homemade bread than Hovis does.

Hovis Multigrain Seed Sensations therefore qualifies as UPF but it is far from obvious why it should be regarded as unhealthy. According to CVT, the problem is that it is too easy to eat.

The various processes and treatment agents in my Hovis loaf mean I can eat a slice even more quickly, gram for gram, than I can put away a UPF burger. The bread disintegrates into a bolus of slime that’s easily manipulated down the throat.

Does it?? I’ve never tried this brand but it doesn’t ring true to me. It’s just bread. Either you toast it or you use it for sandwiches. Are there people out there stuffing slice after slice of bread down their throats because it’s so soft?

By contrast, a slice of Dusty Knuckle Potato Sourdough (£5.99) takes well over a minute to eat, and my jaw gets tired.

Far be it from me to tell anyone how to spend their money but, in my opinion, anyone who spends £6 on a loaf of bread is an idiot. Based on his description, the Dusty Knuckle Potato Sourdough is awful anyway. Is that the idea? Is the plan to make eating so jaw-achingly unenjoyable that we do it less? Is the real objection to UPF simply that it tastes nice?

From the Encyclopedia Britannica to Wikipedia

In the latest SHuSH newsletter, Ken Whyte recounts the decline and fall of the greatest of the print encyclopedias:

I remembered all this while reading Simon Garfield’s wonderful new book, All the Knowledge in the World: The Extraordinary History of the Encyclopedia. It’s an entertaining history of efforts to capture all that we know between covers, starting two thousand years ago with Pliny the Elder.

The star of Garfield’s show, naturally, is Encyclopedia Britannica, which dominated the field through the nineteenth and twentieth centuries. By the time of its fifteenth edition in 1989, the continuously revised Britannica was comprehensive, reliable, scholarly, and readable, with 43 million words and 25,000 illustrations on a half million topics published over 32,640 pages in thirty-two beautifully designed Morocco-leather-bound volumes. It was the greatest encyclopedia ever published and probably the greatest reference tool to that time. It was sold door-to-door in the US by a sales force of 5,000.

Just as the glorious fifteenth edition was going to press, Bill Gates tried to buy Encyclopedia Britannica. Not a set — the whole company. He didn’t want to go into the reference book business. He believed that the availability of a CD-ROM encyclopedia would encourage people to adopt Microsoft’s Windows operating system. The Britannica people told Gates to get stuffed. They were revolted by the thought of their masterpiece reduced to an inexpensive plastic bolt-on to a larger piece of software for gimmicky home computers.

Like the executives at Blockbuster, the executives at Britannica eventually recognized the threat of digital technology but couldn’t see their way to abandoning their old business model and their old production standards and the reliable profits that came with large sets of big books. CD-ROMs seemed to them like a child’s toy.

Even as more of life moved online and the company’s prospects for growth dwindled, the Britannica executives could still not get their heads around abandoning the past and favoring a digital marketplace. They figured that their time-honored strategy of guilting parents into buying a shelf of books in service of their kids’ education would survive the digital challenge, not recognizing that parents would soon be assuaging their guilt by buying personal computers for their kids.

By the time Britannica brought out an overly expensive and not-very-good CD-ROM version of its encyclopedia in 1994, Gates had launched Encarta based on the much inferior Funk & Wagnalls. It might not have been the equal of the printed Britannica, but with its ease of use and storage, its much lower price point, and its many photos and videos of the Apollo moon landing and spuming whales, Encarta made a splash. It was selling a million copies a year in its third year of production — a number that no previous encyclopedia had come close to matching.

As it turned out, Britannica’s last profitable year was 1990 when it sold 117,000 bound sets for $650 million and a profit of $40 million. With the launch of Encarta, its annual sales were reduced to 50,000 sets and it was laying off masses of employees.

Encarta’s own life was relatively short. It closed in 2009, at which point it was selling for a mere $22.95. The world now belonged to Wikipedia.

April 22, 2023

“I’d stock every preschool classroom with The Anarchist’s Cookbook if I could”

I’m also a libertarian, but I might not go quite as far as Freddie deBoer in the quote in the headline:

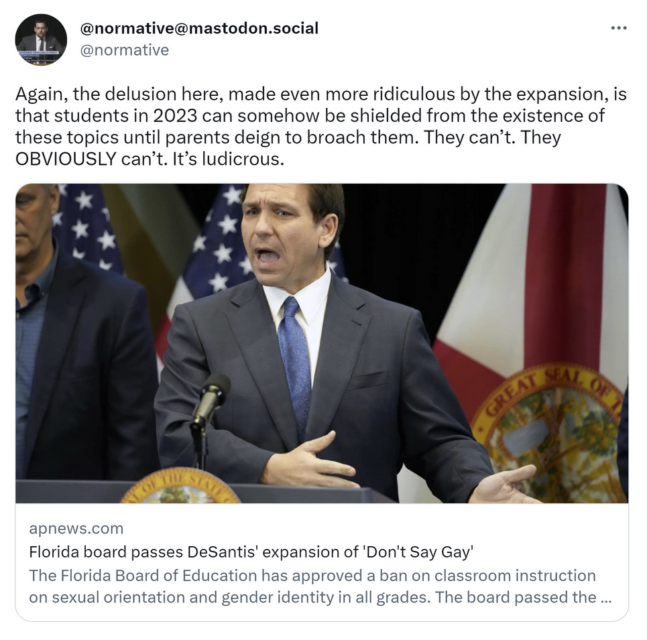

Julian Sanchez makes good sense here on recent bills in Florida designed to regulate and censor LGBTQ content in schools:

Yes, indeed. Kids will learn about LGBTQ issues sooner or later. It’s pointless to try and keep them from finding out about the existence of homosexuality, of gay love, of gay marriage, of trans people and gender nonconformity. They’re gonna find out. They have smartphones, usually much younger than they should. They’re curious and the world is always a click away. It’s foolish to try and prevent them from learning about this stuff. And, in fact, the more that you try to restrict what they learn, the more likely they are to explore this world in a way that openly defies your efforts. LGBTQ people, politics, art, and culture exist. You’re entitled to object to LGBTQ rights, in a free society, but you’re not entitled to (or able to) create a bubble in which others are kept hidden from knowledge of the existence of LGBTQ people. People love that I’m forever tweaking liberals about their attachment to various forms of unreality, to thinking that they can wish away facts of life that they’re uncomfortable with. But it’s the same deal here.

Look, I will acknowledge that some of the reporting on the “Don’t Say Gay” bill has distorted and exaggerated what the bill calls for, and I also think there’s a lot of motivated dismissal about the nature of some of the content that’s being debated. For example, some people have gone to the ramparts to defend access to the book This Book is Gay, which explicitly advertises itself as a guide to sex, despite the fact that the author herself says it’s not for children. (Pictures of the book that are routinely circulated are typically dismissed as conservative fabrications, but you just have to look at the book to know that isn’t true.) Probably that particular example is a matter of some groups being lazy when putting together reading lists, but of course there are always going to be debates and edge cases.

Would I ban that book? Of course not. Personally, I’m completely libertarian about this stuff; I’d stock every preschool classroom with The Anarchist’s Cookbook if I could. But there’s a difference between holding that position and believing it’s credible to pretend that there’s literally nothing to debate there. It’s pointless to pretend that books in a public school classroom are going to remain untouched by these disagreements. The views of parents will inevitably be expressed through the democratic apparatus that presides over those schools. Of course people are going to debate this stuff. Vociferously.

Still, the objections are ultimately misguided for the reasons Sanchez says. Plenty of kids in extremely repressive conservative environments dreamed of a future as an openly gay person in a liberal city, before the internet. I’ve always had qualms about the “born this way” framing — if being gay was a choice, would society have any legitimate right to refuse people from making it? — but the simple reality is that gay people and trans people etc have always transcended restrictive social and religious environments in their interior life, even if it was too dangerous for them to express it. If a kid is gay, they’re gonna figure that out. You don’t have to speed along the process, but trying to artificially impede their progress won’t work. That’s an “is” statement, not an “ought” statement.

April 21, 2023

Tiberius Caesar, the second Roman Emperor

Andrew Doyle considers how the reputation of Emperor Tiberius was shaped (and blackened) by later historians:

I am at the home of a psychopath. Here on the easternmost point of the island of Capri, the ancient ruins of the Villa Jovis still cling to the summit of the mountain. This was the former residence of the Emperor Tiberius, who retired here for the last decade of his life in order to indulge in what Milton described as “his horrid lusts”. He conducted wild orgies for his nymphs and catamites. He forced children to swim between his thighs, calling them his “little fish”. He raped two brothers and broke their legs when they complained. He threw countless individuals to their deaths from a precipice looming high over the sea.

That these stories are unlikely to be true is beside the point; Tiberius’s reputation has done wonders for the tourist trade here on Capri. The historians Suetonius and Tacitus started the rumours and, with the help of successive generations of sensationalists, established a tradition that was to persist for almost two millennia.

All of which serves as a reminder that reputations can be constructed and sustained on the flimsiest of foundations. Suetonius and Tacitus were writing almost a century after the emperor’s death, and many of their lurid stories were doubtless echoes of those circulated by his most spiteful enemies. Or perhaps it’s simply a matter of prurience. Who can deny that the more lascivious and outlandish acts of the Roman emperors are by far the most memorable? One thinks immediately of Caligula having sex with his siblings and appointing his horse as consul. Or Nero murdering his own mother, and taking a castrated slave for his bride, naming him after the wife he had kicked to death. For all their horror, who doesn’t feel cheated when such tales turn out to be false?

Our reputations are changelings: protean shades of other people’s imaginations. More often than not, they are birthed from a combination of uninformed prejudice and wishful thinking. And we should be in no doubt that in our online age, when lies are disseminated at lightning speed and casual defamation has become the activist’s principal strategy, reputations are harder to heal once tarnished.

I am tempted to feel pity for future historians. Quite how they will be expected to wade through endless reams of emails, texts, and other digital materials — an infinitude of conflicting narratives and individual “truths” — really is beyond me. At least when there is a dearth of primary sources it is possible to piggyback onto a firm conclusion. “Suetonius said” has a satisfactory and definitive air, but only because there are so few of his contemporary voices available to contradict him.

April 18, 2023

“Here it is then. This is The Hill.”

Simon Evans rightfully decides that fighting the bowdlerization of P.G. Wodehouse is the hill to die on:

PG Wodehouse has become the latest author to fall victim to the “sensitivity readers”. Passages have been purged and words have been altered for the new editions of his Jeeves and Wooster novels, including Thank You, Jeeves and Right Ho, Jeeves. According to Penguin, the publishers, some of the racial language and themes of these 1930s novels are “outdated” and “unacceptable”. This includes the use of the n-word.

When I saw the news, my tweet sort of fell out of me before I’d consciously drafted it: “Here it is then. This is The Hill.”

There is an interesting contrast here. We live in a time when every much-loved and out-of-copyright literary artefact that is brought to the screens is being stiffened, like an old Christmas pudding recipe that clearly needs more brandy, with swearing and novel scenes of sexual deviation never imagined in the original. Just think of the BBC’s recent modernising and coarsening of Charles Dickens, Agatha Christie et al, which have rendered them all but unwatchable for millions. So it is more than a little odd that Wodehouse, the mildest, most weightless comedy of the last century, should suddenly seem deserving of the nit comb.

Yes, it is true that Wodehouse uses the n-word. And no other word is now, or arguably ever has been, quite so radioactive, so sui generis in its capacity for offence. It is not that I want to defend this word. Rather, the hill on which I will die is the pristine perfection of Wodehouse’s prose, and its right to remain so. He is – and by an extraordinary degree of consensus, in a field that is almost maddeningly subjective – the Bach of comic literature. And I’m sorry, but you just don’t tinker with Bach.

Though a fan, Christopher Hitchens, in a review of a Wodehouse biography, wrote of the tiresome habit of certain people in referring to Wodehouse as “The Master”, so I will try to avoid that unctuous, fulsome tone. But one of the very few writers of my lifetime who approached him for touch (though sadly not in output) was Douglas Adams, who often referred to Wodehouse as just that: “He’s up in the stratosphere of what the human mind can do, above tragedy and strenuous thought, where you will find Bach, Mozart, Einstein, Feynman and Louis Armstrong, in the realms of pure, creative playfulness.”

The point is not that the presence of the odd unfortunate archaic usage, which might indeed jolt the casual reader into a brief awareness that they are reading something older than their grandfather, is necessarily a good thing. It is simply, who the hell do the sensitivity readers think they are, to decide what stays and what goes?

April 17, 2023

April 16, 2023

Do Foucault and Derrida deserve the blame for PoMo excesses?

In Spiked, Patrick West says that it’s a misunderstanding of Foucault and Derrida to blame them for the rise of wokeness:

Michel Foucault speaking at the Hospital das Clínicas of the State University of Guanabara in Brazil, 1974.

Public domain image from the Arquivo Nacional Collection via Wikimedia Commons.

It has become common to blame wokeness on its supposed philosophical parent: postmodernism. As the standard narrative goes, postmodernism is the ideology that entrenched itself in Anglophone universities in the 1980s and 1990s. It talked of relativism, of the absence of objective truth, of the spectre of a pervasive, invisible power, and it was generally anti-Western. A whole generation of professors, writers, journalists and a fair few activists have subsequently been raised on this diet of postmodern thinking. And the result is a cultural elite that is wedded to wokeness.

[…]

For these critics of woke, Foucault’s influence, in particular, is seemingly everywhere. According to [Douglas] Murray [in The War on The West], it’s through the “anti-colonial” philosophy popularised by the Foucault-inspired scholar, Edward Said, that Foucault and therefore postmodernism have filtered down into woke philosophy, which holds that Western society is uniquely racist and to blame for all of today’s ills. Equally, right-wing critics of wokeness will claim that the trans movement has sprung from the postmodern contention that sexuality and gender are entirely socially constructed and therefore plastic and malleable.

If Foucault is regarded as the father of wokeness then 19th-century philosopher Friedrich Nietzsche tends to be regarded as the grandfather. After all, Foucault was profoundly influenced by Nietzsche and even proudly declared himself to be “Nietzschean”. Nietzsche, like Foucault, also saw all human behaviour stemming from the desire for power. And he conceived of morality – good and evil, right and wrong – as the mere manifestation of the will to power. As he wrote of the “origin of knowledge”, in The Joyous Science (1883): “Gradually, the human brain became full of such judgements and convictions, and a ferment, a struggle, and lust for power developed in this tangle. Not only utility and delight but every kind of impulse took sides in this fight about ‘truths’.” One can see this Nietzschean sentiment at work in Foucault’s Discipline and Punish (1975): “Power produces knowledge … power and knowledge directly imply one another.”

So, according to this largely right-wing narrative, wokeness is the product of a 20th-century philosophical assault on truth, objectivity and the West. And it was inspired by Nietzsche and led by several “cultural Marxist” thinkers.

There are several problems with this rather neat story. The first error is to use the phrase “cultural Marxism” to talk of postmodernism or wokeness. This term doesn’t really make sense. Marx himself conceived of his work as a historical materialism. It was focussed on class and the means of production, not on culture. Yes, in the 1940s and 1950s, some Frankfurt School thinkers, who sometimes presented themselves as Marxist, did focus on culture rather than class. But as Joanna Williams writes in How Woke Won (2022), their thinking “represented less a continuation of Marxism and more a break with Marx”.

Moreover, postmodern thinkers were broadly opposed to Marxism. Many may have been signed-up Communists in their youth (the French Communist Party dominated left-wing politics at the time), but by the 1960s they had become highly critical of Marxist politics. They rejected the idea that history was progressing “dialectically” towards a communist future, or “telos”. And they were often hostile to the scientific objectivity and “Enlightenment” values so central to Marxism. Foucault wrote that history was not the story of progress; it was but a series of non-linear discontinuities and contingencies. And Jean-François Lyotard (1924-1998), in his highly-influential The Postmodern Condition (1979), announced and celebrated the end of “grand narratives”, and with it the end of the Marxist “grand narrative” of progress. Lyotard’s writings from the 1970s onwards were violently antithetical to Marxism, especially its claims to objective truth.

As for wokeness itself, it has nothing to do with Marxism. With their myopic focus on race and gender, woke activists are utterly blind to the material, class-structure of society. Today, bizarrely, it’s often conservatives who are more attuned to the plight of the working class than woke “radicals”. As Williams writes, “critics who insist that woke is simply Marxism in disguise are wide of the mark”.

April 15, 2023

QotD: When the pick-up artist became “coded right”

When did pickup artistry become criminal? Relying on online sex gurus for advice on persuading women into bed used to be seen as a fallback for introverted, physically unprepossessing “beta males”. And for this reason, in the 2000s, the discipline was promoted by the mainstream media as a way of instilling confidence in sexually-frustrated nerds. MTV’s The Pickup Artist shamelessly broadcast its tactics, with dating coaches encouraging young men to prey upon reluctant women, hoping to “neg” and “kino escalate” them into “number closes”. Contestants advanced through women of increasing difficulty (picking-up a stripper was regarded as “the ultimate challenge”) with the most-skilled “winning” the show.

Today, the global face of pickup artistry is Andrew Tate: sculpted former kickboxing champion, self-described “misogynist”, and, now, alleged human trafficker. Whatever results from the current allegations, his fall is a defining moment in the cultural history of the now inseparable worlds of the political manosphere and pickup artistry, and provides an opportunity to reflect upon their entangled history.

Pickup artistry burst onto the scene in the 2000s, propelled by the success of Neil Strauss’s best-selling book The Game. More a page-turning potboiler cataloguing the mostly empty lives of pickup artists (PUAs) than a how-to guide (though Strauss wrote one of those too), the methods in the book had been developed through years of research shared on internet forums. The “seduction underground”, as the large online community of people doing this research was called, then became the subject of widespread media attention. Through pickup artistry, the aggressive, formulaic predation of women was normalised as esteem boosting, and men such as those described in Strauss’s The Game could be viewed in a positive light: they had transformed from zero to hero and taken what was rightfully theirs.

The emergence of PUAs generated a swift backlash. The feminist blogs of the mid-to-late 2000s internet, of which publications like Jezebel still survive as living fossils, rushed to pillory them. The attacks weren’t without justification, but the world of PUAs during this period, much like the similarly wild-and-woolly bodybuilding forums, had no obvious political dimension beyond some sort of generic libertarianism. It was only after these initial critiques that it began to be coded as Right-wing by those on the Left. Duly labelled, PUAs and other associated manosphere figures drifted in that direction. MTV’s dating coaches were not part of the political landscape, merely feckless goofballs and low-level conmen capable of entertaining the masses. But their successors would be overtly political actors.

Oliver Bateman, “Why pick-up artists joined the Online Right”, UnHerd, 2023-01-08.

April 12, 2023

Institutional Review Boards … trying to balance harm vs health, allegedly

At Astral Codex Ten, Scott Alexander reviews From Oversight to Overkill by Simon N. Whitney, in light of his own experience with an Institutional Review Board’s demands:

Dr. Rob Knight studies how skin bacteria jump from person to person. In one 2009 study, meant to simulate human contact, he used a Q-tip to cotton swab first one subject’s mouth (or skin), then another’s, to see how many bacteria traveled over. On the consent forms, he said risks were near zero — it was the equivalent of kissing another person’s hand.

His IRB — ie Institutional Review Board, the committee charged with keeping experiments ethical — disagreed. They worried the study would give patients AIDS. Dr. Knight tried to explain that you can’t get AIDS from skin contact. The IRB refused to listen. Finally Dr. Knight found some kind of diversity coordinator person who offered to explain that claiming you can get AIDS from skin contact is offensive. The IRB backed down, and Dr. Knight completed his study successfully.

Just kidding! The IRB demanded that he give his patients consent forms warning that they could get smallpox. Dr. Knight tried to explain that smallpox had been extinct in the wild since the 1970s, the only remaining samples in US and Russian biosecurity labs. Here there was no diversity coordinator to swoop in and save him, although after months of delay and argument he did eventually get his study approved.

Most IRB experiences aren’t this bad, right? Mine was worse. When I worked in a psych ward, we used to use a short questionnaire to screen for bipolar disorder. I suspected the questionnaire didn’t work, and wanted to record how often the questionnaire’s opinion matched that of expert doctors. This didn’t require doing anything different — it just required keeping records of what we were already doing. “Of people who the questionnaire said had bipolar, 25%/50%/whatever later got full bipolar diagnoses” — that kind of thing. But because we were recording data, it qualified as a study; because it qualified as a study, we needed to go through the IRB. After about fifty hours of training, paperwork, and back and forth arguments — including one where the IRB demanded patients sign consent forms in pen (not pencil) but the psychiatric ward would only allow patients to have pencils (not pen) — what had originally been intended as a quick record-keeping had expanded into an additional part-time job for a team of ~4 doctors. We made a tiny bit of progress over a few months before the IRB decided to re-evaluate all projects including ours and told us to change twenty-seven things, including re-litigating the pen vs. pencil issue (they also told us that our project was unusually good; most got >27 demands). Our team of four doctors considered the hundreds of hours it would take to document compliance and agreed to give up. As far as I know that hospital is still using the same bipolar questionnaire. They still don’t know if it works.

Most IRB experiences can’t be that bad, right? Maybe not, but a lot of people have horror stories. A survey of how researchers feel about IRBs did include one person who said “I hope all those at OHRP [the bureaucracy in charge of IRBs] and the ethicists die of diseases that we could have made significant progress on if we had [the research materials IRBs are banning us from using]”.

Dr. Simon Whitney, author of From Oversight To Overkill, doesn’t wish death upon IRBs. He’s a former Stanford IRB member himself, with impeccable research-ethicist credentials — MD + JD, bioethics fellowship, served on the Stanford IRB for two years. He thought he was doing good work at Stanford; he did do good work. Still, his worldview gradually started to crack:

In 1999, I moved to Houston and joined the faculty at Baylor College of Medicine, where my new colleagues were scientists. I began going to medical conferences, where people in the hallways told stories about IRBs they considered arrogant that were abusing scientists who were powerless. As I listened, I knew the defenses the IRBs themselves would offer: Scientists cannot judge their own research objectively, and there is no better second opinion than a thoughtful committee of their peers. But these rationales began to feel flimsy as I gradually discovered how often IRB review hobbles low-risk research. I saw how IRBs inflate the hazards of research in bizarre ways, and how they insist on consent processes that appear designed to help the institution dodge liability or litigation. The committees’ admirable goals, in short, have become disconnected from their actual operations. A system that began as a noble defense of the vulnerable is now an ignoble defense of the powerful.

So Oversight is a mix of attacking and defending IRBs. It attacks them insofar as it admits they do a bad job; the stricter IRB system in place since the ’90s probably only prevents a single-digit number of deaths per decade, but causes tens of thousands more by preventing life-saving studies. It defends them insofar as it argues this isn’t the fault of the board members themselves. They’re caught up in a network of lawyers, regulators, cynical Congressmen, sensationalist reporters, and hospital administrators gone out of control. Oversight is Whitney’s attempt to demystify this network, explain how we got here, and plan our escape.

April 11, 2023

Canada’s colonial past

Peter Shawn Taylor talks to Nigel Biggar, author of the recent book Colonialism: A Moral Reckoning:

C2C Journal: Explain what you mean by a “moral reckoning” for colonialism – and how does that differ from the now-standard historians’ view that it was a shameful era characterized by exploitation, racism and violence?

Nigel Biggar: My first degree from Oxford is in history but professionally I am a theologian and ethicist. An ethicist is in the business of thinking about rights and wrongs and complicated moral issues. As I have previously written about the morality of war, I wanted to bring that ethical expertise to the very complicated historical phenomenon of empire.

And while my critics claim I am not an historian, they are not ethicists. My book is not a chronology. Each chapter deals with a different moral issue: motives, violence, racism, slavery, et cetera. Then I try to bring it to a conclusion with an overall view of the record of British imperialism, morally speaking. There are the evils of the British Empire, and there are its benefits as well.

[…]

C2C: One of your chapters takes a close look at Canada’s Indian Residential Schools. Take us through an ethicist’s view of a topic that has come to be considered this country’s greatest sin.

NB: The motivation for establishing residential schools was basically humanitarian. That is, they were meant to enable pupils to survive in a world that was changing radically. Notwithstanding any abuses and deficiencies that may have come later, we have to deal with the fact that native Canadians were asking for these schools in the beginning. They lobbied for them in treaties. And this was because they recognized that for their people to survive, they needed to adapt. They wanted their young people to learn English or French and how to farm. They recognized that the old ways could not be sustained any longer.

A lot of people today have a hard time coming to grips with the fact that the past was a very different place. For most people, the 19th century was pretty damn brutal. When we consider the conditions in residential schools today, we are horrified. But what is horrifying are the conditions in which most people of that time had to live. It is true mortality among native kids in these schools was generally higher and conditions were poorer. Sexual abuse was also a problem, but mostly by fellow pupils. I don’t want to sweep any of that under the table. Maybe the Canadian government should have spent more money on residential schools. But to make that case you need to identify what the government of the day should have spent less on. And I haven’t seen that argument made anywhere.

The Truth and Reconciliation Commission has many lurid tales about kids being seized from their parents. No doubt that, after education became compulsory in the 1920s, some children were distressed at being taken away. But this too has to be understood in light of the fact that the idea all children must have a certain level of education was gaining tremendous traction in Canada, Britain and throughout Europe at this time. So compulsory education for native children must be considered in that regard. And what might people say today if the Government of Canada had refused to educate Indigenous children?

Again, I don’t want to downplay the defects of residential schools. But we need to provide context in order to understand these things in proportion. It must also be considered significant that since the early 1990s, Canadian media have declined to give voice to many natives who want to offer positive expressions of residential schools, as J.R. Miller points out in his authoritative history of the residential school system, Shingwauk’s Vision. According to Miller, the verdict for the schools must be given in “muted and equivocal terms”. The wholesale damnation of residential schools is overwrought and unfair.

April 8, 2023

The underlying philosophy of J.R.R. Tolkien’s work

David Friedman happened upon an article he wrote 45 years ago on the works of J.R.R. Tolkien:

The success of J.R.R. Tolkien is a puzzle, for it is difficult to imagine a less contemporary writer. He was a Catholic, a conservative, and a scholar in a field, philology, that many of his readers had never heard of. The Lord of the Rings fitted no familiar category; its success virtually created the field of “adult fantasy”. Yet it sold millions of copies and there are tens, perhaps hundreds, of thousands of readers who find Middle Earth a more important part of their internal landscape than any other creation of human art, who know the pages of The Lord of the Rings the way some Christians know the Bible.

Humphrey Carpenter’s recent Tolkien: A Biography, published by Houghton Mifflin, is a careful study of Tolkien’s life, including such parts of his internal life as are accessible to the biographer. His admirers will find it well worth reading. We learn details, for instance, of Tolkien’s intense, even sensual love for language; by the time he entered Oxford, he knew not only French, German, Latin, and Greek, but Anglo-Saxon, Gothic and Old Norse. He began inventing languages for the sheer pleasure of it and when he found that a language requires a history and a people to speak it he began inventing them too. The language was Quenya, the people were the elves. And we learn, too, some of the sources of his intense pessimism, of his feeling that the struggle against evil is desperate and almost hopeless and all victories at best temporary.

Carpenter makes no attempt to explain his subject’s popularity but he provides a few clues, the most interesting of which is Tolkien’s statement of regret that the English had no mythology of their own and that at one time he had hoped to create one for them, a sort of English Kalevala. That attempt became The Silmarillion, which was finally published three years after the author’s death; its enormous sales confirm Tolkien’s continuing popularity. One of the offshoots of The Silmarillion was The Lord of the Rings.

What is the hunger that Tolkien satisfies? George Orwell described the loss of religious belief as the amputation of the soul and suggested that the operation, while necessary, had turned out to be more than a simple surgical job. That comes close to the point, yet the hunger is not precisely for religion, although it is for something religion can provide. It is the hunger for a moral universe, a universe where, whether or not God exists, whether or not good triumphs over evil, good and evil are categories that make sense, that mean something. To the fundamental moral question “why should I do (or not do) something”, two sorts of answers can be given. One answer is “the reason you feel you should do this thing is because your society has trained you (or your genes compel you) to feel that way”. But that answers the wrong question. I do not want to know why I feel that I should do something; I want to know why (and whether) I should do it. Without an answer to that second question all action is meaningless. The intellectual synthesis in which most of us have been reared — liberalism, humanism, whatever one may call it — answers only the first question. It may perhaps give the right answer but it is the wrong question.

The Lord Of The Rings is a work of art, not a philosophical treatise; it offers, not a moral argument, but a world in which good and evil have a place, a world whose pattern affirms the existence of answers to that second question, answers that readers, like the inhabitants of that world, understand and accept. It satisfies the hunger for a moral pattern so successfully that the created world seems to many more real, more right, than the world about them.

Does this mean, as Tolkien’s detractors have often said, that everything in his books is black and white? If so, then a great deal of literature, including all of Shakespeare, is black and white. Nobody in Hamlet doubts that poisoning your brother in order to steal his wife and throne is bad, not merely imprudent or antisocial. But the existence of black and white does not deny the existence of intermediate shades; gray can be created only if black and white exist to be mixed. Good and evil exist in Tolkien’s work but his characters are no more purely good or purely evil than are Shakespeare’s.

April 6, 2023

Japan is weird, example MCMLXIII

John Psmith reviews MITI and the Japanese Miracle by Chalmers Johnson:

I’ve been interested in East Asian economic planning bureaucracies ever since reading Joe Studwell’s How Asia Works (briefly glossed in my review of Flying Blind). But even among those elite organizations, Japan’s Ministry of International Trade and Industry (MITI) stands out. For starters, Japanese people watch soap operas about the lives of the bureaucrats, and they’re apparently really popular! Not just TV dramas; huge numbers of popular paperback novels are churned out about the men (almost entirely men) who decide what the optimal level of steel production for next year will be. As I understand it, these books are mostly not about economics, and not even about savage interoffice warfare and intraoffice politics, but rather focus on the bureaucrats themselves and their dashing conduct, quick wit, and passionate romances … How did this happen?

It all becomes clearer when you learn that when the Meiji period got rolling, Japan’s rulers had a problem: namely, a vast, unruly army of now-unemployed warrior aristocrats. Samurai demobilization was the hot political problem of the 1870s, and the solution was, well … in many cases it was to give the ex-samurai a sinecure as an economic planning bureaucrat. Since positions in the bureaucracy were often quasi-hereditary, what this means is that in some sense the samurai never really went away, they just hung up their swords — frequently literally hung them up on the walls of their offices — and started attacking the problem of optimal industrial allocation with all the focus and fury that they’d once unleashed on each other. According to Johnson, to this day the internal jargon of many Japanese government agencies is clearly and directly descended from the dialects and battle-codes of the samurai clans that seeded them.

This book is about one such organization, MITI, whose responsibilities originally were limited to wartime rationing and grew to encompass, depending who you ask, the entire functioning of the Japanese government. Because this is the buried lede and the true subject of this book: you thought you were here to read about development economics and a successful implementation of the ideas of Friedrich List, but you’re actually here to read about how the entire modern Japanese political system is a sham. This suggestion is less outrageous than it may sound at first blush. By this point most are familiar with the concept of “managed democracy,” wherein there are notionally competitive popular elections, culminating in the selection of a prime minister or president who’s notionally in charge, but in reality some other locus of power secretly runs things behind the scenes.

There are many flavors of managed democracy. The classic one is the “single-party democracy”, which arises when for whatever reason an electoral constituency becomes uncompetitive and returns the same party to power again and again. Traditional democratic theory holds that in this situation the party will split, or a new party will form which triangulates the electorate in just such a way that the elections are competitive again. But sometimes the dominant party is disciplined enough to prevent schisms and to crush potential rivals before they get started. The key insight is that there’s a natural tipping-point where anybody seeking political change will get a better return from working inside the party than from challenging it. This leads to an interesting situation where political competition remains, but moves up a level in abstraction. Now the only contests that matter are the ones between rival factions of party insiders, or powerful interest groups within the party. The system is still competitive, but it is no longer democratic. This story ought to be familiar to inhabitants of Russia, South Africa, or California.

The trouble with single-party democracies is that it’s pretty clear to everybody what’s going on. Yes, there are still elections happening, there may even be fair elections happening, and inevitably there are journalists who will point to those elections as evidence of the totally-democratic nature of the regime, but nobody is really fooled. The single-party state has a PR problem, and one solution to it is a more postmodern form of managed democracy, the “surface democracy”.

Surface democracies are wildly, raucously competitive. Two or more parties wage an all-out cinematic slugfest over hot-button issues with big, beautiful ratings. There may be a kaleidoscopic cast of quixotic minor parties with unusual obsessions filling the role of comic relief, usually only lasting for a season or two of the hit show Democracy. The spectacle is gripping, everybody is awed by how high the stakes are and agonizes over how to cast their precious vote. Meanwhile, in a bland gray building far away from the action, all of the real decisions are being made by some entirely separate organ of government that rolls onwards largely unaffected by the show.