[…] Those are important findings, but the study of capitalism in the age of AI is larger than labor-saving technologies inside a fixed institutional world. It’s the study of market processes that change the world in which labor takes place.

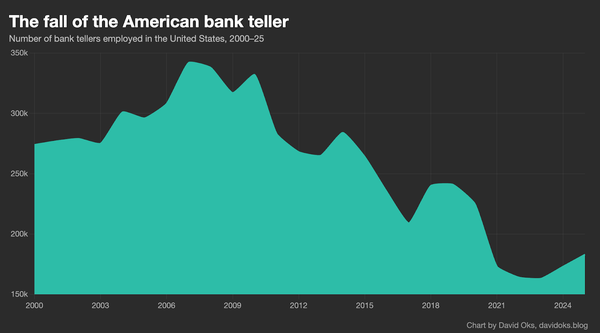

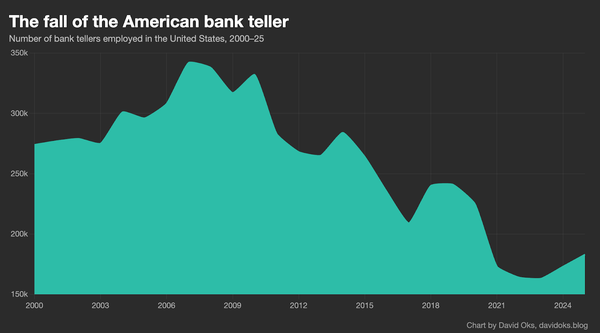

David Oks gets at this in a recent essay on bank tellers that has been making the rounds. For years, economists and pundits used the ATM to illustrate why technological progress does not necessarily wipe out jobs. In a conversation with Ross Douthat, Vice President J.D. Vance made exactly that point. The ATM automated a large share of what bank tellers used to do, and yet teller employment did not collapse. Why? Because the ATM lowered the cost of operating a branch. Banks opened more branches. Tellers shifted toward relationship management, customer cultivation, and a more boutique kind of service. The machine changed the worker’s role inside the same institution.

That story was true. Until it wasn’t.

As Oks puts it, the ATM did not kill the bank teller, but the iPhone did. Mobile banking changed the consumer interface of finance. Once that happened, the branch ceased to be the unquestioned center of retail banking. And once the branch lost that status, the teller lost the institutional setting that made him economically legible in the first place. The ATM fit capital into a labor-shaped hole. The smartphone changed the shape of the hole.

Vance looks at the ATM era and says: technology does not destroy jobs. Oks looks at the smartphone era and says: it does, just not the technology you expected. But if you stop there, you are still doing what economist Joseph Schumpeter called appraising the process *ex visu* of a given point of time. As Schumpeter wrote, capitalism is an organic process, and the “analysis of what happens in any particular part of it, say, in an individual concern or industry, may indeed clarify details of mechanism but is inconclusive beyond that”. You shouldn’t study one occupation within one industry and draw general conclusions about how technological change works.

The obvious questions you still have to answer are these: where did those former bank tellers go? What happened to the capital freed when branches closed? What new institutional forms (fintech, mobile payments, embedded finance, neobanks) emerged from the very same process that destroyed the branch model? How many jobs did those create, and in what configurations?

The lost teller jobs are seen. They show up in BLS data and make for a dramatic graph. The unseen is everything the mobile banking revolution enabled, not only within financial services, but across the entire economy. The person who no longer spends thirty minutes at a branch and instead uses that time to manage cash flow for a small business. The immigrant who sends remittances through an app instead of through Western Union. The fintech startup that employs forty engineers building fraud-detection systems. None of that appears in a chart titled “Bank Teller Employment”. The unseen is the world that emerges.

When economists say the ATM was “complementary” to bank tellers, what they usually mean is something quite narrow: the machine performed one set of tasks, such as dispensing cash, and freed the human to concentrate on others, such as relationship banking, cross-selling, and problem-solving.

But the ATM did more than substitute for one task while leaving others to the teller. It made the teller more productive inside the same institutional setting. This is the comparative advantage layer that Séb Krier touches on when he says that “as long as the combination of Human + AGI yields even a marginal gain over AGI alone, the human retains a comparative advantage”. The branch still organized the relationship between bank and customer, and the teller still inhabited a role within that world. The ATM simply changed the economics of that role, making the branch cheaper to operate and, paradoxically, more worth expanding.

But the branch is not just a building with unhappy carpet and suspicious lighting. It is an institution. It is a set of roles, expectations, scripts, constraints, and physical arrangements that organize how a bank and a customer relate to one another. It tells people where banking happens, how banking happens, and who performs which function in the ritual. The teller made sense within that world. So did the ATM. They were both playing the same game.

The iPhone did something different. Instead of automating tasks within the branch, it challenged the premise that banking requires a branch at all. It shifted the game to another board. Call this institutional substitution. When a technology is designed to operate within existing rules, the institution can often absorb it, adapt to it, metabolize it. The real threat comes from technologies that are not even playing the same game. The ATM was a move within the branch-banking game. Mobile banking was a move in the higher-order game, the game about which games get played.

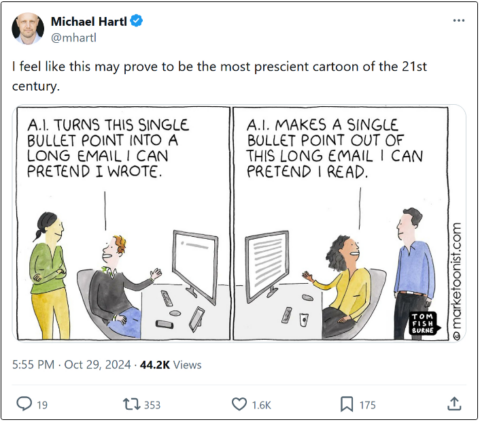

Most discussion of AI stops at the level of task substitution and complementarity. Those are necessary questions, but they are ATM questions.

Schumpeter understood that entrepreneurship is not simply about making institutions more efficient. It’s about unsettling the institutional forms through which those efficiencies make sense at all. If you ask whether AI can do some of the work of a lawyer, a teacher, a customer service representative, or a junior analyst, you are asking an interesting question. But you are still mostly asking an ATM question. You are asking how capital fits into an existing human role. The more interesting question is whether AI changes the institutional setting that made that role intelligible in the first place. Now we are talking about institutional substitution. It’s more dangerous territory, and more interesting.

And if the bank teller story is any guide, the technologies that bring about institutional substitution will not necessarily be the ones designed to automate an institution’s existing tasks. They may come from somewhere orthogonal, from applications and configurations that incumbents were not watching because they did not look like competition. The iPhone was not competing with the ATM. It was playing a different game, and it happened to make the old game less central.

So the real question is not whether AI will destroy jobs in the abstract. The real question is how AI will reorganize the architecture of production, consumption, and coordination. Not “AI does what lawyers do, but cheaper”, but rather “AI enables a new way of resolving disputes or structuring agreements that makes the current institutional form of legal services less necessary”.