Marginal Revolution University

Published on 1 Nov 2016

Finding a job can be kind of like dating. When a new graduate enters the labor market, she may have the opportunity to enter into a long-term relationship with several companies that aren’t really a good fit. Maybe the pay is too low or the future opportunities aren’t great. Before settling down with the right job, this person is still considered unemployed. Specifically, she’s experiencing frictional unemployment.

In the United States’ dynamic economy, this is a common state of short-term unemployment. Companies are often under high levels of competition and frequently evolve. They go out of business or have to lay off workers. Or maybe the worker quits to find a better position. In fact, millions of separations and new hires occur every month accompanied by short periods of unemployment.

Frictional unemployment helps allocate human capital (i.e. workers) to its highest valued use. Hopefully, workers are similarly finding themselves with more fulfilling jobs. Even when it’s caused by an event such as a firm going out of business, frictional unemployment is a normal part of a healthy, growing economy.

March 8, 2018

Frictional Unemployment

March 2, 2018

Defining the Unemployment Rate

Marginal Revolution University

Published on 18 Oct 2016

How is unemployment defined in the United States?

If someone has a job, they’re defined as “employed.” But does that mean that everyone without a job is unemployed? Not exactly.

A minor without a job isn’t unemployed. Someone who has been incarcerated also isn’t counted. A retiree, too, does not count toward the unemployment rate.

For the official statistics, you have to meet quite a few criteria to be considered unemployed in the U.S. For instance, if you’re without a job, but have actively looked for work in the past four weeks, you are considered unemployed.

In times of recession, when people are faced with long-term unemployment and lots of discouragement, the official rate might not count some of the people that you would otherwise consider unemployed.

This video will give you a clear picture of how the unemployment rate is defined and build a foundation for further understanding this important facet of labor markets.
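The criteria described above can be sketched as a small classifier. This is only an illustration under stated assumptions: the `Person` fields and the `labor_status` function are hypothetical names, the age cutoff of 16 is the usual BLS convention rather than anything stated in the description, and the real survey applies more criteria than these.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Person:
    # All fields are hypothetical, chosen only to mirror the criteria in the text.
    age: int
    has_job: bool
    incarcerated: bool = False
    # Weeks since the person last actively looked for work; None means never.
    weeks_since_search: Optional[int] = None


def labor_status(p: Person, min_age: int = 16, search_window_weeks: int = 4) -> str:
    """Classify one person under a simplified reading of the official rules."""
    # Minors and incarcerated people are outside the measured population.
    if p.age < min_age or p.incarcerated:
        return "not counted"
    if p.has_job:
        return "employed"
    # Jobless AND actively searched within the window: officially unemployed.
    if p.weeks_since_search is not None and p.weeks_since_search <= search_window_weeks:
        return "unemployed"
    # Jobless but not searching (retirees, people who gave up looking).
    return "not in labor force"


if __name__ == "__main__":
    print(labor_status(Person(age=30, has_job=False, weeks_since_search=2)))
    print(labor_status(Person(age=70, has_job=False)))
```

Note how a retiree and a worker who has given up looking land in the same bucket here, which is why the official rate can miss people you might otherwise consider unemployed.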

February 25, 2018

QotD: Trade deficits

No economic statistic is reported more dolefully these days than the country’s trade balance.

Ever on the alert for signs of impending economic disaster, the press routinely couples reports of record monthly trade deficits with warnings of experts and Government officials of the dangers of the deficit.

Just what is so dangerous about receiving more goods from foreigners than we give them back is never actually explained, but it is often suggested that it causes a loss of American jobs.

News reports sometimes even provide estimates of the number of jobs lost owing to every billion dollar increase in the trade deficit. Heaven only knows how these estimates are made, but presumably they are based on the assumption that imports deprive Americans of jobs they could have had producing domestic substitutes for the imports.

It almost seems tedious to do so, but it apparently still needs to be pointed out that buying less from foreigners means that they will buy less from us for the simple reason that they will have fewer dollars with which to purchase our products.

Thus, even if reducing imports increases employment in industries that compete with imports, it must also reduce employment in export industries.

Moreover, the notion that the trade deficit destroys domestic jobs is contradicted by the tendency of the deficit to increase during economic expansions and to decrease during contractions.

The demand for imports rises with income, so imports normally tend to rise faster than exports when a country expands more rapidly than its trading partners. The trade deficit is a symptom of rising employment, not the cause of rising unemployment.

That balance-of-trade figures are misunderstood and misused is not surprising, since their function has never been to inform or to enlighten. Their real purpose is to provide spurious statistical and pseudo-scientific support to groups seeking protectionist legislation. These groups try to cloak their appeals to protection with an invocation of the general interest in a favorable balance of trade.

David Glasner, “What’s So Bad about the Trade Deficit?”, Uneasy Money, 2016-06-02 (originally published in the New York Times in 1984).

February 24, 2018

Is Unemployment Undercounted?

Marginal Revolution University

Published on 25 Oct 2016

You may recall from our previous video that to be counted in the official unemployment rate in the U.S., you have to be an adult without a job and have actively looked for work within the past four weeks. That means that if someone has given up looking for a job, even if they want one, they are no longer counted under the official definition.

Does this mean that unemployment is undercounted? In other words, is the unemployment rate in fact higher than is reported?

Some have claimed this to be the case. However, unemployment is a tricky statistic. It’s important to consider that adults without jobs can fall into different categories. Many retirees, for example, are willing to leave retirement and take a job for the right price. If we are counting people that aren’t actively looking for employment, shouldn’t the retirees also be considered unemployed?

The simplest solution to this conundrum is to only count unemployed adults actively seeking work.

But what about discouraged workers, those who are unemployed and have not sought work in the past four weeks but have sought work in the past year? Should we consider them in our calculations?

There are actually six different unemployment rates measured by the U.S. Bureau of Labor Statistics. The rates differ in how stringent their criteria are. The official rate, called U3, falls somewhere in the middle. Another rate, called U4, does include discouraged workers in its calculation. All six rates follow a similar track over time.

So while the official unemployment rate may not be perfect, it does provide us with a good indicator of the state of the labor market and where it’s headed.
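To make the difference between U3 and U4 concrete, here is a minimal sketch of the two calculations. The function names and the sample head counts are made up for illustration. The U4 formula follows the BLS description of that measure: discouraged workers are added to both the unemployed and the labor force.

```python
def u3_rate(employed: float, unemployed: float) -> float:
    """Official rate: unemployed as a percentage of the labor force."""
    labor_force = employed + unemployed
    return 100.0 * unemployed / labor_force


def u4_rate(employed: float, unemployed: float, discouraged: float) -> float:
    """U4: discouraged workers count as unemployed and as part of the labor force."""
    labor_force = employed + unemployed
    return 100.0 * (unemployed + discouraged) / (labor_force + discouraged)


if __name__ == "__main__":
    # Hypothetical head counts (in thousands), not real data.
    employed, unemployed, discouraged = 950.0, 50.0, 10.0
    print(f"U3: {u3_rate(employed, unemployed):.2f}%")
    print(f"U4: {u4_rate(employed, unemployed, discouraged):.2f}%")
```

With any positive number of discouraged workers, U4 comes out higher than U3, but the two move together as long as discouraged workers remain a small share of the total.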

February 19, 2018

Graphing good news

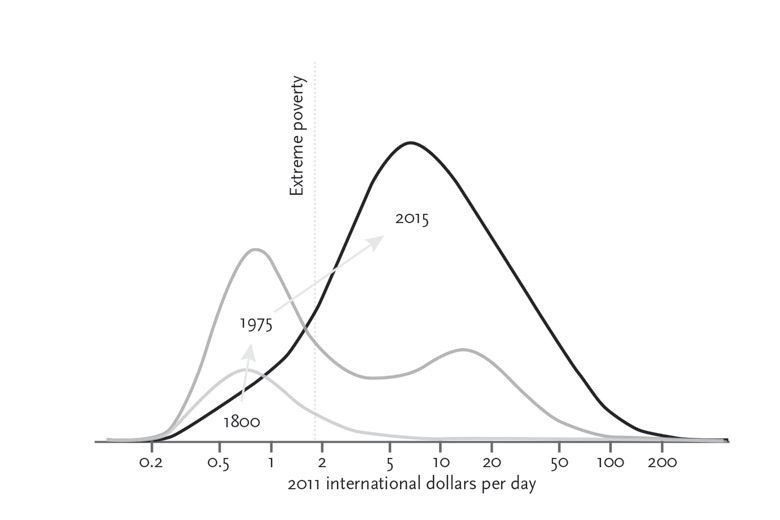

In the Times Literary Supplement, David Wootton reviews Enlightenment Now: A manifesto for science, reason, humanism and progress by Steven Pinker:

This book consists essentially of seventy-two graphs – and, despite that, it is gripping, provocative and (many will find) infuriating. The graphs all have time on the horizontal axis, and on the vertical axis something important that can be measured against it – life expectancy, for example, or suicide rates, or income. In some graphs the line, or lines (often the graphs compare trends in several countries) fall as they go from left to right; in others they rise. In every single one, the overall picture (with the inevitable blips and bounces) is of life getting better and better. Suicide rates fall, homicides fall, incomes rise, life expectancies rise, literacy rates rise and so on and on through seventy-two variations. Most of these graphs are not new: some simply update graphs which appeared in Pinker’s earlier The Better Angels of Our Nature (2011); others come from recognized purveyors of statistical information. The graphs that weren’t in Better Angels extend the argument of that book, that war and homicide are on the decline across the globe, to assert that life has been getting better and better in all sorts of other respects. The claim isn’t new: a shorter version is to be found in Johan Norberg’s Progress (2017). But the range and scope of the evidence adduced is new. The only major claim not supported by a graph (or indeed much evidence of any kind) is the assertion that all this progress has something to do with the Enlightenment.

Since the argument of the book is almost entirely contained in the graphs, those who want to attack the argument are going to attack the figures on which the graphs are based. Good luck to them: arguments based on statistics, like all interesting arguments, should be tested and tested again. Better Angels caused a vitriolic dispute between Pinker and Nassim Nicholas Taleb as to whether major wars are becoming less frequent. In Taleb’s view the question is a bit like asking whether major earthquakes are getting less frequent or not: they happen so rarely, and so randomly, that you would need records going back over a vast stretch of time to reach any meaningful conclusion; a graph showing falling death rates in wars over the past seventy years won’t do the job. But it certainly will tell you that lots of generalizations about modern war are wrong. Much, indeed most, of Pinker’s argument survived Taleb’s attack, which in any case was directed at only one graph among many.

A more radical line of criticism of Better Angels came from John Gray. How can one find a common standard of measurement for the suffering of a concentration camp victim, of a soldier who died in the trenches, and of someone killed in the firebombing of Dresden? To turn to economics, how can one find a common standard of measurement for books and washing machines, oranges and steak pies? Money, you might think, provides that standard, but what happens if many of the goods being measured – electric lighting, cars, televisions, computers – get cheaper and cheaper as time goes on, so that a rising standard of living is concealed by falling prices? For Gray, to place one’s faith in statistics, which claim to be measuring the unmeasurable, is no different from believing in conversations with angels or in the efficacy of Buddhist prayer wheels. Quantification is our religion.

February 17, 2018

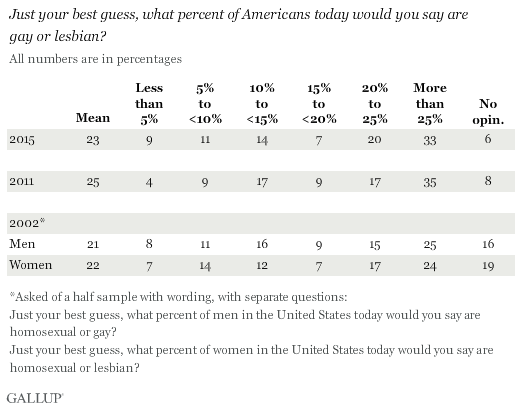

Only 3.8% of American adults identify themselves as LGBT

Most people guess a much higher percentage, and if the poll were restricted to the under-30s, the number would likely be at least twice as high. The poll is a few years old now, but it points out that most Americans over-estimate the number of gays and lesbians in the population:

The American public estimates on average that 23% of Americans are gay or lesbian, little changed from Americans’ 25% estimate in 2011, and only slightly higher than separate 2002 estimates of the gay and lesbian population. These estimates are many times higher than the 3.8% of the adult population who identified themselves as lesbian, gay, bisexual or transgender in Gallup Daily tracking in the first four months of this year.

The stability of these estimates over time contrasts with the major shifts in Americans’ attitudes about the morality and legality of gay and lesbian relations in the past two decades. Whereas 38% of Americans said gay and lesbian relations were morally acceptable in 2002, that number has risen to 63% today. And while 35% of Americans favored legalized same-sex marriage in 1999, 60% favor it today.

The U.S. Census Bureau documents the number of individuals living in same-sex households but has not historically identified individuals as gay or lesbian per se. Several other surveys, governmental and non-governmental, have over the years measured sexual orientation, but the largest such study by far has been the Gallup Daily tracking measure instituted in June 2012. In this ongoing study, respondents are asked “Do you, personally, identify as lesbian, gay, bisexual or transgender?” with 3.8% being the most recent result, obtained from more than 58,000 interviews conducted in the first four months of this year.

H/T to Gari Garion for the link.

February 15, 2018

QotD: Computer models

How can one be certain about outcomes in a complex system that we’re not really all that good at modeling? Anyone who’s familiar with the history of macroeconomic modeling in the 1960s and 1970s will be tempted to answer “Umm, we can’t.” Economists thought that the explosion of data and increasingly sophisticated theory was going to allow them to produce reasonably precise forecasts of what would happen in the economy. Enormous mental effort and not a few careers were invested in building out these models. And then the whole effort was basically abandoned, because the models failed to outperform mindless trend extrapolation — or as Kevin Hassett once put it, “a ruler and a pencil.”

Computers are better now, but the problem was not really the computers; it was that the variables were too many, and the underlying processes not understood nearly as well as economists had hoped. Economists can’t run experiments in which they change one variable at a time. Indeed, they don’t even know what all the variables are.

This meant that they were stuck guessing from observational data of a system that was constantly changing. They could make some pretty good guesses from that data, but when you built a model based on those guesses, it didn’t work. So economists tweaked the models, and they still didn’t work. More tweaking, more not working.

Eventually it became clear that there was no way to make them work given the current state of knowledge. In some sense the “data” being modeled was not pure economic data, but rather the opinions of the tweaking economists about what was going to happen in the future. It was more efficient just to ask them what they thought was going to happen. People still use models, of course, but only the unflappable true believers place great weight on their predictive ability.

Megan McArdle, “Global-Warming Alarmists, You’re Doing It Wrong”, Bloomberg View, 2016-06-01.

February 4, 2018

Sword Bayonets – German Casualties – Jerusalem Occupation I OUT OF THE TRENCHES

The Great War

Published on 3 Feb 2018

Check our Podcast: http://bit.ly/MedievalismWW1Podcast

Chair of Wisdom Time! This week we talk about possibly fabricated German casualty numbers, the unwieldy WW1 bayonets and the reaction to the occupation of Jerusalem.

January 19, 2018

What “killed” the most tanks in World War 2?

Military History Visualized

Published on 22 Dec 2017

This video discusses what killed the most tanks in World War 2. Was it anti-tank guns, mines, planes, hand-held anti-tank weapons, mechanical breakdowns, etc.? Also a short look at the problems of the term “kill”, e.g., mobility, firepower and catastrophic/complete kill.

Original Question by Christopher: “What destroyed the most tanks during WW2: infantry, planes, anti-tank guns, or other tanks (I’m not sure if tank destroyers needs its own category or not).”

January 8, 2018

January 1, 2018

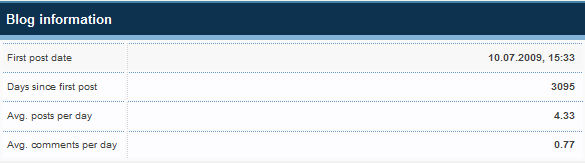

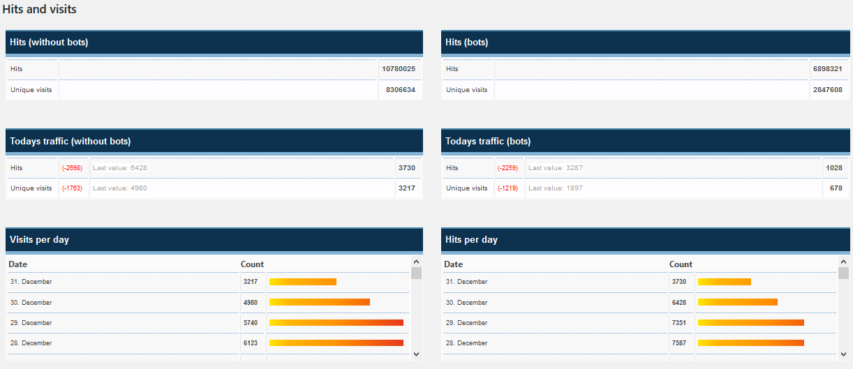

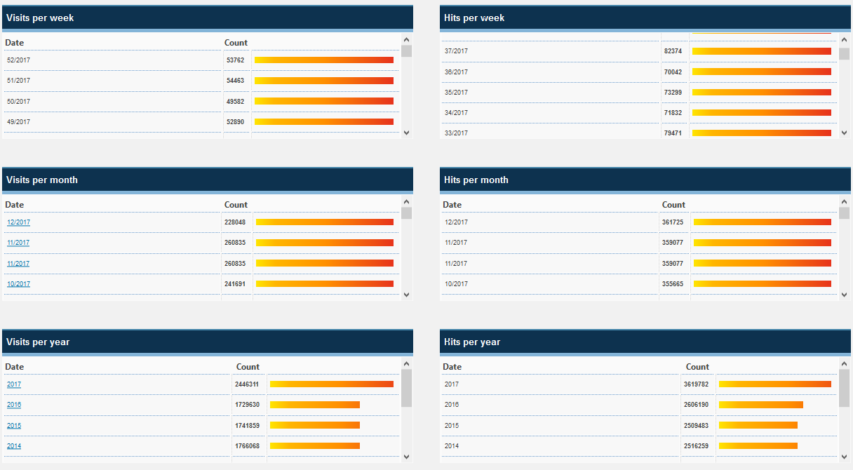

Blog traffic in 2017

The annual statistics update on Quotulatiousness from January 1st through December 31st, 2017. The numbers will be a couple of thousand short of the full year, as I did the screen captures mid-morning on the 31st.

I stopped paying much attention to the blog stats years ago, but the jump in traffic from 2016 to 2017 is amazing! Going from a stable ~1.7 million visits per year to nearly 2.5 million last year is quite unexpected. That’s getting up toward the region where it might seem to make sense to try to monetize the blog … but I tried doing the Amazon affiliate thing earlier this year, and it generated exactly $0.00 in revenue for Amazon, and I got my full share of that revenue (as Jayne put it: “Let’s see, let me do the math: 10 per cent of nothing is, … (mumble) carry the zero … (mumble) …”).

October 26, 2017

QotD: The nutrition science is settled

Nutrition science is, in general, a bottomless stew of politics, guesswork, bogus data and poor statistical practice. I would call it “unsavoury” if that weren’t such an inexcusable pun in this context. Anyone who has read the newspaper for 10 or 20 years, watching the endless tide of good-for-you/bad-for-you roll in and out, must know this instinctively.

Colby Cosh, “MSG: The harmless food enhancer everyone still dreads”, National Post, 2016-04-18.

September 5, 2017

The 100 Year Flood Is Not What You Think It Is (Maybe)

Published on 6 Mar 2016

Today on Practical Engineering we’re talking about hydrology, and I took a little walk through my neighborhood to show you some infrastructure you may have never noticed before.

Almost everyone agrees that flooding is bad. Most years it’s the number one natural disaster in the US by dollars of damage. So being able to characterize flood risks is a crucial job of civil engineers. Engineering hydrology has equal parts statistics and understanding how society treats risks. Water is incredibly important to us, and it shapes almost every facet of our lives, but it’s almost never in the right place at the right time. Sometimes there’s not enough, like in a drought or just an arid region, but we also need to be prepared for the times when there’s too much water, a flood. Rainfall and streamflow have tremendous variability and it’s the engineer’s job to characterize that so that we can make rational and intelligent decisions about how we develop the world around us. Thanks for watching!

FEMA Floodplain Maps: https://msc.fema.gov/portal

USGS Stream Gages: http://maps.waterdata.usgs.gov/mapper

“So, let’s consider the concept of a ‘500-year flood’”

Charlie Martin explains how it’s possible to have two “500-year floods” in less than 500 years:

There have been a lot of people suggesting that Harvey the Hurricane shows that “really and truly climate change is happening, see, in-your-face deniers!”

Of course, it’s possible, even though the actual evidence — including the 12-year drought in major hurricanes — is against it. But hurricanes are a perfect opportunity for stupid math tricks. Hurricanes also provide great opportunities to explain concepts that are unclear to people. So, let’s consider the concept of a “500-year flood.”

Most people hear this and think it means “one flood this size in 500 years.” The real definition is subtly different: saying “a 500-year flood” actually means “there is one chance in 500 of a flood this size happening in any year.”

It’s called a “500-year flood” because statistically, over a long enough time, we would expect to have roughly one such flood on average every 500 years. So, if we had 100,000 years of weather data (and things stayed the same otherwise, which is an unrealistic assumption) then we’d expect to have seen 100,000/500, or 200, 500-year floods [Ed. typo fixed] at that level.

The trouble is, we’ve only got about 100 years of good weather data for the Houston area.
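The arithmetic in the excerpt above can be checked with a few lines of Python. This is a sketch, not anything from Martin's article: the function names are mine, and it assumes each year is independent with the same 1-in-500 chance, which is the same "things stayed the same" simplification the excerpt flags as unrealistic.

```python
from math import comb


def expected_floods(return_period_years: int, span_years: int) -> float:
    """Long-run average number of such floods over the span."""
    return span_years / return_period_years


def prob_at_least(k: int, return_period_years: int, span_years: int) -> float:
    """Chance of k or more such floods in the span (binomial model).

    Assumes every year is independent and has the same probability
    of 1 / return_period_years, which is a simplification.
    """
    p = 1.0 / return_period_years
    # Sum the probabilities of seeing fewer than k floods, then take the complement.
    fewer = sum(
        comb(span_years, i) * p**i * (1.0 - p) ** (span_years - i)
        for i in range(k)
    )
    return 1.0 - fewer


if __name__ == "__main__":
    print(expected_floods(500, 100_000))          # the 100,000/500 figure from the text
    print(f"{prob_at_least(1, 500, 100):.1%}")    # at least one in 100 years of records
    print(f"{prob_at_least(2, 500, 500):.1%}")    # two or more within 500 years
```

Under those assumptions, the chance of at least one 500-year flood somewhere in 100 years of records is roughly 18%, and the chance of two or more in a 500-year stretch is roughly 26%, so a pair of "500-year floods" close together is much less remarkable than the name suggests.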

July 27, 2017

Words & Numbers: Is Income Inequality Real?

Published on 26 Jul 2017

Income inequality has been in the news more and more, and it doesn’t look good. It’s aggravating to see people making more money than you, and we’re told all the time that income inequality is on the rise. But is it? And even if it is, is it actually a bad thing? This week on Words and Numbers, Antony Davies and James R. Harrigan talk about how income inequality plays out in the real world.

Learn more: https://fee.org/articles/is-income-inequality-real/