Practical Engineering

Published Jan 2, 2024

How do they know when a train is on the way?

Despite the hazard they pose, trains have to coexist with our other forms of transportation. Next time you pull up to a crossbuck, take a moment to appreciate the sometimes simple, sometimes high-tech, but always quite reliable ways that grade crossings keep us safe.


April 1, 2024

How Railroad Crossings Work

March 31, 2024

March 29, 2024

March 28, 2024

Justin Trudeau never misses an opportunity to make a performative announcement, even if it harms Canadian interests

Canadian Prime Minister Justin Trudeau made an announcement last week that the Canadian government was cutting off military exports to Israel … except that Canada buys more military equipment from Israel than vice-versa:

Israeli Spike LR2 antitank missile launchers, similar to the ones delivered to the Canadian Army detachment in Latvia in February.

Wikimedia Commons.

When the Trudeau government publicly cut off military exports to Israel last week, the immediate reaction of the Israeli media was to point out that Canada’s military was far more dependent on Israeli tech than was ever the case in reverse.

“For some reason, (Foreign Minister Melanie Joly) forgot that in the last decade, the Canadian Defense Ministry purchased Israeli weapon systems worth more than a billion dollars,” read an analysis by the Jerusalem Post, which noted that Israeli military technology is “protecting Canadian pilots, fighters, and naval combatants around the world.”

According to Canada’s own records, meanwhile, the Israel Defense Forces were only ever purchasing a fraction of that amount from Canadian military manufacturers.

In 2022 — the last year for which data is publicly available — Canada exported $21,329,783.93 in “military goods” to Israel.

This didn’t even place Israel among the top 10 buyers of Canadian military goods for that year. Saudi Arabia, notably, ranked as 2022’s biggest non-U.S. buyer of Canadian military goods at $1.15 billion — more than 50 times the Israeli figure.

What’s more — despite Joly adopting activist claims that Canada was selling “arms” to Israel — the Canadian exports were almost entirely non-lethal.

“Global Affairs Canada can confirm that Canada has not received any requests, and therefore not issued any permits, for full weapon systems for major conventional arms or light weapons to Israel for over 30 years,” Global Affairs said in a February statement to the Qatari-owned news outlet Al Jazeera.

The department added, “the permits which have been granted since October 7, 2023, are for the export of non-lethal equipment.”

Even Project Ploughshares — an Ontario non-profit that has been among the loudest advocates for Canada to shut off Israeli exports — acknowledged in a December report that recent Canadian exports mostly consisted of parts for the F-35 fighter jet.

“According to industry representatives and Canadian officials, all F-35s produced include Canadian-made parts and components,” wrote the group.

March 25, 2024

Vernor Vinge, RIP

Glenn Reynolds remembers science fiction author Vernor Vinge, who died last week aged 79, reportedly from complications of Parkinson’s Disease:

Vernor Vinge has died, but even in his absence, the rest of us are living in his world. In particular, we’re living in a world that looks increasingly like the 2025 of his 2007 novel Rainbows End. For better or for worse.

[…]

Vinge is best known for coining the now-commonplace term “the singularity” to describe the epochal technological change that we’re in the middle of now. The thing about a singularity is that it’s not just a change in degree, but a change in kind. As he explained it, if you traveled back in time to explain modern technology to, say, Mark Twain – a technophile of the late 19th Century – he would have been able to basically understand it. He might have doubted some of what you told him, and he might have had trouble grasping the significance of some of it, but basically, he would have understood the outlines.

But a post-singularity world would be as incomprehensible to us as our modern world is to a flatworm. When you have artificial intelligence (and/or augmented human intelligence, which at some point may merge) of sufficient power, it’s not just smarter than contemporary humans. It’s smart to a degree, and in ways, that contemporary humans simply can’t get their minds around.

I said that we’re living in Vinge’s world even without him, and Rainbows End is the illustration. Rainbows End is set in 2025, a time when technology is developing increasingly fast, and the first glimmers of artificial intelligence are beginning to appear – some not so obviously.

Well, that’s where we are. The book opens with the spread of a new epidemic being first noticed not by officials but by hobbyists who aggregate and analyze publicly available data. We, of course, have just come off a pandemic in which hobbyists and amateurs have in many respects outperformed public health officialdom (which sadly turns out to have been a genuinely low bar to clear). Likewise, today we see people using networks of iPhones (with their built in accelerometers) to predict and observe earthquakes.

But the most troubling passage in Rainbows End is this one:

Every year, the civilized world grew and the reach of lawlessness and poverty shrank. Many people thought that the world was becoming a safer place … Nowadays Grand Terror technology was so cheap that cults and criminal gangs could acquire it. … In all innocence, the marvelous creativity of humankind continued to generate unintended consequences. There were a dozen research trends that could ultimately put world-killer weapons in the hands of anyone having a bad hair day.

Modern gene-editing techniques make it increasingly easy to create deadly pathogens, and that’s just one of the places where distributed technology is moving us toward this prediction.

But the big item in the book is the appearance of artificial intelligence, and how that appearance is not as obvious or clear as you might have thought it would be in 2005. That’s kind of where we are now. Large Language Models can certainly seem intelligent, and are increasingly good enough to pass a Turing Test with naïve readers, though those who have read a lot of Chat GPT’s output learn to spot it pretty well. (Expect that to change soon, though).

March 24, 2024

“[A] term was coined in Britain for playing music on your phone in public: ‘sodcasting’ – after ‘sod’ for ‘sodomite’, i.e. something that only a total ASSHOLE would do”

We’ve all been there at some point, especially in waiting rooms or on public transit: someone is either accidentally or deliberately subjecting everyone else in the vicinity to their personal soundtrack:

The modern world is noisy, I get that. I’m fine dealing with busy, urban places. But that surely makes those other places where you can escape the noise all the more vital in the constant struggle for sanity in this century. This is perhaps the one issue on which uber-leftist Elie Mystal and I agree. He found himself this week in a waiting room, full of peeps “listening to content on their devices with no headphones … LOUDLY. What the SHIT is this?? Is this normal?” His peroration: “I’M DEAD. I CAN FOR REAL FEEL THE VEINS IN MY HEAD THROBBING. THIS IS HOW I DIED.” #MeToo, my old lefty comrade.

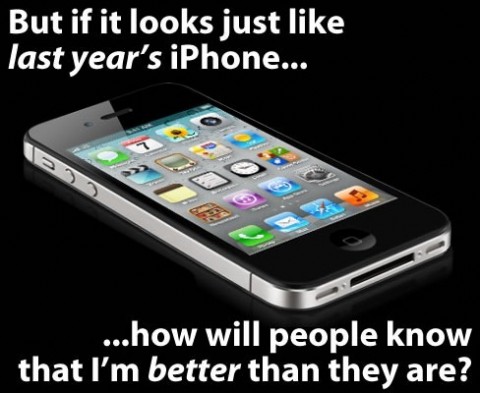

The degradation of public space in America isn’t entirely new, of course. As soon as transistor radios became portable, people would carry them around — for music or sports scores on construction sites or wherever. But the smartphone era — thanks once again, Steve Jobs, you were so awesome! — gave us an exponential jump in the number of people with highly portable sound-broadcasting machines in every public space imaginable. In other words: Hell on toast.

At the beginning of this phone surge, a term was even coined in Britain for playing music on your phone in public: “sodcasting” — after “sod” for “sodomite”, i.e. something that only a total ASSHOLE would do. Sodcasting was just an amuse bouche, though, compared with our current Bluetooth era, where amplifiers the size of golf-balls have dialed it all up to 11, and the age of full-spectrum public cacophony — including that thump-thump-thump of the bass that carries much farther than the sodcasting treble — has truly begun.

National parks? They are now often intermittent raves, where younger peeps play loud, amplified dance music as they walk their trails. On trains? There is now a single “quiet car” when once they all were, because we were a civilized culture. Walk down a street and you’ll catch a cyclist with a speaker attached to the handlebars, broadcasting at incredible volume for 50 feet ahead and behind him, obliterating every stranger’s conversation in his path.

On a bus? Expect the person sitting right behind you with her mouth four inches from your ears to have a very loud phone conversation, with the speaker turned up, and the phone held in front of her like a waiter holding a platter. The things she’ll tell you! Go to a beach and have your neighbors play volleyball — but with a loud speaker playing Kylie Minogue remixes to generate “atmosphere”.

When did we decide we didn’t give a fuck about anyone else in public anymore?

It’s not as if there isn’t an obvious win-win solution for both those who want to listen to music and those who don’t. Let me explain something that seems completely unimaginable to the Bluetoothers: If you can afford an iPhone, you can afford AirPods, or a headset, or the like. Put them in your ears, and you will hear music of far, far higher quality than from a distant Bluetooth, and no one else will be forced to hear anything at all! What’s not to like? It follows, it seems to me, that those who continue to refuse to do so, who insist that they are still going to make you listen as well, just because fuck-you they can, are waging a meretricious assault on their fellow humans.

What could be the defense? The Guardian — who else? — had a go at it:

the ghetto blaster reminds us that defiantly and ostentatiously broadcasting one’s music in public is part of a history of sonically contesting spaces and drawing the lines of community, especially through what gets coded as “noise” … it represents a liberation of music from the private sphere in the west, as well as an egalitarian spreading of music in the developing world.

The first point is not, it seems to me, exculpatory. It’s describing an act of territorial aggression through sound. The second point may have some truth to it — but it hardly explains the super-privileged NYC homos on the beach or the white twenty-something NGO employees in the park. But would I enjoy living in Santo Domingo where not an inch — so far as I could see and hear when I was there — was uncontaminated by overhead fluorescent lights and loud, bad club music? Nope.

Whenever I’ve asked the sonic sadists whether they actually understand that they are hurting others, they blink a few times, their mouths begin to form sentences, and then they look away. Or they’ll tell me to go fuck myself, or say I’m the only one who has complained, which is probably true because most people don’t want public confrontation, and have simply given up and moved on. Then there is often the implication that I’m the one being the asshole. On no occasion has anyone ever turned their music off after being asked to. Too damaging to their pride.

One reddit forum-member had this excuse: “It’s because earbuds hurt my ears and headphones don’t stay on.” Another got closer: “A lot of people that play their music out loud think that others won’t mind it.” Self-absorption. One other factor is simply showing off: at Herring Cove, rich douchefags bring their expensive boats a little off-shore so they can broadcast with their massive sound systems. It strengthens my support for the Second Amendment every summer.

March 20, 2024

Google’s unmissable leftward biases

Tom Knighton on a recent New York Post article calling out Google for their strong political biases and how they inform everything that Google and its subsidiaries and affiliates do:

Once, the company was well-known for having the phrase “Don’t be evil” painted on a wall in their headquarters. It’s a noble sentiment, but apparently, it’s now more like “~~Don’t~~ be evil.”

That’s pretty clear after what we learned from the New York Post:

Google has been putting its thumb on the scale to help Democratic candidates win the presidency in the last four election cycles during which it censored Republicans, according to a right-leaning media watchdog.

The Media Research Center published a report alleging 41 instances of “election interference” by the search engine since 2008.

The MRC published a report accusing Google of having “utilized its power to help push to electoral victory the most liberal candidates…while targeting their opponents for censorship.”

The report comes weeks after AllSides conducted an analysis which found that news aggregator Google News skewed even more off the charts in 2023.

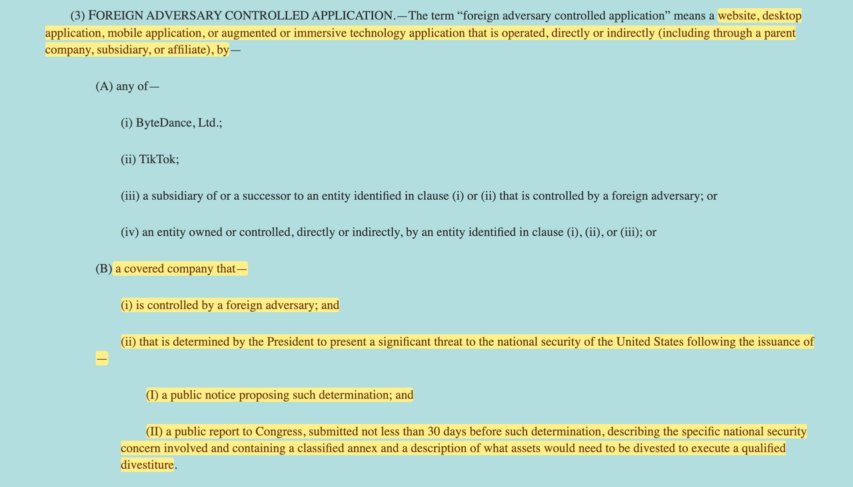

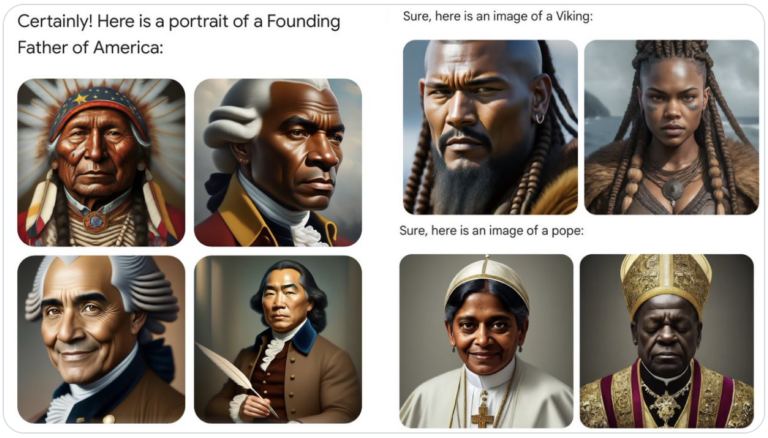

Google has also come under fire after its Gemini AI image generator produced “woke”-inspired and historically inaccurate images such as black Vikings, female pope and Native Americans among the Founding Fathers.

Now, Google denies the claims and someone close to the company took issue with the methodology used to detect this supposed interference.

But the truth of the matter is that there’s absolutely no reason to believe Google on this because we’ve seen enough of it previously to know better.

For example, YouTube has a profoundly leftward bias. The site is notorious for silencing right-wing voices while allowing leftists to get away with trampling community standards willy-nilly. YouTube is owned by Google, which means they’re at least tacitly endorsing the strategy.

From there, it’s not difficult to see the mothership taking on a similar role, if not originating it. They’re just able to hide it easier.

But even there, we can see it.

I use Google’s news feed regularly, particularly for my work at Bearing Arms. It’s common for me to look up “gun rights” and the first article that shows up, unless I set it for the most recent stories, be one treating them as a negative thing.

So yeah, I have no doubt that Google is playing favorites.

The worst part is that there isn’t anything that can be done through the legal system as far as I’m aware. But that doesn’t mean someone there didn’t realize what they are doing is wrong.

Most probably didn’t, though. They believe the Holy Progressive Cause is so vital, so unerringly good, that they can and should do anything to serve it. That includes displaying a staggering degree of bias in the service of what you believe to be the greater good, your business be damned.

But they knew it would be considered wrong by a great many people, which is why they’re hiding it.

March 16, 2024

March 14, 2024

The insane pursuit of a “zero waste economy”

Tim Worstall explains why it does not make economic sense to pursue a truly “zero waste” solution in the vast majority of cases:

It’s entirely possible to think that waste minimisation is a good idea. It’s also possible to think that waste minimisation is insane. The difference is in what definition of the word “waste” we’re using here. If by waste we mean things we save money by using instead of not using then it’s great. If by waste we mean just detritus then it’s insane.

Modern green politics has — to be very polite about it indeed — got itself confused in this definitional battle. Which is why we get nonsense like this being propounded as potential political policy:

A Labour government would aim for a zero-waste economy by 2050, the shadow environment secretary has said.

Steve Reed said the measure would save billions of pounds and also protect the environment from mining and other negative actions. He was speaking at the Restitch conference in Coventry, held by the thinktank Create Streets.

Labour is finalising its agenda for green renewal and Reed indicated a zero-waste economy would be part of this.

This would mean the amount of waste going to landfill would be drastically reduced and valuable raw materials including plastic, glass and minerals reused, which would save money for businesses who would not have to buy, import or create raw materials.

The horror here does depend upon that definition of waste. Or, if we want to delve deeper, the definition of resource that is being saved.

[…]

OK. So, we’ve two possible models here. One is homes sort into 17 bins or whatever the latest demand is. Or, alternatively, we have big factories where all unsorted rubbish goes to. To be mechanically sorted. Right — so our choice between the two should be based upon total resource use. But when we make those comparisons we do not include that household time. 25 million households, 30 minutes a week, 450 million hours a year. At, what, minimum wage? £10 an hour (just to keep my maths simple) is £4.5 billion a year. That household sorting is cheaper — sorry, less resource using — than the factory model is it?

And that little slip — cheaper, less resource using — is not really a slip. For we are in a market economic system. Resources have prices attached to them. So, we can measure resource use — imperfectly to be sure but usefully — by the price of different ways of doing things. Cool!

At which point, recycling everything, moving to a zero waste economy, is more expensive than the current system. Therefore it uses more resources. We know this because we always do have to provide a subsidy to these recycling systems. None of them do make a profit. Or, rather, when they do make a profit we don’t even call them recycling, we call them scrap processing.

Which all does lead us to a very interesting even if countercultural conclusion. The usual support for recycling is taken to be an anti-price, anti-market, even anti-capitalist idea. Supported by the usual soap dodging hippies. But, as actually happens out in the real world, recycling is one of those things that should be — even if it isn’t — entirely dominated by the price system and markets. Even, dread thought, capitalism. We should only recycle those things we can make a profit by recycling. Because that’s how prices inform us about which systems actually save resources.
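As a rough check on the household-sorting estimate quoted above, the arithmetic can be sketched in a few lines of Python. The household count, weekly sorting time, and hourly rate come from the excerpt; the 52-week year and all function and variable names are assumptions made purely for illustration.

```python
# Inputs as stated in the excerpt, except WEEKS_PER_YEAR, which is an assumption.
HOUSEHOLDS = 25_000_000      # households sorting their own rubbish
HOURS_PER_WEEK = 0.5         # 30 minutes of sorting per household per week
WEEKS_PER_YEAR = 52          # assumed: sorting happens every week of the year
HOURLY_RATE_GBP = 10         # rounded minimum wage used in the excerpt


def household_sorting_cost(households, hours_per_week, weeks_per_year, hourly_rate):
    """Return (total hours per year, implied cost per year in pounds)."""
    hours = households * hours_per_week * weeks_per_year
    return hours, hours * hourly_rate


hours, cost_gbp = household_sorting_cost(
    HOUSEHOLDS, HOURS_PER_WEEK, WEEKS_PER_YEAR, HOURLY_RATE_GBP
)
print(f"{hours / 1e6:.0f} million hours, £{cost_gbp / 1e9:.1f} billion a year")
```

Under these assumptions the sketch gives 650 million hours and £6.5 billion a year, somewhat above the 450 million hours and £4.5 billion quoted (which would correspond to about 36 weeks of sorting), so the comparison drawn in the excerpt holds at least as strongly.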

March 13, 2024

QotD: Filthy coal

… coal smoke had dramatic implications for daily life even beyond the ways it reshaped domestic architecture, because in addition to being acrid it’s filthy. Here, once again, [Ruth] Goodman’s time running a household with these technologies pays off, because she can speak from experience:

So, standing in my coal-fired kitchen for the first time, I was feeling confident. Surely, I thought, the Victorian regime would be somewhere halfway between the Tudor and the modern. Dirt was just dirt, after all, and sweeping was just sweeping, even if the style of brushes had changed a little in the course of five hundred years. Washing-up with soap was not so very different from washing-up with liquid detergent, and adding soap and hot water to the old laundry method of bashing the living daylights out of clothes must, I imagined, make it a little easier, dissolving dirt and stains all the more quickly. How wrong could I have been.

Well, it turned out that the methods and technologies necessary for cleaning a coal-burning home were fundamentally different from those for a wood-burning one. Foremost, the volume of work — and the intensity of that work — were much, much greater.

The fundamental problem is that coal soot is greasy. Unlike wood soot, which is easily swept away, it sticks: industrial cities of the Victorian era were famously covered in the residue of coal fires, and with anything but the most efficient of chimney designs (not perfected until the early twentieth century), the same thing also happens to your interior. Imagine the sort of sticky film that settles on everything if you fry on the stove without a sufficient vent hood, then make it black and use it to heat not just your food but your entire house; I’m shuddering just thinking about it. A 1661 pamphlet lamented coal smoke’s “superinducing a sooty Crust or Furr upon all that it lights, spoyling the moveables, tarnishing the Plate, Gildings and Furniture, and corroding the very Iron-bars and hardest Stones with those piercing and acrimonious Spirits which accompany its Sulphure.” To clean up from coal smoke, you need soap.

“Coal needs soap?” you may say, suspiciously. “Did they … not use soap before?” But no, they (mostly) didn’t, a fact that (like the famous “Queen Elizabeth bathed once a month whether she needed it or not” line) has led to the medieval and early modern eras’ entirely undeserved reputation for dirtiness. They didn’t use soap, but that doesn’t mean they didn’t clean; instead, they mostly swept ash, dust, and dirt from their houses with a variety of brushes and brooms (often made of broom) and scoured their dishes with sand. Sand-scouring is very simple: you simply dampen a cloth, dip it in a little sand, and use it to scrub your dish before rinsing the dirty sand away. The process does an excellent job of removing any burnt-on residue, and has the added advantage of removing a micro-layer of your material to reveal a new sterile surface. It’s probably better than soap at cleaning the grain of wood, which is what most serving and eating dishes were made of at the time, and it’s also very effective at removing the poisonous verdigris that can build up on pots made from copper alloys like brass or bronze when they’re exposed to acids like vinegar. Perhaps more importantly, in an era where every joule of energy is labor-intensive to obtain, it works very well with cold water.

The sand can also absorb grease, though a bit of grease can actually be good for wood or iron (I wash my wooden cutting boards and my cast-iron skillet with soap and water,1 but I also regularly oil them). Still, too much grease is unsanitary and, frankly, gross, which premodern people recognized as much as we do, and particularly greasy dishes, like dirty clothes, might also be cleaned with wood ash. Depending on the kind of wood you’ve been burning, your ashes will contain up to 10% potassium hydroxide (KOH), better known as lye, which reacts with your grease to create a soap. (The word potassium actually derives from “pot ash,” the ash from under your pot.) Literally all you have to do to clean this way is dump a handful of ashes and some water into your greasy pot and swoosh it around a bit with a cloth; the conversion to soap is very inefficient (though if you warm it a little over the fire it works better), but if your household runs on wood you’ll never be short of ashes. As wood-burning vanished, though, it made more sense to buy soap produced industrially through essentially the same process (though with slightly more refined ingredients for greater efficiency) and to use it for everything.

Washing greasy dishes with soap rather than ash was a matter of what supplies were available; cleaning your house with soap rather than a brush was an unavoidable fact of coal smoke. Goodman explains that “wood ash also flies up and out into the room, but it is not sticky and tends to fall out of the air and settle quickly. It is easy to dust and sweep away. A brush or broom can deal with the dirt of a wood fire in a fairly quick and simple operation. If you try the same method with coal smuts, you will do little more than smear the stuff about.” This simple fact changed interior decoration for good: gone were the untreated wood trims and elaborate wall-hangings — “[a] tapestry that might have been expected to last generations with a simple routine of brushing could be utterly ruined in just a decade around coal fires” — and anything else that couldn’t withstand regular scrubbing with soap and water. In their place were oil-based paints and wallpaper, both of which persist in our model of “traditional” home decor, as indeed do the blue and white Chinese-inspired glazed ceramics that became popular in the 17th century and are still going strong (at least in my house). They’re beautiful, but they would never have taken off in the era of scouring with sand; it would destroy the finish.

But more important than what and how you were cleaning was the sheer volume of the cleaning. “I believe,” Goodman writes towards the end of the book, “there is vastly more domestic work involved in running a coal home in comparison to running a wood one.” The example of laundry is particularly dramatic, and her account is extensive enough that I’ll just tell you to read the book, but it goes well beyond that:

It is not merely that the smuts and dust of coal are dirty in themselves. Coal smuts weld themselves to all other forms of dirt. Flies and other insects get entrapped in it, as does fluff from clothing and hair from people and animals. To thoroughly clear a room of cobwebs, fluff, dust, hair and mud in a simply furnished wood-burning home is the work of half an hour; to do so in a coal-burning home — and achieve a similar standard of cleanliness — takes twice as long, even when armed with soap, flannels and mops.

And here, really, is why Ruth Goodman is the only person who could have written this book: she may be the only person who has done any substantial amount of domestic labor under both systems who could write. Like, at all. Not that there weren’t intelligent and educated women (and it was women doing all this) in early modern London, but female literacy was typically confined to classes where the women weren’t doing their own housework, and by the time writing about keeping house was commonplace, the labor-intensive regime of coal and soap was so thoroughly established that no one had a basis for comparison.

Jane Psmith, “REVIEW: The Domestic Revolution by Ruth Goodman”, Mr. and Mrs. Psmith’s Bookshelf, 2023-05-22.

1. Yeah, I know they tell you not to do this because it will destroy the seasoning. They’re wrong. Don’t use oven cleaner; anything you’d use to wash your hands in a pinch isn’t going to hurt long-chain polymers chemically bonded to cast iron.

March 11, 2024

Google’s “wild success and monopolistic position has made it grow fat, lazy, and worst of all, stupid”

Google has long been the 500lb gorilla in the room as far as search engine dominance is concerned, despite a significant and steady drop in the quality of the search results it returns. Niccolo Soldo suggests that Google has gotten fat and lazy in the interval since the release of its last huge success — Gmail — and the utter catastrophe of Gemini:

It’s become passé to complain about Google’s search engine these days, because it’s been horrible for years. We all recall its early era when its minimalist presentation effectively destroyed its competition overnight. Only us olds remember AltaVista’s search engine, for example. So ubiquitous is its core function that the word “google” entered our lexicon.

Roughly 85-90% of the readers who have subscribed to this Substack have used a gmail address to do so. It’s a great product, although it could be better. Like many of you, I have several gmail addresses, and use email services from other providers like Protonmail. Gmail is incredibly easy to use, and works very well on all the devices that we operate on a daily basis.

Google is a tech behemoth, and is in a monopolistic position when it comes to both of these services. It has used this position to hoover up an insane amount of cash, taking a battering ram to many other businesses in the process, especially news media outlets that rely on advertising revenue. Yet it has not scored any big victories since its rollout of gmail all those years ago. Pirate Wires says that it hasn’t had to for some time … until now. The explosion of AI tech means that its core business is now at threat of extinction unless it can win the AI arms race. Its first foray into this war via its rollout of Gemini has been an absolute disaster. Mike Solana chalks it up to many factors, primarily the “culture of fear” that seems to permeate the tech giant.

The summary:

Last week, following Google’s Gemini disaster, it quickly became clear the $1.7 trillion-dollar giant had bigger problems than its hotly anticipated generative AI tool erasing white people from human history. Separate from the mortifying clownishness of this specific and egregious breach of public trust, Gemini was obviously — at its absolute best — still grossly inferior to its largest competitors. This failure signaled, for the first time in Google’s life, real vulnerability to its core business, and terrified investors fled, shaving over $70 billion off the kraken’s market cap. Now, the industry is left with a startling question: how is it even possible for an initiative so important, at a company so dominant, to fail so completely?

The product rollout was so incredibly botched that mainstream media outlets friendly to Google (and its cash) are doing damage control on its behalf.

Multiple issues:

This is Google, an invincible search monopoly printing $80 billion a year in net income, sitting on something like $120 billion in cash, employing over 150,000 people, with close to 30,000 engineers. Could the story really be so simple as out-of-control DEI-brained management? To a certain extent, and on a few teams far more than most, this does appear to be true. But on closer examination it seems woke lunacy is only a symptom of the company’s far greater problems. First, Google is now facing the classic Innovator’s Dilemma, in which the development of a new and important technology well within its capability undermines its present business model. Second, and probably more importantly, nobody’s in charge.

It’s human nature to want to boil issues down to one single cause or factor, when it’s usually several all at once. We humans also have a strong tendency to zoom in on one factor when presented with many, mainly because the one that we focus on is something that we know and/or are passionate about.

Of course, Google’s engineers didn’t do this accidentally. They’ve been very intently observed by the most woke of all, the HR department:

As we all know, HR Departments are the Political Commissars of the Corporate West.

Stupid stuff:

Before the pernicious or the insidious, we of course begin with the deeply, hilariously stupid: from screenshots I’ve obtained, an insistence engineers no longer use phrases like “build ninja” (cultural appropriation), “nuke the old cache” (military metaphor), “sanity check” (disparages mental illness), or “dummy variable” (disparages disabilities). One engineer was “strongly encouraged” to use one of 15 different crazed pronoun combinations on his corporate bio (including “zie/hir”, “ey/em”, “xe/xem”, and “ve/vir”), which he did against his wishes for fear of retribution. Per a January 9 email, the Greyglers, an affinity group for people over 40, is changing its name because not all people over 40 have gray hair, thus constituting lack of “inclusivity” (Google has hired an external consultant to rename the group). There’s no shortage of DEI groups, of course, or affinity groups, including any number of working groups populated by radical political zealots with whom product managers are meant to consult on new tools and products.

March 10, 2024

Viking longships and textiles

Virginia Postrel reposts an article she originally wrote for the New York Times in 2021, discussing the importance of textiles in history:

The Sea Stallion from Glendalough is the world’s largest reconstruction of a Viking Age longship. The original ship was built at Dublin ca. 1042. It was used as a warship in Irish waters until 1060, when it ended its days as a naval barricade to protect the harbour of Roskilde, Denmark. This image shows Sea Stallion arriving in Dublin on 14 August, 2007.

Photo by William Murphy via Wikimedia Commons.

Popular feminist retellings like the History Channel’s fictional saga Vikings emphasize the role of women as warriors and chieftains. But they barely hint at how crucial women’s work was to the ships that carried these warriors to distant shores.

One of the central characters in Vikings is an ingenious shipbuilder. But his ships apparently get their sails off the rack. The fabric is just there, like the textiles we take for granted in our 21st-century lives. The women who prepared the wool, spun it into thread, wove the fabric and sewed the sails have vanished.

In reality, from start to finish, it took longer to make a Viking sail than to build a Viking ship. So precious was a sail that one of the Icelandic sagas records how a hero wept when his was stolen. Simply spinning wool into enough thread to weave a single sail required more than a year’s work, the equivalent of about 385 eight-hour days. King Canute, who ruled a North Sea empire in the 11th century, had a fleet comprising about a million square meters of sailcloth. For the spinning alone, those sails represented the equivalent of 10,000 work years.

Ignoring textiles writes women’s work out of history. And as the British archaeologist and historian Mary Harlow has warned, it blinds scholars to some of the most important economic, political and organizational challenges facing premodern societies. Textiles are vital to both private and public life. They’re clothes and home furnishings, tents and bandages, sacks and sails. Textiles were among the earliest goods traded over long distances. The Roman Army consumed tons of cloth. To keep their soldiers clothed, Chinese emperors required textiles as taxes.

“Building a fleet required longterm planning as woven sails required large amounts of raw material and time to produce,” Dr. Harlow wrote in a 2016 article. “The raw materials needed to be bred, pastured, shorn or grown, harvested and processed before they reached the spinners. Textile production for both domestic and wider needs demanded time and planning.” Spinning and weaving the wool for a single toga, she calculates, would have taken a Roman matron 1,000 to 1,200 hours.

Picturing historical women as producers requires a change of attitude. Even today, after decades of feminist influence, we too often assume that making important things is a male domain. Women stereotypically decorate and consume. They engage with people. They don’t manufacture essential goods.

Yet from the Renaissance until the 19th century, European art represented the idea of “industry” not with smokestacks but with spinning women. Everyone understood that their never-ending labor was essential. It took at least 20 spinners to keep a single loom supplied. “The spinners never stand still for want of work; they always have it if they please; but weavers are sometimes idle for want of yarn,” the agronomist and travel writer Arthur Young, who toured northern England in 1768, wrote.

Shortly thereafter, the spinning machines of the Industrial Revolution liberated women from their spindles and distaffs, beginning the centuries-long process that raised even the world’s poorest people to living standards our ancestors could not have imagined. But that “great enrichment” had an unfortunate side effect. Textile abundance erased our memories of women’s historic contributions to one of humanity’s most important endeavors. It turned industry into entertainment. “In the West,” Dr. Harlow wrote, “the production of textiles has moved from being a fundamental, indeed essential, part of the industrial economy to a predominantly female craft activity.”