On Substack, Andrew Doyle explains why it’s a terrible idea to trust the government, any government, to force digital ID on everyone:

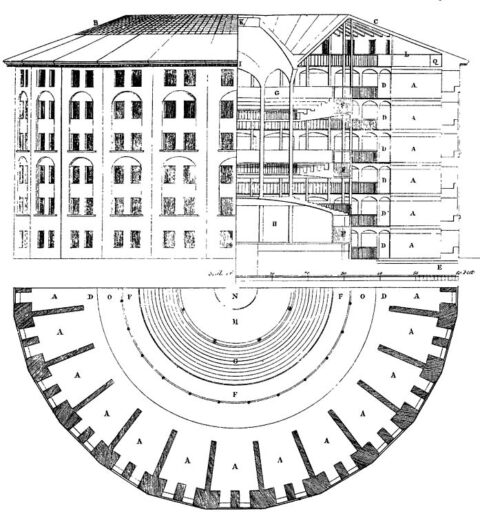

An illustration of Jeremy Bentham’s Panopticon prison. Drawing by Willey Reveley, 1791.

During a trip to Russia in 1785, the philosopher Jeremy Bentham sketched an outline for a new prison design. The cells were arranged around the circular perimeter and, at the centre, he placed his “panopticon”: a watchtower which afforded a view of any of the cells at all times. The prisoners might not always be being observed, but they could never be sure that they weren’t.

Bentham’s design was never directly used, but the idea took hold as a symbol of state overreach and control, most famously in Michel Foucault’s Discipline and Punish (1975). Foucault was alert to the political ramifications of such a concept, and how surveillance might become an internalised experience. With Keir Starmer now pledging to introduce a digital ID system as a mandatory condition for the right to work, are we seeing the first step towards the realisation of Bentham’s vision?

I suppose we are already there. I have seen friends switch off their phones before discussing politically sensitive issues, genuinely convinced that digital eavesdropping is the norm. Many people are mistrustful of the “Alexa” voice assistant, which they are persuaded is recording their every word. While this all seems terribly conspiratorial, I’m sure most of us remember those reports a few years ago about the Pegasus spyware which had been covertly installed on the phones of journalists and government figures, turning the devices into pocket spies.

[…]

Few will be surprised to hear that public trust in political institutions has plummeted. The increasingly authoritarian tendencies of successive governments, our two-tier policing system, public manipulation as embodied in the “nudge unit”, and the corrupt prioritisation of the interests of the political class over the people they serve – perhaps best demonstrated by parliament’s flagrant efforts to overturn the Brexit vote – have all contributed to this climate of mistrust. The bizarre overreach of police during the lockdowns – in which dog walkers were publicly shamed with drone footage, and shopping trolleys were probed for “non-essential items” – has hardly helped matters.

To many of us, it is baffling that anyone at all would support the prospect of the government keeping track of our movements and holding our private details in a database. Starmer claims that the scheme will curb illegal immigration, but we are talking about criminals who already work outside the system and will doubtless continue to do so. Besides, identity cards have been a reality on the continent for years, and have done precisely nothing to resolve the problem. Employers in the UK are already legally obliged to insist on proof of immigration status from workers.

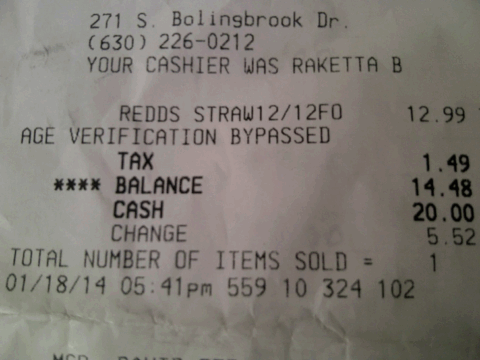

Labour’s digital ID scheme seems more about control than anything else. The possibility of fraud is also a major concern. It’s not as though the government has an unblemished track record of preventing data breaches. We all recall the massive leak of official MOD data regarding Afghans who had worked with the British government during the UK’s military campaigns. And who could forget the senior civil servant who, in 2008, left top-secret documents concerning al-Qaeda and Iraq’s security forces on a train from London Waterloo? Are we really to suppose that the creation of an all-encompassing centralised database will not leave the public open to risk from hackers and hostile foreign powers?

Tim Worstall adds that “they c’n fuck off ’n’ all”:

So we’ve that wet dream of Tony Blair raising its ugly head again. There should be a national ID system. Actually, it’s not just Blair, T — the bureaucracy has been right pissed at the erasure of the wartime system since the 50s when it was abolished.

For there are two ways of looking at, thinking about, the whole governance thing. One is — the Blair, bureaucrats’, version — that the population are cattle, kine, to be managed. For the benefit of the bureaucracy of course — or at very least to be forced into doing what the bureaucracy thinks they — we — should be doing.

Then there’s that stout Englishman, the Anglo Saxon, version, which is that government are just the slaves we communally hire to make sure the bins get emptied. Well, OK, maybe raise a bit of tax for a Royal Navy to sink the Frenchies. But even then, not too much of that — the Civil War was, after all, triggered by Ship Money. Did the people who would not be slaughtered by the first wave of invading Frenchies — because they had the silly excuse of living 25 miles inland — have to pay the tax to run the Royal Navy to keep the Frenchies at bay or not? The King said yes — the King was right — and not for the first nor last time in British political history the guy who was right had his head cut off for being so.

Digital ID, so which version should we have? That one beloved of Froggie-type bureaucrats who view La Profonde as kine to be corralled? Or the Anglo Saxon version where we just devolve the scut work to a few slaves?

[…]

The reason this never will be proposed is that it doesn’t fit the reasons why our rulers wish to have an ID system. They’re insistent that we be their kine rather than they ours. So, the Hell w’ ’em.

But it could be done. Government simply publishes an interface — an API — which says that proof of identity needs to be presented in this format. We’re done as far as whose kine is whose.

Update 4 October: From Samizdata, another illustration of just how toxic Two Tier Keir has become to British voters:

The Guardian reports:

“Reverse Midas touch”: Starmer plan prompts collapse in support for digital IDs

Public support for digital IDs has collapsed after Keir Starmer announced plans for their introduction, in what has been described as a symptom of the prime minister’s “reverse Midas touch”.

Net support for digital ID cards fell from 35% in the early summer to -14% at the weekend after Starmer’s announcement, according to polling by More in Common.

The findings suggest that the proposal has suffered considerably from its association with an unpopular government. In June, 53% of voters surveyed said they were in favour of digital ID cards for all Britons, while 19% were opposed.