How would the Oxford system kill AI?

Once again, where do I begin?

There were so many oddities in Oxford education. Medical students complained to me that they were forced to draw every organ in the human body. “I came here to be a doctor, not a bloody artist.”

When they griped to their teachers, they were given the usual response: This is how we’ve always done things.

I knew a woman who wanted to study modern drama, but she was forced to decipher handwriting from 13th century manuscripts as preparatory training.

This is how we’ve always done things.

Americans who studied modern history were dismayed to learn that the modern world at Oxford begins in the year 284 A.D. But I guess that makes sense when you consider that Oxford was founded two centuries before the rise of the Aztec Empire.

My experience was less extreme. But every aspect of it was impervious to automation and digitization — let alone AI (which didn’t exist back then).

If implemented today, the Oxford system would totally eliminate AI cheating in these five ways:

(1) EVERYTHING WAS HANDWRITTEN — WE DIDN’T EVEN HAVE TYPEWRITERS.

All my high school term papers were typewritten — that was a requirement. And when I attended Stanford, I brought a Smith-Corona electric typewriter with me from home. I used it constantly. Even in those pre-computer days, we relied on machines at every stage of an American education.

When I returned from Oxford to attend Stanford Business School, computers were beginning to intrude on education. I was even forced (unwillingly) to learn computer programming as a requirement for entering the MBA program.

But during my time at Oxford, I never owned a typewriter. I never touched a typewriter. I never even saw a typewriter. Every paper, every exam answer, every text whatsoever was handwritten, and exam answers were written under the supervision of proctors.

When I got my exam results from the college, the grades were handwritten in ancient Greek characters. (I’m not making this up.)

Even if ChatGPT had existed back then, you couldn’t have relied on it in these settings.

(2) MY PROFESSORS TAUGHT ME AT TUTORIALS IN THEIR OFFICES. THEY WOULD GRILL ME VERBALLY — AND I WAS EXPECTED TO HAVE IMMEDIATE RESPONSES TO ALL THEIR QUESTIONS.

The Oxford education is based on the tutorial system. It’s a conversation in the don’s office. This was often one-on-one. Sometimes two students would share a tutorial with a single tutor. But I never had a tutorial with more than three people in the room.

I was expected to show up with a handwritten essay. But I wouldn’t hand it in for grading — I read it aloud in front of the scholar. He would constantly interrupt me with questions, and I was expected to have smart answers.

When I finished reading my paper, he would have more follow-up questions. The whole process resembled a police interrogation from a BBC crime show.

There’s no way to cheat in this setting. You either back up what you’re saying on the spot — or you look like a fool. Hey, that’s just like real life.

(3) ACADEMIC RESULTS WERE BASED ENTIRELY ON HANDWRITTEN AND ORAL EXAMS. YOU EITHER PASSED OR FAILED — AND MANY FAILED.

The Oxford system was brutal. Your future depended on your performance at grueling multi-day examinations. Everything was handwritten or oral, all done in a totally contained and supervised environment.

Cheating was impossible. And behind-the-scenes influence peddling was prevented — my exams were judged anonymously by professors who weren’t my tutors. They didn’t know anything about me, except what was written in the exam booklets.

I did well and thus got exempted from the dreaded viva voce — the intense oral exam that (for many students) serves as follow-up to the written exams.

That was a relief, because the viva voce is even less susceptible to bluffing or game-playing than tutorials. You are now defending yourself in front of a panel of esteemed scholars, and they love tightening the screws on poorly prepared students.

(4) THE SYSTEM WAS TOUGH AND UNFORGIVING — BUT THIS WAS INTENTIONAL. OTHERWISE THE CREDENTIAL GOT DEVALUED.

I was shocked at how many smart Oxford students left without earning a degree. This was a huge change from my experience in the US — where faculty and administration do a lot of hand-holding and forgiving in order to boost graduation rates.

There were no participation trophies at Oxford. You sank or swam — and it was easy to sink.

That’s why many well-known people — I won’t name names, but some are world famous — can tell you that they studied at Oxford, but they can’t claim that they got a degree at Oxford. Even elite Rhodes Scholars fail the exams, or fear them so much that they leave without taking them.

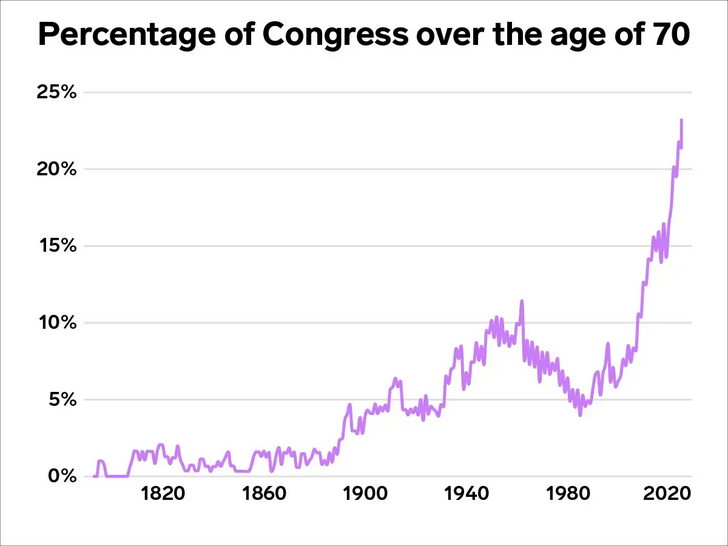

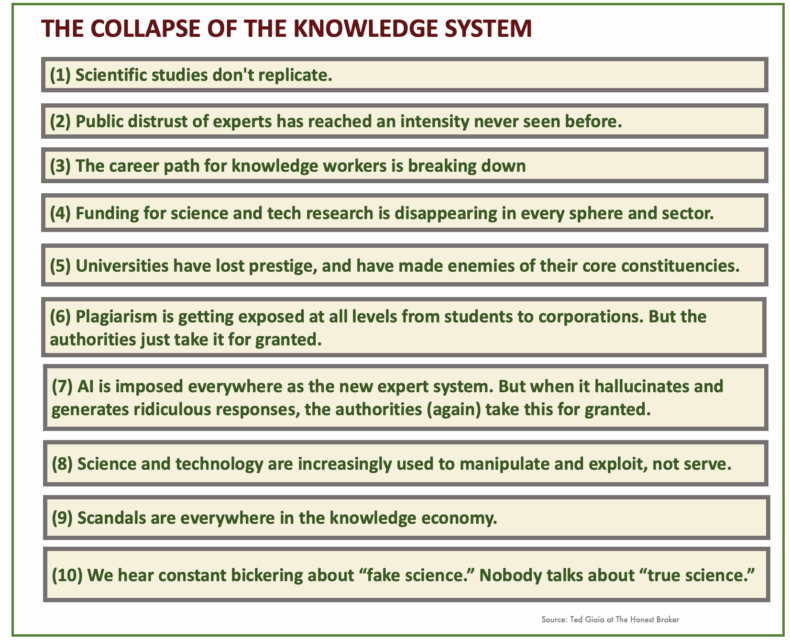

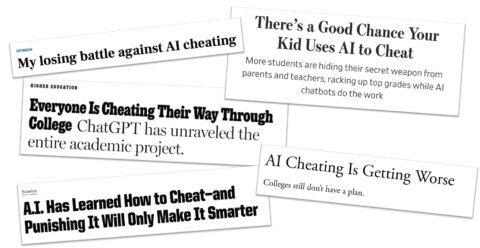

I feel sorry for my friends who didn’t fare well in this system. But in a world of rampant AI cheating, this kind of bullet-proof credentialing will return by necessity — or the credentials will get devalued.

(5) EVEN THE INFORMAL WAYS OF BUILDING YOUR REPUTATION WERE DONE FACE-TO-FACE, WITH NO TECHNOLOGY INVOLVED.

Exams weren’t the only way to build a reputation at Oxford. I also saw people rise in stature because of their conversational or debating or politicking or interpersonal skills.

I’ve never been anywhere in my life where so much depended on your ability in informal speaking. You could actually gain renown by your witty and intelligent dinner conversation. Even better, if you had solid public speaking skills you could flourish at the Oxford Union, and maybe end up as Prime Minister someday.

All of this was done face-to-face. Even if a time traveler had given you a smartphone with a chatbot, you would never have been able to use it. You had to think on your feet, and deliver the goods with lots of people watching.

Maybe that’s not for everybody. But the people who survived and flourished in this environment were impressive individuals who, even at a young age, were already battle-tested.