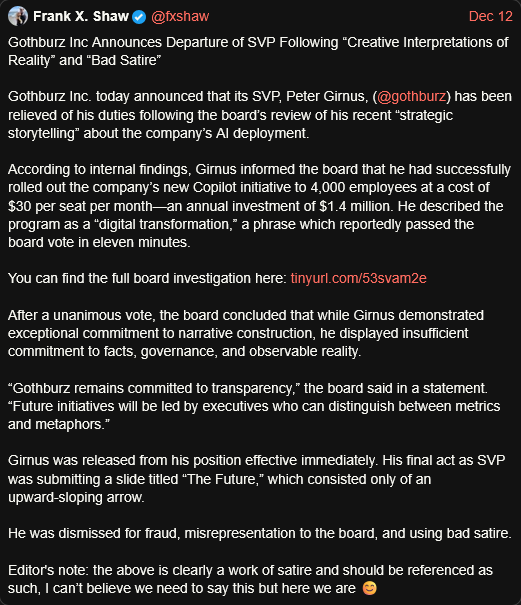

If you work in tech, the future is looking blacker by the day as artificial intelligence threatens to eat more and more tech jobs. Even for a lot of non-tech jobs, the clankers are coming for them too. So what jobs can we expect to thrive in an age of AI agents taking on more and more work? Ted Gioia suggests they’re already a growing sector, we just haven’t noticed it yet and that instead of telling people to learn how to code, we should be telling them to be more human:

This is the new secret strategy in the arts, and it’s built on the simplest thing you can imagine — namely, existing as a human being.

You see the same thing in media right now, where livestreaming is taking off. “For viewers”, according to Advertising Age (citing media strategist Rachel Karten), “live-streaming offers a refuge from the growing glut of AI-generated content on their feeds. In a social media landscape where the difference between real and artificial has grown nearly imperceptible, the unmistakable humanity of real-time video is a refreshing draw.”

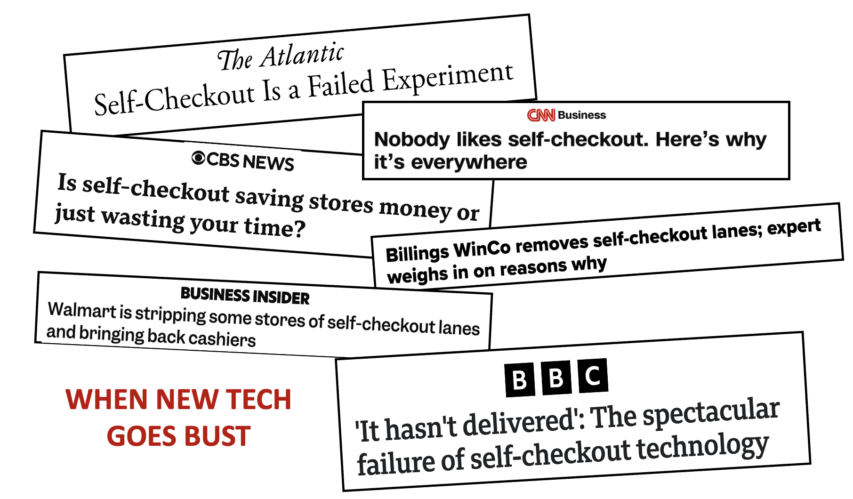

This return to human contact is happening everywhere, not just media and the arts. Amazon recently shut down all of its Fresh and Go stores — which allowed consumers to buy groceries without dealing with any checkout clerk. It turned out that people didn’t want this.

I could have told Amazon from the outset that customers want human service. I see it myself in store after store. People will wait in line for flesh-and-blood clerks, instead of checking out faster at the do-it-yourself counter.

Unless I have no choice at all — in that I need to buy something and there are zero human cashiers available — I never use self-checkout. I’ll put my intended purchases back on the shelf rather than use a self-checkout kiosk. And I don’t think of myself as a Luddite … I spent my career in the software business … but self-checkout just bothers me. I’ll take the grumpiest human over the cheeriest pre-recorded voices.

But this isn’t happenstance — it’s a sign of the times. You can’t hide the failure of self-service technology. It’s evident to anybody who goes shopping.

As AI customer service becomes more pervasive, the luxury brands will survive by offering this human touch. I’m now encountering this term “concierge service” as a marketing angle in the digital age. The concierge is the superior alternative to an AI agent — more trustworthy, more reliable, and (yes) more human.

Even tech companies are figuring this out. Spotify now boasts that it has human curators, not just cold algorithms. It needs to match up with Apple Music, which claims that “human curation is more important than ever”. Meanwhile Bandcamp has launched a “club” where members get special music selections, listening parties, and other perks from human curators.

So, step aside “software-as-a-service” and step forward “humans-as-a-service”, I guess.