You’ve heard me say quite often that the nicest car in the faculty lot always belongs to the wildest-eyed Marxist. Given what we know about the stiffness of the “most insane Marxist” competition among eggheads, it seems to follow that the faculty lot must look like a rap video — Bugattis and Bentleys and Maybachs everywhere. But that’s not the case, comrades.

Not because academics are worried about anything so prole as hypocrisy — as we know, cognitive dissonance only affects the Dirt People — but because eggheads operate on a different scale of values. Bentleys etc. are what those people drive … so professors have to kick it bobo style. Thus the faculty lot is filled with Priuses (Prii?), Teslas, and so forth. Back when The Simpsons was funny, it had a throwaway joke about Ed Begley Jr. driving a car so eco-friendly, it was powered entirely by his own sense of self-regard. If they actually marketed those, every professor in America would have one … but since they don’t, the “eco-pimp my ride” competition continues unabated.

Which is hilarious for two reasons. First, there isn’t — there won’t be, there can’t be — sufficient infrastructure to support more than a token few electric vehicles (EVs). Flyover State is nothing if not eco-friendly, though, and so they fell all over themselves giving the faculty charging stations … but see above: All they could manage was one charging station per level, in one parking garage. Which naturally led to much agonizing in the Faculty Senate, not to mention the student newspaper, the town newspaper, and every boutique coffee place and head shop in College Town. How can charging station time be most equitably distributed? Does the Lesbian Negress outweigh (supply your own joke here, please) the White FTM tranny? What about the genderfluid hemophiliac Inuit? Where does the wingless golden-skinned dragonkin rank?

Severian, “Luxury Beliefs”, Rotten Chestnuts, 2021-06-03.

April 5, 2024

QotD: When the faculty lounge turned nasty over the electric vehicle charging stations

April 1, 2024

How Railroad Crossings Work

Practical Engineering

Published Jan 2, 2024

How do they know when a train is on the way?

Despite the hazard they pose, trains have to coexist with our other forms of transportation. Next time you pull up to a crossbuck, take a moment to appreciate the sometimes simple, sometimes high-tech, but always quite reliable ways that grade crossings keep us safe.


March 22, 2024

If you peasants won’t buy EVs voluntarily, the government will make it mandatory

Tom Knighton notes that the government isn’t happy with us dirt people because we’re not all rushing to voluntarily give up our old fashioned internal combustion vehicles and replace them with shiny new electric vehicles like we’re supposed to:

I’ve written a lot about electric vehicles (EVs) over my time at Substack. My take is, as it always is, that I like the concept, but they’re not ready for prime time. In particular, their range and recharge time means they lose in a head-to-head comparison with internal combustion engines (ICE).

For some people, even as things currently stand, EVs make sense. They may have charging stations at work and only use them for commutes to and from their place of employment, making all of these concerns irrelevant.

Yet a lot of people don’t have that. They have concerns about EVs and so they don’t see them as a viable option, which is why people aren’t buying them.

Enter the EPA.

It seems that if we won’t willingly do what they want, they’ll just force us to buy something we don’t want.

“Outlaw your car” sounds like such an outrageous phrase, and technically speaking, it isn’t true — but only barely. What practical difference is there between outlawing something, and regulating it out of existence?

That’s exactly what the EPA intends to do this week with strict new rules going forward against gas- and diesel-powered cars and light trucks.

Expected as soon as Wednesday, the Biden EPA “is poised to finalize emissions rules that will effectively require a certain percentage — as much as two-thirds by 2032 — of new cars to be all-electric”, according to Inside EVs. Politico sells the expected rule as one that would “tackle the nation’s biggest source of planet-warming pollution and accelerate the transition to electric vehicles”.

The rule would require carmakers to cut their average emissions of carbon dioxide by 52% between 2027 and 2032. EPA projects that the standard would push the car industry to ensure that electric cars and light trucks make up about 67% of new vehicles by model year 2032.

Of course, this led to pushback by people like dealer groups and car companies who argued, as I typically do, that the American people weren’t ready for that, in part due to the price of EVs and the lack of charging stations.

So the EPA has decided to make it so that we probably can’t afford ICE cars and trucks, either, so we might as well go with EVs instead.

And people wonder why I want to dismantle the EPA at the same time as I dismantle the ATF. It’s for the same damn reason. They just make up rules that impact people’s lives and businesses, all because of their own political agenda.

Of course, raising the prices on all cars by making the emissions standards virtually impossible to meet without making the cars so expensive isn’t going to prompt a lot of people to buy electric. It’ll just make them buy older, less fuel-efficient models.

The market for used cars was already getting pretty spicy before the pandemic. Dealers were barely able to accept trade-in vehicles before they were selling them off the lot to other buyers (and there were waiting lists of willing buyers for certain kinds of vehicles … not even particularly special vehicles, either). I don’t know if that situation has changed since the pandemic, but it indicated to me that the demand for good quality used vehicles must be coming from people who’d consciously chosen not to buy newer, more expensive cars (and pretty much by definition, every EV was more expensive than an equivalent ICE vehicle).
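
To put the 52% figure quoted above in per-year terms, here is a rough sketch. It assumes the cut is spread as a constant compound rate over five model-year steps (2027 to 2032); the rule's actual year-by-year schedule is not given in the quoted text, and the function name is purely illustrative.

```python
def annual_cut(total_cut: float, years: int) -> float:
    """Constant yearly fractional reduction that compounds to total_cut.

    total_cut is a fraction (0.52 for 52%). The even compounding over
    `years` steps is an assumption, not something the quoted rule states.
    """
    if not 0 <= total_cut < 1 or years <= 0:
        raise ValueError("total_cut must be in [0, 1) and years must be positive")
    return 1 - (1 - total_cut) ** (1 / years)


# 52% over five steps, per the figures quoted from the EPA proposal.
rate = annual_cut(0.52, 5)
print(f"{rate:.1%} per year, compounded")
```

Under that assumption the required reduction works out to a little under 14% per year. Spread linearly instead, it would be 10.4 percentage points of the 2027 baseline each year.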

March 3, 2024

QotD: The pushback against EVs

Parts of the automotive press seem to have sensed conspiracy in this. One senior figure recently asked who exactly has been “driving the anti-electric-car agenda”, while a respected publication claimed an “increasingly vehement anti-electric-car rhetoric” had hampered consumer confidence. The truth, however, is far simpler: people aren’t buying electric cars because they’re not very good.

Don’t think me a luddite – EVs are lovely in their own right. Smooth, brisk and easy to drive, there is a certain serenity in piloting a battery-powered vehicle. But EVs don’t exist in isolation. Instead, they are competing with a century of petrol and diesel power that has established cars as providers of comfort, freedom and convenience. And while the quiet nature of an EV arguably brings more comfort than an engine, batteries offer so much less freedom and convenience than fuel tanks as to barely be worth comparing.

My old diesel Mercedes, for instance, cost £4,000 and could go from London to Aberdeen, and most of the way back, on a single tank of fuel. A typical EV would need to recharge at least twice – just on the way up. This would add perhaps 90 minutes to the journey, assuming the public plugs were working and conveniently located. That, in my book, makes an EV demonstrably inconvenient. And cries of “how often do you drive to Aberdeen?” don’t hold water, because the freedom cars bring is absolutely intrinsic to their appeal. Perhaps tomorrow I get the urge to cross the Bridge of Dee; perhaps it’s none of your business. That’s freedom for you, and EVs curtail it.

Hugo Griffiths, “Why the public isn’t buying electric cars”, Spiked, 2023-11-20.
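
The "recharge at least twice" and "perhaps 90 minutes" figures can be reproduced with a back-of-the-envelope sketch. Every number below is an assumption made for illustration rather than something taken from the article: a road distance of roughly 540 miles from London to Aberdeen, a usable EV range of 200 miles, and 45 minutes per charging stop. The function names are hypothetical as well.

```python
import math


def charging_stops(trip_miles: float, usable_range_miles: float) -> int:
    """Mid-trip recharges needed when setting off with a full battery."""
    if trip_miles < 0 or usable_range_miles <= 0:
        raise ValueError("trip must be non-negative and range must be positive")
    # Each full charge covers one leg; the first leg needs no stop.
    return max(0, math.ceil(trip_miles / usable_range_miles) - 1)


def added_minutes(stops: int, minutes_per_stop: float) -> float:
    """Extra journey time spent charging."""
    return stops * minutes_per_stop


# All three inputs are assumptions, not figures from the quoted article.
TRIP_MILES = 540          # approximate London to Aberdeen by road
EV_RANGE_MILES = 200      # assumed real-world motorway range
MINUTES_PER_STOP = 45     # assumed time per public charging stop

stops = charging_stops(TRIP_MILES, EV_RANGE_MILES)
print(stops, "stops,", added_minutes(stops, MINUTES_PER_STOP), "minutes added")
```

With these inputs the sketch gives two stops and 90 added minutes one way. A longer assumed range or faster chargers would shrink both numbers, so the result is only as good as the inputs.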

November 15, 2023

The Future of Railways (circa 1961)

Jago Hazzard

Published 26 Jul 2023

The future is slightly dingy.


May 30, 2023

It might be time to consider getting a home generator … while you still can

J.D. Tuccille in today’s The Rattler newsletter suggests that if you’ve been considering getting a generator to power your house in case of blackouts, now might be a good time:

The regulatory body that oversees the nation’s power grid cast a bit of a chill over the coming warm months when, in mid-May, it cautioned that the country might not generate enough electric power to meet demand. Coming after multiple warnings from regulators, grid operators, and industry experts that enthusiasm for retiring old-school “dirty” generating capacity is outstripping the ability of renewable sources to fill the gap, the announcement is a heads-up to Americans that they may want to make back-up plans for a power grid growing increasingly unreliable. It’s also a reminder that green ideology is no substitute for the ability to flip a switch and have the lights come on.

Unreliable Energy

“NERC’s 2023 Summer Reliability Assessment warns that two-thirds of North America is at risk of energy shortfalls this summer during periods of extreme demand,” the North American Electric Reliability Corporation, a nominally non-governmental organization with statutory regulatory powers, noted May 17. “‘Increased, rapid deployment of wind, solar and batteries have made a positive impact,’ said Mark Olson, NERC’s manager of Reliability Assessments. ‘However, generator retirements continue to increase the risks associated with extreme summer temperatures, which factors into potential supply shortages in the western two-thirds of North America if summer temperatures spike.'”

This is not the first time we’re hearing that the power grid isn’t up to meeting demand for electricity. Nor is it the first time we’re told that renewable sources such as wind and solar are coming online more slowly than power-generation capacity based on fossil fuels is being retired.

Dwindling Power Plants

“The United States is heading for a reliability crisis,” Commissioner Mark C. Christie of the Federal Energy Regulatory Commission (FERC) told the Senate Committee on Energy & Natural Resources during a May 4 hearing. “I do not use the term ‘crisis’ for melodrama, but because it is an accurate description of what we are facing. I think anyone would regard an increasing threat of system-wide, extensive power outages as a crisis. In summary, the core problem is this: Dispatchable generating resources are retiring far too quickly and in quantities that threaten our ability to keep the lights on. The problem generally is not the addition of intermittent resources, primarily wind and solar, but the far too rapid subtraction of dispatchable resources, especially coal and gas.”

The federal Energy Information Administration foresees almost a quarter of coal generating capacity being retired by the end of the decade — a process already in progress. Just this month it announced “the United States will generate less electricity from coal this year than in any year this century.” Non-hydropower renewables are the only generating capacity sources really seen growing in the short-term.

In February of this year, PJM Interconnection, which manages grid operations in much of the eastern United States, warned of “increasing reliability risks … due to a potential timing mismatch between resource retirements, load growth and the pace of new generation entry.”

April 29, 2023

Justin can’t let Joe steal his thunder on this critical issue!

Justin Trudeau’s love of the vastly expensive and utterly useless virtue signal is almost unmatched among western leaders, but as Bruce Gudmundsson relates here, some of Joe Biden’s lower-echelon cronies in the ~~Pentagram~~ Pentagon have put up a virtue signal that will be very hard for Justin to top:

In the United States, the president enjoys the privilege of appointing 4,000 of his supporters to positions within the Executive Branch. When the president is a Democrat, the best connected of these invariably prefer perches in the vast social service bureaucracy, there to reign (but rarely rule) over like-minded civil servants.* Those with the fewest friends, alas, end up in the Pentagon.

I’m honestly surprised that the number of direct appointees is so low … I’d have guessed at least ten times that number. I was vaguely aware that the formal “spoils” system was broken up late in the 19th century, but the US federal government and its various arms-length agencies are several orders of magnitude larger than they were back then.

The appointees who suffer the latter fate know nothing of the work they supposedly supervise. Indeed, having been raised in homes in which there were “no war toys for Christmas”, they cannot distinguish a sailor from an airman, let alone explain the difference between a soldier and a Marine. What is worse, like impoverished Regency belles, obliged to spend the Season wearing last year’s frocks, Defense Department Democrats live in constant fear of losing caste.

With this in mind, it is not surprising that the aforementioned appointees embrace, with great enthusiasm, projects of the sort they can discuss at Georgetown cocktail parties. During the Obama years (2009-2017), many of these bore the brand of “green energy”. (No doubt, the appointees in question made much of the double entendre.) As might be expected, many of these programs went into hibernation during the presidency of Donald Trump (2017-2021), only to spring back to life after the inauguration (in 2021) of Joseph Biden.

In a recent post on his Substack, the indispensable Igor Chudov lays bare the folly of one of these initiatives. Part of the Climate Strategy unveiled by the US Army in 2022, this plan calls for the progressive replacement, over the course of twenty-eight years, of petroleum dependent cars, trucks, and tanks with their battery-powered counterparts.

I mean, on the plus side, it would mean that wars could only take place on sunny days (for solar-powered tanks) or windy days (for wind-powered tanks). The sheer stupidity of the notion would be laughable, except they really seem to be serious about military combat vehicles running on batteries recharged with solar cells, windmills, or unicorn farts. I’d call it peak Clown World, but it’s a safe bet that they can get even crazier without working up a sweat.

Searching for an appropriate graphic to go with this article, I found this gem at Iowa Climate Change from back in 2021:

Screencap from Iowa Climate Science, 14 Feb 2021

https://iowaclimate.org/2021/02/14/nato-chief-wonders-about-solar-powered-battle-tanks/

* Lest you think, Gentle Reader, that this post serves a partisan political purpose, I will mention that I am convinced that the one Republican political appointee with whom I am well-acquainted is a knucklehead of the first order. Indeed, if I ever manage to locate the proper forms, I intend to nominate him for a place of particular honor in the Knucklehead Hall of Fame.

April 27, 2023

It’s not environmentalism I object to, it’s environmentalists

I thoroughly agree with Tom Knighton here:

I tend to be pretty critical of environmentalism. It’s not that I don’t value things like clean air, clean water, and pristine land free of pollution. I actually do value all of those things. I actually care about the environment.

What I don’t care about, though, are environmentalists.

Much of my issue with them is that they don’t seem to recognize reality or, if they do, they just want everyone to have to pay more and make do with less.

Most environmentalists I’ve dealt with fail to understand one of the basic tenets of economics: There’s No Such Thing As A Free Lunch. Yes, we can make certain changes to how we do things to reduce our impact on the natural environment, but such changes are almost never free and sometimes the potential cost is significantly higher than any rational expectation of benefit from changing. Economics — and life in general — is all about the trade-offs. If you do X, you can’t do Y. If you specialize in this area, you can’t devote effort in that area, and so on. Time and materials limit what can be done and require a sensible way of deciding … and most environmentalists either don’t understand or refuse to accept this.

For example, take the electric cars that are being pushed so hard by environmentalists and their allies in the government. They’re not remotely ready to replace gas- or diesel-powered vehicles by any stretch of the imagination. They lack the range to really compete as things currently stand, and yet, what are we being pushed to buy?

Obviously, little of this is new. I wrote that post nearly two years ago and absolutely nothing has changed for either better or worse. Not on that front.

But there have been some changes, and they really show me why I’m glad I don’t describe myself as an environmentalist.

Actually, I take back a bit of my accusation that environmentalists don’t see the trade-offs: they do see some of them. They see things that you will have to give up to achieve their goals. That’s the kind of trade-off they’re eager to make.

Even if you don’t think climate change is real and manmade — I don’t, for example — I like the idea of clean, cheap sources of energy. Solar and wind aren’t going to produce all the electricity we need, but nuclear can.

Yet why do so many environmentalists focus on wind and solar? It can’t make what we need. It won’t replace coal power plants, especially with regard to reliability. Coal creates power when it’s overcast and when there’s no wind to speak of.

Nuclear can.

Nuclear, in fact, could create power on a fraction of the footprint, minimize pollution due to power creation, and do it safely. For all the fearmongering over nuclear power, there have been only two meltdowns in history — both of which were at facilities with reportedly abysmal safety records and one of which still needed a massive earthquake and tsunami to trigger it.

But wind and solar don’t just create “clean” energy. They also require Americans to make do with less.

That is the heart of the environmental movement. It’s not so much about saving the planet. If it’s not about a cult of personality, as Lights encountered, it’s about making people step backward in their standard of living.

April 13, 2023

Old and tired – “Conspiracy Theories”. The new hotness – “Coming Features”

Kim du Toit rounds up some not-at-all random bits of current events:

So Government — our own and furriners’ both — have all sorts of rules they wish to impose on us (and from here on I’m going to use “they” to describe them, just for reasons of brevity and laziness — but we all know who “they” are). Let’s start with one, pretty much picked at random.

They want to end sales of vehicles powered by internal combustion engines, and make us all switch to electric-powered ones. Leaving aside the fact that as far as the trucking industry is concerned, this can never happen no matter how massive the regulation, we all know that this is not going to happen (explanation, as if any were needed, is here). But to add to the idiocy, they have imposed all sorts of unrealistic, nonsensical and impossible deadlines to all of this, because:

There isn’t enough electricity — and won’t be enough electricity, ever — to power their future of universal electric car usage. Why is that? Well, for one thing, they hate nuclear power (based on outdated 1970s-era fears), are closing existing ones and will not allow new ones to be built by dint of strangling environmental regulation (passed because of said 1970s-era fears). Then, to add to that, they have forced the existing electricity supply to become unstable by insisting on unreliable and variable generation sources such as solar and wind power. Of course, existing fuel sources such as oil, coal and natural gas are also being phased out because they are “dirty” (they aren’t, in the case of natgas, and as far as oil and coal are concerned, much much less so than in decades past) — but as with nuclear power, the rules are being drawn up as though old technologies are still being used (they aren’t, except in the Third World / China — which is another whole essay in itself). And if people want to generate their own electricity? Silly rabbits: US Agency Advances New Rule Targeting Portable Gas-Powered Generators. (It’s a poxy paywall, but the headline says it all, really.)

So how is this ~~pixie dust~~ “new” electricity to be stored? Why, in batteries, of course — to be specific, in lithium batteries which are so far the most efficient storage medium. The only problem, of course, is that lithium needs to be mined (a really dirty industry) and even assuming there are vast reserves of lithium, the number of batteries needed to power a universe of cars is exponentially larger than the small number of batteries available — but that means MOAR MINING which means MOAR DIRTY. And given how dirty mining is, that would be a problem, yes?

No. Because — wait for it — they will limit lithium mining, also by regulation, by enforcing recycling (where have we heard this before?) and by reducing battery size.

Now take all the above into consideration, and see where this is going. Reduced power supply, reduced power consumption, reduced fuel supply: a tightening spiral, which leads to my final question:

JUST HOW DO THEY THINK THIS IS ALL GOING TO END?

If there’s one thing we know, it’s that increased pressure without escape mechanisms will eventually cause explosion. It’s true in physics, it’s true in nature and it’s true, lest we forget, in humanity.

Of course, as friend-of-the-blog Severian often points out, these people think Twitter is real life. Of course there’ll be enough pixie dust to sprinkle over all their preferred solutions to make them come true. Reality is just a social construct — they learned that in college, and believe it wholeheartedly.

March 22, 2023

California – Embrace the Unicorn! No, the other Unicorn!

Chris Bray on California’s hoped-for path to transition away from fossil fuels:

California has the progressive vision to go all-electric, with laws and regulations that phase out gas water heaters, furnaces, and cars in the near future — while transitioning away from the production of electricity through the use of nuclear and natural gas technologies. Putting the teensy-weensy engineering questions aside and embracing the unicorn, this deep blue vision of the future means the state needs to build wind and solar power facilities in massive quantities, and now. California currently has about half the power it needs to do what it says it plans to do in just ten years.

But President Joe Biden just announced a move that aggressively advances the progressive vision of preserving wilderness and preventing development, naming the Avi Kwa Ame National Monument in the Mojave Desert — preventing solar and wind development on 506,814 acres of lightly populated and federally owned desert land. The coalition of tribal and community groups that has worked to get the national monument designated make the anti-solar point explicit on their website:

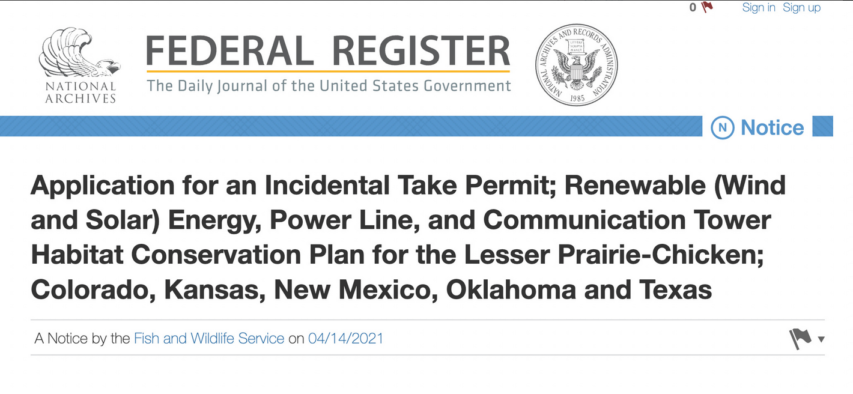

Where progressive coastal urbanites see the progressive project of decarbonization, people in wilderness-adjacent areas see industrial blight, and express that view in the language of progressive conservationism. To a degree not explored by the false binary of media-defined American politics, in which good people on the left face bad people on the right, California’s future is an increasingly sharp conflict between left and left — and note what kind of permits energy companies have to get to build big wind and solar plants in the desert:

When progressive California builds its progressive energy infrastructure, it’s going to incidentally take a shit-ton of bighorns and tortoises and birds. I assume I don’t have to explain the euphemism.

March 21, 2023

The reason there are no EV charging facilities along the Interstate Highway System

Jon Miltimore explains why a 1956 law prohibits the installation of EV charging bays anywhere on the Interstate:

In 1956, Ike signed into law a bill — the Federal-Aid Highway Act — that paved the way (pun intended) for the interstate highway system, which included rest areas at convenient locations.

While there were numerous problems with the legislation, a relatively minor one was that it created strict limits on what could be sold at these rest stops. Today, federal law limits commercial sales to only a few items (including lottery tickets), the Verify team found. When President Joe Biden rolled out a $5 billion funding plan for states to create EV charging stations, he neglected to carve out a commercial exemption for EVs.

“You would be paying for that energy”, Natalie Dale of the Georgia Department of Transportation told WXIA-TV Atlanta. “That would count as commercialized use of the right-of-way and therefore not allowed under current federal regulations.”

If you think this sounds like an inauspicious roll out to the massive federal EV program, you’re not wrong.

Allowing drivers to charge their EVs at convenient, familiar locations that already exist along interstate highways is a no-brainer — yet this simple idea eluded lawmakers in Washington, DC.

Unfortunately, it illustrates a much larger problem with the top-down blueprint central planners are using to create their EV charging station network.

“We have approved plans for all 50 States, Puerto Rico and the District of Columbia to help ensure that Americans in every part of the country … can be positioned to unlock the savings and benefits of electric vehicles”, Transportation Secretary Pete Buttigieg said in a 2022 statement.

While it’s good the DOT isn’t trying to single-handedly map out the locations of thousands of EV charging stations across the country, there’s little reason to believe that state bureaucrats will be much more efficient. A review of state plans reveals a labyrinth of rules, regulations, and stakeholders dictating everything from the maximum distance of EV stations from highways and interstates to the types of charging equipment stations can use to the types of power capabilities charging stations must have.

The primary reason drivers enjoy the great convenience of gasoline stations across the country — there are some 145,000 of them today — is that they rely on market forces, not central planning. Each year hundreds of new filling stations are created, not because a bureaucrat identified the right location but because an entrepreneur saw an opportunity for profit.

March 7, 2023

How Would a Nuclear EMP Affect the Power Grid?

Practical Engineering

Published 8 Nov 2022

How a nuclear blast in the upper atmosphere could disable the power grid.


February 13, 2023

Appliance futility by design

Tal Bachman recounts a miserable — but increasingly common — experience with modern “energy efficient” home appliances:

“A-rated energy appliances” by Tom Raftery is licensed under CC BY-NC-SA 2.0

The LG 5.8 cubic foot Capacity Top Load Washer sat in the laundry room, brand new. Maybe it was my imagination, but it looked insouciant.

Dad said it was the latest and greatest in laundering technology. Supposedly, some sort of internal sensor system (having something to do with a computer) fine-tuned water levels depending on clothing weight. Or something. I can’t remember exactly what he — or was it the moving guy? — said.

I did notice the washing machine had several preset wash cycles — Allergiene, Sanitary, Bright Whites, Towels, Heavy Duty, Bedding, and more. You could select them with a shiny, space-age-looking chrome dial. (I would later discover the machine had other fancy features with names like TurboWash™ 360, ENERGY STAR® Qualified, Smart Diagnosis™, and ThinQ™ Technology [Wi-Fi Enabled]).

[…]

Well, it was win-win-win, with a minor caveat. The caveat was the washing machine. Turns out that for all its razzle-dazzle features, it didn’t actually clean clothes. Even worse, it took hours to not clean clothes. The “Allergiene” cycle, for example, took almost four hours. Yet when you pulled your clothes out, you could still make out the orange juice or tomato sauce stains. I’d never encountered a more useless washing machine.

“How you feeling about this new washing machine?”, I asked Dad, a few days after the hunkering down began.

“Great!”, said Dad.

Okay, I thought. That’s not unusual. Music — as opposed to the mundane or practical — occupies most of Dad’s awareness, and always has. Besides, most of his clothes are black, and he probably hasn’t noticed it’s not removing the ketchup stains. Maybe he will in a few weeks.

And maybe in the meantime, I thought, I could figure out a way to reprogram the machine for cycles which actually washed. And were faster.

But no. That turned out to be way too much to hope for. The machine allowed no independent control over water volume, cycle time, or water temperatures. It only allowed selection of a preset computerized cycle — none of which got your clothes clean.

[…]

Yet more irritating was the reason it skimped on water and power: it was trying to stop global warming. Oops — I mean “climate change”. It was “environmentally friendly”. Except it wasn’t, because you usually had to run at least two cycles to get your clothes clean. That’s right: you had to use the same amount of water in the end anyway, and double the electricity.

And so — not for the first time — I had stumbled upon yet another example of technological “progress” which exacerbated the very (pseudo) problem it purported to solve. The new useless LG “Save the World!” piece of garbage was the home equivalent of Hollywood stars taking private jets to a carbon reduction conference in Switzerland.

[…]

The US Department of Energy, I discovered, had begun imposing energy efficiency regulations in the early 1990s. A decade later, they made the regulations even stricter (see here also). Then, as the years passed, they made them even stricter. And then stricter. And then stricter. All the while, the feds offered appliance manufacturers huge tax incentives — i.e., huge cash rewards — to accelerate their phase out of functional washing machines.

Government succeeded. Today, minus the loophole-exploiting Speed-King (which the feds will probably crush soon), you cannot find a new washing machine — front- or top-loading — which washes clothes anywhere near as well as its predecessors. The rationale for this — saving the world from global warming — doesn’t even rise to the level of ludicrous. Just for starters, as I type this, we’re enduring one of the coldest winters ever recorded. New Hampshire’s Mount Washington Observatory just recorded a wind chill calculation of minus 109 degrees Fahrenheit, an all-time record for the United States (and approaching midway between the average temperatures of Jupiter and Mars). Temperatures are thirty degrees Fahrenheit colder than average in many places. Why would anyone want to bring temperatures down even further? And at the cost of destroying washing machine functionality? And what loon could actually believe home washing machines change the climate?

In any case, thanks to an essentially totalitarian government run by bought-and-paid-for liars, control freaks, and imbeciles, we have gone technologically backward — certainly in the appliance domain, but in others — for no good reason at all. (Regulations have also downgraded dishwashers, toilets, showers, and other appliances, but we can discuss those another time.)

Back in 2019, Sarah Hoyt expressed her frustrations with “modern” “energy-efficient” appliances which matched our experiences exactly.

November 12, 2022

Climate imperialism

Michael Shellenberger on the breathtaking hypocrisy of first world nations’ rhetoric toward developing countries’ attempts to improve their domestic energy production:

What’s worse, global elites are demanding that poor nations in the global south forgo fossil fuels, including natural gas, the cleanest fossil fuel, at a time of the worst energy crisis in modern history. None of this has stopped European nations from seeking natural gas to import from Africa for their own use.

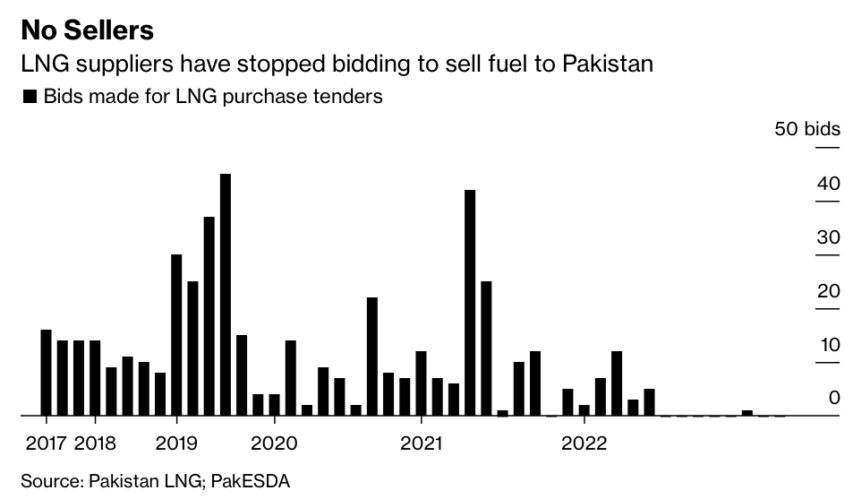

Rich nations have for years demanded that India and Pakistan not burn coal. But now, Europe is bidding up the global price of liquified natural gas (LNG), leaving Pakistan forced to ration limited natural gas supplies this winter because Europeans — the same ones demanding Pakistan not burn coal — have bid up the price of natural gas, making it unaffordable.

At last year’s climate talks, 20 nations promised to stop all funding for fossil fuel projects abroad. Germany paid South Africa $800 million to promise not to burn coal. Since then, Germany’s imports of coal have increased eight-fold. As for India, it will need to build 10 to 20 full-sized (28 gigawatts) coal-fired power plants over the next eight years to meet a doubling of electricity demand.

This is climate imperialism. Rich nations are only agreeing to help poor nations so long as they use energy sources that cannot lift them out of poverty.

Consider the case of Norway, Europe’s second-largest gas supplier after Russia. Last year it agreed to increase natural gas exports by 2 billion cubic meters, in order to alleviate energy shortages. At the same time, Norway is working to prevent the world’s poorest nations from producing their own natural gas by lobbying the World Bank to end its financing of natural gas projects in Africa.

The IMF wants to hold hostage $50 billion as part of a “Resilience and Sustainability Trust” that will demand nations give up fossil fuels and thus their chance at developing. Such efforts are working. On Thursday, South Africa received $600 million in “climate loans” from French and German development banks that can only be used for renewables. The Europeans hope to shift the $7.6 billion currently being invested by South Africa in electricity infrastructure away from coal and into renewables.

Celebrities and global leaders say they care about the poor. In 2019, the Duchess of Sussex, Meghan Markle, Prince Harry’s wife, told a group of African women, “I am here with you, and I am here FOR you … as a woman of color.” Why, then, are they demanding climate action on their backs?

October 25, 2022

A Multi-Trillion Dollar Pipe Dream

PragerU

Published 16 Jun 2022

Are we heading toward an all-renewable energy future, spearheaded by wind and solar? Or are those energy sources wholly inadequate for the task? Mark Mills, Senior Fellow at the Manhattan Institute and author of The Cloud Revolution, compares the energy dream to the energy reality.
