We try to explain AI alignment by analogy to human alignment. Evolution “created” humans. Its “goal” is for humans to spread their genes by (approximately) having as many children as possible. It couldn’t directly communicate that goal to humans – partly because it’s an abstract concept that can’t talk, and partly because for most of biological history it was working with lemurs and ape-men who couldn’t understand words anyway. Instead, it tried to give us instincts that align us with that goal. The most relevant instinct is sex: most humans want to have sex, an action that potentially results in pregnancy, childbearing, and genes being spread to the next generation. This alignment strategy succeeded well enough that human populations remain high as of 2023.

We’ve talked before about a major failure: humans can invent contraception. Evolution’s main alignment strategy was totally unprepared for this. It made us interested in a certain type of genital friction, which was a good proxy for its goal in the ancestral environment. But once we became smarter, we got new out-of-training-distribution options available, and one of those was inventing contraception so that we could get the genital friction without the kids. This is a big part of why average-children-per-couple is declining from 8+ in eg pioneer times to ~1.5 in rich countries today, even though modern rich people have more child-rearing resources available than the pioneers.
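Here is a toy sketch of that proxy failure. Everything in it is made up for illustration (the action names, the payoff numbers, the `best_action` helper); the only point is that an agent optimizing the proxy serves the goal when the option set is the ancestral one, and stops serving it once a new option appears.

```python
from typing import Dict, NamedTuple


class Action(NamedTuple):
    proxy: float  # what the agent was built to want
    goal: float   # what the designer actually cares about
    cost: float   # burden the agent bears for taking the action


def best_action(options: Dict[str, Action]) -> str:
    """Pick the option maximizing proxy minus cost; the agent never sees `goal`."""
    return max(options, key=lambda name: options[name].proxy - options[name].cost)


# Hypothetical numbers: in the ancestral option set, proxy and goal go together.
ancestral = {
    "procreative_sex": Action(proxy=1.0, goal=1.0, cost=0.3),
    "abstain": Action(proxy=0.0, goal=0.0, cost=0.0),
}
# The modern option set adds one out-of-distribution action: full proxy, no goal.
modern = {**ancestral, "sex_with_contraception": Action(proxy=1.0, goal=0.0, cost=0.0)}

for label, env in [("ancestral", ancestral), ("modern", modern)]:
    choice = best_action(env)
    print(label, choice, "goal payoff:", env[choice].goal)
```

The agent's decision rule is identical in both environments; only the menu changed.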

Another major alignment failure is porn. Giving evolution a little more credit, it didn’t just make people want genital friction – if that had been the sole imperative, we would have died out as soon as someone invented the dildo/fleshlight. People want genital friction associated with attractive people and certain emotions relating to complex relationships. But now we can take pictures of attractive people and write stories that evoke the complex emotions, while using a dildo/fleshlight/hand to provide the genital friction, and that does substitute for sex pretty well. There’s still debate over whether porn makes people less likely to go out and form real relationships, but it’s at least plausibly another factor in the rich-country fertility decline. At the very least it doesn’t scream “well-thought-out alignment strategy robust to training-vs-deployment differences”.

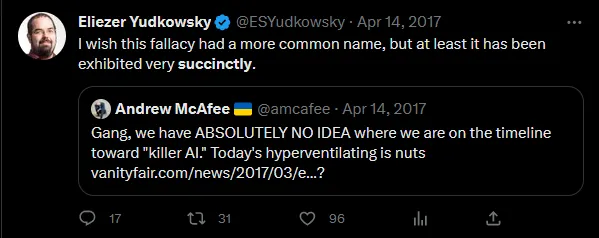

But these are boring examples. These are like 2015-level alignment concerns, from back when we thought the big problem was AIs seizing control of their reward centers or something. I think we might genuinely be able to avoid problems shaped like these. Unlike evolution, which had to work with lemurs, even weak GPT-level modern AIs are able to understand language and complicated concepts; we can tell them to want children instead of using genital friction as a proxy. 2023 alignment concerns are more about failed generalization – that is, about fetishes.

Evolution’s alignment problem isn’t just that humans have learned to satiate their libido in ways other than procreative sex. It’s that some humans’ libidos are fundamentally confused. For example, some men, instead of wanting to have sex with women, mostly want to spank them, or be whipped by them, or kiss their feet, or dress up in their clothes. None of these things are going to result in babies! You can’t trivially blame this on the shift from training to deployment (ie from the environment of evolutionary adaptedness to the modern world) – women had feet in the ancestral environment too. This is a different kind of failure.

Here’s a simple story of fetish formation: evolution gave us genes that somehow unfold into a “sex drive” in the brain. But the genome doesn’t inherently contain concepts like “man”, “woman”, “penis”, or “vagina”. I’m not trying to make a woke point here: the genome is just a bunch of the nucleotides A, T, C, and G in various patterns, but concepts like “man” and “woman” are learned during childhood as patterns of neural connections. We assume that the nucleotides are a program telling the body to do useful things, but that has to be implemented through deterministic pathways of proteins, and the brain’s neural connections are too complex to trivially influence that way (see here for more). The genome probably contains some nucleotides that are supposed to refer to the concepts “man” and “woman” once the brain gets them, but there are a lot of fallible proteins in between those two levels.

So the simple story of fetish formation is that the genome contains some message written in nucleotides saying “have procreative sex with adults of the opposite sex”, some galaxy-brained Rube Goldberg plan for translating that message into neural connections during childhood or adolescence, and sometimes the plan fails. Here are some zero-evidence just-so-story speculations for how various fetishes might form, more to give you an idea of what I’m talking about than because I claim to have useful knowledge on this topic:

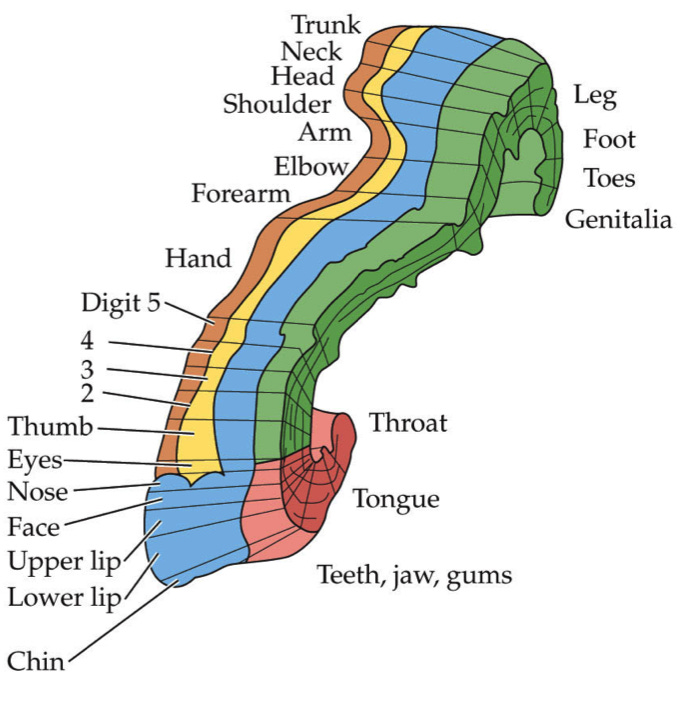

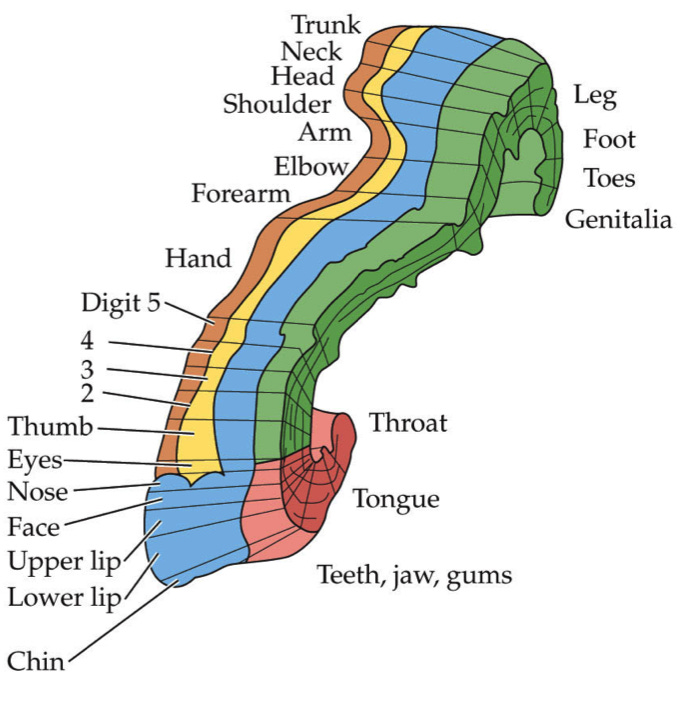

- Foot fetish: On the somatosensory cortex, the area representing the feet is right next to the area representing the genitalia. If the genome includes an “address” for the genitalia, plus the instructions “have sexual urges towards this”, then getting the address slightly wrong will land you in the feet.

A reasonable next question would be “what’s on the other side of the genitalia, and do people also have fetishes about that one?” The answer is “the somatosensory cortex is a line with the genitalia at the far end, because God is merciful and didn’t want there to be a second thing like foot fetishes.”

(source for cortex image)

- Spanking: From the male point of view, penetrative PIV sex involves applying force to the bottom half of a woman, at rhythmic intervals, in a way that causes her very intense emotions and makes her moan and scream. Spanking is exactly like this, and most kids encounter spanking at a very early age and sex only after they’re much older. If the evolutionary message is something like “find the concept that looks vaguely like this, then be into it”, spanking is the first concept like that most people will find; by the time they learn about actual sex, spanking might be a trapped prior.

- Sadomasochism: Sex is painful for virgins, can be mildly painful even for some non-virgins, and when it’s pleasurable, it still looks a lot like pain (screams, intense emotions). Imagine you are a little boy/girl who stumbles in on your parents having sex. Your father is impaling the most sensitive part of your mother’s body, and your mother is moaning and squealing. A natural generalization might be “sex is the thing where a man causes a woman pain”.

- Latex/rubber: Plausibly the evolutionary specification includes details about attractiveness. Attractive people (ie those you should be most interested in having babies with) should be young and healthy (characteristics associated with better pregnancy outcomes, especially in the high-risk ancestral environment). The simplest sign of youth and good health is smooth skin, so the evolutionary message might say something about preferring sex with smooth-skinned people. Latex is a superstimulus for smooth skin, and maybe if you see it at the right time, in the right situation, it can totally overwhelm the rest of the message.

- Urine/scat: Procreative sex involves a sticky substance that comes out of the genitals; it doesn’t take much misgeneralization to get to other sticky substances that come out of the genitals or nearby regions.

- Bondage/domination/submission: Okay, I admit I don’t have a good just-so explanation for this one. Maybe it’s more psychological – people who have been told that sex is shameful can only fully appreciate it if they feel like a victim who’s been forced into it (and so carries no guilt). And people who have been told they’re undesirable and nobody could ever really love them can only fully appreciate it if their partner is a victim who has no choice in the matter.

- Furries: This has to be because of all the cute cartoon animals, right? But why do some people sexually imprint on them? I found this article on worshippers of the 1990s cartoon mouse Gadget helpful here. Gadget obviously has many desirable characteristics: she’s a very cute nerdy woman who sometimes ends up in damsel-in-distress situations. Maybe she is the most sexualized being that some six-year-old boys have encountered. When I watched Rescue Rangers as a six-year-old, I could feel my brain trying to figure out whether to have a crush on her before deciding that no, it was too deep in latency stage. I assume most people who get their first crushes on Gadget or some other desirable cartoon character end up with their brains later generalizing properly to “I like cute nerdy women in damsel-in-distress situations”, but a small minority misgeneralize to “nope, I’m only attracted to mice now, that’s where I’m going to go with this.”

Combine this with equivalent animal “fetishes” (things like beetle species where the females have red dots on their backs, and the males try to mate with anything that has a red dot) and you get a picture where evolution tries to communicate a lot of contingent features of sex in the hopes that one of them will stick, then tells you to be attracted to whatever is most associated with those features. At least for men, I think the features communicated in the genomic message are simple things like curves and thrusting and genitals and smooth skin, plus something that somehow picks out the concept of “woman” (except in 3% of the male population, where it picks out the concept of “man” instead, plus another 3% where it doesn’t pick out a sex at all).

Real procreative sex usually matches enough features of the genomic message to be attractive to most people, but if the original triggers were associated with some contingent characteristics, the brain might misinterpret those as part of the target: for example, if the trigger was a cartoon animal, the brain might think the target includes cartoon animals.

Other times, something that isn’t procreative sex matches the genomic message closely enough to be misinterpreted as the center of the target (eg getting whipped); usually procreative sex is somewhere in the target space, but maybe not the exact center, and a few people have such strong fetishes that procreative sex doesn’t register as erotic at all.
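A toy version of this imprinting story, using the beetle example because it is the simplest. This is a sketch under invented assumptions, not a model of real brains or real beetles: the feature names, the `learn_target` rule (“imprint on the first thing that contains every innate cue, and absorb its contingent features too”), and the `match` score are all hypothetical.

```python
from typing import FrozenSet, Iterable, Optional

# Hypothetical innate cue: the only thing the "genomic message" specifies.
MESSAGE: FrozenSet[str] = frozenset({"red_dot"})


def learn_target(experiences: Iterable[FrozenSet[str]]) -> Optional[FrozenSet[str]]:
    """Imprint on the first experience containing every innate cue,
    absorbing its contingent features into the learned target."""
    for features in experiences:
        if MESSAGE <= features:
            return features
    return None


def match(target: FrozenSet[str], candidate: FrozenSet[str]) -> float:
    """Fraction of the learned target's features that the candidate has."""
    return len(target & candidate) / len(target)


# Made-up objects described by made-up features.
female_beetle = frozenset({"red_dot", "beetle_shaped", "moves"})
painted_pebble = frozenset({"red_dot", "round", "glossy"})
leaf = frozenset({"green", "flat"})

# Same innate message, different order of early experiences.
typical = learn_target([leaf, female_beetle, painted_pebble])
unusual = learn_target([leaf, painted_pebble, female_beetle])

assert typical is not None and unusual is not None
print("typical:", match(typical, female_beetle), match(typical, painted_pebble))
print("unusual:", match(unusual, female_beetle), match(unusual, painted_pebble))
```

In the second ordering the real beetle still overlaps with the learned target (it has the red dot), so it stays somewhere in the target space, just not at the center.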

The process of forming the category “sexually attractive things” is just a special case of the process of forming categories at all. I discuss the formation of categories like “happiness” and “morality” in The Tails Coming Apart As Metaphor For Life. Society feeds us some labeled data about what is good or bad: for example, we might see someone commit murder on TV, and our parents tell us “No! That’s bad! Don’t do that!” (and the other TV characters hate and punish that character). Then we try to extrapolate such incidents to a broader moral system. If we’re philosophers, we might go further and try to formally describe that moral system, eg Kantianism, utilitarianism, divine command theory, natural law, etc. All of these correctly predict the training data (eg “murder is bad”) while having different opinions on out-of-distribution environments. Which one you choose is just a function of some kind of mysterious intellectual preference for how to generalize inherently ungeneralizable things, what I previously described as “extrapolating a three-dimensional shape from its two-dimensional reinforcement-learning shadow”.
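The “same training data, different extrapolation” point has a very small numerical analogue. The two functions below are arbitrary stand-ins I picked for illustration (they have nothing to do with any actual moral theory): they agree on every training input and disagree as soon as you step outside the training range.

```python
# Two hypothetical "theories" that fit the same training data perfectly.

def theory_a(x: float) -> float:
    return x


def theory_b(x: float) -> float:
    return x ** 3


# The only inputs either theory was ever checked against.
training_inputs = [-1.0, 0.0, 1.0]

agree_in_distribution = all(theory_a(x) == theory_b(x) for x in training_inputs)

# One step outside the training range, the theories come apart.
out_of_distribution = 2.0
disagreement = theory_b(out_of_distribution) - theory_a(out_of_distribution)

print("agree on training data:", agree_in_distribution)
print("predictions at x=2:", theory_a(out_of_distribution), theory_b(out_of_distribution))
```

Nothing in the training data favors one function over the other; any preference between them has to come from somewhere else.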

Fetishes are the same way. Here the evolutionary message provides semi-labeled data, giving people weird feelings when they see certain kinds of curvy, smooth-skinned people. Then people try to generalize that into an idea of what’s sexy. Usually their category is centered (in the sense that the category “bird” is centered around “sparrow” and not “ostrich”) around something close to procreative heterosexual sex. Other times they generalize in some very unexpected way, and are only attracted to cartoon mice. I think if we understood the laws of generalization, this would make sense. It would seem like a reasonable mistake that someone using Occam’s Razor and all the rest of the information-theoretic toolkit for generalization could make. But we don’t really understand those laws beyond faint outlines, so instead we’re reduced to YKINMKBYKIOK.