Forgotten Weapons

Published Feb 14, 2024

The Patchett Machine Carbine Mk I is the predecessor to the Sterling SMG. It was developed by George William Patchett, who was an employee of the Sterling company. At the beginning of the war, Sterling was making Lanchester SMGs, and in 1942 Patchett began working on a new design that was intended to be simpler, cheaper, and lighter than the Lanchester. He used the receiver tube dimensions from the Sten and the magazine well and barrel shroud from the Lanchester. His first prototypes were ready in 1943, but it wasn’t until early 1944 that the British government actually issued a requirement for a new submachine gun to replace the Stens in service.

The initial Patchett guns worked very well in early 1944 testing, which continued into 1945. It ultimately came out the winner of the trials, but they didn’t conclude until World War Two was over — and nothing was adopted because of the much-reduced need for small arms. Patchett continued to work on the gun, and by 1953 he was able to win adoption of it in the later Sterling form — which is a story for a separate video.

The Patchett was not used in any significant quantity in World War Two. At most, a few of them may have been taken on the parachute drops on Arnhem — there are specifically three trials guns which appear referenced in British documents before Arnhem, but are never mentioned afterwards (numbers 67, 70, and 72). Were they taken into the field? We really don’t know.

May 21, 2024

Patchett Machine Carbine Mk I: Sten Becomes Sterling

May 20, 2024

The economic distortions of government subsidies

The Canadian federal and provincial governments are no strangers to the (political) attractions of picking winners and losers in the market by providing subsidies to some favoured companies at the expense not only of their competitors but almost always of the economy as a whole, because the subsidies almost never produce the kind of economic return promised. The current British government has also been seduced by the subsidies game, as Tim Congdon writes:

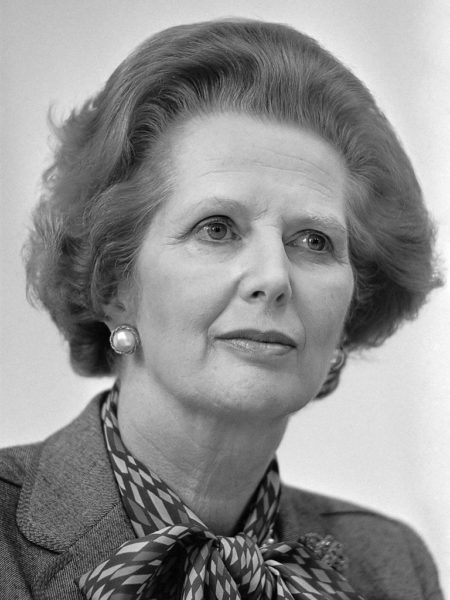

Former British Conservative Prime Minister Margaret Thatcher in 1983. She was in office from May 1979 to November 1990.

Photo via Wikimedia Commons.

Why do so many economists support a free market? By the phrase they mean a market, or even an economy dominated by such markets, where the government leaves companies and industries alone, and does not try to interfere by “picking winners” and subsidising them. Two of the economists’ arguments deserve to be highlighted.

The first is about the good use — the productivity — of resources. To earn a decent profit, most companies have to achieve a certain level of output to attract enough customers and to secure high enough revenue per worker.

If the government decides to give money to a favoured group of companies, these companies can survive even if they produce less, and obtain lower revenue per worker, than the others. The subsidisation of a favoured group of companies therefore lowers aggregate productivity relative to a free market situation.

In this column last month I compared the economically successful 1979–97 Conservative government with the economically unsuccessful 2010–2024 Conservative government, which is now coming to an end. In the context it is worth mentioning that Margaret Thatcher and her economic ministers had a strong aversion to government subsidies of any kind.

According to Professor Colin Wren of Newcastle University’s 1996 study, Industrial Subsidies: the UK Experience, subsidies were slashed from £5 billion (in 1980 prices) in 1979 to £0.3 billion in 1990. (In today’s prices that is from £23 billion to under £1.5 billion.)

Thatcher is controversial, and she always will be. All the same, the improvement in manufacturing productivity in the 1980s was faster than before in the post-war period and much higher than it has been since 2010. Further, one of Thatcher’s beliefs was that if the private sector refuses to pursue a supposed commercial opportunity, the public sector most certainly should not try to do so.

Such schemes as HS2 and the Hinkley Point nuclear boondoggle could not have happened in the 1980s or 1990s. They will result in pure social loss into the tens of billions of pounds and will undoubtedly reduce the UK’s productivity.

But there is a second, and also persuasive, general argument against subsidies and government intervention in industry. An attractive feature of a free market policy is its political neutrality. Because market forces are to determine commercial outcomes, businessmen are wasting their time if they lobby ministers and parliamentarians for financial aid.

Honest and straightforward tax-paying companies with British shareholders are rightly furious if they see the government channelling revenues towards other companies who have access to the right politicians and friendly civil servants. By definition, the damage to the UK’s interests is greatest if the recipients of government largesse are foreign.

May 19, 2024

Kamikazes versus Admirals! – WW2 – Week 299 – May 18, 1945

World War Two

Published 18 May 2024

The kamikaze menace continues unabated, with suicide flyers hitting not one but two admirals’ flagships. There’s plenty of fighting on land, though, as the Americans advance on Okinawa and take a dam on Luzon to try and solve the Manila water crisis, but even after last week’s German surrender there is also still scattered fighting in Europe.

Chapters

01:34 The Battle of Poljana

06:32 American Advances on Okinawa

10:37 Kamikazes Versus the Admirals

13:58 The Battle for Ipo Dam

19:39 Soldiers Must Go From Europe to the Pacific

23:16 Summary

23:38 Conclusion

25:50 Call to Action

May 18, 2024

Glory Days of the Kamikaze! – Operation Kikusui

World War Two

Published 17 May 2024

During the Battle of Okinawa, the Japanese see the opportunity to cripple the core of the Allied navies. With their conventional air and naval forces unable to challenge the Allies, the Japanese unleash a wave of mass Kamikaze attacks. Hundreds of suicide pilots smash their aircraft into the Allied fleet. This is Operation Kikusui.

HMS Victory: Returning Nelson’s flagship to her former glory

Forces News

Published Feb 10, 2024

HMS Victory is undergoing a massive restoration and conservation programme costing around £45m.

Lord Nelson’s flagship at the Battle of Trafalgar is being stripped right back and having all the rotten wood removed.

Forces News was given exclusive access to the ship, preserved for all to enjoy at the National Museum of the Royal Navy in Portsmouth, to see the progress that’s being made.

May 15, 2024

At least one of Queen Victoria’s PMs thought her “very wilful and whimsical, like a spoilt child”

In The Critic, Jonathan Parry reviews Queen Victoria and Her Prime Ministers: A Personal History by Anne Somerset:

In 1875, Queen Victoria sent Benjamin Disraeli a long and querulous letter “about vivisection, which she insists upon my stopping, as well as the theft of ladies’ jewels”. Similar heated and impracticable demands might arrive on his prime ministerial desk several times a day. He was not alone in thinking her “very wilful and whimsical, like a spoilt child”.

After the Conservative ministry’s defeat at the 1892 general election, Victoria complained it was “a defect in our much famed constitution to have to part with an admirable government like Lord Salisbury’s for no question of any importance or any reason, merely on account of the number of votes”.

Victoria’s outbursts to, and about, her ten prime ministers over the 64 years of her reign provide the meat of Anne Somerset’s book. Most of her letters were extremely forthright; some were endearing; not a few seem demented. She found disturbances to her comfort or routine particularly intolerable, such as ministerial crises which erupted in Ascot week or during one of her pregnancies.

Somerset’s approach is exhaustive and chronological. Gluttons for Victorian political history will probably enjoy it; she writes well and authoritatively, though could be more concise. Over nearly 600 pages, the effect of this torrent of royal complaint is overwhelming. It’s easy to see why a shaken Bismarck stuttered, “Mein Gott! That was a woman!” after his only audience with Victoria in 1888.

The book is presented as a “personal history” of the exchanges between her and her premiers. Most readers will sympathise with the men who had to manage her tactfully; many will wonder why they put up with it.

Yet they put up with it because of the principles at stake, which a “personal” account cannot bring out properly. Beneath the excitable phrases and endless underlining, Victoria’s correspondence doggedly promoted a coherent policy. She fought to maintain the authority of the Crown within the constitution, seeing it as essential for effective government. Her worry was that popular pressure would destabilise politics, through extra-parliamentary agitation but also through parliamentary organisation. So she was very suspicious of political parties, which she saw as factional agencies whose populist demands would disrupt the constitutional status quo.

Politically she remained a Hanoverian monarch: she believed the Crown should manage parliament through ministers chosen for their competence, loyalty and patriotism, not their commitment to popular causes. She even tried (unsuccessfully) to glean information on internal cabinet arguments so she could play her ministers off against each other, a trick used by her Georgian predecessors until the cabinet managed to assert collective responsibility in the 1820s.

Fiji in World War Two: the Momi Bay Gun Battery

Forgotten Weapons

Published Feb 3, 2024

When the clouds of World War Two began to loom in the 1930s, Britain decided to begin securing some of its more distant colonial outposts, places that might be of strategic importance in a future conflict. Fiji was one of these outposts, a vital point on the seagoing supply line from Europe and the Americas to Australia and Asia. Construction of coastal defense batteries began in the late 1930s, mostly using 6 inch MkVII naval guns. These batteries were constructed around the capital of Suva and the airfield at Nadi on the west side of the island.

Today we are at the Momi Bay Battery, just south of Nadi. This emplacement has been restored and is now maintained as a public museum site by the Fijian government. It houses two 6 inch guns (the King’s Gun and the Queen’s Gun, colloquially), and originally also included an optical rangefinder and various command and control buildings. It had a range of about 8 miles, and controlled one of the few natural approaches to western Fiji.

The guns here were only fired in anger once, and that was actually at an unidentified sonar contact in the Bay. No evidence of an enemy vessel was ever found, and it ended up just being a brief reconnaissance by fire, so to speak. By later in the war, the threat of Japanese invasion had passed, but Fiji remained an active part of the war effort, as a transportation hub and a site for soldiers to get some R&R outside of combat duties. This led to the creation of the successful tourist economy which remains vibrant today on the island.

May 14, 2024

QotD: Sporting songs

[A]ll the great football songs are by Americans — Rodgers and Hammerstein (“You’ll Never Walk Alone”) and Livingston and Evans, whose “Que Sera, Sera” has a British lyric of endearing directness:

Mi-illwall, Millwall

Millwa-all, Millwall, Millwall

Millwa-all, Millwall, Millwall

Mi-illwall, Millwall.

(Repeat until knife fight)

Mark Steyn, “Hyperpower”, Daily Telegraph, 2002-06-22.

May 13, 2024

Archaeological Publishing – the unpalatable truth

Classical and Ancient Civilization

Published May 11, 2024

Some anecdotes about publishing archaeological sites

Roman Legions – Sometimes found all at sea!

Drachinifel

Published Feb 2, 2024

Today we take a quick look at some of the maritime highlights of the new special exhibition at the British Museum about the Roman Legions:

https://www.britishmuseum.org/exhibit…

QotD: Of course, they could try just … acting

In any case, the demand that actors should play only those parts that are somehow consonant with what we now call their “lived experience” is self-evidently absurd. If taken seriously, Richard III would have to be played by a member of the Royal Family (Prince Andrew, perhaps?), for only such a person could know or imagine what it was like to be a royal person and covet the crown. Taken to its logical conclusion, or its reductio ad absurdum, the argument would mean that the only person an actor could play was him- or herself.

Of course, a happy medium exists, though we are increasingly unable to find it. We should not expect Ophelia to be played by a 90-year-old crone. We should not add difficulties in the way of an audience’s “willing suspension of disbelief”, as Coleridge put it, by casting a tall man as short or a short man as tall.

The whole silly controversy reveals to what absurdities we have sunk, thanks to identity politics and a willful misunderstanding, for the sake of personal or group advantage, of what wrongful discrimination is. Storms in teacups can be revealing.

Theodore Dalrymple, “My Kingdom for Some Crutches”, New English Review, 2024-02-06.

May 12, 2024

The fascinating story of HMS Challenger (K07)

Sir Humphrey pens a long blog post about a late Cold War Royal Navy ship — officially just a “diving support vessel”, but apparently much more capable — most naval fans may never have heard about:

The story of HMS Challenger remains one of the most unusual of all post war Royal Navy vessels. Born in the late Cold War, she was in the eyes of the public a “white elephant”, commissioned but never operationally used, and sold after just a few years’ service at the end of the Cold War. She was, to the few members of the public that had heard of her, “the Warship that never was”. But revealing files in the National Archives tell a story of a ship that was designed to fill a range of highly secretive intelligence support functions and clandestine espionage activity that, had she been successful, would have made her perhaps one of the most vital intelligence collection assets in the UK. This article is about the untold story of HMS Challenger and why she deserves far more recognition than she has enjoyed to date.

The background of the Challenger story can be traced to the mid 1970s when the Royal Navy used the, by then positively venerable, warship HMS Reclaim to conduct diving support work. The Reclaim, commissioned in 1949, was the last warship in the RN to be designed and fitted with sails, which were occasionally used. Employed in diving support and salvage ops for 30 years, she was a vital asset for the recovery of crashed aircraft, support to diving and other assorted duties. But by 1975 she was also very old and out of date and required replacement (she paid off as the oldest operational vessel in the Royal Navy in 1979).

To replace her the Royal Navy developed Naval Staff Requirement 7003 and 7741, which were approved in 1976. These requirements set out the need for a replacement and the capabilities that were required. By this stage of the Cold War the world was a very different place both operationally and technologically from when HMS Reclaim entered service. There were significantly more undersea cables laid across the Atlantic, while the SOSUS network (a deep-water network of sonar systems intended to detect Russian submarines) had been delivered and expanded into UK waters in the early 1970s under project BACK SCRATCH. Additionally the Royal Navy had introduced a few years previously the Resolution class SSBN, which by 1976 had four submarines providing a Continuous At Sea Deterrent (CASD) with their Polaris missiles, as well as wider nuclear submarine operations. At the same time new technology was emerging including better diving capability, the rise of miniature submarines capable of both operating at immense depths and also the rise of rescue submarines for stranded nuclear submarines. Additionally technology had improved increasing the ability to recover items from the seabed.

When brought together this provided the RN with the opportunity to think afresh about how to replace Reclaim. The result was a set of requirements that were defined as follows:

The objective of NSR 7003 was to provide the Royal Navy with a Vessel and equipment capable of carrying out seabed operations. The requirement … is to find, inspect, work on and recover items on the seabed at all depths down to 300m with some capability to greater depths.

The specific missions for which the requirement was looking to cater broke down into three main areas:

- Inspection, neutralisation or recovery of military equipment, including weapons;

- Operations in support of national offshore interests including research;

- Assistance with submarine escape and rescue and with underwater salvage

This represented a significant leap forward compared to Reclaim, which was limited to diving at up to 90m in very limited conditions, and would have provided the Royal Navy with an entirely new level of capabilities.

The decision was taken to proceed with the requirement and Challenger was ordered in 1979 and commissioned in 1983. What then follows is a sorry story of a ship being brought into service and having practically everything that could go wrong, going wrong. This article will not go into any depth on the story of what failed, as to do so would be a lengthy story. Suffice to say that a combination of faulty equipment, manufacturing challenges, fires and other damages and the reality that technical aspirations were not matched by practical delivery in reality meant that Challenger never really became operational.

Used for a series of trials until the late 1980s to prove her systems and see if they would work, she struggled to achieve what was expected of her. She had some success recovering toxic chemicals from the seabed from a sunken merchant ship in the 1980s and then conducting other demonstrations, such as deep diving and supporting submarine rescue trials. But she never lived up to the expectations placed on her, and at a time when the costs required to get her to the level of capability were far too high, and the defence budget was under pressure at a point when the Warsaw Pact threat was rapidly collapsing, the decision was taken to pay her off as a failed experiment even before the wider Options for Change plan was announced. This much is widely known to the public, but what is nowhere near as well known is the missions that Challenger was intended to carry out. Had she been successful, it would have made a very real difference to RN capabilities.

Why did the Royal Navy seem so determined to make a success of Challenger for so many years, to the extent of throwing ever more money at her, given these problems? In short because the missions she was designed to do made it worthwhile. Files in the archives clearly show that beyond the public line of “research” she was designed to carry out exceptionally sensitive missions. Although the original Naval Staff Requirement focused on three areas, by the time she entered service, this had expanded to at least 9 (possibly more). These were:

- Strategic Deterrent Force Security

- Seabed surveillance device support

- Nuclear weapon recovery

- Recovery of security and military sensitive material

- Crashed military aircraft recovery

- Submarine escape and rescue operations

- Salvage operations

- MOD research and data collection for other than intelligence agencies

- Miscellaneous operations in support of other government agencies

It can be seen that far from being just a diving support platform, Challenger was in fact an absolutely central part in providing assurance to the protection of CASD and ensuring the security of the nuclear deterrent and SOSUS. How would she have done this?

The files show that in the 1980s the UK had a different attitude to the US about protection of these routes due to geographic differences.

Germany Surrenders! – WW2 – Week 298 – May 11, 1945

World War Two

Published 11 May 2024

Germany signs not one, but two unconditional surrenders and the war in Europe is officially over … although that does not mean that all the fighting in Europe is, for there is fighting and surrenders all over Europe all week. The Japanese launch a counteroffensive on Okinawa; the Chinese launch one in Western Hunan; the Australians advance on Borneo and New Guinea; and the fight continues on Luzon in the Philippines, so there is still an awful lot of the world war to come, even with the end of the war in Europe.

00:00 Intro

00:40 The German Surrender

03:23 Fighting And Surrenders In The East

06:53 The Prague Uprising

15:50 The Last Surrenders In Europe

18:42 The Polish Situation

20:25 The War In China And The South Seas

23:17 Summary

24:44 Conclusion

Javier Milei and the “Malvinas” question

Colby Cosh on how Argentine President Javier Milei handled British press inquiries about the ~~Malvinas~~ Falkland Islands like a boss:

I’ve been relishing a classic feast of British press overreaction to a BBC interview with the colourful libertarian president of Argentina, Javier Milei. The Beeb’s Ione Wells visited Milei at the Casa Rosada last week in Buenos Aires for a chat, and nothing like this could possibly happen without some talk about those damned islands — the Falklands or las Malvinas, depending on which country you believe to be their rightful sovereign.

Argentine leaders have to be careful how they talk about the Falklands (and about their uninhabited dependencies elsewhere in the South Atlantic). For decades regimes of left and right in Argentina have opportunistically kept the disputed islands at the forefront of the public imagination, fostering a spirit of delayed revenge. Sometimes this leads to daft verbal outbursts about “colonialism”, alongside game-playing with supplies and access to the islands. The constitution of Argentina contains language asserting “legitimate and imprescriptible sovereignty” over the rocks.

So anything a current Argentine leader says about the Falklands is bound to be scrutinized closely at home and in the United Kingdom. Milei is naturally impulsive, and has the particular problem that he is a political admirer of the late Margaret Thatcher. Wells tried to provoke him by bringing up the 1982 sinking of the General Belgrano and the consequent deaths of 323 Argentine sailors, which is still a slightly controversial episode of the Falklands War among the most self-hating shades of U.K. political opinion.

Milei, who had arranged a little display of Thatcher memorabilia in the room where the interview was held, sliced right through Wells’s Gordian knot. “Criticizing someone because of their nationality or race is very intellectually precarious,” he told Wells. “I have heard lots of speeches by Margaret Thatcher. She was brilliant. So what’s the problem?”

Even if you venerate Thatcher, who ordered the sinking of the Belgrano in very cold blood, you can perceive that this is a non sequitur. Milei is under no obligation to like a fellow neoliberal who was a military enemy of his own country. But one does remember that British statesmen have often been willing to express admiration for Napoleon I, Washington, Rommel and other killers of large numbers of British soldiers.