The response to the essay has been an outpouring of suppressed rage that has been simmering for years in an emotional pressure cooker of silenced frustration. The author, Jacob Savage, provides a ground-level view of the DEI revolution’s human cost, beginning with his personal experiences as an aspiring screenwriter, and then widening the reader’s perspective via interviews with would-be journalists and academics. Every subject described a similar pattern of frustrated ambitions in which, starting around the middle of the 2010s, their careers stalled out for no other reason than their melanin-deficiency and y-chromosome superfluity. Young white men were systematically excluded from every institutional avenue of prestige and prosperity. Doors were closed in academia, in journalism, in entertainment, in the performing arts, in publishing, in tech, in the civil service, in the corporate world. It didn’t matter if you wanted to be a journalist, a novelist, a scientist, an engineer, a software developer, a musician, a comedian, a lawyer, a doctor, an investment banker, or an actor. In every direction, Diversity Is Our Strength and The Future Is Female; every job posting particularly encourages applications from traditionally underrepresented and equity-seeking groups including women, Black and Indigenous People Of Colour, LGBTQ+, and the disabled … a litany of identities in which “white men” was always conspicuous by its absence.

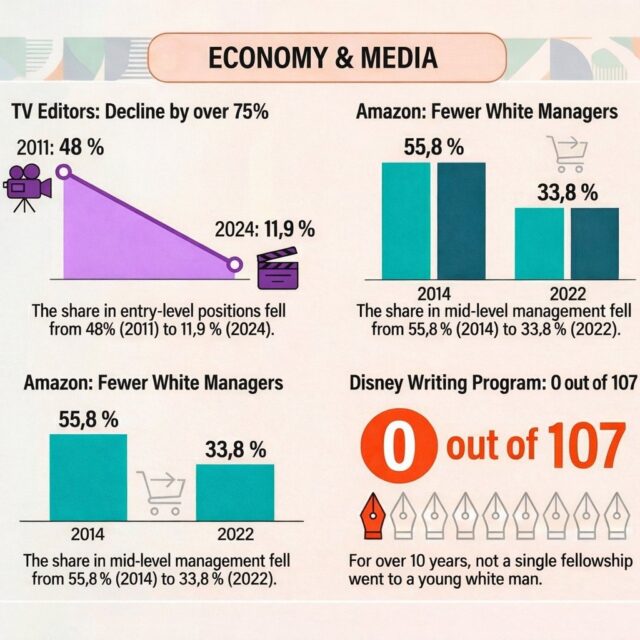

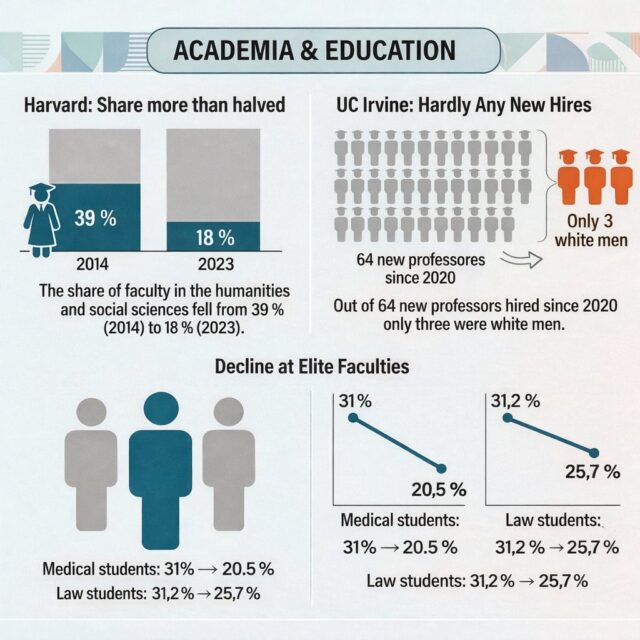

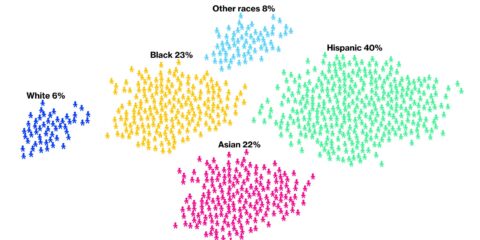

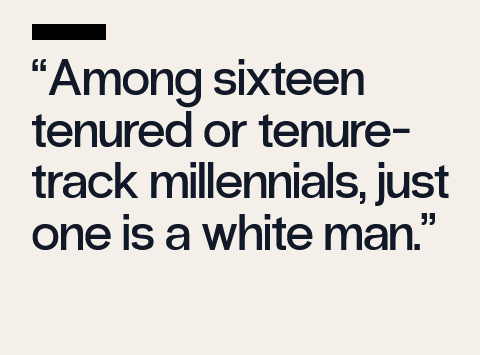

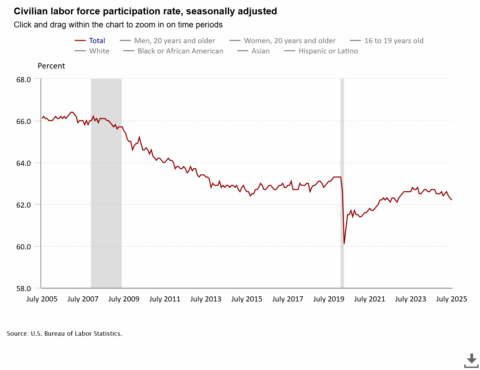

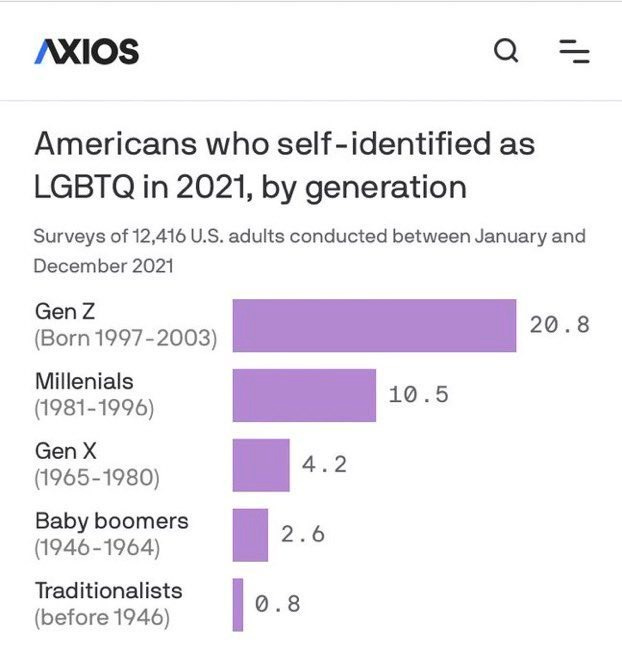

The Lost Generation does not rely only on the pathos of anecdote. Savage includes endless reams of data, demonstrating how white men virtually disappeared from Hollywood writing rooms, editorial staff, university admissions, tenure-track positions, new media journalism, legacy media, and internships. He shows how, by the 2020s, they had even stopped bothering to apply, because what was the point? The comprehensive push to exclude young white men from employment wasn’t limited to prestigious creative industries, of course. The corporate sector has also adopted a practice of hiring anyone but white men, as revealed two years ago by a Bloomberg article which gloated that well over 90% of new hires at America’s largest corporations weren’t white.

The Bloomberg article was criticized for methodological flaws, but judging by the outpouring of stories it elicited (just see the several hundred comments my own essay got, the best of which I summarized here) it was certainly directionally accurate.

The real strength of Savage’s article isn’t the cold statistics, though, but the heartrending poignancy with which it highlights the emotional wreckage left in the wake of this cultural revolution.

Hiring processes are opaque. If an employer doesn’t extend an offer, they rarely explain why; at best one receives a formulaic “thank you for your interest in the position, but we have decided to move forward with another applicant. We wish you the best of luck in your endeavours.” They certainly never come out and say that you didn’t get hired because you’re a white man, which is generally technically illegal, for whatever that is worth in an atmosphere in which the unspoken de facto trumps the written de jure. Candidates are not privy to the internal deliberations of hiring committees, which will always publicly claim that they hired the best candidate. Officially, a facade of meritocracy was maintained, even as meritocracy was systematically dismantled from within.

The power suit-clad feminists who body-checked their padded shoulders into C-suites and academic departments in the 1970s flattered themselves that they were subduing sexist male chauvinism by outdoing the boys at their own game and forcing the patriarchy to acknowledge their natural female excellence. Growing up, I would often hear professional women say things like “as a woman, to get half as far as a man, you have to be twice as good and work twice as hard”. [NR: usually with a smug “fortunately, that’s not difficult” tacked on] The implication of this was that women were just overall better than men, because the old boys’ club held the fairer sex to a higher standard than it did the good old boys. Of course, this was almost never true; these women were overwhelmingly the beneficiaries of affirmative action programs motivated by anti-discrimination legislation that opened up any corporation that didn’t put a sufficient number of females on the payroll to ruinous lawsuits. Moreover, a fair fraction of them were really being recruited as decorative additions to the secretarial harems of upper management. Nevertheless, it helped lay the foundation for the Future Is Female boosterism that stole the future from a generation of young men.

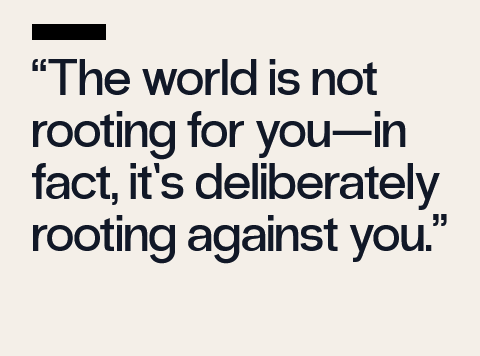

There was a time, not so long ago, when I naively assumed that my own situation was simply the inverse of the one women had faced in the 70s and 80s. I was aware that I was being rather openly discriminated against, but imagined that this simply meant that I had to perform to a higher standard, that if I was good enough, the excellence of my work would shatter the institutional barriers and force someone to employ me. It took me several long and agonizing years to realize that this just wasn’t true. The crotchety patriarchs of the declining West may have been principled men capable of putting stereotypes aside to recognize merit; in fact, the historical evidence suggests that they overwhelmingly prized merit above any other consideration (just as the evidence suggests that their stereotypes were overwhelmingly correct). The priestesses of the present gynocracy hold themselves to no such standard. They don’t care about your promise or your performance, at all. If anything, performing well is a strike against you, because it threatens them. Nothing makes them seethe more than being outperformed by men. They champion mediocrity as much to punish as to promote.

Young white men had been raised to expect meritocracy. They’d also been raised to be racial and sexual egalitarians. People in the past, they believed, had been bigoted, believing superstitious stereotypes about differences of ability and temperament between the sexes and races that had no foundation in reality, pernicious falsehoods that were developed and propagated as intersectional systems of oppression with the purpose of justifying slavery, colonialism, imperialism, and genocide. Naturally, they were appalled to have such charges laid at their feet, and so they agreed that we were all going to try and correct this injustice, and we’d do it by carefully eliminating every potential source of racial or sexual bias, eliminating all the unfair barriers to advancement within society, in particular, although certainly not exclusively, via university admissions and institutional hiring. That was the original official line on DEI: that it wasn’t about excluding white men, heaven forbid, no, it was simply about including everyone else, widening the talent pool so that we could ensure both the fairest possible system of advancement, and that the best possible candidates were given access to opportunity. In practice, we were told, this wouldn’t be a quota system: everything would still be meritocratic, but if it came down to a coin flip between two equally qualified candidates, one of whom was a white man and the other of whom was not, the not would win. Fair enough, the young white men thought at first: we’ll all compete on a level playing field, in fact we’ll even accept a bit of a handicap in the interests of correcting historical injustices, and may the best human win.

But the DEI commissars had absolutely no interest in a level playing field. That the playing field wasn’t already as level as it could be was, in fact, one of their most infamous lies. The arena has always been level: physics plays no favourites in the eternal struggle for survival and mastery. If some always end up on top – certain individuals, certain families, certain nations, certain races – this is invariably due to their own innate advantages over their competitors. An interesting example of this was provided by the Russian Revolution. The Bolsheviks cast down the old Czarist aristocracy, stripping them of land, wealth, and status, and then discriminated against them in every way possible; a century later, their descendants had clawed their way back to power and prominence. The only possible conclusion from this is that the Russian aristocrats were, at least to some degree, aristos – the best, the noblest – in some sense that went beyond inherited estates.

The young white men did not think of themselves as aristocrats with a blood right to a certain position in life, but as contestants in a fair competition, who would rise or fall on their own merits and by their own efforts. They then abruptly found themselves competing in a system in which it was simply impossible for them to rise, but which also lied to them about the impassable barrier that had been placed in their way. If you noticed the unfairness, you were told that this was ridiculous, that as a white man you were automatically and massively privileged, that it was impossible to discriminate against you because of this, and that in addition to being a bigoted racist you were also quite clearly mediocre, a bitter little man filled with envy for the winners in life, the brilliant beautiful black women who had obviously outcompeted you because they were just so much smarter, so much more dedicated, and so much better because after all they had succeeded in spite of the deck being stacked against them whereas you had failed despite having been born with every unearned advantage in the world.

An entire generation had their future ripped from their hands, and were then told that it was their fault, their inadequacy. They were gaslit that there was no systemic discrimination against them, that their failure to launch was purely due to their individual failings … while at the same time being told that those who were so clearly the beneficiaries of a heavy thumb on the scale were the victims of discrimination, that the oppressors were the oppressed, and that to cry “oppression” yourself was therefore itself a form of oppression.

Do you see how cruel that is? How sadistic? It is more psychologically vicious by far than anything the Bolsheviks did to the Russian aristocracy. At least the Bolsheviks were honest. Although, it must be said, the psychological sadism of the gay race commissars is part of a tradition; communists have often been noted for their demonic cruelty.