Although I’ve been following the net neutrality debate, I remained unconvinced that either side had the answers. In a post from 2008, ESR helps explain why I was confused:

Let it be clear from the outset that the telcos are putting their case for being allowed to do these things with breathtaking hypocrisy. They honk about how awful it is that regulation keeps them from setting their own terms, blithely ignoring the fact that their last-mile monopoly is entirely a creature of regulation. In effect, Theodore Vail and the old Bell System bribed the Feds to steal the last mile out from under the public’s nose between 1878 and 1920; the wireline telcos have been squatting on that unnatural monopoly ever since as if they actually had some legitimate property right to it.

But the telcos’ crimes aren’t merely historical. They have repeatedly bargained for the right to exclude competitors from their networks on the grounds that if the regulators would let them do that, they’d be able to generate enough capital to deploy broadband everywhere. That promise has been repeatedly, egregiously broken. Instead, they’ve creamed off that monopoly rent as profit or used it to cross-subsidize competition in businesses with higher rates of return. (Oh, and of course, to bribe legislators and buy regulators.)

Mistake #1 for libertarians to avoid is falling for the telcos’ “we’re pro-free market” bullshit. They’re anything but; what they really want is a politically sheltered monopoly in which they have captured the regulators and created business conditions that fetter everyone but them.

OK, so if the telcos are such villainous scum, the pro-network-neutrality activists must be the heroes of this story, right?

Unfortunately, no.

Your typical network-neutrality activist is a good-government left-liberal who is instinctively hostile to market-based approaches. These people think, rather, that if they can somehow come up with the right regulatory formula, they can jawbone the government into making the telcos play nice. They’re ideologically incapable of questioning the assumption that bandwidth is a scarce “public good” that has to be regulated. They don’t get it that complicated regulations favor the incumbent who can afford to darken the sky with lawyers, and they really don’t get it about outright regulatory capture, a game at which the telcos are past masters.

[…]

In short, the “network neutrality” crowd is mainly composed of well-meaning fools blinded by their own statism, and consequently serving mainly as useful idiots for the telcos’ program of ever-more labyrinthine and manipulable regulation. If I were a telco executive, I’d be on my knees every night thanking my god(s) for this “opposition”. Mistake #2 for any libertarian to avoid is backing these clowns.

In the comments, he summarizes “the history of the Bell System’s theft of the last mile”.

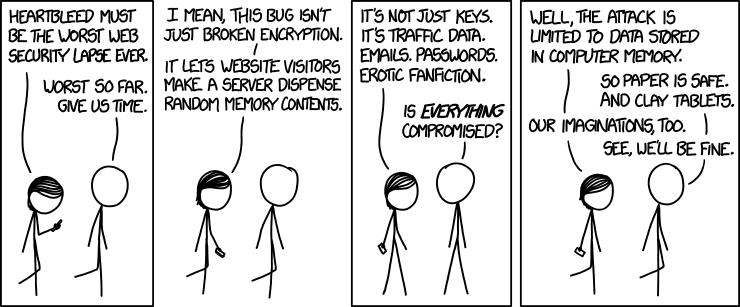

I actually chuckled when I read rumor that the few anti-open-source advocates still standing were crowing about the Heartbleed bug, because I’ve seen this movie before after every serious security flap in an open-source tool. The script, which includes a bunch of people indignantly exclaiming that many-eyeballs is useless because bug X lurked in a dusty corner for Y months, is so predictable that I can anticipate a lot of the lines.
