Forgotten Weapons

Published 17 Sept 2025

The FAMAS G1 was developed as a lower-cost option for FAMAS export sales. The original F1 model had been offered for international sale, but it attracted little interest largely because of its high price. In response, GIAT created the G1 with many of the extra features left optional. This allowed them to reduce the price by up to 40%. Specific feature reductions included:

- Omitting the bipod legs

- Omitting the grenade launching sights and barrel fittings

- Omitting the night sights

- Omitting the burst fire mechanism

- Replacing the trigger guard with a molded whole-hand trigger guard

The mechanism stayed the same, and all of the omitted features could be included as options. This still failed to generate any export sales, in part because GIAT took ownership of FN, and FN’s competing assault rifle options were more profitable than the FAMAS.

The G1 did contribute elements like the whole-hand trigger guard to the mid-1990s G2 model adopted by the French Navy, however.


February 10, 2026

FAMAS G1: Simplified for Export

January 23, 2026

“Functional illiteracy was once a social diagnosis, not an academic one”

On Substack, Maninder Järleberg illuminates the problem of functional illiteracy in higher education in the west:

The Age of Functional Illiteracy

Functional illiteracy was once a social diagnosis, not an academic one. It referred to those who could technically read but could not follow an argument, sustain attention, or extract meaning from a text. It was never a term one expected to hear applied to universities. And yet it has begun to surface with increasing regularity in conversations among faculty themselves. Literature professors now admit — quietly in offices, more openly in essays — that many students cannot manage the kind of reading their disciplines presuppose. They can recognise words; they cannot inhabit a text.

The evidence is no longer anecdotal. University libraries report historic lows in book borrowing. National literacy assessments show long-term declines in adult reading proficiency. Commentators in The Atlantic, The Chronicle of Higher Education, and The New York Times describe a generation for whom long-form reading has become almost foreign. A Victorian novel, once the ordinary fare of undergraduate study, now requires extraordinary accommodation. Even thirty pages of assigned reading can provoke anxiety, resentment, or open resistance.

It would be dishonest to ignore the role of the digital world in this transformation. Screens reward speed, fragmentation, and perpetual stimulation; sustained attention is neither required nor encouraged. But to lay the blame solely at the feet of technology is a convenient evasion. The crisis of reading within universities is not merely something that has happened to the academy. It is something the academy has, in significant measure, helped to produce.

The erosion of reading was prepared by intellectual shifts within the humanities themselves—shifts that began during the canon wars of the late twentieth century. Those battles were never only about which books should be taught. They were about whether literature possessed inherent value, whether reading required discipline, whether difficulty was formative or oppressive, and whether the humanities existed to shape students or merely to affirm them. In the decades that followed, entire traditions of reading were dismantled with remarkable confidence and astonishing speed.

The result is a moment of institutional irony. The very disciplines charged with preserving literary culture helped undermine the practices that made such culture possible. What we are witnessing now is not simply a failure of students to read, but the delayed consequence of ideas that taught generations of readers to approach texts with suspicion rather than attention, critique rather than encounter.

This essay is part of a larger project to trace that history, to explain how a war over the canon helped usher in an age in which reading itself is slipping from our grasp, and why the consequences of that war are now returning to the academy with unmistakable force.

The Canon Wars: A Short Intellectual History

To understand the present state of literary studies, one must return to the canon wars of the 1980s and 1990s — a conflict that reshaped the humanities with a speed and finality few recognised at the time. Although remembered now as a dispute about which books deserved a place on the syllabus, the canon wars were in truth a contest over the very meaning of literature and the purpose of a humanistic education.

In the decades after the Second World War, the curriculum in most Western universities still rested upon a broadly shared assumption: that certain works possessed enduring value, that they spoke across time, and that an educated person should grapple with them. This conviction, however imperfectly applied, formed the backbone of the humanities. It was also increasingly at odds with a new intellectual climate shaped by post-1968 radicalism, the rise of identity politics, and the importation of French theory.

By the early 1980s, tensions that had simmered beneath the surface erupted into public view. The most emblematic flashpoint came at Stanford University in 1987–88, when student demonstrators chanted, “Hey hey, ho ho, Western Culture’s got to go!” in protest of the university’s required course on Western civilisation. The course was soon dismantled, replaced by a broader, more ideologically framed program. What happened at Stanford quickly reverberated across the country. Departments revised reading lists, restructured curricula, and abandoned long-standing core requirements.

On one side of the debate stood defenders of the canon—figures such as Harold Bloom, Allan Bloom, E.D. Hirsch, and Roger Kimball—who argued that the great works formed a kind of civilisational inheritance. The canon, they insisted, was not a museum of privilege but a record of human striving, imagination, and achievement. On the other side were scholars like Edward Said, Toni Morrison, Henry Louis Gates Jr., Gayatri Spivak, and Homi Bhabha, who contended that the canon reflected histories of exclusion and domination, and that expanding or dismantling it was a moral imperative.

But beneath these arguments lay a deeper philosophical rift. The defenders assumed that literature possessed intrinsic value, that texts could be read for their beauty, their insight, or their power. The critics, armed with concepts drawn from Foucault, Derrida, and Barthes, argued that literature was inseparable from structures of power, that meaning was unstable, and that reading was less an act of discovery than an exposure of hidden ideological operations.

The canon wars ended not with a negotiated peace but with a decisive transformation. The traditional canon was not merely expanded; its authority was dissolved. And with it dissolved a set of shared assumptions about why we read at all.

January 16, 2026

“During the 1990s, the midlist disappeared at major publishing houses”

It’s no secret that the publishing industry has changed substantially — and from most readers’ point of view, for the worse — but when did it happen, and why? Ted Gioia points to the mid-1990s in New York City:

Everybody can see there’s a crisis in New York publishing. Even the hot new books feel lukewarm. Writers win the Pulitzer Prize and sell just a few hundred copies. The big publishers rely on 50 or 100 proven authors — everything else is just window dressing or the back catalog.

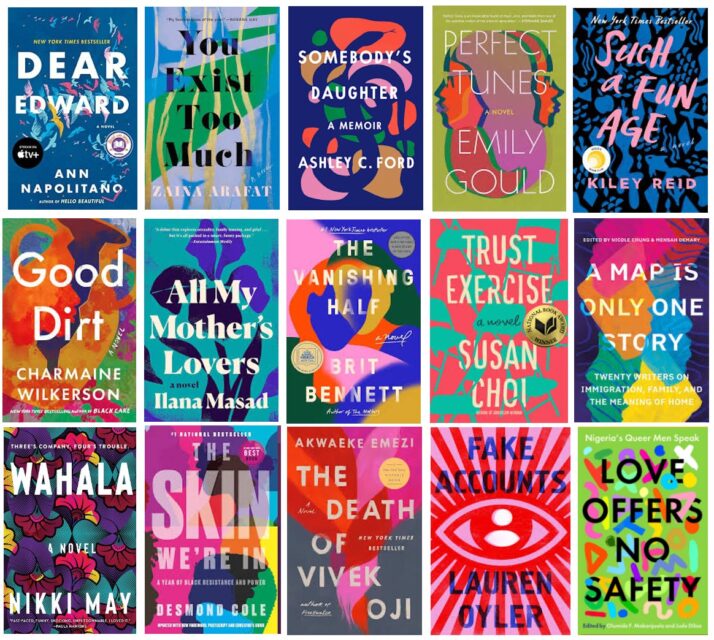

You can tell how stagnant things have become from the lookalike covers. I walk into a bookstore and every title I see is like this.

They must have fired the design team and replaced it with a lazy bot. You get big fonts, random shapes, and garish colors — again and again and again. Every cover looks like it was made with a circus clown’s makeup kit.

My wife is in a book club. If I didn’t know better, I’d think they read the same book every month. It’s those same goofy colors and shapes on every one.

Of course, you can’t judge a book by its cover. But if you read enough new releases, you get the same sense of familiarity from the stories. The publishers keep returning to proven formulas — which they keep flogging long after they’ve stopped working.

And that was a long time ago.

It’s not just publishing. A similar stagnancy has settled in at the big movie studios and record labels. Nobody wants to take a risk — but (as I’ve learned through painful personal experience) that’s often the riskiest move of them all. Live by the formula, and you die by the formula.

How did we end up here?

It’s hard to pick a day when the publishing industry made its deal with the devil. But an anecdote recently shared by Steve Wasserman is as good a place to begin as any.

He’s describing a lunch with his boss at Random House in the fall of 1995. Wasserman is one of the smartest editors I’ve ever met, and possesses both shrewd judgment and impeccable tastes. So he showed up at that lunch with a solid track record.

But it wasn’t good enough. The publishing industry was now learning a new kind of math. Steve’s boss explained the numbers:

Osnos waited until dessert to deliver the bad news … First printings of ten thousand copies were killing us. It was our obligation to find books that could command first printings of forty, fifty, even sixty thousand copies. Only then could profits be had that were large enough to feed the behemoth — or more precisely, the more refined and compelling tastes — that modern mainstream publishing demanded.

Wasserman countered with infallible logic:

I pointed out, if such a principle were raised to the level of dogma, none of the several books that were then keeping Random House fiscally afloat — Paul Kennedy’s The Rise and Fall of the Great Powers, John Berendt’s Midnight in the Garden of Good and Evil (eventually spending a record two hundred and sixteen weeks on the bestseller list, and adapted into a film by Clint Eastwood), and Joe Klein’s Primary Colors (published anonymously and made into a movie by Mike Nichols in 1998) — would ever have been acquired. None had been expected to be a bestseller, and each had started out with a ten-thousand copy first printing.

But it was a hopeless cause. And I know because I’ve had similar conversations with editors. And my experience matches Wasserman’s — something changed in the late 1990s.

The old system offered more variety. It took greater risks. It didn’t rely so much on formulas. So it could surprise you.

In a post about the ginned-up anger about CEO pay versus average worker pay, Tim Worstall briefly touches on the plight of writers:

Or even more fun perhaps.

In 2006, median author earnings were £12,330. In 2022, the median has fallen to £7,000, a drop of 33.2% based on reported figures, or 60.2% when adjusted for inflation.

That’s median earnings so 50% of all authors earn less than £7,000. And note this is only among those taking it seriously enough to join the Society of Authors (a number which does not include me). Even at my level of scribbling 5x to 10x, depends upon the year, of those median earnings can be gained.

But top level authors, well, they do earn rather more. No, not thinking of the JK Rowling level of global superstardom. But it would astonish me if Owen Jones is earning less than 50x those median earnings (yes, Guardian column, books, Patreon, YouTube and whatever, £350k would be my lower guess).

Writers display that similar sort of income disparity and range, no? Because we’re not about to suggest that Owen is top rank earning now, are we, even if it is a damn good income there.

Which shows us what is wrong with the Cardiganista’s¹ initial calculation. They’re pointing only to the incomes of the very tippy toppy of the income distribution for that job. If we apply the same sort of reasoning to writers or footie players (and it’ll be the same for actors and all sorts of other peeps) we find very similar distributions.

¹ They all seem to be Guardian retirees and their byline pictures have them wearing cardigans — or did — therefore …

January 14, 2026

QotD: Bill Clinton, proto-PUA

Slick Willie was a pudgy marching band dork who learned some Game. The 1990s were the worst decade in human history for a lot of reasons, and I typically say “because that’s when the Jonesers really came into their own”, but that’s not accurate. It’s when the AWFL — that’s “affluent White female liberal”, and it’s redundant at least 2x, but I didn’t coin it — realized that she ruled the Evil Empire. We called them “soccer moms” back then, “Karen” now, but the concept is the same (though the former weren’t quite as obnoxious, it was a difference of degree, not kind).

Most men I knew, even most Boomer and Generation Jones men, were put off by Bill Clinton. We all instinctively knew he was a weasel, even if we couldn’t quite articulate why. But oh how the soccer moms loved him! He was the pudgy marching band dork they’d actually settled for, carrying on like the Alpha Chad they still knew, in their secret hearts, they were hot enough to snag. What appeared to men (and what actually was) narcissism and braggadocio, looked like caddish swagger to soccer moms. But in actual fact he was just a nerd who’d learned Game ahead of its time, and that’s how he governed …

[Funny how none of the “Game” gurus recognized this. I guess I can’t blame them, since I just now realized it myself, but then again I don’t pimp myself out as some kind of Master Pickup Artist. Instead of aping Tom Cruise and Daniel Craig and those guys, the “Game” crowd should’ve been studying Bill Clinton. That’s what Game can do for you, boys, and yeah, I know you’ve got your sights set a little higher than Monica, but for pete’s sake, the man was President of the United States. He cigar-banged the entire electorate. That’s some serious Game].

Severian, “Friday Mailbag”, Founding Questions, 2022-04-08.

NR: In case “PUA” has fallen sufficiently out of current use — as it probably deserves — here’s a useful overview of the Pick Up Artist jargon by Kim du Toit from 2017.

Update, 15 January: Welcome, Instapundit readers! Have a look around at some of my other posts you may find of interest. I send out a daily summary of posts here through my Substack – https://substack.com/@nicholasrusson that you can subscribe to if you’d like to be informed of new posts in the future.

December 20, 2025

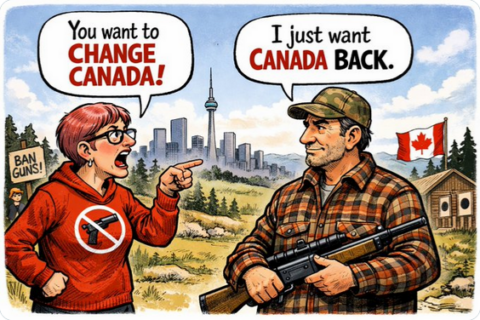

“We don’t want to change Canada; we want the Canada we grew up in back”

On the social media site formerly known as Twitter, Gun Owners of Canada refute claims that they want to change the nation and explain that the nation has been radically changed to the agenda of a small, urban pressure group by compliant politicians and civil servants:

For those of us who grew up in or lived through the 1980s and 1990s, the change is impossible to miss.

We remember a Canada where firearms ownership was ordinary, regulated, and largely uncontroversial. Target shooting, hunting, and collecting were part of everyday life. Gun clubs existed quietly on the edge of town. Weekend trap shoots, small-bore leagues, cadets, and hunting camps weren’t political statements, they were just normal parts of growing up.

That Canada had rules. Before the mid-1990s, ownership was governed through the Firearms Acquisition Certificate (FAC) system. You were screened, approved, and expected to act responsibly. Misuse was punished severely. But lawful owners weren’t treated as provisional citizens, waiting to see if the rules would change again next year.

Context matters. In the Canada of the 1980s, firearms that are now politically charged were treated very differently. The AR-15, for example, existed openly within the shooting sports community and was classified as non-restricted at the time. It was regulated, owned by vetted individuals, and largely absent from public controversy.

That isn’t shocking to people who lived through it. It simply illustrates how much the framework has shifted.

Firearms ownership in that era wasn’t limited to a single purpose. Most people participated through sport, hunting, or collecting. Some also possessed firearms with personal security in mind, particularly in rural areas, remote communities, or professions where police response was distant. This wasn’t sensationalized, and it wasn’t politicized. It was understood as part of lawful ownership, governed by responsibility and accountability.

In the Canada many of us grew up in, following the law meant something. If you complied with the rules as they existed, you could reasonably expect stability.

That’s what’s been lost.

Beginning in the mid-1990s, Canada transitioned to the modern licensing system and expanded registration, classification, and regulation. The shooting sports community adapted, again, to our own fault. We trained, we licensed, we registered, and we complied in good faith.

What we didn’t grow up with was the idea that entire classes of legally owned firearms could be redefined by regulation overnight. Or that decades of compliance could still end in confiscation, not because of misuse, but because of shifting political definitions and political theatre.

When firearm owners push back against this, we’re told we want to “change Canada.”

From our perspective, we’re responding to the change, not demanding it.

Other democracies have recognized the risk in allowing lawful ownership to exist solely at the discretion of the government of the day. Some have taken steps to ensure that civilian firearms ownership, particularly for sport, hunting, and lawful personal security, is anchored in a way that prevents arbitrary reclassification, while still allowing strong regulation and oversight.

That idea isn’t radical. It’s about predictability, due process, and trust between citizens and governance.

Firearm owners aren’t asking for chaos. We’re asking for the same social contract we grew up with: follow the rules, be accountable and don’t have the ground shift beneath your feet without warning.

So, no. We don’t want to change Canada.

We want the Canada we knew, back:

One where responsibility mattered, laws were stable, and lawful communities weren’t erased by regulation.

Bring that Canada back. This one doesn’t resemble it, at all.

December 19, 2025

QotD: “1998 was the official start of the Girlboss Era”

Paltrow seemed to arrive on the scene having everything and wanting for nothing.

Funny, that’s also the most accurate description of an AWFL ever penned. Who the hell are they, and where did they come from? How do they have the free time and endless disposable cash to do literally every single thing they do?

In 2001, she promoted Shallow Hal — in which she played Rosemary, an obese woman whose “inner beauty” is only visible to Hal (Jack Black) — by talking about doing practice runs in her character’s fat suit. “I got a real sense of what it would be like to be that overweight, and every pretty girl should be forced to do that.”

Wait, this is supposed to be a hit piece? Because that might be the most sensible thing I have ever heard a woman say. Yes, definitely they should be forced to do that, if not the full Norah Vincent. If you’re halfway presentable, ladies — hell, if you’re not grossly deformed — you’re playing life on “God mode”. Look at all the simps in your social media feeds, and tell me I’m wrong. Being forced to go around in a fat suit for a week or two is a necessary corrective.

Paltrow’s first big trip on the Hollywood hater-go-round was 1998, the year she won the Best Actress Oscar for Shakespeare in Love and gave a memorably messy, genuinely emotional acceptance speech. (Days after her win, Salon was among many outlets eviscerating her.) What viewers didn’t see, Odell notes, is the amount of effort by Miramax head Harvey Weinstein to make Shakespeare a winner, raise the profile of his still-independent studio, and solidify his belief that Paltrow belonged to him.

I’m going to stop here, because there’s really no point. I just wanted everyone to remember Shakespeare in Love. You do remember Shakespeare in Love, don’t you?

Of course you don’t; it was silly and forgettable at the time, and now is remembered, if at all, as a bizarre footnote — it’s the movie that won Best Picture over Saving Private Ryan. From the perspective of 2025, then, it sure looks like 1998 was the official start of the Girlboss Era.

Severian, “Kvetching Up With Karen: DC Edition”, Founding Questions, 2025-08-14.

September 26, 2025

School Cafeteria Sloppy Joe from the 1980s & ’90s

Tasting History with Max Miller

Published 22 Apr 2025

Ground beef in a delicious tomato-based sauce on a hamburger bun, part of a classic 90s American school lunch

City/Region: United States of America

Time Period: 1988

Today we know sloppy joes as a saucy ground beef sandwich, but the term sloppy joe has referred to many things over the years. A sloppy joe could be other kinds of sandwiches, a nickname for a messy friend, or women’s fashion from the 1940s and 50s that included pants and looser fitting styles.

For me, though, it is this style of sandwich. Really, it is this version of this sandwich. Sloppy joes were a larger part of my adolescent diet than was healthy, and these taste exactly like the ones I remember from middle school.

Be sure to get the cheapest hamburger buns possible to authentically recreate this nostalgic lunchtime favorite.

Sloppy Joe on a Roll (50 servings)

Raw ground beef (no more than 24% fat) … 17 lb 4 oz

Dehydrated onions … 2 1/4 oz … 2/3 cup

OR Fresh onions, chopped … 1 lb 2 oz … 3 cups

Garlic powder … 2 Tbsp

Tomato paste … 3 lb 8 oz … 1/2 No. 10 can

Catsup … 3 lb 9 oz … 1/2 No. 10 can

Water … 2 qt 3 1/2 cups

Vinegar … 2 1/4 cups

Dry mustard … 1/4 cup

Black pepper … 2 tsp

Brown sugar, packed … 5 1/2 oz … 3/4 cup

Hamburger rolls … 100

— Quantity Recipes for School Food Service by the United States Department of Agriculture, 1988

September 25, 2025

QotD: The Clinton years

… in a weird way I feel bad for the young folks who never got a chance to experience life under Bill Clinton. Back then, we — as a society — still acknowledged that there was such a thing as “the truth”. You know, statements about the world that actually correspond to the world in a meaningful and systematic way. Watching Bill Clinton lie was great practice. You young folks are used to everyone, everywhere, in power being an utter sociopath, but it was a novelty back then.

Bill Clinton, some wag observed, would rather climb to the top of Mt. Everest to lie to you than stand still and tell you the truth. He lied when it was to his advantage, and he lied when it was to his very obvious disadvantage. He lied when there was absolutely no point to lying — indeed, like climbing Mt. Everest, when it took enormous effort and real planning to lie. He lied just for the fun of it, and if you saw him do it enough, you realized what that little smirk on his greasy, chicken-fried mug actually was: Orgasm. Bill Clinton got off on lying. That’s why he did it. Every press conference the man ever did was frottage.

Severian, “Party like it’s 1999”, Founding Questions, 2022-01-13.

September 19, 2025

QotD: The sub-generations of Generation X

… “Gen X” is actually a misnomer, as there are at least three distinct subgroups. There’s the very earliest Xers, the guys who were in high school in the late 1970s. They often get lumped in with the Baby Boomers, too, though they’re as different from the Boomers as they are from us, the “mid” Xers. Think Wooderson from Dazed and Confused. Brian Niemeier calls them “Generation Jones”, and while I don’t like that tag I don’t know what else to call them (except maybe “Woodersons”), so roll with it.

Then there’s the group that was in high school in the late 1980s. I squeak into this group (barely). We’re the mid-Xers. The real “grunge” generation. If Dazed and Confused is a pretty decent late-90s approximation of late-70s high school kids, then the best description I can give you of a “grunge” kid is the movie Deadpool. Made in 2016, by guys who were born in the mid-1970s. That’s grunge, in a way Kurt Cobain couldn’t even imagine. Fourth wall breaks! Sarcastic asides about the fourth wall breaks! Profanity! Masturbation jokes! And snark, snark, snark — unrelenting snark, about everything, all the time. Every second of that movie screams “I can’t believe you fags are amused by this, but since you obviously do, here’s lots more! Choke on it!!!”

The writers obviously wanted to work on Buffy the Vampire Slayer, but Joss Whedon was too cool for them — imagine the twisted psyche of a person who thinks Joss Whedon is cool — and Sarah Michelle Gellar just laughed at them, so they made Deadpool out of spite.

Then there’s the late Xers. They were in high school in the late 1990s, which is why us oldsters call them “Millennials” (as the term is now used, it seems to mean “those born around 2000”, i.e. the generation just now getting out of college. We took it to mean “those who were just getting out of college around the turn of the century”). Obviously I use the Internet. I’m using it now, but I’m not on the internet, and I’m certainly not an Internet Person. The very late Xers are Internet People. The very first Internet People; they invented the concept of Internet People. Mark Zuckerberg (born 1984) is a late Xer. The people behind Twitter (Jack Dorsey born 1976; Biz Stone 1974; Evan Williams 1972) are mid-Xers; they were ahead of the curve.

Severian, “Addendum to Previous”, Founding Questions, 2022-02-24.

September 5, 2025

QotD: Hillary Clinton in the White House

As some of you know, I was the Air Force Military Aide for Bill Clinton, lived in the White House, traveled everywhere they traveled, and carried the “nuclear football”. As such, I was always in close proximity to both Bill and Hill.

Among the military who served in the White House and the professional White House staff, the Clinton administration was infamously known for its lack of professionalism and courtesy, though few ever spoke about it. But when it came to rudeness, it was Hillary Clinton who was the most feared person in the administration. She set the tone. From the very first day in my assignment.

When I first arrived to work in the White House, my predecessor warned me. “You can get away with pissing off Bill but if you make her mad, she’ll rip your heart out.” I heeded those words. I did make him mad a few times, but I never really pissed her off. I knew the ramifications. I learned very quickly that the administration’s day-to-day character, whether inside or outside of DC, depended solely on the presence or absence of Hillary. Her reputation preceded her. We used to say that when Hillary was gone, it was a frat party. When she was home, it was Schindler’s List.

In my first few days on the job, and remember I essentially lived there, I realized there were different rules for Hillary. She instructed the senior staff, including me, that she didn’t want to be forced to encounter us. We were instructed that “whenever Mrs. Clinton is moving through the halls, be as inconspicuous as possible”. She did not want to see “staff” and be forced to “interact” with anyone. No matter their position in the building. Many a time, I’d see mature, professional adults, working in the most important building in the world, scurrying into office doorways to escape Hillary’s line of sight. I’d hear whispering, “She’s coming, she’s coming!” I could be walking down a West Wing hallway, midday, busier than hell, people doing the administration’s work whether in the press office, medical unit, wherever. She’d walk in and they’d scatter. She was the Nazi schoolmarm and the rest of us were expected to hide as though we were kids in trouble. I wasn’t a kid, I was a professional officer and pilot. I said “I’m not doing that”.

There was also a period of time when she attempted to ban military uniforms in the White House. It was the reelection year of 1996, and she was trying to craft the narrative that the military was not a priority in the Clinton administration. As a military aide, carrying the football, and working closely with the Secret Service, I objected to that. It simply wasn’t a matter of her political agenda; it was national security. If the balloon went up, the Secret Service would need to find me as quickly as possible. Seconds matter. Finding the aide in military uniform made complete sense. Besides, what commander in chief wouldn’t want to advertise his leadership and command? She finally relented because the Secret Service weighed in.

The Clintons are corrupt beyond words. Hillary is evil, vindictive, and profane. Hillary is a bitch.

Buzz Patterson, Twitter, 2025-05-17.

August 12, 2025

AOL to shut down its last dial-up access: dozens to be inconvenienced

James Lileks on the end-of-era announcement from AOL — and I can’t recall the last time I thought of that company — that they’ll be eliminating the last of their dial-up internet access accounts:

New tech: shiny today, tarnished tomorrow. Everything that was once bright and brilliant now stamps its walker towards the exit door. The headlines wave goodbye: Last telegram office in the US shut down.

Last phone booth in New York is decommissioned. The latest: AOL to shut off its landline customers.

You’d think this would be news on the level of “homing pigeon trainer employment hits record lows”.

Who uses dialup? Yahoo, which now owns the AOL brand, says that the user base is in the “low thousands”, which suggests that some people forgot to turn off autopay in 2005. What does AOL do today? The usual basket of dross and chum. A website that offers “trending videos” — gosh, don’t know where else you’d find those — and a lot of news stories, supplied by Yahoo, and its … numberless army of journalists, I guess.

It’s a legacy brand for people who want to slide into the internet like comfy slippers they left under the desk. And that’s fine. Facebook serves the same function. It’s a place to start, a home base. A familiar window out which we gaze daily. We all have them. But let us not get nostalgic for AOL and the early days of the internet. Some people, of course, love to talk about the pioneer days, and how it required some technical know-how:

Well, we didn’t have those fancy little pre-made modems like you got in the 90s, so we had to get a little matchbox and fill it up with a certain kind of specially-bred insect that sang a note at a particular pitch when exposed to electrical current. So you’d crank up the generator and put the little alligator clips on the box and hold the box up to the phone while you entered your user name in Morse code by pushing on the hang-up buttons, and then you had to shake the box so the insect singing would modulate. Took about an hour, but then you’d be “On the Line”, as we said, and you could go to a Usenet group and call people Nazis. Kids today, they can call someone a Nazi without lifting a finger.

August 11, 2025

Smug Canadian boomer autohagiography rightly antagonizes the under-35s

Fortissax had an argument with one of his readers over a smug, self-congratulating meme about how wonderful Canada was in the 1990s and early 2000s:

What we lived through long before Trudeau was the Shattering, the breakdown of Canada’s social cohesion, driven by left-liberalism with communist characteristics applied to race, ethnicity, sex, and gender, and punitive almost exclusively toward visibly White men. My generation, those millennials born on the cusp of Gen Z, saw post-national Canada take shape not in the comfortable suburban rings of the GTA or the posh boroughs of Outremont and Westmount, but in self-segregated, ghettoised enclaves of immigrants whose parents never integrated and were never required to.

Memes like that are dishonest because they feed a false memory. The 2000s were not normal. Wages were stagnant, housing was already an asset bubble, and immigration was still flooding in under a policy that explicitly forbade assimilation. Brian Mulroney had enshrined multiculturalism into law in 1988. Quebec alone resisted, carving out the right to limit immigration under the 1992 Quebec–Canada Accord. After Chrétien, Stephen Harper brought in three million immigrants, primarily from China, India, and the Philippines in that order.

The Don Cherry conservatives of that era were Bush lite. They were rootless, cut off from their history, their identities manufactured from the top down since the days of Lester B. Pearson. They conserved nothing. For Canadian youth, it was the dawn of a civic religion of wokeness, totalitarian self-policing by striver peers, and the quiet coercion of every institution. My memories of that decade are of constant assault — mental, physical, spiritual — from leftists in power, from encroaching foreigners, and from the cowardice of conservatives.

Your 2000s might have been great. For us, they were communist struggle sessions. In 2009 we were pulled from class to watch the inauguration of Barack Obama, a foreign president, as a historic moment for civil rights. Our schools excluded us while granting space to every group under the sun: LGBT safe spaces and cultural clubs for Italians, Jamaicans, Jews, Indians, Indigenous, Balkaners, Greeks, Slavs, Portuguese, Quebecois, Iroquois, Pakistanis — every culture celebrated except our own. Anglo-Quebecers and Anglo-Canadians got nothing but an Irish club, closely monitored for “white supremacy” and “racism” by the HR grandmas of the gyno-gerontocracy of English Montreal. Students self-segregated, sitting at different cafeteria tables and smoking at different bus shelters. At Vanier, Dawson, and John Abbott College, these divisions were institutionalised. I remember walking into the atrium of Dawson, my first post-secondary experience, greeted by a wigger rolling a joint while a Jamaican beatboxed to Soulja Boy.

We became amateur anthropologists out of necessity, forced to navigate a nationwide cosmopolitan experiment from birth. We learned the distinctions between squabbling southeastern Europeans of the former Yugoslavia, and we did not care if Kosovo was Serbia or whether Romanians and Albanians were Slavic; they all acted the same way. We learned the divides within South Asia, the rivalries between Hindutva and Khalistani, the differences between a Punjabi, a Gujarati, a Telugu, a Pakistani, a Hong Konger, a mainlander, and a Taiwanese. We know the shades of Caribbean identity, the factions of the Middle East, and the intricacies of North African identity. We should never have needed to know these things, but we do.

For us, childhood in this cesspit was the seedbed of radicalism. We never knew an era when contact with foreigners was limited to sampling food at Loblaws. All we know is being surrounded by those who hate us, governed by a state that wants to erase us, with no healthcare, no homes, no jobs that are not contested by foreigners, and no money to start families.

January 2, 2025

QotD: Sincerity

“… in the ’90s, the human spirit was alive and free. And that’s the vibe that resonates with me.”

This is what the French call le horse pucky. If we may be so bold as to speak of “the human spirit” — which is pretty heavy for a column starting with a professional wrestler — the 90s killed it stone cold dead. The human spirit can flourish in the most awful situations, but one indispensable requirement is: Sincerity. You just can’t be snarky about the “Ode to Joy” or ironic about the Sistine Chapel. If you do, then there really is no difference between Beethoven and MC Funetik Spelyn, nothing to choose between Michelangelo and a dog turd on the sidewalk — someone placed them there intentionally, which is the only distinguishing characteristic of “art” possible in a world overrun by Postmodernists and Deconstructionists.

Severian, “Why the 90s Was the Worst Decade Ever”, Rotten Chestnuts, 2021-07-04.