Extra Credits

Published on 14 Oct 2018

Credit to Alisa Bishop for her art on this series: http://www.alisabishop.com/

Quantum computing isn’t a replacement for classical computing … yet. Quantum decoherence happens when anything gets in the way of a qubit’s job, so sterile low-temperature environments are an absolute necessity.

A tremendous thank-you to Alexander Tamas, the “mystery patron” who made this series possible. We finally found room in our busy production schedule to create and air this series alongside our regularly scheduled, patron-approved Extra History videos. A huge thank you to the multiple guest artists we got to work with, to Matt Krol for his skillful wrangling of the production schedule and keeping everyone happy, and to our Patreon supporters for your patience and support.

Join us on Patreon! http://bit.ly/EHPatreon

October 17, 2018

Quantum Computing – Decoherence – Extra History – #5

October 10, 2018

Quantum Computing – Spooky Action at a Distance – Extra History – #4

Extra Credits

Published on 7 Oct 2018

What happens when we can’t link physical cause and effect between two actions? Well, quantum bits (or qubits) do this all the time. Let’s look into how quantum entanglement can be used in computing.

Credit to Alisa Bishop for her art on this series: http://www.alisabishop.com/

A tremendous thank-you to Alexander Tamas, the “mystery patron” who made this series possible. We finally found room in our busy production schedule to create and air this series alongside our regularly scheduled, patron-approved Extra History videos. A huge thank you to the multiple guest artists we got to work with, to Matt Krol for his skillful wrangling of the production schedule and keeping everyone happy, and to our Patreon supporters for your patience and support.

Support us on Patreon! http://bit.ly/EHPatreon

QotD: The first time ESR changed the world

I think it was at the 1983 Usenix/UniForum conference (there is an outside possibility that I’m off by a year and it was ’84, which I will ignore in the remainder of this report). I was just a random young programmer then, sent to the conference as a reward by the company for which I was the house Unix guru at the time (my last regular job). More or less by chance, I walked into the meeting where the leaders of IETF were meeting to finalize the design of Internet DNS.

When I walked in, the crowd in that room was all set to approve a policy architecture that would have abolished the functional domains (.com, .net, .org, .mil, .gov) in favor of a purely geographic system. There’d be a .us domain, state-level ones under that, city and county and municipal ones under that, and hostnames some levels down. All very tidy and predictable, but I saw a problem.

I raised a hand tentatively. “Um,” I said, “what happens when people move?”

There was a long, stunned pause. Then a very polite but intense argument broke out. Most of the room on one side, me and one other guy on the other.

OK, I can see you boggling out there, you in your world of laptops and smartphones and WiFi. You take for granted that computers are mobile. You may have one in your pocket right now. Dude, it was 1983. 1983. The personal computers of the day barely existed; they were primitive toys that serious programmers mostly looked down on, and not without reason. Connecting them to the nascent Internet would have been ludicrous, impossible; they lacked the processing power to handle it even if the hardware had existed, which it didn’t yet. Mainframes and minicomputers ruled the earth, stolidly immobile in glass-fronted rooms with raised floors.

So no, it wasn’t crazy that the entire top echelon of IETF could be blindsided with that question by a twentysomething smartaleck kid who happened to have bought one of the first three IBM PCs to reach the East Coast. The gist of my argument was that (a) people were gonna move, and (b) because we didn’t really know what the future would be like, we should be prescribing as much mechanism and as little policy as we could. That is, we shouldn’t try to kill off the functional domains, we should allow both functional and geographical ones to coexist and let the market sort out what it wanted. To their eternal credit, they didn’t kick me out of the room for being an asshole when I actually declaimed the phrase “Let a thousand flowers bloom!”.

[…]

The majority counter, at first, was basically “But that would be chaos!” They were right, of course. But I was right too. The logic of my position was unassailable, really, and people started coming around fairly quickly. It was all done in less than 90 minutes. And that’s why I like to joke that the domain-name gold rush and the ensuing bumptious anarchy in the Internet’s host-naming system is all my fault.

It’s not true, really. It isn’t enough that my argument was correct on the merits; for the outcome we got, the IETF had to be willing to let a n00b who’d never been part of their process upset their conceptual applecart at a meeting that I think was supposed to be mainly a formality ratifying decisions that had already been made in working papers. I give them much more credit for that than I’ll ever claim for being the n00b in question, and I’ve emphasized that every time I’ve told this story.

Eric S. Raymond, “Eminent Domains: The First Time I Changed History”, Armed and Dangerous, 2010-09-11.

October 8, 2018

QotD: The closed-source software dystopia we barely avoided

Thought experiment: imagine a future in which everybody takes for granted that all software outside a few toy projects in academia will be closed source controlled by managerial elites, computers are unhackable sealed boxes, communications protocols are opaque and locked down, and any use of computer-assisted technology requires layers of permissions that (in effect) mean digital information flow is utterly controlled by those with political and legal master keys. What kind of society do you suppose eventually issues from that?

Remember Trusted Computing and Palladium and crypto-export restrictions? RMS and Linus Torvalds and John Gilmore and I and a few score other hackers aborted that future before it was born, by using our leverage as engineers and mentors of engineers to change the ground of debate. The entire hacker culture at the time was certainly less than 5% of the population, by orders of magnitude.

And we may have mainstreamed open source just in time. In an attempt to defend their failing business model, the MPAA/RIAA axis of evil spent years pushing for digital “rights” management so pervasively baked into personal-computer hardware by regulatory fiat that those would have become unhackable. Large closed-source software producers had no problem with this, as it would have scratched their backs too. In retrospect, I think it was only the creation of a pro-open-source constituency with lots of money and political clout that prevented this.

Did we bend the trajectory of society? Yes. Yes, I think we did. It wasn’t a given that we’d get a future in which any random person could have a website and a blog, you know. It wasn’t even given that we’d have an Internet that anyone could hook up to without permission. And I’m pretty sure that if the political class had understood the implications of what we were actually doing, they’d have insisted on more centralized control. ~For the public good and the children, don’t you know.~

So, yes, sometimes very tiny groups can change society in visibly large ways on a short timescale. I’ve been there when it was done; once or twice I’ve been the instrument of change myself.

Eric S. Raymond, “Engineering history”, Armed and Dangerous, 2010-09-12.

September 27, 2018

Mind Your Business #4: Free the Unikrn

Foundation for Economic Education

Published on 25 Sep 2018

Forget about slot machines, the future of gaming is virtual reality! In this episode of Mind Your Business, Andrew Heaton is teaming up with entrepreneur Rahul Sood to learn all about esports, safe and legal online betting, and the global community that is surging behind organized competitive video gaming.

September 24, 2018

Verity Stob on early GUI experiences

“Verity Stob” began writing about technology issues three decades back. She reminisces about some things that have changed and others that are still irritatingly the same:

It’s 30 years since .EXE Magazine carried the first Stob column; this is its ~~pearl~~ Perl anniversary. Rereading article #1, a spoof self-tester in the Cosmo style, I was struck by how distant the world it invoked seemed. For example:

Your program requires a disk to have been put in the floppy drive, but it hasn’t. What happens next?

The original answers, such as:

e) the program crashes out into DOS, leaving dozens of files open

would now need to be supplemented by

f) what’s ‘the floppy drive’ already, Grandma? And while you’re at it, what is DOS? Part of some sort of DOS and DON’TS list?

I say: sufficient excuse to present some Then and Now comparisons with those primordial days of programming, to show how much things have changed – or not.

1988: Drag-and-drop was a showy-offy but not-quite-satisfactory technology.

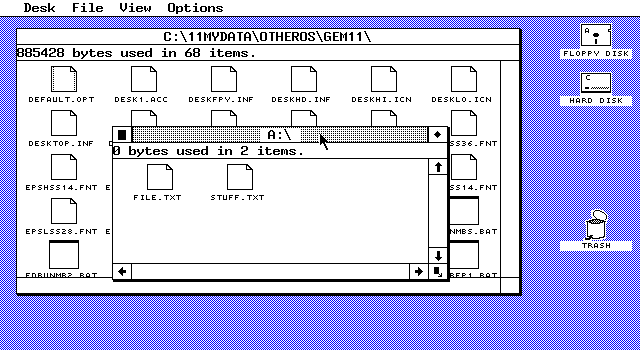

My first DnD encounter was by proxy. In about 1985 my then boss, a wise and cynical old Brummie engineer, attended a promotional demo, free wine and nibbles, of an exciting new WIMP technology called GEM. Part of the demo was to demonstrate the use of the on-screen trash icon for deleting files.

According to Graham’s gleeful report, he stuck up his hand at this point. “What happens if you drag the clock into the wastepaper basket?”

The answer turned out to be: the machine crashed hard on its arse, and it needed about 10 minutes’ embarrassed fiddling to coax it back onto its feet. At which point Graham’s arm went up again. “What happens if you drop the wastepaper basket into the clock?”

Drag-ons ‘n’ drag-offs

GEM may have been primitive, but it was at least consistent.

The point became moot a few months later, when Apple won a look-and-feel lawsuit and banned the GEM trashcan outright.

2018: Not that much has changed. Windows Explorer users: how often has your mouse finger proved insufficiently strong to grasp the file? And you have accidentally dropped the document you wanted into a deep thicket of nested server directories?

Or how about touch interface DnD on a phone, where your skimming pinkie exactly masks from view the dragged thing?

Well then.

However, I do confess admiration for this JavaScript library that aims to make dragging and dropping accessible to the blind. Can’t fault its ambition.

September 13, 2018

Mind Your Business Ep. 2: Aceable in the Hole

Foundation for Economic Education

Published on 11 Sep 2018

Believe it or not, parallel parking is not an impossible task. Meet Blake Garrett, the entrepreneur who is using VR to teach people how to drive, without actually getting behind the wheel.

____________

Produced & Directed by Michael Angelo Zervos

Executive Produced by Sean W. Malone

Hosted by Andrew Heaton

Original Music by Ben B. Goss

Featuring Blake Garrett

August 16, 2018

In praise of Donald Knuth

David Warren sings the praises of the inventor of “TeX”:

Among my heroes in that trade is a man now octogenarian, a certain Donald Knuth, author of the multi-volumed Art of Computer Programming, and of the great mass of algorithms behind the “TeX” composing system. A life-long opponent of patenting for software, and still not on email, he is one of the finer products of the Whole Earth Catalogue mindset of that era, though as a devoted Christian, he had it from older sources. (The mindset of: forget politics and do-it-yerself.)

Perfesser Knuth’s life journey was somewhat altered when a publisher presented him with the galley proofs for a reissue of one of his earlier volumes. They were, compared to the pages of the original hot-metal edition, a dog’s breakfast. In particular, even when technically correct, the mathematical formulae appeared to have been set by monkeys. He resolved to “make the world a better place” by doing something about this.

Knowing (pronounce the “k” as we do in this author’s surname) that computers can do many things that humans can’t — or can’t within one lifetime — he set about designing the computer processes to calculate beautiful letter and word spacings, line-breaks, line spacings, marginal proportions and such. He understood that civilization depends on literacy, literacy on legibility, and legibility on elegance. Ruthlessly, he recognized that things like “widowed” and “orphaned” lines of text are moral evils, and discovered algorithms that could exterminate them by complex anticipation. Too, he contributed to the counter-revolution by which the letters themselves could be drawn not pixelated.

I will quickly lose my few remaining readers if I go into the details. But here was a man (and still is) who discerned that nature herself is built on aesthetic principles, which men can investigate and apply. It is when something is ugly that we can know that it is wrong. Mathematicians, like poets and other artists, can embody the Faith at the root of this.

To my mind, or I would rather say K-nowledge, there is nothing wrong with technology, per se. We can often do things better with new tools. But we must be guided by the uncompromising demands of Beauty. Everything must be made as beautiful as we can make it: there must be no wavering, no surrender. All that is ugly must be consigned to Hell.

June 5, 2018

The Internet-of-Things as “Moore’s Revenge”

El Reg’s Mark Pesce on the end of Moore’s Law and the start of Moore’s Revenge:

… the cost of making a device “smart” – whether that means, aware, intelligent, connected, or something else altogether – is now trivial. We’re therefore quickly transitioning from the Death of Moore’s Law into the era of Moore’s Revenge – where pretty much every manufactured object has a chip in it.

This is going to change the whole world, and it’s going to begin with a fundamental reorientation of IT, away from the “pinnacle” desktops and servers, toward the “smart dust” everywhere in the world: collecting data, providing services – and offering up a near infinity of attack surfaces. Dumb is often harder to hack than smart, but – as we saw last month in the Z-Wave attack that impacted hundreds of millions of devices – once you’ve got a way in, enormous damage can result.

The focus on security will produce new costs for businesses – and it will be on IT to ensure those costs don’t exceed the benefits of this massively chipped-and-connected world. It’ll be a close-run thing.

It’s also likely to be a world where nothing works precisely as planned. With so much autonomy embedded in our environment, the likelihood of unintended consequences amplifying into something unexpected becomes nearly guaranteed.

We may think the world is weird today, but once hundreds of billions of marginally intelligent and minimally autonomous systems start to have a go, that weirdness will begin to arc upwards exponentially.

May 12, 2018

QotD: Women in I.T.

… any woman who wants to be in a STEM field should be able to get as far as talent, hard work, and desire to succeed will take her, without facing artificial barriers erected by prejudice or other factors. If there are women who dream of being in STEM but have felt themselves driven off that path, the system is failing them. And the system is failing itself, too; talent is not so common that we can afford to waste it.

Now I’m going to refocus on computing, because that’s what I know best and I think it exhibits the problems that keep women out of STEM fields in an extreme form. There’s a lot of political talk that the tiny and decreasing number of women in computing is a result of sexism and prejudice that has to be remedied with measures ranging from sensitivity training up through admission and hiring quotas. This talk is lazy, stupid, wrong, and prevents correct diagnosis of much more serious problems.

I don’t mean to deny that there is still prejudice against women lurking in dark corners of the field. But I’ve known dozens of women in computing who wouldn’t have been shy about telling me if they were running into it, and not one has ever reported it to me as a primary problem. The problems they did report were much worse. They centered on one thing: women, in general, are not willing to eat the kind of shit that men will swallow to work in this field.

Now let’s talk about death marches, mandatory uncompensated overtime, the beeper on the belt, and having no life. Men accept these conditions because they’re easily hooked into a monomaniacal, warrior-ethic way of thinking in which achievement of the mission is everything. Women, not so much. Much sooner than a man would, a woman will ask: “Why, exactly, am I putting up with this?”

Correspondingly, young women in computing-related majors show a tendency to bail out that rises directly with their comprehension of what their working life is actually going to be like. Biology is directly implicated here. Women have short fertile periods, and even if they don’t consciously intend to have children their instincts tell them they don’t have the option young men do to piss away years hunting mammoths that aren’t there.

There are other issues, too, like female unwillingness to put up with working environments full of the shadow-autist types that gravitate to programming. But I think those are minor by comparison, too. If we really want to fix the problem of too few women in computing, we need to ask some much harder questions about how the field treats everyone in it.

Eric S. Raymond, “Women in computing: first, get the problem right”, Armed and Dangerous, 2010-07-15.

March 24, 2018

James May doesn’t trust Sat Navs | Q&A extras | HeadSqueeze

BBC Earth Lab

Published on 27 Sep 2013

Don’t trust the Sat Nav! Speaking from experience, James thinks we shouldn’t blindly trust a machine. Get a map!

February 27, 2018

The notion of “uploading” your consciousness

Skeptic author Michael Shermer pours cold water on the dreams and hopes of Transhumanists, Cryonicists, Extropians, and Technological Singularity proponents everywhere:

It’s a myth that people live twice as long today as in centuries past. People lived into their 80s and 90s historically, just not very many of them. What modern science, technology, medicine, and public health have done is enable more of us to reach the upper ceiling of our maximum lifespan, but no one will live beyond ~120 years unless there are major breakthroughs.

We are nowhere near the genetic and cellular breakthroughs needed to break through the upper ceiling, although it is noteworthy that companies like Google’s Calico and individuals like Aubrey de Grey are working on the problem of ageing, which they treat as an engineering problem. Good. But instead of aiming for 200, 500, or 1000 years, try to solve very specific problems like cancer, Alzheimer’s and other debilitating diseases.

Transhumanists, Cryonicists, Extropianists, and Singularity proponents are pro-science and technology and I support their efforts but extending life through technologies like mind-uploading not only cannot be accomplished anytime soon (centuries at the earliest), it can’t even do what its proponents claim: a copy of your connectome (the analogue to your genome that represents all of your memories) is just that, a copy. It is not you. This leads me to a discussion of…

The nature of the self or soul. The connectome (the scientific version of the soul) consists of all of your statically-stored memories. First, there is no fixed set of memories that represents “me” or the self, as those memories are always changing. If I were copied today, at age 63, my memories of when I was, say, 30, are not the same as they were when I was 50 or 40 or even 30 as those memories were fresh in my mind. And, you are not just your memories (your MEMself). You are also your point-of-view self (POVself), the you looking out through your eyes at the world. There is a continuity from one day to the next despite consciousness being interrupted by sleep (or general anaesthesia), but if we were to copy your connectome now through a sophisticated fMRI machine and upload it into a computer and turn it on, your POVself would not suddenly jump from your brain into the computer. It would just be a copy of you. Religions have the same problem. Your MEMself and POVself would still be dead and so a “soul” in heaven would only be a copy, not you.

Whether or not there is an afterlife, we live in this life. Therefore what we do here and now matters whether or not there is a hereafter. How can we live a meaningful and purposeful life? That’s my final chapter, ending with a perspective that our influence continues on indefinitely into the future no matter how long we live, and our species is immortal in the sense that our genes continue indefinitely into the future, making it all the more likely our species will not go extinct once we colonize the moon and Mars so that we become a multi-planetary species.

February 21, 2018

Transistors – The Invention That Changed The World

Real Engineering

Published on 12 Sep 2016

February 15, 2018

QotD: Computer models

How can one be certain about outcomes in a complex system that we’re not really all that good at modeling? Anyone who’s familiar with the history of macroeconomic modeling in the 1960s and 1970s will be tempted to answer “Umm, we can’t.” Economists thought that the explosion of data and increasingly sophisticated theory was going to allow them to produce reasonably precise forecasts of what would happen in the economy. Enormous mental effort and not a few careers were invested in building out these models. And then the whole effort was basically abandoned, because the models failed to outperform mindless trend extrapolation — or as Kevin Hassett once put it, “a ruler and a pencil.”

Computers are better now, but the problem was not really the computers; it was that the variables were too many, and the underlying processes not understood nearly as well as economists had hoped. Economists can’t run experiments in which they change one variable at a time. Indeed, they don’t even know what all the variables are.

This meant that they were stuck guessing from observational data of a system that was constantly changing. They could make some pretty good guesses from that data, but when you built a model based on those guesses, it didn’t work. So economists tweaked the models, and they still didn’t work. More tweaking, more not working.

Eventually it became clear that there was no way to make them work given the current state of knowledge. In some sense the “data” being modeled was not pure economic data, but rather the opinions of the tweaking economists about what was going to happen in the future. It was more efficient just to ask them what they thought was going to happen. People still use models, of course, but only the unflappable true believers place great weight on their predictive ability.

Megan McArdle, “Global-Warming Alarmists, You’re Doing It Wrong”, Bloomberg View, 2016-06-01.

December 12, 2017

Why Hold Music Sounds Worse Now

Tom Scott

Published on 27 Nov 2017

It’s not your imagination; hold music on phones really did sound better in the old days. Here’s why, as we talk about old telephone exchanges and audio compression.

Thanks to the Milton Keynes Museum, and their Connected Earth gallery: http://www.mkmuseum.org.uk/ – they’re also on Twitter as @mkmuseum, and on Facebook: https://www.facebook.com/mkmuseum/