… let’s start with the single largest input for our entire process, measured in either mass or volume – quite literally the largest input resource by an order of magnitude. That’s right, it’s … Trees

The reader may be pardoned for having gotten to this point expecting to begin with exciting furnaces, bellowing roaring flames and melting all and sundry. The thing is, all of that energy has to come from somewhere and that somewhere is, by and large, wood. Now it is absolutely true that there are other common fuels which were probably frequently experimented with and sometimes used, but don’t seem to have been used widely. Manure, used as cooking and heating fuel in many areas of the world where trees were scarce, doesn’t – to my understanding – reach sufficient temperatures for use in iron-working. Peat seems to have similar problems, although my understanding is it can be reduced to charcoal like wood; I haven’t seen any clear evidence this was often done, although one assumes it must have been tried.

Instead, the fuel I gather most people assume was used (to the point that it is what many video-game crafting systems set for) was coal. The problem with coal is that it has to go through a process of coking in order to create a pure mass of carbon (called “coke”) which is suitable for use. Without that conversion, the coal itself not only does not burn hot enough, but is also apt to contain lots of sulfur, which will ruin the metal being made with it, as the iron will absorb the sulfur and produce an inferior alloy (sulfur makes the metal brittle, causing it to break rather than bend, and makes it harder to weld too). Indeed, the reason we know that the Romans in Britain experimented with using local coal this way is that analysis of iron produced at Wilderspool, Cheshire during the Roman period revealed the presence of sulfur in the metal which was likely from the coal on the site.

We have records of early experiments with methods of coking coal in Europe beginning in the late 1500s, but the first truly successful effort was that of Abraham Darby in 1709. Prior to that, it seems that the use of coal in iron-production in Europe was minimal (though coal might be used as a fuel for other things like cooking and home heating). In China, development was more rapid and there is evidence that iron-working was being done with coke as early as the eleventh century. But apart from that, by and large the fuel to create all of the heat we’re going to need is going to come from trees.

And, as we’ll see, really quite a lot of trees. Indeed, a staggering number of trees, if iron production is to be done on a major scale. The good news is we needn’t be too picky about what trees we use; ancient writers go on at length about the very specific best woods for ships, spears, shields, or pikes (fir, cornel, poplar or willow, and ash respectively, for the curious), but are far less picky about fuel-woods. Pinewood seems to have been a consistent preference; both Pliny (NH 33.30) and Theophrastus (HP 5.9.1-3) note it as the easiest to use and Buckwald (op cit.) notes its use in medieval Scandinavia as well. But we are also told that chestnut and fir work well, and we see a fair bit of birch in the archaeological record. So we have our trees, more or less.

Bret Devereaux, “Iron, How Did They Make It? Part II, Trees for Blooms”, A Collection of Unmitigated Pedantry, 2020-09-25.

September 12, 2023

QotD: The largest input for producing iron in pre-industrial societies

September 8, 2023

QotD: Rents and taxes in pre-modern societies

In most ways […] we can treat rent and taxes together because their economic impacts are actually pretty similar: they force the farmer to farm more in order to supply some of his production to people who are not the farming household.

There are two major ways this can work: in kind and in coin and they have rather different implications. The oldest – and in pre-modern societies, by far the most common – form of rent/tax extraction is extraction in kind, where the farmer pays their rents and taxes with agricultural products directly. Since grain (threshed and winnowed) is a compact, relatively transportable commodity (that is, one sack of grain is as good as the next, in theory), it is ideal for these sorts of transactions, although perusing medieval manorial contracts shows a bewildering array of payments in all sorts of agricultural goods. In some cases, payment in kind might also come in the form of labor, typically called corvée labor, either on public works or even just farming on lands owned by the state.

The advantage of extraction in kind is that it is simple and the initial overhead is low. The state or large landholders can use the agricultural goods they bring in in rents and taxes to directly sustain specialists: soldiers, craftsmen, servants, and so on. Of course the problem is that this system makes the state (or the large landholder) responsible for moving, storing and cataloging all of those agricultural goods. We get some sense of how much of a burden this can be from the prominence of what seem to be records of these sorts of transactions in the surviving writing from the Bronze Age Near East (although I should note that many archaeologists working on the ancient Near Eastern economy are pushing for a somewhat larger, if not very large, space for market interactions outside of the “temple economy” model which has dominated the field for quite some time). This creates a “catch” we’ll get back to: taxation in kind is easy to set up and easier to maintain when infrastructure and administration are poor, but in the long term it involves heavier administrative burdens and makes it harder to move tax revenues over long distances.

Taxation in coin offers potentially greater efficiency, but requires more particular conditions to set up and maintain. First, of course, you have to have coinage. That is not a given! Many of the social interactions and mechanics of farming I’ve presented here stayed fairly constant (but consult your local primary sources for variations!) from the beginnings of written historical records (c. 3,400 BC in Mesopotamia; varies place to place) down to at least the second agricultural revolution (c. 1700 AD in Europe; later elsewhere) if not the industrial revolution (c. 1800 AD). But money (here meaning coinage) only appears in Anatolia in the seventh century BC (and was probably independently invented in China in the fourth century BC). Prior to that, we see that big transactions, like long-distance trade in luxuries, might be done with standard weights of bullion, but that was hardly practical for a farmer to be paying their taxes in.

Coinage actually takes even longer to really influence these systems. The first place coinage gets used is where bullion was used – as exchange for big long-distance trade transactions. Indeed, coinage seems to have started essentially as pre-measured bullion – “here is a hunk of silver, stamped by the king to affirm that it is exactly one shekel of weight”. Which is why, by the by, so many “money words” (pounds, talents, shekels, drachmae, etc.) are actually units of weight. But if you want to collect taxes in money, you need the small farmers to have money. Which means you need markets for them to sell their grain for money and then those merchants need to be able to sell that grain themselves for money, which means you need urban bread-eaters who are buying bread with money, which means those urban workers need to be paid in money. And you can only get any of these people to use money if they can exchange that money for things they want, which creates a nasty first-mover problem.

We refer to that entire process as monetization – when I talk about economies being “monetized” or “incompletely monetized” that’s what I mean: how completely has the use of money penetrated through this society. It isn’t a one-way street, either. Early and High Imperial Rome seem to have been more completely monetized than the Late Roman Western Empire or the early Middle Ages (though monetization increases rapidly in the later Middle Ages).

Extraction, paradoxically, can solve the first-mover problem in monetization, by making the state the first mover. If the state insists on raising taxes in money, it forces the farmers to sell their grain for money to pay the tax-man; the state can then take that money and use it to pay soldiers (almost always the largest budget-item in an ancient or medieval state budget), who then use the money to buy the grain the farmers sold to the merchants, creating that self-sustaining feedback loop which steadily monetizes the society. For instance, Alexander the Great’s armies – who expected to be paid in coin – seem to have played a major role in monetizing many of the areas they marched through (along with breaking things and killing people; the image of Alexander the Great’s conquests in popular imagination tends to be a lot more sanitized).

Bret Devereaux, “Collections: Bread, How Did They Make It? Part IV: Markets, Merchants and the Tax Man”, A Collection of Unmitigated Pedantry, 2020-08-21.

August 17, 2023

“… the Chinese invented gunpowder and had it for six hundred years, but couldn’t see its military applications and only used it for fireworks”

John Psmith would like to debunk the claim in the headline here:

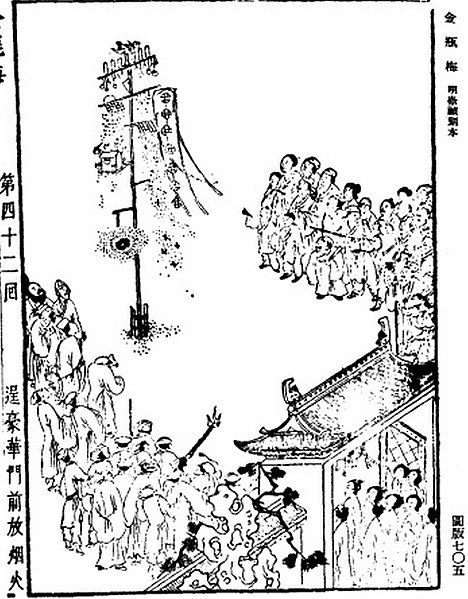

An illustration of a fireworks display from the 1628-1643 edition of the Ming dynasty book Jin Ping Mei.

Reproduced in Joseph Needham (1986). Science and Civilisation in China, Volume 5: Chemistry and Chemical Technology, Part 7: Military Technology: The Gunpowder Epic. Cambridge University Press. Page 142.

There’s an old trope that the Chinese invented gunpowder and had it for six hundred years, but couldn’t see its military applications and only used it for fireworks. I still see this claim made all over the place, which surprises me because it’s more than just wrong, it’s implausible to anybody with any understanding of human nature.

Long before the discovery of gunpowder, the ancient Chinese were adept at the production of toxic smoke for insecticidal, fumigation, and military purposes. Siege engines containing vast pumps and furnaces for smoking out defenders are well attested as early as the 4th century. These preparations often contained lime or arsenic to make them extra nasty, and there’s a good chance that frequent use of the latter substance was what enabled early recognition of the properties of saltpetre, since arsenic can heighten the incendiary effects of potassium nitrate.

By the 9th century, there are Taoist alchemical manuals warning not to combine charcoal, saltpetre, and sulphur, especially in the presence of arsenic. Nevertheless the temptation to burn the stuff was high — saltpetre is effective as a flux in smelting, and can liberate nitric acid, which was of extreme importance to sages pursuing the secret of longevity by dissolving diamonds, religious charms, and body parts into potions. Yes, the quest for the elixir of life brought about the powder that deals death.

And so the Chinese invented gunpowder, and then things immediately began moving very fast. In the early 10th century, we see it used in a primitive flame-thrower. By the year 1000, it’s incorporated into small grenades and into giant barrel bombs lobbed by trebuchets. By the middle of the 13th century, as the Song Dynasty was buckling under the Mongol onslaught, Chinese engineers had figured out that raising the nitrate content of a gunpowder mixture resulted in a much greater explosive effect. Shortly thereafter you begin seeing accounts of truly destructive explosions that bring down city walls or flatten buildings. All of this still at least a hundred years before the first mention of gunpowder in Europe.

Meanwhile, they had also been developing guns. Way back in the 950s (when the gunpowder formula was much weaker, and produced deflagrative sparks and flames rather than true explosions), people had already thought to mount containers of gunpowder onto the ends of spears and shove them in people’s faces. This invention was called the “fire lance”, and it was quickly refined and improved into a single-use, hand-held flamethrower that stuck around until the early 20th century.1 But some other inventive Chinese took the fire lances and made them much bigger, stuck them on tripods, and eventually started filling their mouths with bits of iron, broken pottery, glass, and other shrapnel. This happened right around when the formula for gunpowder was getting less deflagrative and more explosive, and pretty soon somebody put the two together and the cannon was born.

All told it’s about three and a half centuries from the first sage singeing his eyebrows, to guns and cannons dominating the battlefield.2 Along the way what we see is not a gaggle of childlike orientals marvelling over fireworks and unable to conceive of military applications. We also don’t see an omnipotent despotism resisting technological change, or a hidebound bureaucracy maintaining an engineered stagnation. No, what we see is pretty much the opposite of these Western stereotypes of ancient Chinese society. We see a thriving ecosystem of opportunistic inventors and tacticians, striving to outcompete each other and producing a steady pace of technological change far beyond what Medieval Europe could accomplish.

Yet despite all of that, when in 1841 the iron-sided HMS Nemesis sailed into the First Opium War, the Chinese were utterly outclassed. For most of human history, the civilization cradled by the Yellow and the Yangtze was the most advanced on earth, but then in a period of just a century or two it was totally eclipsed by the upstart Europeans. This is the central paradox of the history of Chinese science and technology. So … why did it happen?

1. Needham says he heard of one used by pirates in the South China Sea in the 1920s to set rigging alight on the ships that they boarded.

2. I’ve left out a ton of weird gunpowder-based weaponry and evolutionary dead ends that happened along the way, but Needham’s book does a great job of covering them.

August 15, 2023

QotD: Iron ore processing in pre-industrial societies

Once our ore reaches the surface (or is removed from its open pit) it is not immediately ready for smelting, but has to go through a series of preparatory steps collectively referred to as “dressing” to get the ore ready for its date with the smelter […]

Ore removed from the mine would need to be crushed, with the larger stones pulled out of the mines smashed with heavy hammers (against a rock surface) in order to break them down to a manageable size. The exact size of the ore chunks desired varies based on the metal one is seeking and the quality of the local ore. Ores of precious metals, it seems, were often ground down to powder, but for iron ore it seems like somewhat larger chunks were acceptable. I’ve seen modern experiments with bloomeries […] getting pretty good results from ore chunks about half the size of a fist. Interestingly, Craddock notes that ore-crushing activity at mines was sufficiently intense that archaeologists can spot the tell-tale depressions where the rock surface that provided the “floor” against which the ore was crushed has been worn by repeated use.

Ore might also be washed, that is passed through water to liberate and wash away any lighter waste material. Washing is attested in the ancient world for gold and silver ores (and by Georgius Agricola for the medieval period for the same), but might be used for other ores depending on the country rock to wash away impurities. The simple method of this, sometimes called jigging, consisted of putting the ore in a sieve and shaking it while water passed through, although more complex sluicing systems are known, for instance at the Athenian silver mines at Laurium (note esp. Healy, 144-8 for diagrams); the sluices for washing are sometimes called buddles. Throughout these processes, the ore would also probably be hand-sorted in an effort to separate high-grade ore from low-grade ore.

It’s clear that this mechanical ore preparation was much more intensive for higher-value metals where making sure to be as efficient as possible was a significant concern; gold and silver ores might be crushed, sorted, washed and rewashed before being ground into a powder for the final smelting process. Craddock presents a postulated processing set for copper ore for the Bronze Age Timna mines that goes through a primary crushing, hand-sorted division into three grades, secondary crushing, grinding, a winnowing step for the low-grade ore (either air winnowing or washing) before being blended into the final smelter “charge”.

As far as I can tell, such extensive processing for iron was much less common; in many cases it seems it is hard to be certain because the sources remain so focused on precious metal mining and the later stages of iron-working. Diodorus describes the iron ore on Elba as merely being crushed, roasted and then bloomed (5.13.1) but the description is so brief it is possible that he is leaving out steps (but also, Elba’s iron ore was sufficiently rich that further processing may not have been necessary). In many cases, iron was probably just crushed, sorted and then moved straight to roasting […]

Bret Devereaux, “Iron, How Did They Make It? Part I, Mining”, A Collection of Unmitigated Pedantry, 2020-09-18.

August 11, 2023

QotD: Subsistence versus market-oriented farming in pre-modern societies

Large landholders interacted with the larger number of small farmers (who make up the vast majority of the population, rural or otherwise) by looking to trade access to their capital for the small farmers’ labor. Rather than being structured by market transactions (read: wage labor), this exchange was more commonly shaped by cultural and political forces into a grossly unequal exchange whereby the small farmers gathered around the large estate were essentially the large landholder’s to exploit. Nevertheless, that exploitation and even just the existence of the large landholder served to reorient production away from subsistence and towards surplus, through several different mechanisms.

Remember: in most pre-modern societies, the small farmers are largely self-sufficient. They don’t need very many of the products of the big cities and so – at least initially – the market is a poor mechanism to induce them to produce more. There simply aren’t many things at the market worth the hours of labor necessary to get them – not no things, but just not very many (I do want to stress that; the self-sufficiency of subsistence farmers is often overstated in older scholarship; Erdkamp (2005) is a valuable corrective here). Consequently, doing anything that isn’t farming means somehow forcing subsistence farmers to work more and harder in order to generate the surplus to provide for those people who do the activities which in turn the subsistence farmers might benefit from not at all. But of course we are most often interested in exactly all of those tasks which are not farming (they include, among other things, literacy and the writing of history, along with functionally all of the events that history will commemorate until quite recently) and so the mechanisms by which that surplus is generated matter a great deal.

First, the large landholder’s farm itself existed to support the landholder’s lifestyle rather than his actual subsistence, which meant its production had to be directed towards what we might broadly call “markets” (very broadly understood). Now many ancient and even medieval agricultural writers will extol the value of a big farm that is still self-supporting, with enough basic cereal crops to subsist the labor force, enough grazing area for animals to provide manure and then the rest of the land turned over to intensive cash-cropping. But this was as much for limiting expenses to maximize profits (a sort of mercantilistic maximum-exports/minimum-imports style of thinking) as it was for developing self-sufficiency in a crisis. Note that we (particularly in the United States) tend to think of cash crops as being things other than food – poppies, cotton, tobacco especially. But in many cases, wheat might be the cash crop for a region, especially for societies with lots of urbanism; good wheat land could bring in solid returns […]. The “cash” crop might be grapes (for wine) or olives (mostly for olive oil) or any number of other necessities, depending on what the local conditions best supported (and in some cases, it could be a cash herd too, particularly in areas well-suited to wool production, like parts of medieval Britain).

Second, the exploitation by the large landholder forces the smaller farmers around him to make more intensive use of their labor. Because they are almost always in debt to the fellow with the big farm and because they need to do labor to get access to plow teams, manure, tools, or mills and because the large landholder’s land-ready-for-sharecropping is right there, the large landholder both creates the conditions that impel small farmers to work more land (and thus work more days) than their own small farms do and also creates the conditions where they can farm more intensively (both their own lands and the big farm’s lands, via plow teams, manure, etc.). Of course the large landholder then generally immediately extracts that extra production for his own purposes. […] all of the folks who aren’t small farmers looking to try to get small farmers to work harder than is in their interest in order to generate surplus. In this case, all of that activity funnels back into sustaining the large landholder’s lifestyle (which often takes place in town rather than in the countryside), which in turn supports all sorts of artisans, domestics, crafters and so on.

And so the large landholder needs the small subsistence farmers to provide flexible labor and the small subsistence farmers (to a lesser but still quite real degree) need the large landholder to provide flexibility in capital and work availability and the interaction of both of these groups serves to direct more surplus into activities which are not farming.

Bret Devereaux, “Collections: Bread, How Did They Make It? Part II: Big Farms”, A Collection of Unmitigated Pedantry, 2020-07-31.

July 25, 2023

QotD: Non-free farm labourers in pre-modern agriculture

The third complicated category of non-free laborers is that of workers who had legal control of their persons to some degree but who were required by law and custom to work on a given parcel of land and give some of the proceeds to their landlord. By way of example, under the reign of Diocletian (284-305), in a (failed) effort to reform the tax-system, the main class of Roman tenants, called coloni (lit: “tillers”), were legally prevented from moving off of their estates (so as to ensure that the landlords who were liable for taxes on that land would be in a position to pay). That this change does not seem to have been a massive shift at the time should give some sense of how low the status of these coloni had fallen and just how powerful a landlord might be over their tenants. That system in turn (warning: substantial but necessary simplification incoming) provided the basis for later European serfdom. Serfs were generally tied to the land, being bought and sold with it, with traditional (and hereditary) duties to the owner of the land. They might owe a portion of their produce (like tenants) or a certain amount of labor to be performed on land whose proceeds went directly to the landlord. While serfs generally had more rights (particularly in the protection and self-ownership of their persons) than enslaved persons, they were decidedly non-free (they couldn’t, by law, move away generally) and their condition was often quite poor when compared to even small freeholders. Non-free labor was generally not flexible (the landholder was obliged to support these folks year-round whether they had work to do or not) and so composed the fixed core labor of the large landholder’s holdings.

Bret Devereaux, “Collections: Bread, How Did They Make It? Part II: Big Farms”, A Collection of Unmitigated Pedantry, 2020-07-31.

June 24, 2023

QotD: The plight of miners in pre-industrial societies

Essentially the problem that miners faced was that while mining could be a complex and technical job, the vast majority of the labor involved was largely unskilled manual labor in difficult conditions. Since the technical aspects could be handled by overseers, this left the miners in a situation where their working conditions depended very heavily on the degree to which their labor was scarce.

In the ancient Mediterranean, the clear testimony of the sources is that mining was a low-status occupation, one for enslaved people, criminals and the truly desperate. Being “sent to the mines” is presented, alongside being sent to work in the mills, as a standard terrible punishment for enslaved people who didn’t obey their owners and it is fairly clear in many cases that being sent to the mines was effectively a delayed death sentence. Diodorus Siculus describes mining labor in the gold mines of Egypt this way, in a passage that is fairly representative of the ancient sources on mining labor more generally (3.13.3, trans Oldfather (1935)):

For no leniency or respite of any kind is given to any man who is sick, or maimed, or aged, or in the case of a woman for her weakness, but all without exception are compelled by blows to persevere in their labours, until through ill-treatment they die in the midst of their tortures. Consequently the poor unfortunates believe, because their punishment is so excessively severe, that the future will always be more terrible than the present and therefore look forward to death as more to be desired than life.

It is clear that conditions in Greek and Roman mines were not much better. Examples of chains and fetters – and sometimes human remains still so chained – occur in numerous Greek and Roman mines. Unfortunately our sources are mostly concerned with precious metal mines and those mines also seem to have been the worst sorts of mines to work in, since the long underground shafts and galleries exposed the miners to greater dangers from bad air to mine-collapses. That said, it is hard to imagine that working an open-pit iron mine by hand, while perhaps somewhat safer, was any less back-breaking, miserable toil.

Conditions were not always so bad though, particularly for free miners (being paid a wage) who tended to be treated better, especially where their labor was sorely needed. For instance, a set of rules for the Roman mines at Vipasca, Spain provided for contractors to supply various amenities, including public baths maintained year-round. The labor force at Vipasca was clearly free and these amenities seem to have been a concession to the need to make the life of the workers livable in order to get a sufficient number of them in a relatively sparsely populated part of Spain.

The conditions for miners in medieval Europe seem to have been somewhat better. We see mining communities often setting up their own institutions and occasionally even having their own guilds (for instance, there was a coal-workers’ guild in Liege in the 13th century) or internal regulations. These mining communities, which in large mining operations might become small towns in their own right, seem to have often had some degree of legal privileges when compared to the general rural population (though it should be noted that, as the mines were typically owned by the local lord or state, exemption from taxes was essentially illusory as the lord or king’s cut of the mine’s profits was the taxes). It does seem notable that while conditions in medieval mines were never quite so bad as those in the ancient world, the rapid expansion of mining activity beginning in the 15th century seems to have coincided with a loss of the special status and privileges of earlier medieval European miners and the status associated with the labor declined back down to effectively the bottom of the social spectrum.

(That said, it seems necessary to note that precious metal-mining done by non-free Native American laborers at the order of European colonial states appears to have been every bit as cruel and deadly as mining in the ancient world.)

Bret Devereaux, “Iron, How Did They Make It? Part I, Mining”, A Collection of Unmitigated Pedantry, 2020-09-18.

May 20, 2023

QotD: Alienation

One of Marx’s most famous concepts, “alienation” initially meant “the systemic separation of a worker from the product of his labor”. The result of a craftsman’s labor is directly visible beneath his hands, growing by the day; when he’s done, the shirt (or whatever) sits there before him, fully finished. The factory worker, by contrast, is little more than a machine-tender; he pulls the lever, and the finished article is squirted out somewhere far down the line, automatically, by machine. His “labor” consists of lever-pulling and jam-clearing.

It was a real enough insight into the psychology of factory work, and Marx deserves all the credit he got for it, but “alienation” was even more useful in a broad social context — the separation of man from the cultural products of his society. After all, if capitalism is the mode of production around which society organizes itself, and the products of capitalism are by definition alienated from their producers, then by extension capitalist society must be alienated from itself. Indeed, what could “society” even mean, in a world of lever-pullers and bearing-lubers and jam-clearers?

Again, a profound and important insight into the social conditions of the Industrial Age. Ours is a mechanical, transactional world, one not well-suited to the kind of organism we are. That’s why Marxism and its spacey little brother Nazism are both what Jeffrey Herf calls “reactionary modernism.” The Communists thought they were the endpoint of the Enlightenment; the Nazis rejected it entirely; but both of them were curdled Romantics, in love with Enlightenment science while terrified of that science’s society. Lenin said that Communism was “Soviet power plus electrification”. Goebbels wasn’t that pithy, but “the feudal system plus autobahns” is pretty much what he meant by Nazism, and both boil down to “medieval peasant villages with air conditioning”.

That the one excludes the other — necessarily, comrade, necessarily, in the full Hegelian sense of the word — never occurred to either of them shouldn’t really be held against them, since both of them were determined to freeze the world exactly as it was. Both were so terrified of individuality that they were determined to stamp it out, not realizing that individuality was the only thing that made their fantasy worlds possible. Medieval peasants who were happy being medieval peasants never would’ve invented air conditioning in the first place, nicht wahr?

Severian, “Alienation”, Rotten Chestnuts, 2020-10-29.

May 10, 2023

Feasting at a Medieval Tournament

Tasting History with Max Miller

Published 9 May 2023

May 6, 2023

Face-palm-worthy Coronations of the past

I’m sure almost everyone, except the tiny number of Republicans in England, hopes for a smooth and spectacular Coronation for His Majesty King Charles III, but there are plenty of examples of past Coronations that were anything but:

The Imperial State Crown, worn by the British monarch in the royal procession following the Coronation and at the opening of Parliament.

Wikimedia Commons.

Whereas so many traditions are 19th-century inventions, as any student of history knows, the coronation of Britain’s monarch is a rare example of a truly ancient custom, dating to the 10th century in its structure and with origins stretching back further, to the Romans and even Hebrews. As Tom Holland said on yesterday’s The Rest is History, it is like going to a zoo and seeing a woolly mammoth.

It is a sacred moment when the sovereign becomes God’s anointed, an almost unique state ceremony in a secular world. The custom originates with the late Roman emperors, associated with Constantine the Great and certainly established by the mid-fifth century in Constantinople. In the West, and following the fall of that half of the empire, barbarian leaders were eager to imitate imperial styles (a bit like today). Germanic and Celtic tribes had ceremonies for new leaders in which particular swords were displayed, a feature of later rites, but as they developed the practice of kingship, so their rituals began to imitate the Roman form.

[…]

Athelstan, the first king of England, had been crowned in 925 at Kingston, a spot where seven kings of England had been enthroned. Perhaps the most notorious was Edwig, a 16-year-old whose proto-rock star qualities were not appreciated at the time of his coronation in 955. Indeed he failed to turn up, and when Bishop Dunstan marched to the king’s nearby quarters to drag him along, he found the teenager in bed with a “strumpet” and the strumpet’s mother.

However, Edwig died four years later, and Dunstan was elevated to Canterbury, became a saint and, through chronicles recorded by churchmen, got his version of history.

This reign might seem impossibly distant and obscure, yet it was under Edwig’s brother Edgar that the current coronation format was established. Edgar was a powerful king, and the last of the Anglo-Saxon rulers to live a happily Viking-free existence. His coronation on 11 May 973 was an illustration of his strength, and also his aspirations. Held at Bath, most likely because of its association with Rome, it involved a bishop placing the crown on the king’s head, in the Carolingian style, and would become the template for the ceremony for his direct descendant Charles III.

But not all coronations would run so smoothly. After Edgar’s death his elder son Edward was killed in possibly nefarious circumstances, and his stepmother placed her son Ethelred on the throne. Ethelred’s reign was plagued by disaster, and it was later said in the chronicles — the medieval equivalent of “and then the whole bus clapped” Twitter tales — that Bishop Dunstan lambasted the boy-king for “the sin of your shameful mother and the sin of the men who shared in her wicked plot” and that it “shall not be blotted out except by the shedding of much blood of your miserable subjects”.

This would have been merely awkward, whereas many coronations ended in riot or bloodshed. The most notorious incident in English history occurred on Christmas Day 1066: Duke William got off to a bad start PR-wise when his nervous Norman guards mistook cheers for booing and began attacking the crowd, before setting fire to buildings.

[…]

Perhaps the most scandalous coronation took place at the newly completed Westminster Abbey in February 1308. The young queen, Isabella, was the 12-year-old daughter of France’s King Philippe Le Bel, and had inherited her father’s good looks, with thick blonde hair and large blue, unblinking eyes. Her husband, Edward II, was a somewhat boneheaded man of 24 years whose idea of entertainment was watching court fools fall off tables.

It was a fairy tale coronation for the young girl, apart from a plaster wall collapsing, bringing down the high altar and killing a member of the audience, and the fact that her husband was gay and spent the afternoon fondling his lover Piers Gaveston, while ignoring her. Isabella’s two uncles, who had made the trip from France, were furious at the behaviour of their new English in-law, though perhaps not surprised.

[…]

One of the most disastrous coronations occurred during the Hundred Years’ War. Inspired by Joan of Arc, in 1429 the French had beaten the English at the Battle of Patay, after which their leader Charles VII entered Reims and was crowned at the spot where the kings of France had been enthroned for almost a thousand years. In response, on 16 December 1431 the English had their candidate, the 10-year-old Henry VI, crowned King of France at Notre-Dame in Paris, where one road was turned into a river of wine filled with mermaids, and Christmas plays were performed on an outdoor stage.

Unfortunately, the coronation was a complete mess. The entire service was in English, the weather was freezing, the event rushed, too packed, filled with pickpockets, and worst of all the English made such bad food that even the sick and destitute at the Hôtel-Dieu complained they had never tasted anything so vile.

May 2, 2023

QotD: The musical importance of the city of Córdoba

Which city is our best role model in creating a healthy and creative musical culture?

Is it New York or London? Paris or Tokyo? Los Angeles or Shanghai? Nashville or Vienna? Berlin or Rio de Janeiro?

That depends on what you’re looking for. Do you value innovation or tradition? Do you want insider acclaim or crossover success? Is your aim to maximize creativity or promote diversity? Are you seeking timeless artistry or quick money attracting a large audience?

Ah, I want all of these things. So I only have one choice — but I’m sure my city isn’t even on your list.

My ideal music city is Córdoba, Spain.

But I’m not talking about today. I’m referring to Córdoba around the year 1000 AD.

I will make a case that medieval Córdoba had more influence on global music than any other city in history. That’s probably not something you expected. But even if you disagree — and I already can hear some New Yorkers grumbling in the background — I think you will discover that the “Córdoba miracle,” as I call it, is an amazing role model for us.

It’s a case study in how communities foster the arts — and in a way that benefits everybody, not just the artists.

[…] a thousand years before New Orleans spurred the rise of jazz, and instigated the Africanization of American music, a similar thing happened in Córdoba, Spain. You could even call that city the prototype for all the decisive musical trends of our modern times.

“This was the chapter in Europe’s culture when Jews, Christians, and Muslims lived side by side,” asserts Yale professor María Rosa Menocal, “and, despite their intractable differences and enduring hostilities, nourished a complex culture of tolerance.”

There’s even a word for this kind of cultural blossoming: Convivencia. It translates literally as “live together.” You don’t hear this term very often, but you should — because we need a dose of it now more than ever. And when scholars discuss and debate this notion of Convivencia, they focus their attention primarily on one city: Córdoba.

It represents the historical and cultural epicenter of living together as a norm and ideal.

Even today, we can see the mixture of cultures in Spain’s distinctive architecture, food, and music. These are both part of Europe, but also separate from it. It is our single best example of how the West can enter into fruitful cultural dialogue with the outsider — to the benefit of both.

Ted Gioia, “The Most Important City in the History of Music Isn’t What You Think It Is”, The Honest Broker, 2023-01-26.

April 21, 2023

Localism versus centralism

Theophilus Chilton offers some support to localism as an antidote to the centralization of powers we’ve seen in every western nation since the early “nation state” era at the end of the Middle Ages:

Cropped image of a Hans Holbein the Younger portrait of King Henry VIII at Petworth House.

Photo by Hans Bernhard via Wikimedia Commons.

The history of the West has, among other things, included a long, drawn-out conflict between two functional organizing principles – localism and centralization. The former involves the devolution of power to more narrowly defined provincial, parochial centers, while the latter involves the concentration of power into the hands of an absolutist system. The tendency toward centralization began as far back as the high Middle Ages, during which the English and French monarchies began the reduction of aristocratic privileges and local divisions and the folding of this power into the rising bureaucratic state with a permanently established capital city and rapacious desire for provincial monies and personnel. The trend towards the development of absolute monarchy continued through the Baroque period, and the replacement of divinely-sanctioned kingship with popular forms of government (republicanism, democracy, communism) did not abate the process, but merely redirected power into different hands. The ultimate form of centralization, not yet come to pass, would be the sort of borderless one-world government desired by today’s globalists, whether they be neoconservatives or neoliberals, which would involve the ultimate consolidation of all power everywhere into one or a few hands in some place like Geneva or New York City.

[…]

The historical transition from localism to centralization in medieval Europe was seen in the decline of aristocratic rights and the institution of peer kingship, and their replacement with consolidated administrative control over a much larger and generally contiguous geographic area. This control was manifested in the person of the absolute monarch, and was exercised through an impersonal, disinterested bureaucratic apparatus which came to demand a greater and greater share of the national wealth to cover its expenses. This process, I believe, can ultimately be traced back to the strengthening of English and French royal power beginning in the 13th century, especially under Philip IV of France. Its fruition came (while monarchy still exercised effectual power in Europe) in the 17th-18th centuries before being undermined by Enlightenment and democratic dogmas which merely transferred the centralizing power to demagogues claiming to speak “for the people.”

Under the old aristocratic system, executive power formed a distributed system and rested on local nobility ruling over a local population whom they knew well and with whom they were on generally good terms. Despite the jaundiced modern view that feudalism was always “tyrannical” and “oppressive,” the fact is that most aristocrats in that era were genuinely devoted to the welfare of the commoners in their land, and it was the responsibility of the nobility to dispense justice and to right wrongs. The picture presented in Kipling’s poem “Norman and Saxon” most likely serves as a fair reflection of the relationship between lord and commoner. Kingship certainly existed, but the king was viewed as a “first among equals”, one who was the prime lord over his vassals, but who could also himself be a vassal of other kings of equal power and authority (as many of the earlier Plantagenet kings were to the Kings of France, by virtue of their holding fiefs as Dukes of Aquitaine).

False impressions about the role of the aristocracy generally correlate with false impressions about serfdom, the dominant labor relationship of the time. Contrary to popular notions, serfdom was generally not some cruel form of slavery that destroyed human dignity. Indeed, many serfs had liberties approaching those of freemen, could transfer allegiances between nobles, enjoyed dozens of feast days (which were effectively vacation days to be devoted to family and community), and could even take themselves off to one of the many free cities which existed and be reasonably sure of not being compelled to return to their former master unless their case was especially egregious.

However, under centralization, the nobility was generally reduced to being ornaments of the royal court, their judicial and administrative functions removed and replaced by a bureaucracy personally loyal to the king. This, in effect, served to remove opportunities for serfs and other commoners to “get away” from the rule of a bad king. Whereas before, a serf could at least hope for the opportunity to flee a bad ruler and seek shelter with a good one, under the uniform rule of the absolute monarch, this was no longer an option unless the commoner wished to flee his entire nation and culture completely. Likewise, the ever-increasing regulation of his daily life by the bureaucracy followed him everywhere he went. By the end of the period, the centralization of power and the rise of crony capitalism led to the destruction of serfdom and the rise of wage capitalism, acting to reduce serfs and freemen alike to the status of cogs in profit-generating machines. The rise of absolute monarchy, part and parcel with the appearance of bureaucracy and the professionalization of military power, led directly to the rise of the modern managerial state.

April 17, 2023

QotD: Tenant-farming (aka “sharecropping”) in pre-modern societies

Tenant labor of one form or another may be the single most common form of labor we see on big estates and it could fill both the fixed labor component and the flexible one. Typically tenant labor (also sometimes called sharecropping) meant dividing up some portion of the estate into subsistence-style small farms (although with the labor perhaps more evenly distributed); while the largest share of the crop would go to the tenant or sharecropper, some of it was extracted by the landlord as rent. How much went each way could vary a lot, depending on which party was providing seed, labor, animals and so on, but 50/50 splits are not uncommon. As you might imagine, that extreme split (compared to the often standard c. 10-20% extraction frequent in taxation or 1/11 or 1/17ths that appear frequently in medieval documents for serfs) compels the tenants to more completely utilize household labor (which is to say “farm more land”). At the same time, setting up a bunch of subsistence tenant farms like this creates a rural small-farmer labor pool for the periods of maximum demand, so any spare labor can be soaked up by the main estate (or by other tenant farmers on the same estate). That is, the high rents force the tenants to have to do more labor – more labor that, conveniently, their landlord, charging them the high rents, is prepared to profit from by offering them the opportunity to also work on the estate proper.

In many cases, small freeholders might also work as tenants on a nearby large estate. There are many good reasons for a small free-holding peasant to want this sort of arrangement […]. So a given area of countryside might have free-holding subsistence farmers who do flexible sharecropping labor on the big estate from time to time alongside full-time tenants who worked land entirely or almost entirely owned by the large landholder. Now, as you might imagine, the situation of tenants – open to eviction and owing their landlords considerable rent – makes them very vulnerable to the landlord compared to neighboring freeholders.

That said, tenants in this sense were generally considered free persons who had the right to leave (even if, as a matter of survival, it was rarely an option, leaving them under the control of their landlords), in contrast to non-free laborers, an umbrella-category covering a wide range of individuals and statuses. I should be clear on one point: nearly every pre-modern complex agrarian society had some form of non-free labor, though the specifics of those systems varied significantly from place to place. Slavery of some form tends to be the rule, rather than the exception for these pre-modern agrarian societies. Two of the largest categories of note here are chattel slavery and debt bondage (also called “debt-peonage”), which in some cases could also shade into each other, but were often considered separate (many ancient societies abolished debt bondage but not chattel slavery for instance and debt-bondsmen often couldn’t be freely sold, unlike chattel slaves). Chattel slaves could be bought, sold and freely traded by their slave masters. In many societies these people were enslaved through warfare with captured soldiers and civilians alike reduced to bondage; the heritability of that status varies quite a lot from one society to the next, as does the likelihood of manumission (that is, becoming free).

Under debt bondage, people who fell into debt might sell (or be forced to sell) dependent family members (selling children is fairly common) or their own person to repay the debt; that bonded status might be permanent, or might hold only till the debt is repaid. In the latter case, as remains true in a depressing amount of the world, it was often trivially easy for powerful landlord/slave-holders to ensure that the debt was never paid and in some systems this debt-peon status was heritable. Needless to say, the situation of both of these groups could be and often was quite terrible. The abolition of debt-bondage in Athens and Rome in the sixth and fourth centuries B.C. respectively is generally taken as a strong marker of the rising importance and political influence of the class of rural, poorer citizens and you can readily see why this is a reform they would press for.

Bret Devereaux, “Collections: Bread, How Did They Make It? Part II: Big Farms”, A Collection of Unmitigated Pedantry, 2020-07-31.

April 10, 2023

QotD: Interaction between “big” farmers and subsistence farmers in pre-modern societies

What our little farmers generally have […] is labor – they have excess household labor because the household is generally “too large” for its farm. Now keep in mind, they’re not looking to maximize the usage of that labor – farming work is hard and one wants to do as little of it as possible. But a family that is too large for the land (a frequent occurrence) is going to be looking at ways to either get more out of their farmland or out of their labor, or both, especially because they otherwise exist on a razor’s edge of subsistence.

And then just over the way, you have the large manor estate, or the Roman villa, or the lands owned by a monastery (because yes, large landholders were sometimes organizations; in medieval Europe, monasteries filled this function in some places) or even just a very rich, successful peasant household. Something of that sort. They have the capital (plow-teams, manure, storage, processing) to more intensively farm the little land our small farmers have, but also, where the small farmer has more labor than land, the large landholder has more land than labor.

The other basic reality that is going to shape our large farmers is their different goals. By and large our small farmers were subsistence farmers – they were trying to farm enough to survive. Subsistence and a little bit more. But most large landholders are looking to use the surplus from their large holdings to support some other activity – typically the lifestyle of wealthy elites, which in turn requires supporting many non-farmers as domestic servants, retainers (including military retainers), merchants and craftsmen (who provide the status-signalling luxuries). They may even need the surplus to support political activities (warfare, electioneering, royal patronage, and so on). Consequently, our large landholders want a lot of surplus, which can be readily converted into other things.

The space for a transactional relationship is pretty obvious, though as we will see, the power imbalances here are extreme, so this relationship tends to be quite exploitative in most cases. Let’s start with the labor component. But the fact that our large landholders are looking mainly to produce a large surplus (they are still not, as a rule, profit maximizing, by the by, because often social status and political ends are more important than raw economic profit for maintaining their position in society) means that instead of having a farm to support a family unit, they are seeking labor to support the farm, trying to tailor their labor to the minimum requirements of their holdings.

[…]

The tricky thing for the large landholder is that labor needs throughout the year are not constant. The window for the planting season is generally very narrow and fairly labor intensive: a lot needs to get done in a fairly short time. But harvest is even narrower and more labor intensive. In between those, there is still a fair lot of work to do, but it is not so urgent nor does it require so much labor.

You can readily imagine then the ideal labor arrangement would be to have a permanent labor supply that meets only the low-ebb labor demands of the off-seasons and then supplement that labor supply during the peak seasons (harvest and to a lesser extent planting) with just temporary labor for those seasons. Roman latifundia may have actually come close to realizing this theory; enslaved workers (put into bondage as part of Rome’s many wars of conquest) composed the villa’s primary year-round work force, but the owner (or more likely the villa’s overseer, the vilicus, who might himself be an enslaved person) could contract in sharecroppers or wage labor to cover the needs of the peak labor periods. Those temporary laborers are going to come from the surrounding rural population (again, households with too much labor and too little land who need more work to survive). Some Roman estates may have actually leased out land to tenant farmers for the purpose of creating that “flexible” local labor supply on marginal parts of the estate’s own grounds. Consequently, the large estates of the very wealthy required the many impoverished subsistence farmers in order to function.

Bret Devereaux, “Collections: Bread, How Did They Make It? Part II: Big Farms”, A Collection of Unmitigated Pedantry, 2020-07-31.

March 23, 2023

History Summarized: Rome After Empire

Overly Sarcastic Productions

Published 11 Nov 2022

“It’s gonna take more than killing me to kill me” – Rome, constantly.

Rome “Fell” in 476 … but we still have Rome. How’d that happen, and what does the Pope have to do with it?
