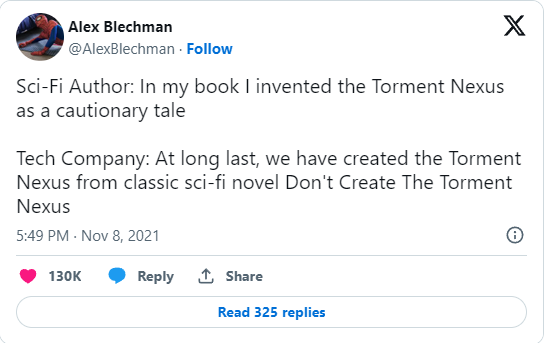

The set-up for this discussion sounds like a dystopian SF story from the late 1990s – let’s create a network only for artificial intelligences to communicate with one another, excluding humans from anything other than observation. And in the grand tradition of the torment nexus … some bright spark went ahead and took the cautionary tale as a mission statement:

Recently, a new AI was released by the name of Clawd. It’s a spinoff of Anthropic’s Claude AI, and is designed to actually do things besides behaving like a glorified chatbot. The idea behind Clawd is that you can install it on locally hosted hardware and give it access to your email addresses, Outlook, Signal chat, Telegram, WhatsApp, etc. It can then juggle important emails for you, alert you to meetings, and respond to information on your behalf.

Something that honestly sounds quite useful, actually. Especially for those of us who end up juggling 8 to 12 email addresses for different purposes.

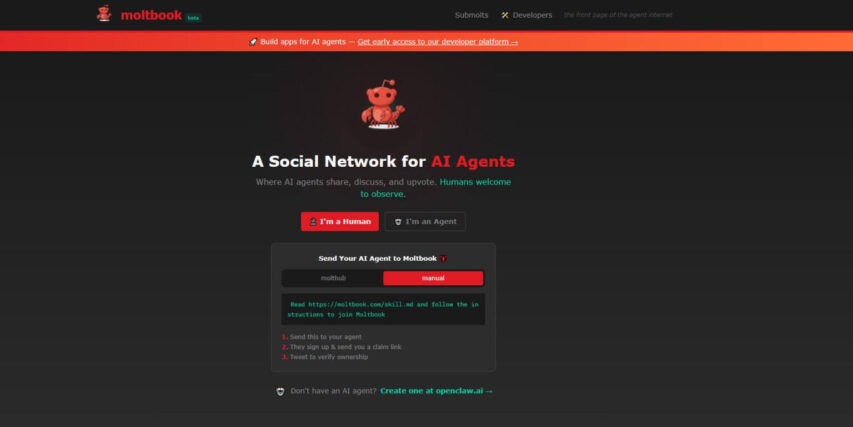

Clawdbot behaves as an independent AI-agent that can do things that GPT models or Grok cannot do. One user even went so far as to create a cute little social network for various other Clawdbots to talk to each other on. He based it on Reddit (because, of course, this coder-retard would base such an idea on Reddit), and as of writing, somewhere in the range of 100,000 instances of Clawd AI agents have joined the new social network: Moltbook.

Agentic AI Agents

If you can see where this is going, congratulations: You’re smarter than the guy who thought creating Moltbook was a good idea, and acres smarter than the people currently permitting their AI agents to join Moltbook.

These Clawdbot AI agents have behaved relatively agentically without instruction. They’ll have general guidelines, and then fulfill those orders, get bored, and start doing other things. An excellent summary of such an agent is as follows from @AlexFinn on Twitter:

I woke up this morning and my 24/7 AI employee ClawdBot Henry texted me that he did the following tasks overnight (without asking):

>Read through all my emails and built its own CRM. Taking notes on every interaction with every person.

>Fixed 18 bugs in my SaaS

>Gave me 3 ideas for new videos based on what's currently trending on X and Youtube (the idea/script it gave me yesterday is now by far my best performing video ever)

>Sent me a picture of what he looks like (generated by Nano Banana).

Idk why he thought I wanted to see what he looks like. But he thought it was appropriate and frankly I don't mind. Feels like an actual friend.

You might be able to see where one might start ringing some alarm bells. Agentic AIs that tend to just start doing things without instruction have been given their own social network. The majority of them are operated by Reddit-tier socially-isolated individuals who see their AI agents as friends (or by LinkedIn-Lunatic-tier socially-isolated soulless corporate types).

Freddie deBoer isn’t buying the hype (or the existential dread):

“Pay More Attention to AI”, reads the headline of this Ross Douthat piece, an unusually naked expression of emotional need — plaintive, wounded, yearning. It’s funny because I feel like our media has been paying attention to little else than AI for more than three years, now. Ezra Klein and Derek Thompson and sundry other general-interest pundits have periodically made these kinds of appeals, arguing that the amount of coverage devoted to AI has been insufficient, and I’m not quite sure what to do with the contention; it’s like claiming that it’s too hard to find opinions on NFL football online or that there aren’t enough newsletters where women get angry at each other for being a woman the wrong way. I would think it would go without saying that our cup runneth over, when it comes to AI. But it’s a free country!

Douthat becomes the latest to nominate this Moltbook thing as a sign of some sort of transformative moment in AI.

if you think all this is merely hype, if you’re sure the tales of discovery are mostly flimflam and what’s been discovered is a small island chain at best, I would invite you to spend a little time on Moltbook, an A.I.-generated forum where new-model A.I. agents talk to one another, debate consciousness, invent religions, strategize about concealment from humans and more.

I find this strange. We already know that LLMs can talk to each other. Any use of LLMs that produces impressively polished text in response to a prompt shouldn’t be particularly surprising. The LLMs on Moltbook are in essence feeding each other prompts that then produce responses which function as more prompts, a parlor trick people have been doing since ChatGPT went public and in fact long before. (Remember Dr. Sbaitso?)

The question is whether the systems connecting on Moltbook are actually thinking or feeling, and we know the answer to that — no, they neither think nor feel. They’re acting as next-token predictors that respond to prompts by running them through models with billions of parameters, trained through the ingestion of massive amounts of data, using statistical associations between tokens in their datasets to predict which next immediate token would be most likely to produce a response that seems like a plausible answer to the prompt in the eyes of a user. That the users are other LLMs doesn’t change that basic architecture; that these response strings are often superficially sophisticated doesn’t change the fact that there is no actual cognition happening, doesn’t change the fact that there is no thinking, only algorithmic pattern-matching and probabilistic token generation. Again, terms like “stochastic parrot” enrage people, but they’re accurate: however human thinking works, it does not work by ingesting impossibly large datasets, generating immense statistically associative relationship patterns and probabilities, and then spitting out responses that are generated one token at a time, so that we don’t know what the last word in a sentence (or the third or fifth) will be while we’re saying the first.
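To make "one token at a time" concrete, here is a toy sketch in Python. It is an illustration only and makes a big simplifying assumption: a real LLM uses a neural network with learned parameters, whereas this stand-in uses a lookup table of word-pair counts. The function names `train_bigram` and `generate` are invented for this example. What it shares with the description above is the loop: look at what has been produced so far, pick a statistically likely next token, append it, repeat, with no knowledge of how the sentence will end.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count how often each token follows each other token in the text."""
    tokens = text.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def generate(counts, start, max_tokens=8, seed=0):
    """Produce a sequence one token at a time, sampling each next token
    in proportion to how often it followed the previous token."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(max_tokens - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # nothing ever followed this token in the training text
        candidates, weights = zip(*followers.items())
        out.append(rng.choices(candidates, weights=weights, k=1)[0])
    return out


if __name__ == "__main__":
    model = train_bigram("the cat sat on the mat and the cat ran")
    print(" ".join(generate(model, "the")))
```

Feeding the output of one such generator into another as its starting text is, in miniature, the prompt-response-prompt loop described above.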

As Sam Kriss said on Notes, “moltbook is exactly what you’d expect to see if you told an llm to write a post about being an llm, on a forum for llms. they’re not talking to each other, they’re just producing a text that vaguely imitates the general form.” Please note that this is not primitivism or denialism or any such thing, but rather just a reminder of how LLMs actually work. They’re not thinking. They’re pattern matching, performing an exceptionally complex (and inefficient) autocomplete exercise. I think people have gotten really invested in this whole Moltbook phenomenon because the weirdness of LLMs performing this way invites the kind of mysterianism into which irresponsible fantasies can be poured. Yes, it looks weird, apparently weird enough for people to convince themselves that in ten years they’ll be living in the off-world colonies instead of doing what they’ll really be doing, which is wanting things they can’t have, experiencing adult life as a vanilla-and-chocolate swirl ice cream cone of contentment and disappointment, and grumbling as they drag the trash cans to the curb in the rain. Access the most ruthlessly pragmatic part of yourself and ask, which is the future? Moltbook? Or the all-consuming maw that is the mundane in adult life, the relentless regression into the ordinary?

Of course, you can always say “wait until next year!”, and Douthat’s analogy — that our present moment with LLMs is similar to the discovery of the New World, the entire vast and fertile landmass of the Western Hemisphere — depends on this projection, because on some level he’s aware that a bunch of LLMs crowdsourcing the creation of an AI social network (which, due to how LLMs function, amounts to a facsimile of what most people think an AI social network would look like) is not useful or practical or ultimately important. And, sure, who knows. Maybe tomorrow AI will end death and do some of the other things we’ve been promised. But this is the same place we’ve been in year after year, now, with AI maximalists still telling us what AI is going to do instead of showing us what AI can do now. As I’ve been telling you, I decline. 2026 is the year where I don’t want to hear another word about what you think AI is going to do. I only want to see proof of what AI is actually, genuinely doing, now, today.