I’ve read that it’s smells that humans remember the longest, or are the most likely to jog memories. After positing that, the pseudoscientists often talk about Grandma’s cookies. Let me tell you about smells.

It smells like exotic bread is baking near the dust collector when you put pine through the drum sander. You know the fine dust is giving you nose cancer and lung trouble so you’re almost immune to its charms. Almost. There was this smell once, when I had to renovate an apartment a guy died in. He was in there a good long time, too. It’s the smell of the mass grave. That was fun. But nothing can compare to the smell of the abrasive cutoff saw going through steel. It makes brimstone smell like French pastry.

You see, to cut metal like that you don’t often use a saw with teeth. It’s just an abrasive disc, and you send a shower of sparks and an acrid, burning blast of stink up your nose. It’s like snorting sand from the outdoor ashtray next to the door at the place they hold Alcoholics Anonymous meetings. I’ll never forget it.

“Strange Adventures In The Fall And Rise Of Sippican Cottage”, Sippican Cottage, 2013-09-04

November 23, 2013

QotD: The evocative power of smell

November 6, 2013

Your website needs more Infographics!

October 12, 2013

Not news: people under-report calorie intake, invalidating 40 years of federal research

Any study that depends on self-reporting, especially self-reporting on things like how much food people eat, can’t be assumed to be accurate:

Four decades of nutrition research funded by the Centers for Disease Control and Prevention (CDC) may be invalid because the method used to collect the data was seriously flawed, according to a new study by the Arnold School of Public Health at the University of South Carolina.

The study, led by Arnold School exercise scientist and epidemiologist Edward Archer, has demonstrated significant limitations in the measurement protocols used in the National Health and Nutrition Examination Survey (NHANES). The findings, published in PLOS ONE (The Public Library of Science), reveal that a majority of the nutrition data collected by the NHANES are not “physiologically credible,” Archer said.

[…]

The study examined data from 28,993 men and 34,369 women, 20 to 74 years old, from NHANES I (1971 — 1974) through NHANES (2009 — 2010), and looked at the caloric intake of the participants and their energy expenditure, predicted by height, weight, age and sex. The results show that — based on the self-reported recall of food and beverages — the vast majority of the NHANES data “are physiologically implausible, and therefore invalid,” Archer said.

In other words, the “calories in” reported by participants and the “calories out,” don’t add up and it would be impossible to survive on most of the reported energy intakes. This misreporting of energy intake varied among participants, and was greatest in obese men and women who underreported their intake by an average 25 percent and 41 percent (i.e., 716 and 856 Calories per-day respectively).
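To make the “calories in” versus “calories out” comparison concrete, here is a minimal sketch of a plausibility check. It is not the study’s actual protocol: the Mifflin-St Jeor equation is swapped in as an assumed stand-in for “energy expenditure predicted by height, weight, age and sex”, and the `min_ratio` cutoff of 1.35 is an illustrative assumption, as are the function names.

```python
def estimated_bmr(weight_kg, height_cm, age, sex):
    """Basal metabolic rate in kcal/day via the Mifflin-St Jeor equation.

    Assumption: used here only as a stand-in predictor of energy needs.
    """
    base = 10 * weight_kg + 6.25 * height_cm - 5 * age
    return base + 5 if sex == "male" else base - 161


def is_plausible(reported_kcal, bmr, min_ratio=1.35):
    """Flag a self-reported daily intake as plausible or not.

    Assumption: min_ratio is an illustrative cutoff; a reported intake
    well below what the body needs at rest cannot be sustained.
    """
    return reported_kcal / bmr >= min_ratio


# Hypothetical participant: 100 kg, 178 cm, 45-year-old man.
bmr = estimated_bmr(100, 178, 45, "male")
print(bmr)                      # 1892.5
print(is_plausible(2000, bmr))  # False: barely above resting needs
print(is_plausible(2800, bmr))  # True
```

The point of the sketch is only that the check needs nothing beyond the four measurements the excerpt lists, which is why a large share of implausible reports is hard to explain away.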

September 18, 2013

Elizabeth Loftus on false memories

The more we learn about the process of memory formation and recall, the more fallible and plastic our memories turn out to be. Elizabeth Loftus talks to Alison George about the problem of false memories:

AG: How does this happen? What exactly is going on when we retrieve a memory?

EL: When we remember something, we’re taking bits and pieces of experience — sometimes from different times and places — and bringing it all together to construct what might feel like a recollection but is actually a construction. The process of calling it into conscious awareness can change it, and now you’re storing something that’s different. We all do this, for example, by inadvertently adopting a story we’ve heard — like Romney did.

AG: How did you end up studying false memories?

EL: Early in my career, I had done some very theoretical studies of memory, and after that I wanted to [do] work that had more obvious practical uses. The memory of witnesses to crimes and accidents was a natural place to go. In particular I looked at what happens when people are questioned about their experiences. I would ultimately see those questions as a means by which the memories got contaminated.

AG: You’re known for debunking the idea of repressed memories. Why focus on them?

EL: In the 1990s we began to see these recovered-memory cases. In the first big one, a man called George Franklin was on trial. His daughter claimed she had witnessed her father kill her best friend when she was 8 years old — but had only remembered this 20 years later. And that she had been raped by him and repressed that memory too. Franklin was convicted of the murder, and that started this repressed-memory ball rolling through the legal system. We began to see hundreds of cases where people were accusing others based on claims of repressed memory. That’s what first got me interested.

AG: How did you study the process of creating false memories?

EL: We needed a different paradigm for studying these types of recollections. I developed a method for creating “rich false memories” by using strong suggestion. The first such memory was about getting lost in a shopping mall as a child.

AG: How susceptible are people to having these types of memories implanted?

EL: Depending on the study, you might get as many as 50 percent of people falling for the suggestion and developing a complete or partial false memory.

As I’ve mentioned before, the more we learn about memory, the less comfortable I am with the belief that eyewitness testimony in criminal cases is as dependable as our legal system assumes. There are definitely large numbers of people in prison based on eyewitness accounts … some of which are almost certainly false memories (but believed by the witness to be accurate).

AG: Is there any way to distinguish a false memory from a real one?

EL: Without independent corroboration, little can be done to tell a false memory from a true one.

AG: Could brain imaging one day be used to do this?

EL: I collaborated on a brain imaging study in 2010, and the overwhelming conclusion we reached is that the neural patterns were very similar for true and false memories. We are a long way away from being able to look at somebody’s brain activity and reliably classify an authentic memory versus one that arose through some other process.

AG: Do you think it’s important for people to realize how malleable their memory is?

EL: My work has made me tolerant of memory mistakes by family and friends. You don’t have to call them lies. I think we could be generous and say maybe this is a false memory.

September 12, 2013

This is rather sinister

At Marginal Revolution, Alex Tabarrok talks about a statistical study which concluded that being left-handed had a serious impact on your lifespan:

In 1991 Halpern and Coren published a famous study in the New England Journal of Medicine which appears to show that left handed people die at much younger ages than right-handed people. Halpern and Coren had obtained records on 987 deaths in Southern California — we can stipulate that this was a random sample of deaths in that time period — and had then asked family members whether the deceased was right or left-handed. What they found was stunning, left handers in their sample had died at an average age of 66 compared to 75 for right handers. If true, left handedness would be on the same order of deadliness as a lifetime of smoking. Halpern and Coren argued that this was due mostly to unnatural deaths such as industrial and driving accidents caused by left-handers living in a right-handed world. The study was widely reported at the time and continues to be regularly cited in popular accounts of left handedness (e.g. Buzzfeed, Cracked).

What is less well known is that the conclusions of the Halpern-Coren study are almost certainly wrong, left-handedness is not a major cause of death. Rather than dramatically lower life expectancy, a more plausible explanation of the HC findings is a subtle and interesting statistical artifact. The problem was pointed out as early as the letters to the editor in the next issue of the NEJM (see Strang letter) and was also recently pointed out in an article by Hannah Barnes in the BBC News (kudos to the BBC!) but is much less well known.

The statistical issue is that at a given moment in time a random sample of deaths is not necessarily a random sample of people. I will explain.
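The excerpt stops before the explanation, so here is a rough sketch of how a sample of deaths can mislead. It rests on an assumption that is not stated in the passage above: that older generations were less likely to be recorded as left-handed than younger ones. Every number and function name below is hypothetical. Handedness has no effect on mortality in this toy model, yet the left-handers in the death sample still die younger on average.

```python
def left_handed_share(birth_year):
    """Hypothetical share of a birth cohort recorded as left-handed.

    Assumption: 3% for people born in 1900, rising linearly to 12% for
    people born in 1950 or later.
    """
    birth_year = max(birth_year, 1900)
    if birth_year >= 1950:
        return 0.12
    return 0.03 + 0.09 * (birth_year - 1900) / 50.0


def death_weight(age):
    """Hypothetical relative number of deaths at each age.

    Assumption: identical for left- and right-handers, so handedness
    itself changes nothing about when people die.
    """
    return 1.0 + (age / 10.0) ** 2


def mean_age_at_death(sample_year=1990, max_age=90):
    """Mean age at death by handedness among deaths in one sample year."""
    left_num = left_den = right_num = right_den = 0.0
    for age in range(max_age + 1):
        share = left_handed_share(sample_year - age)
        deaths = death_weight(age)  # equal cohort sizes assumed
        left_num += age * deaths * share
        left_den += deaths * share
        right_num += age * deaths * (1.0 - share)
        right_den += deaths * (1.0 - share)
    return left_num / left_den, right_num / right_den


left, right = mean_age_at_death()
print(f"left-handers: {left:.1f}, right-handers: {right:.1f}")
```

The gap comes entirely from who is in the sample: the oldest people dying in the sample year come from cohorts with few recorded left-handers, so left-handers are over-represented among the younger deaths.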

August 31, 2013

Enabling the “nudgers”

Coyote Blog links to a Daily Mail article on the woman who wants to run your life (and Obama wants to help her):

I am a bit late on this, but like most libertarians I was horrified by this article in the Mail Online about Obama Administration efforts to nudge us all into “good” behavior. This is the person, Maya Shankar, who wants to substitute her decision-making priorities for your own […]

If the notion — that a 20-something person who has apparently never held a job in the productive economy is telling you she knows better what is good for you — is not absurd on its face, here are a few other reasons to distrust this plan.

- Proponents first, second, and third argument for doing this kind of thing is that it is all based on “science”. But a lot of the so-called science is total crap. Medical literature is filled with false panics that are eventually retracted. And most social science findings are frankly garbage. If you have some behavior you want to nudge, and you give a university a nice grant, I can guarantee you that you can get a study supporting whatever behavior you want to foster or curtail. Just look at the number of public universities in corn-growing states that manage to find justifications for ethanol subsidies. Recycling is a great example, mentioned several times in the article. Research supports the sensibility of recycling aluminum and steel, but says that recycling glass and plastic and paper are either worthless or cost more in resources than they save. But nudgers never-the-less push for recycling of all this stuff. Nudging quickly starts looking more like religion than science.

- The 300 million people in this country have 300 million different sets of priorities and personal circumstances. It is the worst hubris to think that one can make one decision that is correct for everyone. Name any supposedly short-sighted behavior — say, not getting health insurance when one is young — and I can name numerous circumstances where this is a perfectly valid choice and risk to take.

July 17, 2013

Keep calm, and don’t panic about bee-pocalypse now

You’ve heard about the mysterious colony collapse disorder (CCD) that has been devastating bee colonies across the world, right? This is serious, as bees are a very important part of the pollination of many crops. As you’ll know from many media reports, this is a food disaster unfolding before us and we’re all going to starve! Or, looking at the facts, perhaps not:

In a rush to identify the culprit of the disorder, many journalists have made exaggerated claims about the impacts of CCD. Most have uncritically accepted that continued bee losses would be a disaster for America’s food supply. Others speculate about the coming of a second “silent spring.” Worse yet, many depict beekeepers as passive, unimaginative onlookers that stand idly by as their colonies vanish.

This sensational reporting has confused rather than informed discussions over CCD. Yes, honey bees are dying in above average numbers, and it is important to uncover what’s causing the losses, but it hardly spells disaster for bees or America’s food supply.

Consider the following facts about honey bees and CCD.

For starters, US honey bee colony numbers are stable, and they have been since before CCD hit the scene in 2006. In fact, colony numbers were higher in 2010 than any year since 1999. How can this be? Commercial beekeepers, far from being passive victims, have actively rebuilt their colonies in response to increased mortality from CCD. Although average winter mortality rates have increased from around 15% before 2006 to more than 30%, beekeepers have been able to adapt to these changes and maintain colony numbers.

[…]

“The state of the honey bee population—numbers, vitality, and economic output — are the products of not just the impact of disease but also the economic decisions made by beekeepers and farmers,” economists Randal Rucker and Walter Thurman write in a summary of their working paper on the impacts of CCD. Searching through a number of economic measures, the researchers came to a surprising conclusion: CCD has had almost no discernible economic impact.

But you don’t need to rely on their study to see that CCD has had little economic effect. Data on colonies and honey production are publicly available from the USDA. Like honey bee numbers, US honey production has shown no pattern of decline since CCD was first detected. In 2010, honey production was 14% greater than it was in 2006. (To be clear, US honey production and colony numbers are lower today than they were 30 years ago, but as Rucker and Thurman explain, this gradual decline happened prior to 2006 and cannot be attributed to CCD).

H/T to Tyler Cowen for the link.

July 11, 2013

Inane new “measurement” claims 1978 was the “best year ever”

An editorial in New Scientist (which I can’t quote from due to copyright concerns) claims that, according to a new “measurement” called the Genuine Progress Indicator (GPI), human progress peaked in 1978 and it’s all been downhill since then. Anyone who actually lived through 1978 might struggle to recall just what, if anything, was better about 1978 than the following years, but the NS editors do point out that the GDP measurement generally used to compare national economies doesn’t capture all the relevant details, while GPI includes what they refer to as social factors and economic costs, making it a better measuring tool for certain comparisons.

I can only assume that most of the economists who believe that 1978 was a peak year for the environment hadn’t been born at that time: pollution was a much more visible issue in North America and western Europe than at almost any time afterwards (and eastern Europe was far worse). Industry and government were taking steps to cut back some of the worst pollutants, but that process was really only just in its early stages: it took several years for the effects to start to show.

In the late 1970s, the world was a much dirtier, poorer, less egalitarian place than even a decade later: China and India were both much more authoritarian and had still not mastered the art of ensuring that there was enough food to feed everyone. Behind the Iron Curtain, Soviets and citizens of their client states in Europe were falling further and further behind the material well-being of westerners (and becoming much more aware of the deficit).

No matter how much emphasis you put on nebulous “social factors”, the fact that the world poverty rate (regardless of how you measure it) has been cut in half over the last twenty years, lifting literally billions of people out of near-starvation, makes an incredibly strong case that the world is doing better now than at any time since 1978. You can prattle on all you like about “rising inequality”, but for my money it’s a better world where the risk of people literally starving to death is that much closer to being eliminated. Give me an “unequal” world where even the poorest have enough food and clean water over an egalitarian world where billions starve, thanks very much.

July 8, 2013

The return of the fickle finger of fate (non-humour category)

In sp!ked, Brendan O’Neill discusses the unlikely comeback of “fate”:

Fate is making a comeback. The idea that a human being’s fortunes are shaped by forces beyond his control is returning, zombie-like, from the graveyard of bad historical ideas. The notion that a man’s character and destiny are determined for him rather than by him is back in fashion, after 500-odd years of having been criticised and ridiculed by humanist thinkers.

Of course, we’re far too sophisticated these days actually to use the f-word, fate. We don’t talk about a god called Fortuna, as the Romans did, believing that this blind, mysterious creature decided people’s fates with the spin of a wheel. Unlike long-gone Norse communities we don’t believe in goddesses called Norns, who would attend the birth of every child to determine his or her future. No, today we use scientific terms to argue that people’s fortunes are determined by higher powers than their little, insignificant selves.

We use and abuse neuroscience to claim certain people are ‘born this way’. We claim evolutionary psychology explains why people behave and think the way they do. We use phrases like ‘weather of mass destruction’, in place of ‘gods’, to push the idea that mankind is a little thing battered by awesome, destiny-determining forces. Fate has been brought back from the dead and she’s been dolled up in pseudoscientific rags.

[. . .]

It’s hard to overstate what a radical idea this was at the tailend of the Dark Ages. It’s this idea that gives rise to the concept of free will, to the concept of personality even. And it was an idea carried through to the Enlightenment and on to the humanist liberalism of the nineteenth and early twentieth centuries. In the words of the greatest liberal, John Stuart Mill, it is incumbent upon the individual to never ‘let the world, or his portion of it, choose his plan of life for him’.

But today, in our downbeat era that bears a bit of a passing resemblance to the Dark Ages, we’re turning the clock back on this idea. We’re rewinding the historic breakthroughs of the Renaissance and Enlightenment, and we’re breathing life back into the fantasy of fate. Ours is an era jampacked with deterministic theories, claims that human beings are like amoeba in a Petri dish being prodded and shaped by various forces. But the new determinism isn’t religious or supernatural, as it was in the pre-Enlightened era — it’s scientific determinism, or rather pseudo-scientific determinism.

June 23, 2013

Wine tasting scores are bullshit

In the Guardian, David Derbyshire takes the modern “science” of wine tasting to the woodshed:

… drawing on his background in statistics, Hodgson approached the organisers of the California State Fair wine competition, the oldest contest of its kind in North America, and proposed an experiment for their annual June tasting sessions.

Each panel of four judges would be presented with their usual “flight” of samples to sniff, sip and slurp. But some wines would be presented to the panel three times, poured from the same bottle each time. The results would be compiled and analysed to see whether wine testing really is scientific.

The first experiment took place in 2005. The last was in Sacramento earlier this month. Hodgson’s findings have stunned the wine industry. Over the years he has shown again and again that even trained, professional palates are terrible at judging wine.

“The results are disturbing,” says Hodgson from the Fieldbrook Winery in Humboldt County, described by its owner as a rural paradise. “Only about 10% of judges are consistent and those judges who were consistent one year were ordinary the next year.

“Chance has a great deal to do with the awards that wines win.”

These judges are not amateurs either. They read like a who’s who of the American wine industry from winemakers, sommeliers, critics and buyers to wine consultants and academics. In Hodgson’s tests, judges rated wines on a scale running from 50 to 100. In practice, most wines scored in the 70s, 80s and low 90s.

Results from the first four years of the experiment, published in the Journal of Wine Economics, showed a typical judge’s scores varied by plus or minus four points over the three blind tastings. A wine deemed to be a good 90 would be rated as an acceptable 86 by the same judge minutes later and then an excellent 94.

Today’s headline is a slightly stronger version of one I ran in May: Is wine tasting bullshit? with this rather amusing caption:

Although that “real” wine “review” illustrates the verbal bullshit side of wine reviewing, the statistical analysis in Robert Hodgson’s tests rather undermines the claims to any kind of actual analysis in most or all wine reviewing.

I’ve said for years that for most people there is a range of wine prices that will satisfy their tastes without emptying their wallets — in Ontario, the range for most people seems to be in the $14-$40 price spectrum. Pay less than that, and you risk buying wine that really isn’t very good (although there are some underpriced gems even there), and pay over $40 and you’re just paying extra for the “prestige” and most of us wouldn’t really be able to detect any flavour differences.

It’s interesting to see what kind of immediate environmental changes seem to be able to directly influence the scores given by reviewers:

More evidence that wine-tasting is influenced by context was provided by a 2008 study from Heriot-Watt University in Edinburgh. The team found that different music could boost tasters’ wine scores by 60%. Researchers discovered that a blast of Jimi Hendrix enhanced cabernet sauvignon while Kylie Minogue went well with chardonnay.

June 22, 2013

The Economist on the cooling chances of major climate control legislation

I was disappointed when The Economist switched sides to join the “consensus” on global warming and started arguing for massive government intervention in the economy to “save the planet”. Fifteen years after the last significant bout of warming, The Economist is starting to sound a bit more sensible (and skeptical):

…there’s no way around the fact that this reprieve for the planet is bad news for proponents of policies, such as carbon taxes and emissions treaties, meant to slow warming by moderating the release of greenhouse gases. The reality is that the already meagre prospects of these policies, in America at least, will be devastated if temperatures do fall outside the lower bound of the projections that environmentalists have used to create a panicked sense of emergency. Whether or not dramatic climate-policy interventions remain advisable, they will become harder, if not impossible, to sell to the public, which will feel, not unreasonably, that the scientific and media establishment has cried wolf.

Dramatic warming may exact a terrible price in terms of human welfare, especially in poorer countries. But cutting emissions enough to put a real dent in warming may also put a real dent in economic growth. This could also exact a terrible humanitarian price, especially in poorer countries. Given the so-far unfathomed complexity of global climate and the tenuousness of our grasp on the full set of relevant physical mechanisms, I have favoured waiting a decade or two in order to test and improve the empirical reliability of our climate models, while also allowing the economies of the less-developed parts of the world to grow unhindered, improving their position to adapt to whatever heavy weather may come their way. I have been told repeatedly that “we cannot afford to wait”. More distressingly, my brand of sceptical empiricism has been often met with a bludgeoning dogmatism about the authority of scientific consensus.

Of course, if the consensus climate models turn out to be falsified just a few years later, average temperature having remained at levels not even admitted to have been physically possible, the authority of consensus will have been exposed as rather weak. The authority of expert consensus obviously strengthens as the quality of expertise improves, which is why it’s quite sensible, as a matter of science-based policy-making, to wait for a callow science to improve before taking grand measures on the basis of its predictions.

As I wrote back in 2004, “I’ve never been all that convinced of the accuracy of the scientific evidence presented in favour of the Global Warming theory, especially as it seemed to play rather too clearly into the hands of the anti-growth, anti-capitalist, pro-world government folks. A world-wide ecological disaster, clearly caused by human action, would allow a lot of authoritarian changes which would radically reduce individual freedom and increase the degree of social control exercised by governments over the actions and movement of their citizenry.”

June 11, 2013

As if a pregnant woman doesn’t have enough things to worry about…

…there’s an entire industry devoted to the cause of warning pregnant women about possible, potential, unknown dangers all around them:

The only other real option is to take the position held by Joan Wolf, author of the excellent study about contemporary risk thinking, Is Breast Best? Taking on the Breastfeeding Experts and the New High Stakes of Motherhood. Wolf has explored how, in the US, pregnant women are frequently told: everything is potentially risky; you have control over fetal development, but we do not know how; actions that you think are innocuous are probably harmful, but we cannot tell you which ones; things you do or do not do might be more problematic at certain times in pregnancy, but we do not know when; what you do or do not do can produce disastrous or moderately negative effects, but we cannot predict either one.

Wolf’s assessment is that the only rational response is not a call for more information of this kind; rather, it is to recognise that there is far too much of it already. While science can tell us important things, what we need to come to terms with is the inevitability of risk, the fact that people do risky things all day long (in that there are outcomes of actions over which we do not have total control), but this is just life. It is not a problem, and we do not need to be ‘informed’ or ‘empowered’ about it.

The other sort of argument made by the critics of the RCOG report was that instead of ‘raising awareness’ of the theoretical risks of everyday chemicals, more advice and information should be given to pregnant women about ‘real harm’. Hence, instead of just focusing on making it clear to the RCOG what they should do with their report, the critics have engaged in a sort of ‘my risk is bigger than your risk’ competition. In the discussion so far, the risks we apparently really understand and should be even more informed about have included all the old chestnuts: coffee, alcohol, cigarettes and stress.

Indeed, an interesting ‘my risk is bigger than your risk’ theme is developing when it comes to ‘stress’. Here, the entirely legitimate point that it is not reasonable to worry people and cause anxiety for no reason has morphed into a claim about the apparently overwhelming evidence that ‘stress’ endangers the developing fetus. In reality, as the US sociologist Betsy Armstrong has explained, the ‘science’ supporting the idea that stress in pregnancy is a problem is far more contentious than such objections assume. The wider public discourse about this issue demands robust criticism not endorsement because of its scaremongering qualities. In any case, given that a pregnant woman can no more avoid ‘stress’ in her life than she can a pre-prepared ham sandwich, it is worth asking quite where this line of argument takes us.

May 2, 2013

A layman’s guide to evaluating statistical claims

We’re awash with statistics, 43.2% of which seem to be made up on the spot (did you see what I did there?). Betsey Stevenson & Justin Wolfers offer some guidance on how non-statisticians should approach the numbers we’re presented with in the media:

So how can non-experts and policy makers separate the useful research from the dross? Allow us to offer six rules.

1. Focus on how robust a finding is, meaning that different ways of looking at the evidence point to the same conclusion. Do the same patterns repeat in many data sets, in different countries, industries or eras? Are the findings fragile, changing as one makes small changes in how phenomena are measured, and do the results depend on whether particularly influential observations are included? Thanks to Moore’s Law of increasing computing power, it has never been easier or cheaper to assess, test and retest an interesting finding. If the author hasn’t made a convincing case, then don’t be convinced.

2. Data mavens often make a big deal of their results being statistically significant, which is a statement that it’s unlikely their findings simply reflect chance. Don’t confuse this with something actually mattering. With huge data sets, almost everything is statistically significant. On the flip side, tests of statistical significance sometimes tell us that the evidence is weak, rather than that an effect is nonexistent. Remember, results can be useful even if they don’t meet significance tests. Sometimes questions are so important that we need to glean whatever meaning we can from available data. The best bad evidence is still more informative than no evidence.

3. Be wary of scholars using high-powered statistical techniques as a bludgeon to silence critics who are not specialists. If the author can’t explain what they’re doing in terms you can understand, then you shouldn’t be convinced. You wouldn’t be convinced by an analysis just because it was written in ancient Latin, so why be impressed by an abundance of Greek letters? Sophisticated statistical methods can be helpful, but they can also hide more than they reveal.

4. Don’t fall into the trap of thinking about an empirical finding as “right” or “wrong.” At best, data provide an imperfect guide. Evidence should always shift your thinking on an issue; the question is how far.

5. Don’t mistake correlation for causation. For instance, even after revisions and corrections, Reinhart and Rogoff have demonstrated that economic growth is typically slower when government debt is higher. But does high debt cause slow growth, or is slow growth in gross domestic product the cause of higher debt-to-GDP ratios? Or are there other important determinants, such as populist spending by a government looking to get re-elected, which is more likely when growth is slow and typically drives debt up?

6. Always ask “so what?” Are the factors that drove the observed negative correlation between debt and GDP likely to exist today, in the U.S.? Does it even make sense to speak of “the” relationship between debt and economic growth, when there are surely many such relationships: Governments borrowing simply to fund their re-election are likely harming growth, while those investing in much-needed public works can provide the foundation for growth. The “so what” question is about moving beyond the internal validity of a finding to asking about its external usefulness.
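Rule 2 is easy to see with a little arithmetic. The sketch below assumes a two-sample z-test with equal group sizes and a known standard deviation (my choice of test, not something the authors specify), and the numbers are invented. The same trivially small difference between two groups goes from nowhere near significant to overwhelmingly significant purely because the sample gets bigger.

```python
import math


def z_and_p(diff, sd, n_per_group):
    """Two-sample z statistic and two-sided p-value for a difference in means.

    Assumptions: equal group sizes, known common standard deviation,
    normal approximation.
    """
    standard_error = sd * math.sqrt(2.0 / n_per_group)
    z = diff / standard_error
    # Two-sided tail probability of a standard normal beyond |z|.
    p = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p


# Hypothetical effect: a difference of one hundredth of a standard deviation.
for n in (100, 1_000_000):
    z, p = z_and_p(diff=0.01, sd=1.0, n_per_group=n)
    print(f"n per group = {n:>9,}: z = {z:.2f}, p = {p:.3g}")
```

With 100 people per group the difference is indistinguishable from chance; with a million per group it clears any conventional significance threshold, even though the effect itself is exactly as unimportant as before.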

Fraudster who sold fake bomb detectors to Iraq jailed for ten years

Under the circumstances, a ten year sentence is pretty lenient:

Fraudster James McCormick has been jailed for 10 years for selling fake bomb detectors.

McCormick, 57, of Langport, Somerset perpetrated a “callous confidence trick”, said the Old Bailey judge.

He is thought to have made £50m from sales of more than 7,000 of the fake devices to countries, including Iraq.

The fraud “promoted a false sense of security” and contributed to death and injury, the judge said. He also described the profit as “outrageous”.

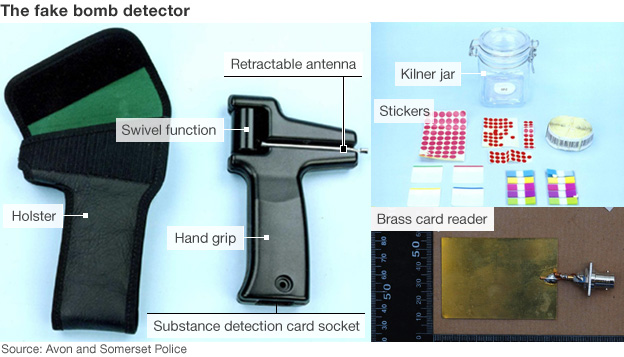

Police earlier said the ADE-651 devices, modelled on a novelty golf ball finder, are still in use at some checkpoints.

Sentencing McCormick, Judge Richard Hone said: “You are the driving force and sole director behind [the fraud].”

He added: “The device was useless, the profit outrageous, and your culpability as a fraudster has to be considered to be of the highest order.”

One invoice showed sales of £38m over three years to Iraq, the judge said.

The bogus devices were also sold in other countries, including Georgia, Romania, Niger, Thailand and Saudi Arabia.

April 23, 2013

Seller of fake bomb detectors found guilty of fraud

Back in 2010, I said “There should be a special hell for this scam artist” who mocked up bomb detector kits and sold them for thousands of dollars in Iraq and other areas with a real need for protection against IEDs. It’s taken more than three years, but he’s finally been found guilty:

A Somerset-based businessman has been convicted of three counts of fraud over the sale of bogus bomb detectors after his operation was exposed in a BBC Newsnight investigation in 2010.

This was a scam of global dimensions. James McCormick marketed his fake bomb detectors around the world, selling them in Georgia, Romania, Niger, Thailand, Saudi Arabia and beyond.

But his main market was Iraq, where lives depended on bomb detection and where the bogus devices were, and still are, used at virtually every checkpoint in the capital.

Between 2008 and 2009 alone, more than 1,000 Iraqis were killed in explosions in Baghdad.

How the device was meant to work:

- A small amount of the substance the user wished to detect — such as explosives — was put in a Kilner jar along with a sticker that was intended to absorb the “vapours” of the substance

- The sticker was then placed on a credit-card sized card, which was read by a card reader and inserted into the device

- The user would then hold the device, which had no working electronics, and the swivelling antenna was meant to indicate the location of the sought substance

In other words, a magical dowsing stick that depended on the user to “detect” whatever the device was supposedly seeking. This wasn’t a case of a device that didn’t do what it was designed to do: it was a deliberate fraud with just enough “technological” mumbo-jumbo to appear to be a solution to a real problem:

The court heard that McCormick began his business by buying a batch of novelty “golf ball detectors” from the USA for less than $20 each. In fact they were simply radio aerials, attached by a hinge to a handle. He put the labels of his company, ATSC, on them and sold them as bomb detectors for $5,000 each.

He then made a more advanced-looking version which he was to sell for up to $55,000. The ADE-651 came with cards which he claimed were “programmed” to detect everything from explosives to ivory and even $100 bills. Police say the only genuine part of the kit — and the most expensive — was the carrying case.

To their credit, the police moved to investigate the same day the BBC’s original story broke. Strategy Page explained why the scam had been so easy to sell. Later it was reported that British civil servants and military personnel had been implicated in the fraud.