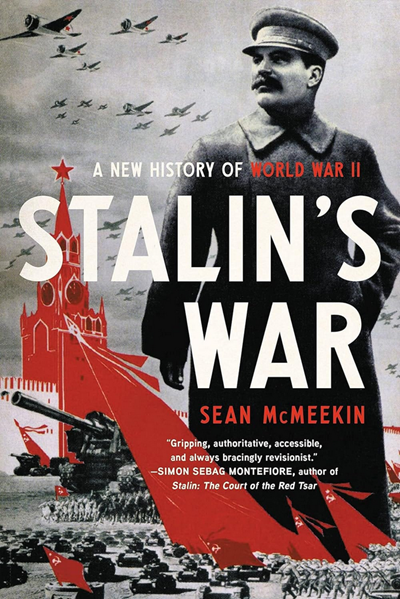

Big Serge: “One of the first things that stands out about your work is that you have found success writing about topics which are very familiar to people and have a large extant corpus of writing. World War One, the Russian Revolution, World War Two, and now a broad survey of Communism – these are all subjects with no shortage of literature, and yet you have consistently managed to write books that feel refreshing and new. In a sense, your books help “reset” how people understand these events, so for example Stalin’s War was very popular and was not perceived as just another World War Two book. Would you say that this is your explicit objective when you write, and more generally, how do you approach the challenge of writing about familiar subjects?”

Dr. McMeekin: “Yes, I think that is an important goal when I write. I have often been called a revisionist, and it is not usually meant as a compliment, but I don’t particularly mind the label. I have never understood the idea that a historian’s job is simply to reinforce or regurgitate, in slightly different form, our existing knowledge of major events. If there is nothing new to say, why write a book?

Of course, it is not easy to say something genuinely new about events such as the First World War, the Russian Revolution, or World War Two. The scholar in me would like to think that I have been able to do so owing to my discovery of new materials, especially in Russian and other archives less well-trodden by western historians until recently, and that is certainly part of it. But I think it is more important that I come to this material – and older material, too – with new questions, and often surprisingly obvious ones.

For example, in The Russian Origins of the First World War, I simply took up Fritz Fischer’s challenge, which for some reason had been forgotten after “Fischerites” (most of them less than careful readers of Fischer, apparently) took over the field. In the original 1961 edition of Griff nach der Weltmacht (Germany’s “Bid” or “Grab” for World Power, a title translated more blandly but descriptively into English as Germany’s Aims in the First World War), Fischer pointed out that he was able to subject German war aims to withering scrutiny because basically every German file (not destroyed in the wars) had been declassified and opened to historians owing to Germany’s abject defeat in 1945 – while pointing out that, if the secret French, British, and Russian files on 1914 were ever opened, a historian could do the same thing for one of the Entente Powers. I had already done a Fischer-esque history on German WWI strategy, especially Germany’s use of pan-Islam (The Berlin-Baghdad Express), inspired by a similar epigraph in an old edition of John Buchan’s wartime thriller Greenmantle – Buchan predicted that a historian would come along one day to tell the story “with ample documents”, joking that when this happened he would retire and “fall to reading Miss Austen in a hermitage”. So it was a logical progression to ask, if Fischer can do this for Germany’s war aims, why not Russia?

Readers may have missed the obvious Fischer inspiration for Russian Origins owing to the editors at Harvard/Belknap, who thought my original title – the obviously Fischer-inspired Russia’s Aims in the First World War – was boring and unsexy. Probably this helped sell books, but it did lend my critics an easy line that I was “blaming Russia for the First World War” rather than simply applying a Fischer-esque lens to Russia’s war aims. Some also called me Russophobic, which is understandable, though I think it misses the point. To my mind, subjecting Russian strategic thinking, wartime diplomacy and maneuvering to the same scrutiny as those routinely applied to Germany and the other Powers is taking the country seriously on its own terms, rather than ignoring Russia, as nearly every historian of, say, Gallipoli has done.

A book on Russian war aims was also long overdue. Other than an underwhelming Chai Lieven study from 1983 and a few articles, no one had really done this for Russia since Soviet scholars and archivists had (with very different motivations) published annotated volumes of secret Russian diplomatic correspondence back in the 1920s. For me, this was a door wide open, and I walked right in. Stalin’s War is in many ways a sequel to Russia’s Aims in the First World War (my own title!), written in a similar spirit, albeit much longer and in some ways more ambitious.

With the Russian Revolution, it was probably still harder to say anything really new, particularly after the popular histories of Richard Pipes and Orlando Figes (and a huge new literature written partly in response to them) came out in the 1990s. And I do not think my “take” was quite as revisionist or controversial as those on WWI or WW2. What I did try to do, in order to add something new to the story, was to combine my own research in a number of areas (Russian army morale reports before and after Order No. 1, depositions taken after the July Days, police reports from 1917, Bolshevik finances and expropriation policies, etc.) with new work done by others since 1991 on, especially, Russia’s military performance in WWI (a topic almost completely ignored in Cold War era literature on the Revolution, both Soviet and western), to reinterpret both the February and October Revolutions. In full disclosure, I would have preferred to write an ambitious history on just 1917, where I had the most original material and new points to make, but my publisher wanted a one-volume “comprehensive” history of the Revolution, so that is what I wrote. Like most historians and writers, I like to think that I write entirely from inspiration with a free hand, but of course there are all kinds of factors that play into our work.

Getting back to your question – while I have certainly done original research for all of these books, I am hardly the only historian to take advantage of Russian archives opened after the collapse of the USSR in 1991 – including, I should add, all the incredible archival material compiled by Russian researchers in the 1990s and 2000s into huge published volumes of Soviet-era documents. I think it is my mindset that differentiates me from other scholars who have taken similar advantage of this opportunity. Simon Sebag Montefiore, for example, uncovered incredibly rich veins of new material for Stalin: The Court of the Red Tsar, as Antony Beevor did for Stalingrad, both of which books made an enormous splash. They’re not exactly “revisionists”, though. Rather, these historians retell stories already partly familiar, but with reams of fascinating new details that greatly enrich the story. I think this is a wonderful way to write history, and thousands of readers evidently agree. It is just not what I do.”

Big Serge: “I’m glad you brought up The Russian Origins of the First World War. This was the first of your books that I read, and I found it interesting for a counterintuitive reason, in that its arguments seem like they should be obvious and not particularly controversial. The essence of the book is that the Tsarist state had agency and tried to use the First World War to achieve important strategic objectives. That should be obvious, after all this was an immensely powerful state with a long pedigree of muscular foreign policy, but people are very accustomed to the Guns of August sort of narrative where all the agency and initiative is with Germany, and everyone else is reduced to the role of objects in a story where Germany is the sole subject.

It makes me think somewhat of a quip that Dr. Stephen Kotkin has used in interviews about his Stalin biographies, when he says that the “big secret” of the Soviet archives was that the communists really were communist. His point is that, even in a very convoluted and secretive regime, sometimes what you see really is what you get. I think you made a similar sort of point with Russian Origins. If I could paraphrase you, the big reveal is that the big, powerful Tsarist Empire was behaving like a big powerful empire, in that it had cogent war aims and it consistently sought to work towards those – so consistently in fact that the war aims were initially largely unchanged after the fall of the monarchy in 1917. You’re saying something very similar with Stalin’s War: the shocking secret here is that a powerful, expansionist, heavily militarized Soviet regime acted like it and worked aggressively to pursue its own peculiar interests.

How do you conceptualize this? It strikes me as a little bit odd, because, as you say, there is sometimes a bit of a stigma round the label “revisionist”, but your books generally present schemas that are fairly intuitive: Tsarist Russia was a big, powerful empire that pursued big imperial aims; Stalin was the protagonist of his own story and exercised a muscular, self-interested foreign policy; the Bolsheviks used extraordinary violence to conquer an anarchic environment. Are you surprised that people are surprised at these things?”

Dr. McMeekin: “I wish I was surprised, and perhaps at first I was, but I suppose that, over the years, I have become inured to the shocked! Shocked! reactions I receive when I point out fairly obvious things. Historians, like most groups, tend to be pack animals, who like to run in safe herds. When it comes to a familiar subject such as the outbreak of World War I, the literature tends to groove around well-trodden themes and questions. Certainly it has done since Fischerites took over the field: it’s Germany all the time, with perhaps a nod to Austria-Hungary in the Serbian backstory, or Britain with the naval race. France and Russia had almost disappeared from the story, as if one of the two major continental alliance blocs was irrelevant. I was heartened that my own treatment of Russia’s role in the outbreak of the war and Russia’s war aims garnered attention and shaped the conversation, both in itself and through Christopher Clark’s bestseller Sleepwalkers (which draws on Russian Origins). By contrast, Stefan Schmidt’s pathbreaking 2009 study of the French role in the outbreak of the war (Frankreichs Aussenpolitik in der Julikrise 1914), which Clark and I draw on heavily, has still not been translated into English, making barely a ripple in the profession. Clark and I have poked around with English-language publishers, trying to gin up interest in a translation, but so far without luck.

With the Second World War, I suppose the “shock” value is still greater, and perhaps therefore even less surprising. In Germany, after all, there are laws on the books making it illegal to “trivialize” the Holocaust, for example by foregrounding Soviet war crimes on the eastern front, and of course whole areas of the war such as the Molotov-Ribbentrop Pact, Soviet war plans in 1941, and even Lend-Lease are highly sensitive in Russia, though I’ll note that there has been a curious exception for the “full-on” revisionism of Rezun-Suvorov (Icebreaker, etc.) – perhaps because his thesis is so extreme as to be easily caricatured, or maybe just because his books sell so well, it has never been difficult to find them in Russian bookstores. In a way, I also think the popularity of Suvorov’s books in Russia relates to the way they do take the Soviet Union seriously as a great power, as I do, of course – whether or not one agrees with his thesis, and I’m sure many of his Russian readers do not, it is less condescending than western histories that treat the Soviets as passive victims of fate in the Barbarossa story before Stalin woke them up.

I was perhaps more surprised at the visceral reaction to Stalin’s War in Britain, particularly my discussion of Operation Pike (i.e., British plans to bomb Soviet oil installations in Baku in 1940), which sent certain reviewers into paroxysms of rage I found absolutely bewildering. If anything, I should have thought my sharply critical treatment of Hopkins and Roosevelt would have offended Americans far more gravely than my slightly more sympathetic portrayal of Britain’s wartime statesmen, but it was quite the opposite. Certainly some American Roosevelt admirers were annoyed, but this was nothing like British reviewers’ hysteria over Operation Pike. Curiously enough I had dinner not long ago with one of these reviewers, and he brought up Stalin’s War. He was very civil, full of British charm, but he still wanted desperately to know why I had argued that Britain “should have gone to war against the Soviet Union instead of Nazi Germany”. As always when I am accused of this – another reviewer stated this point-blank in the TLS – I simply asked him if he could locate a passage in the book where I had stated any such thing. The entire subject of World War II has become so encrusted with emotion and taboos that I think it clouds people’s vision. They see ghosts.”